Welcome

Everyone wants to be an astronaut. Very few want to be the rocket scientist.

Machine learning is no different. Everyone wants to train models, run inference, deploy AI. Few want to understand how the frameworks actually work. Fewer still want to build one.

The world has plenty of users. It does not have enough builders—people who can debug, optimize, and adapt systems when the black box breaks down.

TinyTorch is for the builders.

The Problem

Most people can use PyTorch or TensorFlow. They can import libraries, call functions, train models. But very few understand how these frameworks work: how memory is managed for tensors, how autograd builds computation graphs, how optimizers update parameters. And almost no one has a guided, structured way to learn that from the ground up.

Why does this matter? Because users hit walls that builders do not:

- When your model runs out of memory, you need to understand tensor allocation

- When gradients explode, you need to understand the computation graph

- When training is slow, you need to understand where the bottlenecks are

- When deploying on a microcontroller, you need to know what can be stripped away

The framework becomes a black box you cannot debug, optimize, or adapt. You are stuck waiting for someone else to solve your problem.

Students cannot learn this from production code. PyTorch is too large, too complex, too optimized. Fifty thousand lines of C++ across hundreds of files. No one learns to build rockets by studying the Saturn V.

They also cannot learn it from toy scripts. A hundred-line neural network does not reveal the architecture of a framework. It hides it.

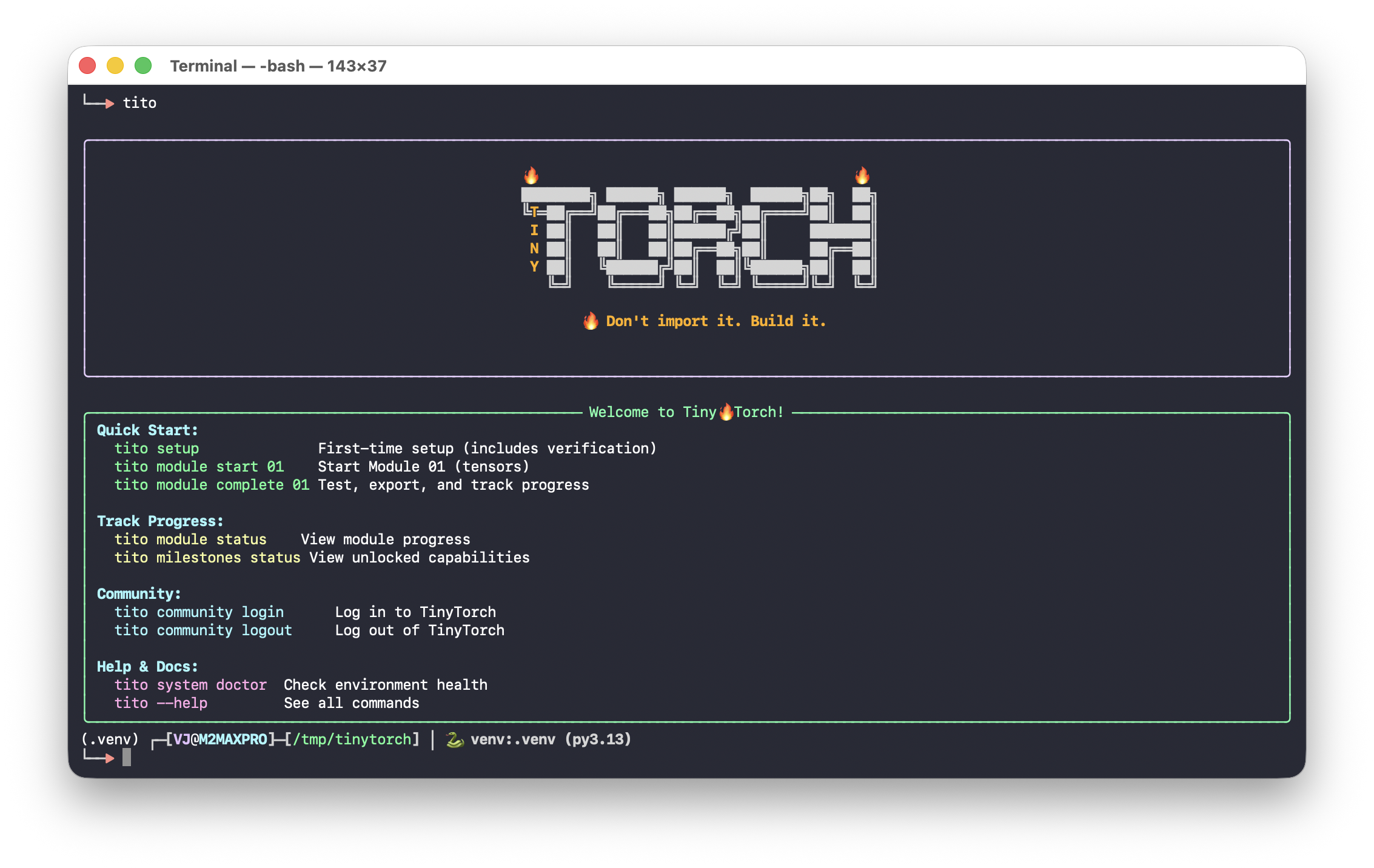

The Solution: AI Bricks

TinyTorch teaches you the AI bricks—the stable engineering foundations you can use to build any AI system. Small enough to learn from: bite-sized code that runs even on a Raspberry Pi. Big enough to matter: showing the real architecture of how frameworks are built.

📖 MLSysBook

The Machine Learning Systems textbook teaches you the concepts of the rocket ship: propulsion, guidance, life support.

TinyTorch

TinyTorch is where you actually build a small rocket with your own hands. Not a toy—a real framework.

This is how you move from using machine learning to engineering it—from running code in a notebook to designing the systems that run underneath.

Who This Is For

Students & Researchers

Want to understand ML systems deeply, not just use them superficially. If you’ve wondered “how does that actually work?”, this is for you.

ML Engineers

Need to debug, optimize, and deploy models in production. Understanding the systems underneath makes you more effective.

Systems Programmers

You understand memory hierarchies, computational complexity, performance optimization. You want to apply it to ML.

Self-taught Engineers

Can use frameworks but want to know how they work. Preparing for ML infrastructure roles and need systems-level understanding.

What you need is not another API tutorial. You need to build.

What You Will Build

By the end of TinyTorch, you will have implemented:

- A tensor library with broadcasting, reshaping, and matrix operations

- Activation functions with numerical stability considerations

- Neural network layers: linear, convolutional, normalization

- An autograd engine that builds computation graphs and computes gradients

- Optimizers that update parameters using those gradients

- Data loaders that handle batching, shuffling, and preprocessing

- A complete training loop that ties everything together

- Tokenizers, embeddings, attention, and transformer architectures

- Profiling, quantization, and optimization techniques

Not a simulation. The actual architecture of modern ML frameworks, implemented at a scale you can hold in your head.

How to Learn

Each module follows a Build-Use-Reflect cycle: implement from scratch, apply to real problems, then connect what you built to production systems and understand the tradeoffs. Work through Foundation first, then choose your path based on your interests.

Type every line yourself

Do not copy-paste. The learning happens in the struggle of implementation.

Profile your code

Use built-in profiling tools. Measure first, optimize second.

Run the tests

Every module ships with tests. When they pass, you have built something real.

Compare with PyTorch

Once your implementation works, compare with PyTorch’s equivalent.

Take your time. The goal is not to finish fast. The goal is to understand deeply.

“Building systems creates irreversible understanding.”

The Bigger Picture

TinyTorch is one half of a two-book sequence. The Machine Learning Systems textbook teaches the concepts: how training works, why GPUs matter, what makes inference cheap or expensive. TinyTorch makes you build it. Together, they form a complete path into ML systems engineering.

This approach follows a long tradition in systems education: SICP’s “build to understand” philosophy, xv6’s transparent operating system, Nachos, Pintos. The pedagogical principles behind TinyTorch are detailed in our research paper, which positions this work within decades of CS education research.

The next generation of engineers cannot rely on magic. They need to see how everything fits together, from a single tensor allocation up to a full training loop, and feel that the systems running modern AI are not an unreachable tower but something they can open, shape, and rebuild.

That is what TinyTorch offers: the confidence that comes from having built it yourself.

Prof. Vijay Janapa Reddi

(Harvard University)

2025

What’s Next?

See the Big Picture → — How all 20 modules connect, what you’ll build, and which path to take.