From Logic to Arithmetic

Neural Computation

Purpose

Why does understanding a neural network’s math matter more than reading its code?

Neural networks reduce to a small set of mathematical operations. Matrix multiplications dominate compute. Activation functions introduce nonlinearity. Gradient computations enable learning. These operations are the workload that every layer of the system stack must execute, and each carries concrete physical consequences: a matrix multiplication’s dimensions determine whether a layer is compute bound or memory bound; an activation function’s complexity determines whether it can be fused with adjacent kernels; the number of parameters determines whether a model fits in accelerator memory at all. When something goes wrong, inspecting the code reveals nothing because it simply says “multiply these matrices.” The bug is not in the logic but in the math itself: a misconfigured learning rate that causes gradients to explode, an activation that saturates and silently blocks learning, a memory footprint that fits during development but exhausts the accelerator in production. This is why the mathematical primitives come first, before architectures, frameworks, or training systems. Every subsequent chapter builds on these operations: architectures compose them into computational graphs, frameworks schedule them onto hardware, training systems orchestrate billions of repetitions, and compression techniques approximate them to fit tighter constraints. An engineer who understands the primitives can look at any new architecture and immediately reason about its compute profile, its memory demands, and its hardware compatibility, because they understand the atoms it is made of.

Learning Objectives

- Explain how limitations of rule-based and classical ML systems necessitated deep learning approaches

- Describe neural network components and compare activation functions (sigmoid, tanh, ReLU, softmax) for their mathematical and hardware implications

- Explain how cross-entropy loss quantifies prediction error and drives gradient-based weight updates

- Contrast training and inference phases in terms of computational demands and deployment considerations

- Explain forward and backward propagation through multi-layer networks as matrix operations and gradient computation for weight updates

- Analyze how neural network operations determine hardware memory and processing requirements

- Trace the end-to-end neural network pipeline from preprocessing through inference, using USPS deployment as a concrete example

A model that runs correctly on one GPU and crashes on another is not suffering from a hardware bug. The matrix dimensions in its attention layer exceed the memory available for intermediate activations, and the crash is a direct consequence of the mathematics inside the model, not the code around it. The ML workflow (ML Workflow) defined how projects progress from problem definition through deployment, and data engineering (Data Engineering) covered how to prepare the raw material that models consume. The question remaining is what happens inside the model itself.

The silicon contract (Iron Law of ML Systems) established that every model architecture makes a computational bargain with the hardware it runs on. The architecture’s mathematical operators set the terms of that bargain: they determine how much memory the model consumes, how long each computation takes, and how much energy the system expends. To honor the contract, a systems engineer must understand those operators.

The operators that follow are not abstract theory but a specification for computational workloads. Neural computation represents a qualitative shift in how we process information: instead of executing a sequence of explicit logical instructions (if-then-else), we execute a massive sequence of continuous mathematical transformations (multiply-add-accumulate). This shift from Logic to Arithmetic changes everything for the systems engineer, creating compute-bound workloads. The “bug” in such a system is rarely a syntax error; it is a numerical instability, a vanishing gradient, or a saturated activation function. Concretely, recognizing a single handwritten digit in the MNIST network we use throughout this chapter requires 109,184 multiply-accumulate (MAC) operations—not one of which is a logical branch.

Definition 1.1: Deep learning

Deep Learning is the computational paradigm of Hierarchical Feature Learning from raw data.

- Significance (quantitative): By stacking nonlinear transformations, it replaces manual Feature Engineering with Architecture Engineering, enabling models to scale with both Data Volume \((D_{\text{vol}})\) and Compute \((R_{\text{peak}})\).

- Distinction (durable): Unlike Shallow Learning, which learns a single transformation, Deep Learning learns a Hierarchy of Abstractions that can be fine-tuned for different tasks.

- Common pitfall: A frequent misconception is that Deep Learning is “just a big neural network.” In reality, it is a Systems Strategy: it uses the iron law to trade computation (\(O\)) for the ability to generalize from high-dimensional inputs.

The landmark Nature review by LeCun, Bengio, and Hinton1 (LeCun et al. 2015) formalized this paradigm.

1 LeCun, Bengio, and Hinton: Recipients of the 2018 ACM Turing Award, their individual contributions (convolutional networks from LeCun, probabilistic sequence models from Bengio, and backpropagation training from Hinton) directly shaped the three operations that dominate modern accelerator workloads: spatial convolution, sequential attention, and gradient computation.

Classical machine learning required human experts to design feature extractors for each new problem, a labor-intensive process that encoded domain knowledge into handcrafted representations. Deep learning eliminates this bottleneck by learning representations directly from raw data through hierarchical layers of nonlinear transformations. To see where neural networks fit in the broader landscape, examine the concentric layers in Figure 1: neural networks sit at the core of deep learning, which is itself a subset of machine learning, which falls under the umbrella of artificial intelligence.

This paradigm shift creates an engineering problem with no precedent in traditional software. When conventional software fails, an error message points to a line of code. When deep learning fails, the symptoms are subtler: gradient instabilities2 that silently prevent learning, numerical precision errors that corrupt model weights over thousands of iterations, or memory access patterns in tensor operations3 that leave GPUs idle for most of each training step. These are not algorithmic bugs that a debugger can catch. They are systems problems that require understanding the mathematical machinery underneath.

2 Gradient Instabilities: In a 20-layer sigmoid network, gradient magnitude after backpropagation is approximately \(0.25^{20}\), or 9.1 × 10⁻¹³—effectively zero, making learning a mathematical impossibility without architectural intervention. These failures are invisible in standard logs (loss simply plateaus or becomes not a number (NaN)), making them among the hardest bugs to diagnose. Rectified linear unit (ReLU) activations (gradient of one for positive inputs) and residual connections (direct gradient highways that bypass layers) were the two architectural breakthroughs that made deep networks tractable; Skip connections: Solving the depth problem treats the residual-connection depth solution in detail.

3 Tensor Operations: The logical structure of a tensor (for example, a 4D image batch) often requires nonsequential memory access patterns to retrieve elements from its flat, 1D physical storage. A concrete example: PyTorch defaults to NCHW (channel-first) layout while most mobile hardware and ARM processors prefer NHWC (channel-last). A 224×224×3 ImageNet tensor is about 151 KB, so transposing it between formats requires about 301 KB of read-plus-write memory traffic per image—a pure memory operation with no arithmetic benefit. On tight latency budgets, this layout mismatch can consume a visible fraction of the end-to-end inference budget before the model does any useful math.

Diagnosing and solving such problems requires mathematical literacy that spans the full neural computation stack. The arc begins with learning paradigms, tracing how they evolved from explicit rules to handcrafted features to learned representations and establishing why deep learning demands qualitatively different system infrastructure than classical machine learning. Neural network fundamentals (neurons, layers, activation functions, and tensor operations) then receive treatment as both mathematical operations and computational workloads, with particular attention to the memory access patterns and arithmetic intensity that determine hardware utilization.

The learning process then takes center stage: the forward pass that produces predictions, the backpropagation algorithm that computes gradients, the loss functions that define optimization objectives, and the optimization algorithms that navigate loss landscapes. Each connects directly to system engineering decisions: matrix multiplication illuminates memory bandwidth requirements (the memory wall explored in Hardware Acceleration), gradient computation explains numerical precision constraints, and optimization dynamics inform resource allocation. The inference pipeline shifts the engineering concerns from throughput to latency and from training stability to deployment efficiency. A historical case study (USPS digit recognition) grounds these concepts in a real deployment, and the D·A·M taxonomy (Data, Algorithm, Machine) closes the arc by explaining why deep learning systems succeed only when all three components align.

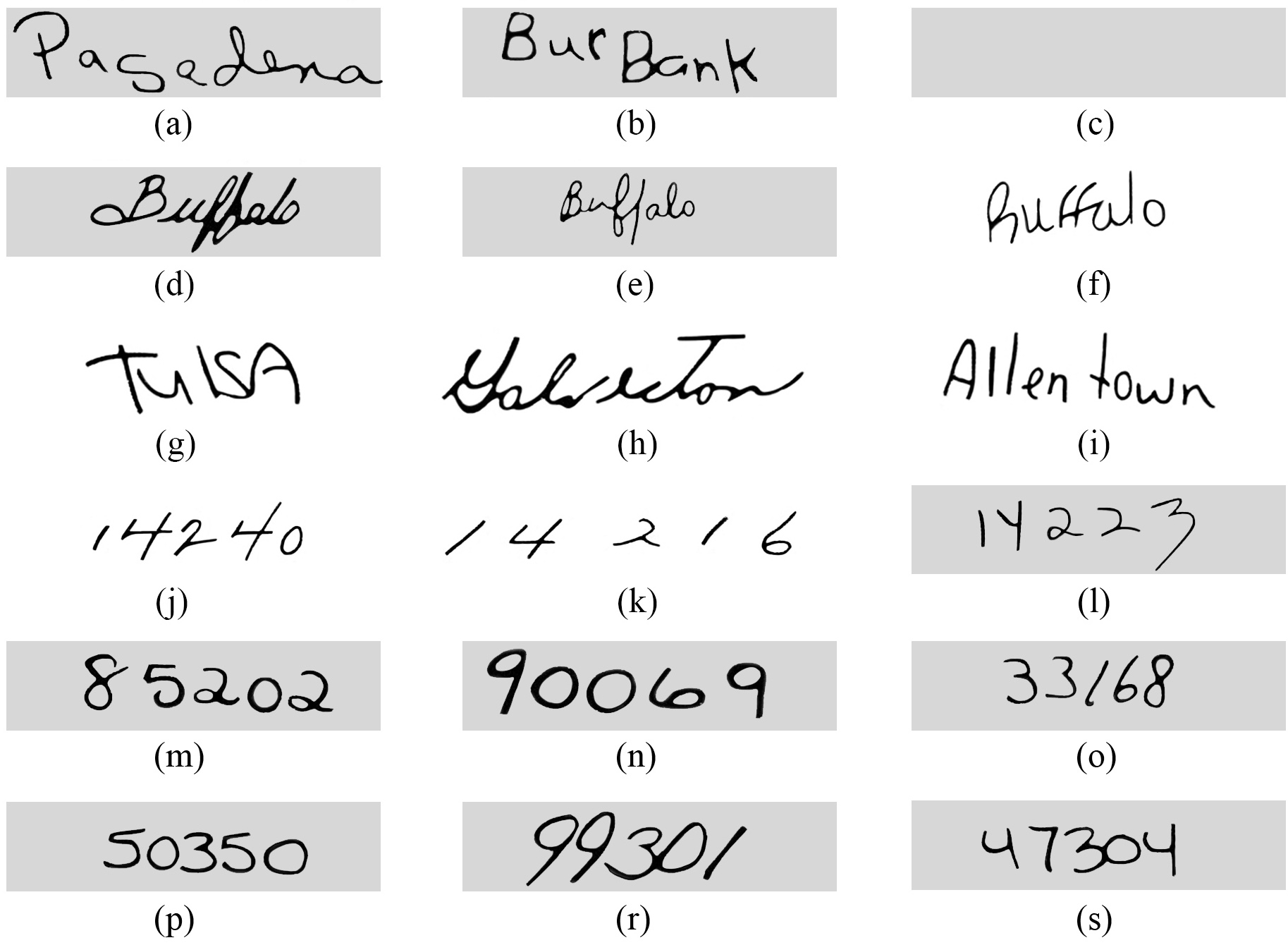

To ground this arc in a concrete systems story, we start by following a single MNIST digit through three computational paradigms and quantify how each step changes the workload profile.

Computing with Patterns

The shift from logic to arithmetic reshapes how we encode real-world patterns in a form a computer can process. To make this evolution concrete, we track a single task across all three paradigms: classifying a handwritten digit from a \(28{\times}28\) pixel image from the MNIST dataset (the same input used throughout this chapter). Watch how the computational profile changes as representation strategies evolve.

From explicit logic to learned patterns

Traditional programming requires developers to explicitly define rules that tell computers how to process inputs and produce outputs. Consider a simple game like Breakout4. The program needs explicit rules for every interaction: when the ball hits a brick, the code must specify that the brick should be removed and the ball’s direction should be reversed (Figure 2). While this approach works effectively for games with clear physics and limited states, it hits a wall when dealing with the messy, unstructured data of the real world.

4 Breakout (DQN): Atari’s 1976 arcade game became an AI milestone when DeepMind’s DQN learned to play it from raw pixels alone (2015), requiring no programmed rules. The systems implication: DQN preprocessed each Atari frame to \(84{\times}84\) pixels and stacked 4 frames as input, selecting a new action every 4 frames (about 15 decisions per second on a 60 Hz game). This real-time inference loop pushed GPU utilization beyond what supervised learning required and foreshadowed the latency constraints of production inference pipelines.

Beyond individual applications, this rule-based paradigm extends to all traditional programming. Notice the data flow in Figure 3: the program takes both rules for processing and input data to produce outputs. Early artificial intelligence research explored whether this approach could scale to solve complex problems by encoding sufficient rules to capture intelligent behavior.

Despite their apparent simplicity, rule-based limitations surface quickly with complex real-world tasks. Recognizing human activities illustrates the challenge. Classifying movement below 6 km/h as walking seems straightforward until real-world complexity intrudes. Speed variations, transitions between activities, and boundary cases each demand additional rules, creating unwieldy decision trees (Figure 4). Computer vision tasks compound these difficulties. Detecting cats requires rules about ears, whiskers, and body shapes while accounting for viewing angles, lighting, occlusions, and natural variations. Early systems achieved success only in controlled environments with well-defined constraints.

Recognizing these limitations, the knowledge engineering approach that characterized AI research in the 1970s and 1980s attempted to systematize rule creation. Expert systems5 encoded domain knowledge as explicit rules, showing promise in specific domains with well-defined parameters but struggling with tasks humans perform naturally: object recognition, speech understanding, and natural language interpretation. These failures highlighted a deeper challenge: many aspects of intelligent behavior rely on implicit knowledge that resists explicit rule-based representation.

5 Expert Systems: These systems convert human expertise into explicit IF-THEN rules. This ‘knowledge engineering’ approach fails for tasks like object recognition, as the text notes, because the required knowledge is implicit and resists articulation. Even in a successful system (DEC’s XCON), the maintenance of 10,000+ hand-authored rules revealed an unsustainable scaling cost that motivated the shift to learned representations.

Consider classifying our \(28{\times}28\) digit with explicit rules: compare pixel intensities against thresholds, check stroke patterns in specific regions, branch on the results. The entire computation is roughly 100 comparisons over 784 bytes of pixel data—sequential, predictable, and comfortably within any CPU’s L1 cache. No special hardware needed. That simplicity is exactly what disappears as we move toward learned representations.

The feature engineering bottleneck

The failures of rule-based systems suggested an alternative: rather than encoding human knowledge as explicit rules, let the system discover patterns from data. Machine learning offered this direction—instead of writing rules for every situation, researchers wrote programs that identified patterns in examples. The success of these methods, however, still depended heavily on human insight to define which patterns to look for, a process known as feature engineering.

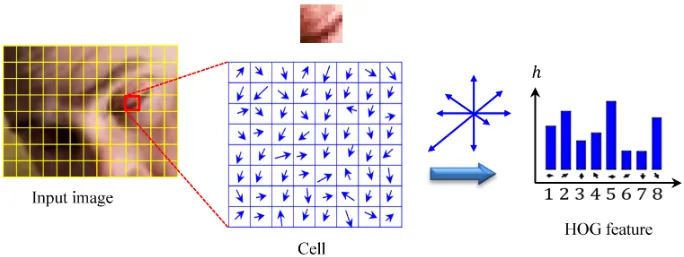

Feature engineering transformed raw data into representations that expose patterns to learning algorithms. The Histogram of Oriented Gradients (HOG)6 (Dalal and Triggs 2005) method exemplifies this approach, identifying edges where brightness changes sharply, dividing images into cells, and measuring edge orientations within each cell (Figure 5). This transforms raw pixels into shape descriptors robust to lighting variations and small positional changes.

6 Histogram of Oriented Gradients (HOG): The gold standard for object detection before deep learning (Dalal and Triggs 2005). HOG computes gradient orientations in fixed \(8{\times}8\) pixel cells, a rigid spatial decomposition that requires expert tuning per domain. The systems contrast with deep learning is instructive: HOG’s fixed computation graph runs efficiently on CPUs with predictable latency, while learned features demand GPU parallelism but generalize across domains without redesign.

Complementary methods like SIFT7 (Lowe 1999) (Scale-Invariant Feature Transform) and Gabor filters8 captured different visual patterns. SIFT detected keypoints stable across scale and orientation changes, while Gabor filters identified textures and frequencies. Each encoded domain expertise about visual pattern recognition.

7 Scale-Invariant Feature Transform (SIFT): SIFT encodes this “domain expertise” in a rigid, four-stage algorithm that identifies keypoints invariant to scale and rotation. This hand-engineering meant the number of keypoints varied unpredictably per image, from hundreds to thousands. This variable-sized output is mechanically incompatible with the fixed-size tensors that modern hardware accelerators demand.

8 Gabor Filters: Originally developed for localized time-frequency analysis (Gabor 1946), these filters detect edges and textures at specific orientations and frequencies. A typical bank contains 40+ filters (8 orientations \(\times\) 5 frequencies), all hand-designed. Deep learning’s first convolutional layers learn filters that closely resemble Gabor functions, but discover them automatically from data, replacing months of expert tuning with GPU-hours of training.

These engineering efforts enabled advances in computer vision during the 2000s. Systems could now recognize objects with some robustness to real-world variations, leading to applications in face detection, pedestrian detection, and object recognition. Despite these successes, the approach had limitations. Experts needed to carefully design feature extractors for each new problem, and the resulting features might miss important patterns that were not anticipated in their design. The bottleneck remained: human expertise could not scale to the complexity and diversity of real-world visual patterns.

Return to the same \(28{\times}28\) digit. HOG divides the image into a 7 by 7 grid of \(4{\times}4\) cells, computes gradient magnitudes and orientations at each pixel, bins them into 9 orientation histograms per cell, and produces a 441-element feature vector. A linear classifier (SVM) then performs ten dot products over that vector. Total: roughly 8,000 arithmetic operations and ~2 KB of working memory—about 80\(\times\) more compute than the rule-based approach, but still structured, predictable, and well-served by CPU vector units using single instruction, multiple data (SIMD). Resource demands scale linearly with image count, not with model complexity.

Automatic pattern discovery

The limitations of handcrafted features motivate a more radical approach: rather than encoding features by hand, the system discovers its own. Neural networks embody exactly this shift—rather than following explicit rules or relying on human-designed feature extractors, the system learns representations directly from raw data.

Deep learning inverts the traditional programming relationship entirely. Traditional programming, as we saw earlier, required both rules and data as inputs to produce answers. Machine learning reverses this: we provide examples (data) and their correct answers, and the system discovers the underlying rules automatically. Figure 6 makes this inversion tangible—notice how data and answers now serve as the inputs, while rules emerge as the output. This shift eliminates the need for humans to specify what patterns are important.

The system discovers patterns from examples through this automated process. When shown millions of images of cats, it learns to identify increasingly complex visual patterns, from simple edges to combinations that constitute cat-like features. This parallels how biological visual systems operate, building understanding from basic visual elements to complex objects.

The gradual layering of patterns reveals why neural network depth matters. Deeper networks can express exponentially more functions with only polynomially more parameters, a compositionality advantage we formalize in Section 1.2.1 with a concrete MNIST example.

Deep learning exhibits predictable scaling: unlike traditional approaches where performance plateaus, these models continue improving with additional data (recognizing more variations) and computation (discovering subtler patterns). The scalability drove dramatic performance gains. In the ImageNet competition, traditional methods achieved approximately 25.8 percent top-5 error in 2011. AlexNet9 reduced this to 15.3 percent in 2012. By 2015, ResNet achieved 3.6 percent top-5 error, surpassing estimated human performance of approximately 5.1 percent.

9 AlexNet’s Two-GPU Split: Krizhevsky’s team split AlexNet across two NVIDIA GTX 580s not by architectural preference but by physical constraint—each card had only 3 GB of VRAM, and the full model required more memory than a single card could provide. This forced the first production instance of model parallelism: half the feature maps on each GPU, with cross-GPU communication only at specific layers. The workaround that felt like a hack in 2012 became the template for model parallelism at scale, and every modern pipeline-parallel strategy traces its lineage to this 3 GB ceiling.

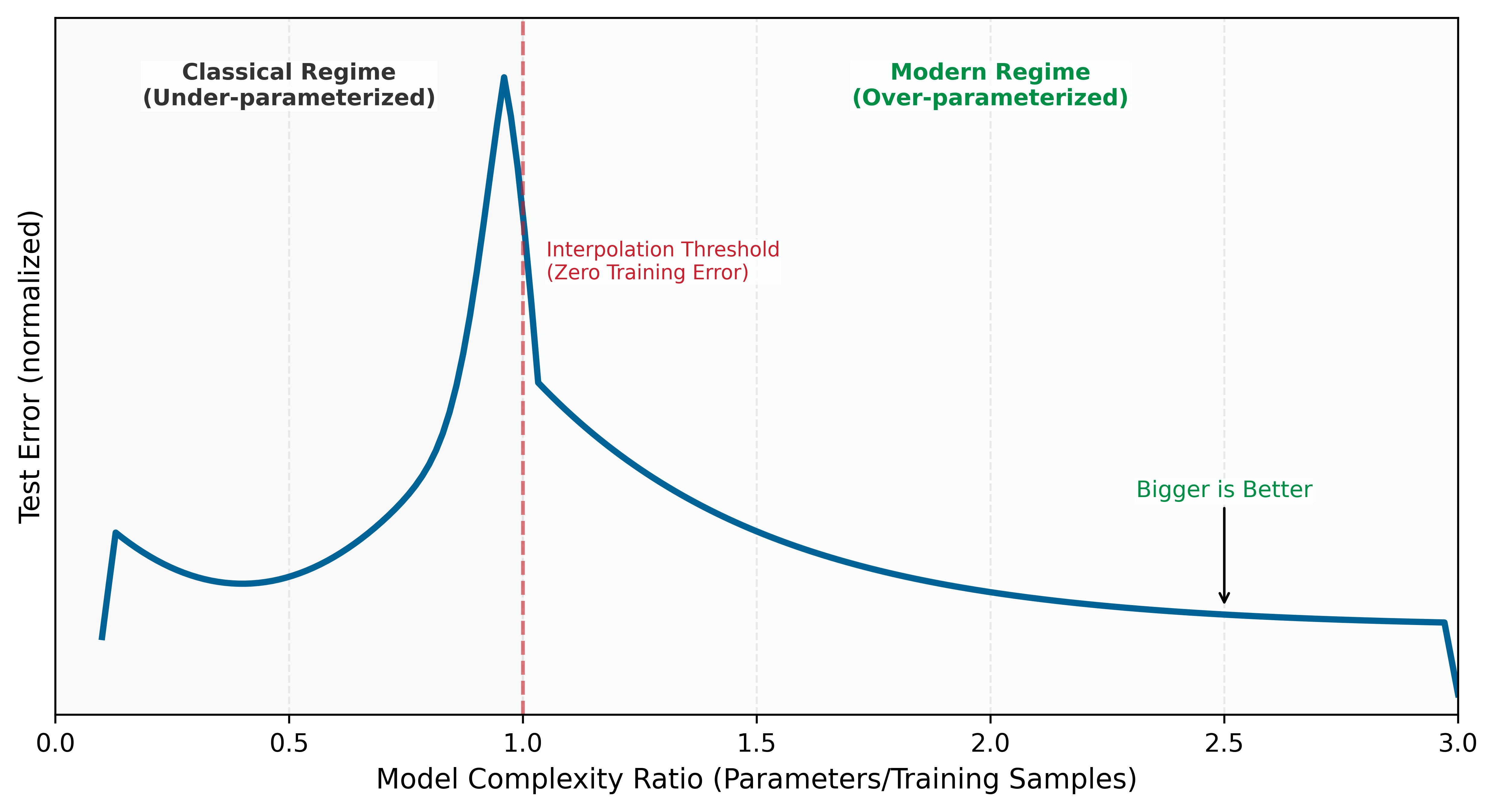

Figure 7 previews this scaling behavior through three distinct regimes. The underlying mechanisms (training error, overfitting, gradient-based learning) are developed in subsequent sections; here we establish the shape of the phenomenon. The Classical Regime is where traditional statistical intuitions hold, the Interpolation Threshold is where the model perfectly fits training data, and the Modern Regime is where massive overparameterization paradoxically improves generalization. The axes are normalized to emphasize shape rather than a specific dataset.

Notice the counterintuitive shape: test error initially follows the expected U-curve, but then decreases again in the overparameterized regime. This scaling behavior resolves the central paradox of deep learning. Classical statistical theory predicted that models should be sized to match data complexity: too small and they underfit, too large and they overfit by memorizing noise. This Bias-Variance Trade-off10 suggested that massive models would inevitably fail on new data. Instead, we observe a ‘Double Descent’ (Belkin et al. 2019) where larger models, trained on sufficient data, find smoother solutions that generalize better than smaller ones. The insight is that bigger is better when properly regularized, and it drives the race for 100B+ parameter foundation models.

10 Bias-Variance Trade-off: In overparameterized networks (parameter count >> training samples), the classical bias-variance trade-off breaks down: test error decreases again after the interpolation threshold, the Double Descent phenomenon. The systems consequence is that larger models trained longer are often more stable than smaller models stopped early, inverting the conventional wisdom that regularization is always the right response to overfitting. This insight drives the engineering decision to scale model size rather than constrain it—bigger networks with more compute often generalize better, not worse.

Neural network performance often follows empirical scaling relationships that impact system design. One durable scale anchor is that frontier model sizes and training compute budgets have increased by multiple orders of magnitude over the past decade. In broad terms, modern AI systems frequently trade off model size, data, and compute budgets rather than relying on a single “train longer” axis. Memory bandwidth and storage capacity can become primary constraints rather than raw computational power, depending on the workload and platform. The detailed formulations and quantitative analysis of scaling behavior are covered in Model Training, while Model Compression explores practical implementation.

Learning directly from raw data reshapes AI system construction. Eliminating manual feature engineering introduces new demands: infrastructure to handle massive datasets, high-throughput hardware to process that data, and specialized accelerators to perform mathematical calculations efficiently. These computational requirements have driven the development of specialized chips optimized for neural network operations. Empirical evidence confirms this pattern across domains: the success of deep learning in computer vision, speech recognition, game playing, and natural language understanding has established it as the dominant paradigm in artificial intelligence.

Return to the same \(28{\times}28\) digit, now processed by even a modest three-layer neural network (784 → 128 → 64 → 10). The forward pass alone requires 109,184 MAC operations, 1,092\(\times\) more than the rule-based approach. The 109,386 learned parameters consume ~438 KB in FP32, exceeding most L1 caches and forcing memory traffic between cache levels on every inference. Training multiplies the cost further: each image must be processed forward, then backward (computing gradients for all 109,386 parameters), then updated, at roughly 3\(\times\) the forward cost per image, repeated over 60,000 images for multiple epochs. The computation is no longer sequential; it is dominated by dense matrix multiplications that leave a standard CPU mostly idle. This is the systems explosion that drives everything that follows.

The scaling advantage comes with computational costs that raise a practical question about when engineers should invest in neural networks vs. simpler alternatives.

Systems Perspective 1.1: When to use neural networks

| Condition | Threshold | Rationale |

|---|---|---|

| Dataset size | > 10,000 labeled examples | Below this, simpler models often match or exceed NN performance |

| Input dimensionality | > 100 raw features | NNs excel at automatic feature learning from high-dimensional data |

| Data has structure | Spatial, sequential, or hierarchical patterns | Architecture can encode inductive bias |

| Accuracy requirement | Need clear improvement over baseline | Late-stage gains often require disproportionate extra compute |

| Problem complexity | Nonlinear relationships dominate | Linear models handle linear relationships more efficiently |

Simpler methods are the better choice under the opposite regime, summarized in Table 2:

| Condition | Better Alternative | Typical Outcome |

|---|---|---|

| < 1,000 samples | Logistic regression, Random Forest | 10 ms training vs. hours; similar accuracy |

| Tabular data, < 100 features | Gradient Boosting (XGBoost, LightGBM) | Often matches NN accuracy with 100\(\times\) less compute |

| Linear relationships | Linear/Ridge regression | Interpretable, fast, often better generalization |

| Real-time constraint < 0.1 ms | Rule-based system | Deterministic latency, no model loading overhead |

| Explainability required | Decision trees, linear models | Regulatory compliance, debugging clarity |

Systems insight: Before building a neural network, train a logistic regression or gradient boosting model in < 1 hour. If it achieves > 90 percent of the target accuracy, the neural network’s additional complexity may not be justified. The USPS system (Section 1.5) succeeded partly because the problem genuinely required hierarchical feature learning that simpler methods could not provide.

Computational infrastructure requirements

The MNIST running example traced a single digit from ~100 comparisons (rule-based) through ~8,000 structured operations (HOG) to 109,184 matrix MACs (neural network): a 1,092\(\times\) escalation in computation, with a corresponding shift from predictable sequential access to bandwidth-hungry parallel matrix operations. Table 3 generalizes this pattern across every systems dimension.

| System Aspect | Traditional Programming | ML with Features | Deep Learning |

|---|---|---|---|

| Computation | Sequential, predictable paths | Structured parallel ops | Massive matrix parallelism |

| Memory Access | Small, predictable patterns | Medium, batch-oriented | Large, complex hierarchical |

| Data Movement | Simple input/output flows | Structured batch processing | Intensive cross-system movement |

| Hardware Needs | CPU-centric | CPU with vector units | Specialized accelerators |

| Resource Scaling | Fixed requirements | Linear with data size | Exponential with complexity |

The computational paradigm shift becomes apparent when comparing these approaches. Traditional programs follow sequential logic flows; deep learning requires massive parallel operations on matrices. This difference explains why conventional CPUs, designed for sequential processing, perform poorly for neural network computations.

The shift toward parallelism creates new bottlenecks that differ qualitatively from those in sequential computing. The central challenge is the Memory Wall11: while computational capacity can be increased by adding more processing units, memory bandwidth to feed those units does not scale as favorably. Matrix multiplication, the core neural network operation, is often limited by memory bandwidth rather than raw computational capability12—adding more processing units does not proportionally improve performance. Hardware responses to this challenge are examined in Understanding the AI memory wall, the complete derivation of training memory costs (weights, gradients, optimizer state, activations) appears in The true cost of training memory, and the formal memory hierarchy with quantitative latency comparisons is in The memory hierarchy.

11 Memory Wall: Fast on-chip storage responds in nanoseconds, while larger off-chip memory is orders of magnitude farther away in latency and energy. Neural network weights rarely fit in the smallest caches (even our MNIST model is hundreds of KB in FP32), forcing repeated memory fetches that can leave compute units idle. This bandwidth bottleneck, not arithmetic capacity alone, is why accelerators invest die area in HBM and on-chip SRAM.

12 Memory-Bound Operations: Matrix multiplication’s arithmetic intensity (FLOPs per byte loaded) determines whether a layer is compute bound or memory bound. Layers that fall below the hardware’s roofline crossover point finish their arithmetic before the next tile of weights arrives from memory. The result: effective hardware utilization can drop sharply, and adding more compute units yields little speedup until memory bandwidth or data reuse improves.

The deeper constraint is energy, not speed. Moving data from main memory to processing units consumes more energy than the actual mathematical operations. This energy hierarchy explains why neural network accelerators focus on maximizing data reuse: keeping frequently accessed weights in fast local storage and carefully scheduling operations to minimize data movement. GPUs address this through both higher memory bandwidth and massive parallelism, but the underlying physics remains unchanged: data movement dominates computation cost, driving the adoption of specialized hardware architectures from data center GPUs to TinyML accelerators.

The memory-computation trade-off manifests differently across the cloud-to-edge spectrum introduced in ML Systems. Cloud servers can afford more memory and power to maximize throughput, while mobile devices must carefully optimize to operate within strict power budgets. Training systems prioritize computational throughput even at higher energy costs, while inference systems emphasize energy efficiency. These different constraints drive different optimization strategies across the ML systems spectrum, ranging from memory-rich cloud deployments to heavily optimized TinyML implementations.

These single-machine constraints compound when scaling across multiple machines: deep learning models consume exponentially more resources as they grow, making distributed computing a necessity rather than a luxury. Memory optimization strategies like quantization and pruning are detailed in Model Compression, hardware architectures and their memory systems in Hardware Acceleration, and scaling laws in Model Training.

The infrastructure demands traced earlier (massive parallelism, memory walls, energy-dominated data movement) arise from four properties of neural computation: adaptive parameterization (weights change during training), parallel integration (many simple units operate simultaneously), hierarchical representation (layers compose low-level features into high-level concepts), and resource economy (data reuse minimizes energy-intensive movement). These properties manifest concretely in the fundamental building block of neural computation: the artificial neuron13. Just as understanding a single transistor reveals how complex processors work, understanding the artificial neuron reveals how million-parameter networks operate.

13 Neuron: McCulloch and Pitts (1943) established the first mathematical model with the “multiply-accumulate then activate” pattern, which is the direct origin of the computational properties discussed in the text. The model’s structure enables parallel integration (many simple units), its weights provide the mechanism for adaptive parameterization, and its output feeds subsequent layers to create hierarchical representations. Modern accelerators descend from this abstraction by devoting much of their datapath area and scheduling machinery to the fused multiply-add (FMA) units that implement it efficiently.

The artificial neuron as a computing primitive

The basic unit of neural computation, the artificial neuron (or node), serves as a simplified mathematical abstraction designed for efficient digital implementation (McCulloch and Pitts 1943). This building block enables complex networks to emerge from simple components working together. Compare the biological and artificial neurons side by side in Figure 8 to see how this computational model distills biological complexity into a standardized processing unit.

The mapping in Figure 8 traces a signal through four stages, each translating a biological structure into a mathematical operation. Table 4 formalizes these correspondences.

| Biological Structure | Artificial Component | Mathematical Operation | Engineering Role |

|---|---|---|---|

| Dendrites (receive signals) | Input Vector | \(\mathbf{x} = [x_1, \dots, x_n]\) | Data ingestion from sensors or prior layers |

| Synapses (modulate strength) | Weight Vector | \(\mathbf{w} = [w_1, \dots, w_n]\) | Learnable parameters encoding importance |

| Cell Body (integrates signals) | Linear Function \(z\) | \(z = \sum (x_i \cdot w_i) + b\) | Linear integration of feature signals |

| Axon (fires output) | Activation Function \(f\) | \(a = f(z)\) | Nonlinear thresholding and signal propagation |

Follow the signal path through the right panel of Figure 8 to see this pipeline in action:

Input Reception (Dendrites → \(x_1, x_2, \dots, x_n\)): The neuron receives a vector of input features \(\mathbf{x}\). In a system like MNIST digit recognition, these represent individual pixel intensities—the digital equivalent of signals arriving at a biological neuron’s dendrites.

Weighted Modulation (Synapses → \(w_1, w_2, \dots, w_n\)): Each input is multiplied by a learnable weight \(w_i\), just as synaptic strengths modulate biological signals. These weights act as “gain” controls, determining how much influence each feature has on the final decision. A bias term \(b\) (shown as the top input \(x_0 = 1\) in Figure 8) shifts the activation threshold. This is where the model’s “knowledge” is stored.

Signal Aggregation (Cell Body → Linear Function \(z\)): The neuron integrates the weighted signals, producing a single scalar value \(z = \sum (x_i \cdot w_i) + b\). This mirrors how a biological cell body sums incoming electrochemical signals to determine whether the neuron has received enough evidence for a particular pattern.

Nonlinear Activation (Axon → Activation Function \(f\)): The aggregated signal passes through an activation function \(f(z)\), producing the output \(y\). This mirrors the axon’s all-or-nothing firing decision: the nonlinearity determines whether the neuron “fires” a signal to the next layer. Unlike the biological case, \(f\) can produce graded outputs (for example, ReLU passes positive values through, zeroes negatives), but the principle is the same—thresholding followed by propagation.

From a systems engineering perspective, this translation reveals why neural networks have such demanding computational requirements. Each “simple” neuron requires \(N\) multiply-accumulate (MAC)14 operations and \(2N+2\) memory accesses (loading \(N\) inputs and \(N\) weights, plus the bias and output). When replicated millions of times across a network, these primitives create the massive arithmetic and bandwidth demands that define modern AI infrastructure.

14 MAC (Multiply-Accumulate): The atomic operation of neural computation: \(a \leftarrow a + (b \times c)\). Modern accelerators are often marketed in FLOPs because a fused multiply-add is counted as two floating-point operations. On that convention, an NVIDIA H100 is rated at 989 dense FP16 TFLOP/s, or about 494 trillion MAC/s if one MAC is counted as one multiply-accumulate. Every layer size and batch size decision ultimately reduces to how many MACs fit within the latency and power budget.

The transition from individual neurons to integrated systems requires navigating the central trade-off between representational capacity and computational cost. While silicon transistors operate at gigahertz frequencies, millions of times faster than biological chemical signaling, the sheer volume of operations in deep networks creates unique bottlenecks.

Replicating intelligent behavior in silicon confronts three interrelated system-level constraints. The memory wall becomes acute as models grow to billions of parameters, making data movement the primary bottleneck rather than raw computation. Concurrency clashes with dependency: while layers can be computed in parallel across thousands of cores for throughput, the sequential nature of deep networks (layer \(\ell+1\) depends on layer \(\ell\)) creates fundamental latency limits. Precision also trades against power: digital systems achieve high accuracy through precise 32-bit or 64-bit math, but each bit increases the energy cost of every operation, driving the search for minimum viable precision explored in Model Compression.

Addressing these constraints requires two complementary strategies. Architectural inductive bias encodes problem-specific structure directly into the network design (convolutional networks for images, recurrent networks for sequences), reducing the search space the optimizer must navigate (Mitchell 1980). Computational scaling compensates for remaining complexity through brute-force optimization on massive hardware arrays. Modern AI engineering sits at the intersection of these two paths: clever architectures shrink the problem, and massive scale solves what remains.

Hardware and software requirements

Translating neural concepts to silicon carries a physical cost. Feature extraction becomes weighted linear sums, thresholding becomes nonlinear activation functions, and pattern interaction becomes fully connected layers, all implemented as matrix operations that modern hardware must execute efficiently. A single matrix multiplication in code translates to millions of transistors switching at high frequency, generating heat and consuming significant power. Each neural network operation creates a specific hardware demand: activation functions require fast nonlinear units, weight operations require high-bandwidth memory access, parallel computation requires specialized processors, and learning algorithms require gradient computation hardware. These demands interact: the sheer volume of weight parameters creates a storage problem, the need to move those weights to processing units creates a bandwidth problem, and the learning process compounds both by requiring space for gradients and optimizer state alongside the weights themselves.

A key difference from traditional computing is that neural network “memory” is distributed across all weights rather than stored at specific addresses. Every prediction requires reading a significant portion of the model’s parameters, and every training step requires coordinating weight updates across the entire network. This creates a fundamental tension between storage capacity and access bandwidth that biological neural systems avoid (synapses both store and process locally). The human brain operates on approximately twenty watts (Raichle and Gusnard 2002); artificial neural networks demand orders of magnitude more energy, primarily because of this data movement overhead. This energy gap drives the specialized hardware architectures covered in Hardware Acceleration and the optimization strategies explored in Model Compression.

These hardware demands did not emerge overnight. The tension between algorithmic ambition and available silicon has shaped the entire trajectory of neural network research, from the earliest perceptrons to today’s trillion-parameter models.

Evolution of neural network computing

Deep learning evolved to meet these challenges through concurrent advances in hardware and algorithms. The journey began with early artificial neural networks in the 1950s, marked by the introduction of the Perceptron15 (Rosenblatt 1958). While novel in concept, these early systems were severely limited by the computational capabilities of their era: mainframe computers that lacked both the processing power and memory capacity needed for complex networks.

15 Perceptron: A machine built to execute a learning algorithm, directly linking hardware and software from the start. Its single-layer architecture was fundamentally constrained to linearly separable problems, a limitation Minsky and Papert later proved was algorithmic, not just computational. This early failure demonstrated that without sufficient model depth (that is, layers), even custom-built hardware with 400 photocell inputs was insufficient for complex tasks.

16 Backpropagation: Short for “backward propagation of errors,” the algorithm solves the credit assignment problem by determining which of millions of weights caused a given error, using the chain rule. Werbos applied it to neural networks in 1974, but the 1986 Rumelhart, Hinton, and Williams publication demonstrated practical effectiveness. The systems cost: backprop requires storing forward-pass activations and additional training state, creating the several-times-higher memory footprint quantified later in the chapter.

The backpropagation16 algorithm, first applied to neural networks by Paul Werbos in his 1974 PhD thesis and building on Seppo Linnainmaa’s 1970 work on automatic differentiation, was popularized by Rumelhart et al. (1986). Their publication demonstrated the algorithm’s practical effectiveness and brought it to widespread attention in the machine learning community, triggering renewed interest in neural networks. The systems-level implementation of this algorithm is detailed in Backpropagation mechanics. Despite this breakthrough, the computational demands far exceeded available hardware capabilities. Training even modest networks could take weeks, making experimentation and practical applications challenging. This mismatch between algorithmic requirements and hardware capabilities contributed to a period of reduced interest in neural networks.

The historical trajectory demonstrates a recurring systems engineering lesson: an algorithm is only as effective as the hardware available to execute it. The decades-long gap between the mathematical formulation of backpropagation17 and its widespread adoption was a latency in infrastructure, not a failure of theory. Efficient ML systems engineering requires co-designing algorithms and silicon together. The deep learning revolution was sparked by the convergence of data availability, algorithmic maturity, and the parallel processing power of GPUs, not by a new mathematical discovery alone.

17 Algorithm-Hardware Adoption Lag: Backpropagation was mathematically complete by 1974 (Werbos) but not widely adopted until 1986—a 12-year gap explained by insufficient compute: training a meaningful network required hardware that did not exist. The pattern recurs more softly with attention: attention mechanisms were introduced for neural machine translation in 2014, and transformers made them central in 2017; GPUs were sufficient for the original Transformer experiments, while later TPU- and GPU-scale infrastructure enabled much larger deployments. The implication is that apparently “failed” algorithms may simply be hardware-premature. When evaluating today’s computationally intractable techniques, the right diagnostic is not whether the algorithm works on current hardware but what hardware regime would make it work.

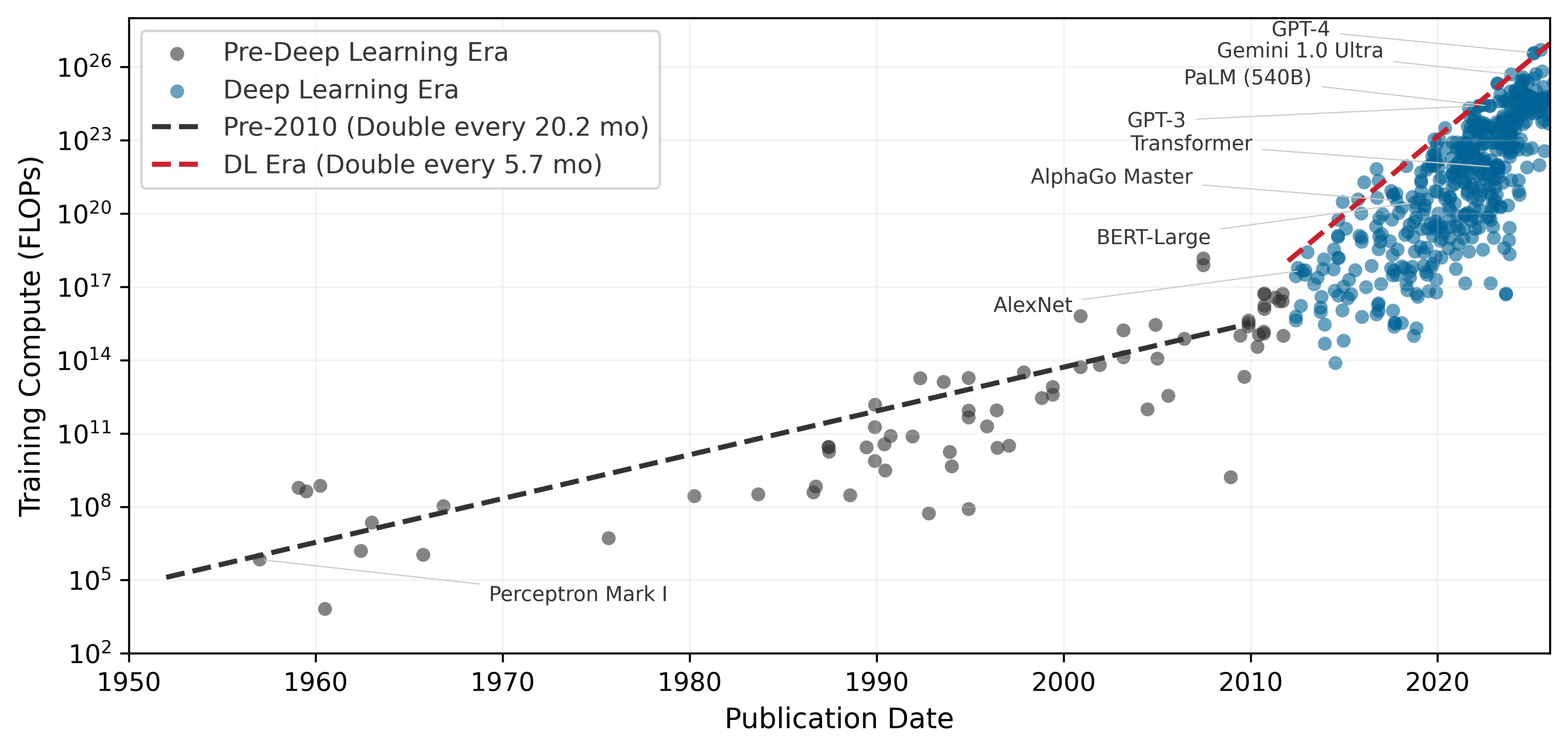

While the preceding sections established the technical foundations of deep learning, the term itself gained prominence in the 2010s, coinciding with advances in computational power and data accessibility. The scale of this computational explosion is difficult to grasp without visualization. Figure 9 plots seven decades of AI training compute on a logarithmic scale, revealing two fitted regimes: total training compute, measured in floating-point operations (FLOPs), followed a comparatively slow pre-2010 trend, then the post-2012 deep-learning frontier accelerated sharply. Large-scale models after 2015 sit orders of magnitude above the pre-2010 trajectory, showing that modern progress reinvests hardware and algorithmic gains into much larger training runs.

Table 5 grounds these trends in concrete systems, showing how parameters, compute, and hardware co-evolved across four decades of neural network development.

| Year | System | Params | Train FLOPs | Hardware | Train Time | Error/Task |

|---|---|---|---|---|---|---|

| 1989 | LeNet-1 | ~9.8K | \(10^{11}\)–\(10^{12}\) | Sun-4/260 workstation | 3 days | 1.0 percent (USPS) |

| 1998 | LeNet-5 | \(60\text{K} \pm 1\text{K}\) | \(10^{14} \pm 1\text{ OoM}\) | SGI Origin 2000 (200 MHz) | 2–3 days | 0.95 percent (MNIST) |

| 2012 | AlexNet | ~60M | \(5 \times 10^{17}\) | 2\(\times\) GTX 580 GPUs | 5–6 days | 15.3 percent (ImageNet) |

| 2015 | ResNet-152 | ~60M | \(10^{19} \pm 0.5\text{ OoM}\) | 8\(\times\) Tesla K80 GPUs | ~3 weeks | 3.6 percent (ImageNet) |

| 2020 | GPT-3 | 175B (exact) | \(3 \times 10^{23}\) | ~10K V100 GPUs | weeks | N/A (language) |

| 2023 | GPT-4 | ~1.8T (MoE, est.) | \(10^{24}\)–\(10^{25}\) | 10–25K A100s (est.) | months | N/A (language) |

Beyond raw compute, this exponential growth carries an energy cost that systems engineers cannot ignore. Training LeNet-1 in 1989 consumed roughly 54 kWh, about a few days of household electricity. A GPT-4-scale training run, using public GPU-day estimates and a datacenter-overhead factor, lands around 48,000 MWh—enough to power roughly 4,571 US homes for a year18. The energy cost of AI has moved from negligible to industrial, forcing engineers to treat energy efficiency (Joules per operation) as a primary design constraint alongside raw FLOPS. The quantitative energy analysis, including Horowitz’s data-movement-dominates-compute numbers and the full energy hierarchy, appears in Hardware Acceleration where it can be connected to concrete hardware architectures.

18 Training Energy Scale: This estimate uses 10.5 MWh/year as a representative US household electricity budget and treats GPT-4’s public GPU-day estimate as A100-equivalent accelerator time with datacenter overhead. The point is order of magnitude, not an audited utility bill: a single frontier training run now rivals a small industrial facility’s energy budget, making Joules-per-operation a first-order design constraint alongside FLOPS.

Three quantitative patterns emerge from this historical data. The plotted post-2012 training-compute frontier doubles on the order of months, while broader summaries that smooth across model families and account for reporting uncertainty show similarly rapid annual growth. Separately, the compute required to achieve a fixed benchmark has improved substantially due to algorithmic and systems advances. Training costs grow more slowly than raw compute because hardware utilization, reduced precision, and software efficiency also improve. Frontier model training costs have nonetheless moved from workstation-scale budgets into industrial-scale investments. These patterns have direct implications for systems engineering: compute scaling determines infrastructure investment timelines, algorithmic efficiency justifies continuous architecture research, and the cost-compute gap shapes build-vs.-buy decisions for ML teams.

Parallel advances across three dimensions drove these evolutionary trends: data availability, algorithmic innovations, and computing infrastructure. Follow the arrows in Figure 10 to see this reinforcing cycle in motion: faster computing infrastructure enabled processing larger datasets, larger datasets drove algorithmic innovations, and better algorithms demanded more sophisticated computing systems. This reinforcing cycle continues to drive progress today.

The data revolution transformed what was possible with neural networks. The rise of the internet and digital devices created vast new sources of training data: image sharing platforms provided millions of labeled images, digital text collections enabled language processing at scale, and sensor networks generated continuous streams of real-world data. This abundance provided the raw material neural networks needed to learn complex patterns effectively.

Algorithmic innovations made it possible to use this data effectively. New methods for initializing networks and controlling learning rates made training more stable. Techniques for preventing overfitting allowed models to generalize better to new data. Researchers discovered that neural network performance scaled predictably with model size, computation, and data quantity, leading to increasingly ambitious architectures.

These algorithmic advances created demand for higher-throughput computing infrastructure, which evolved in response. On the hardware side, GPUs provided the parallel processing capabilities needed for efficient neural network computation, and specialized AI accelerators like TPUs19 (Jouppi et al. 2023) pushed performance further. High-bandwidth memory systems and fast interconnects addressed data movement challenges. Software advances matched the hardware evolution: frameworks and libraries simplified building and training networks, distributed computing systems enabled training at scale, and tools for optimizing model deployment reduced the gap between research and production.

19 Tensor Processing Unit (TPU): Google’s custom accelerator, first deployed internally in 2015, optimized specifically for the matrix multiplications that dominate neural network workloads. The TPU v1 achieved 92 TOPS for INT8 inference at 75 W, a power efficiency that general-purpose GPUs of the era could not match. The name “Tensor Processing Unit” reflects the design decision to sacrifice general-purpose flexibility for maximum throughput on the specific operation that neural networks need most.

The convergence of data availability, algorithmic innovation, and computational infrastructure created the foundation for modern deep learning. Understanding the computational operations that drive these infrastructure requirements is essential: when scaled across millions of parameters and billions of training examples, simple mathematical operations create the massive computational demands that shaped this evolution.

Checkpoint 1.1: Understanding deep learning's emergence

If any of these concepts remain unclear, review the relevant sections before continuing. The mathematical details that follow build directly on this conceptual foundation.

The historical trajectory from Perceptrons through AI winters to the GPU-driven revolution reveals a recurring pattern: algorithms outpace hardware, creating latency between discovery and adoption, until infrastructure catches up and triggers an explosion of capability. This pattern continues today as frontier models push against memory walls and energy budgets. Understanding the mathematical operations that create these pressures is essential for navigating the next cycle—which requires examining the computational primitives themselves.

Self-Check: Question

A team replaces a hand-coded digit classifier (≈100 comparisons, 784 bytes of working state) with the chapter’s 784→128→64→10 MLP (≈109,000 MACs, ≈438 KB of weights) on the same MNIST input. Which systems consequence should they expect first when the new model goes live on a commodity CPU?

- The workload becomes more sequential and fits entirely inside L1 cache, reducing memory traffic.

- Branch prediction becomes the dominant bottleneck because each neuron executes many if-then tests.

- The workload shifts to dense matrix math whose weight footprint exceeds most L1 caches, creating cache-level memory traffic that is absent from the hand-coded rule system.

- Specialized hardware becomes unnecessary because the model has learned the original rules and can discard them.

A CV team must choose between (a) a HOG + SVM classical pipeline they already use, and (b) a convnet of comparable task accuracy. Using the chapter’s treatment of feature engineering as the classical bottleneck, explain the systems-engineering consequence of each choice when the product must extend to six new object categories over the next year.

A vendor proposes that 5× faster single-threaded CPUs would eliminate the need for GPUs or TPUs in deep learning. Based on the section’s account of computational infrastructure requirements, what is the strongest refutation?

- CPUs cannot store neural network weights in registers, so no CPU will ever execute matrix multiplications.

- Deep learning is dominated by dense parallel matrix multiplications whose throughput is bounded by wide SIMD lanes and off-chip memory bandwidth, neither of which is addressed by raising single-thread clock speed.

- Modern CPUs force the optimizer to use smaller learning rates, which offsets any clock-speed gain.

- Faster CPUs would make the softmax output layer too precise, causing training instability.

A pipeline engineer depends on domain experts to invent descriptors (edge histograms, keypoint detectors, texture filters) for each new vision task. One quarter later, the team must support six additional categories. Using the section’s framing, explain two distinct systems consequences of staying inside this feature-engineering regime rather than switching to learned representations.

A reviewer argues that a 1970s neural algorithm that “failed” in its decade should be permanently dismissed. The chapter’s history of backpropagation and attention suggests a different systems-engineering stance. Which response best matches?

- Dismiss the algorithm permanently, since algorithms that were once infeasible remain infeasible.

- Ask which hardware or data regime would make the algorithm practical, because the history shows algorithms can be hardware-premature rather than wrong — backpropagation waited for GPU matrix throughput, and attention waited for dense HBM.

- Replace it with rule-based logic so it runs on current CPUs immediately.

- Assume that more labeled data alone will revive it, without any change in hardware or cost structure.

The chapter characterizes the rise of modern deep learning as a self-reinforcing cycle among data abundance, algorithmic innovation, and compute infrastructure. Which description most accurately captures how the cycle produces accelerating returns rather than additive gains?

- The three factors progressed in a strict linear sequence — compute, then algorithms, then data — each finishing before the next began.

- Each factor contributed roughly equally and independently, with no causal interaction among them.

- Each factor raised the marginal return on the others: abundant data justified larger algorithms, larger algorithms exposed which compute paths were worth accelerating, and faster compute justified collecting still more data.

- Compute infrastructure was the single decisive factor; data abundance and algorithmic innovation were downstream consequences of cheap GPUs.

Neural Network Fundamentals

Compute grew exponentially, algorithms matured, and data became abundant. The question now is why the computational demands are so extreme. A GPU processes neural networks faster than a CPU not because of raw clock speed but because of the specific mathematical operations neural networks perform. Training requires more memory than inference not because of software overhead but because the chain rule demands storing every intermediate result. Understanding these operations reveals how simple arithmetic on individual neurons compounds into the infrastructure requirements that shaped modern AI.

The concepts here apply to all neural networks, from simple classifiers to large language models. While architectures evolve and new paradigms emerge, these fundamentals remain constant: weighted sums, nonlinear activations, gradient-based learning. Mastering these operations and their computational characteristics enables reasoning about any neural network’s resource requirements.

Why depth matters: The power of hierarchical representations

A single-layer network attempting to classify handwritten digits must map raw pixels directly to labels, essentially memorizing every variation of every digit. A network with three layers solves the same problem with far fewer parameters by decomposing it hierarchically. The question is why depth provides such dramatic representational advantages, and the answer grounds all the mathematical development that follows.

Deep networks succeed because they use compositionality: complex patterns decompose into simpler patterns that themselves decompose further. In image recognition, pixels combine into edges, edges into textures, textures into parts, and parts into objects. This hierarchical decomposition reflects the structure of the world itself and explains why “deep” learning earns its name.

Consider recognizing the digit “seven” in our MNIST example. A single-layer network would need to directly map all 784 pixel values to a decision, essentially memorizing every variation of how people write “seven.” A deep network takes an entirely different approach:

- Layer 1 learns simple edge detectors: vertical lines, horizontal lines, diagonal strokes

- Layer 2 combines edges into shapes: the horizontal top stroke of a “seven,” the diagonal downstroke

- Layer 3 combines shapes into complete digit patterns

Each layer builds on the previous, exponentially expanding representational capacity with only linear parameter growth. This hierarchy enables efficiency that shallow networks cannot match. The same edge detectors learned for “seven” also detect edges in “one,” “four,” and every other digit. This parameter reuse means a deep network with 100K parameters can represent patterns that would require millions of parameters in a shallow network attempting direct pixel-to-label mapping. However, the choice between adding layers and widening existing ones is not symmetric: depth and width contribute to representational capacity through very different mechanisms, with consequences that compound rather than cancel.

Systems Perspective 1.2: The depth vs. width trade-off

However, depth introduces engineering challenges. Each additional layer:

- Adds sequential dependencies (layer \(L+1\) waits for layer \(L\)), limiting parallelism

- Increases gradient path length, risking vanishing/exploding gradients

- Requires storing intermediate activations for backpropagation

Modern architectures balance depth (representational power) against width (parallelism). A network with ten layers of 100 neurons has the same 1,000 total hidden neurons as one with two layers of 500 neurons, but fundamentally different computational characteristics. The deeper network can represent more complex functions; the wider network can compute all neurons in a layer simultaneously.

Biological visual systems employ the same hierarchical decomposition. The specific architectures examined in Network Architectures formalize different ways to encode this hierarchical structure, from the local connectivity of convolutional networks to the attention mechanisms of transformers.

The intuition for why depth matters motivates the next question: how do neural networks implement this hierarchical processing? The following sections develop the precise mechanics: how neurons compute, how layers connect, and how information flows from input to output.

Network architecture fundamentals

A neural network’s architecture determines how information flows from input to output. Modern networks can be enormously complex, but they all build on a few organizational principles that shape both implementation and the computational infrastructure they demand.

To ground these concepts in a concrete example, we use handwritten digit recognition throughout this section, specifically the task of classifying images from the MNIST dataset (LeCun et al. 1998). This seemingly simple task reveals all the core principles of neural networks while providing intuition for more complex applications.

Example 1.1: Running example: MNIST digit recognition

Input Representation: Each image contains 784 pixels (\(28{\times}28\)), with values ranging from 0 (white) to 255 (black). We normalize these to the range [0,1] by dividing by 255. When fed to a neural network, these 784 values form our input vector \(\mathbf{x} \in \mathbb{R}^{784}\).

Output Representation: The network produces 10 values, one for each possible digit. These values represent the network’s confidence that the input image contains each digit. The digit with the highest confidence becomes the prediction.

Why This Example: MNIST is small enough to understand completely (784 inputs, ~100K parameters for a simple network) yet large enough to be realistic. The task is intuitive: everyone understands what “recognize a handwritten seven” means, making it ideal for learning neural network principles that scale to much larger problems.

Network Architecture Preview: A typical MNIST classifier might use: 784 input neurons (one per pixel) → 128 hidden neurons → 64 hidden neurons → 10 output neurons (one per digit class). As we develop concepts, we will reference this specific architecture.

Each architectural choice, from how neurons are connected to how layers are organized, creates specific computational patterns that must be efficiently mapped to hardware. This mapping between network architecture and computational requirements is essential for building scalable ML systems.

Nonlinear activation functions

The conceptual framework of layers and hierarchical processing leads to the computational machinery within each layer. Central to all neural architectures is a basic building block: the artificial neuron or perceptron, which implements the biological-to-artificial translation principles established earlier. From a systems perspective, understanding the perceptron’s mathematical operations matters because these simple operations, replicated millions of times across a network, create the computational bottlenecks discussed earlier.

Consider our MNIST digit recognition task. Each pixel in a \(28{\times}28\) image becomes an input to our network. A single neuron in the first hidden layer might learn to detect a specific pattern, perhaps a vertical edge that appears in digits like “one” or “seven.” This neuron must somehow combine all 784 pixel values into a single output that indicates whether its pattern is present.

The perceptron accomplishes this through weighted summation. It takes multiple inputs \(x_1, x_2, ..., x_n\) (in our case, \(n=784\) pixel values), each representing a feature of the object under analysis. For digit recognition, these features are the raw pixel intensities.

With this weighted summation, a perceptron can perform either regression or classification tasks. For regression, the numerical output \(\hat{y}\) is used directly. For classification, the output depends on whether \(\hat{y}\) crosses a threshold: above the threshold, the perceptron outputs one class (for example, “yes”); below it, another class (for example, “no”).

Follow the signal path through Figure 11 to see how weighted inputs combine with activation functions to produce a decision: each input \(x_i\) multiplies by its corresponding weight \(w_{ij}\), the products sum with a bias term, and the activation function produces the final output.

Layers of perceptrons work in concert, with each layer’s output feeding the subsequent layer. This hierarchical arrangement creates deep learning models capable of tackling increasingly sophisticated tasks, from image recognition to natural language processing.

Each input \(x_i\) has a corresponding weight \(w_{ij}\), and the perceptron multiplies each input by its matching weight. The intermediate output, \(z\), is computed as the weighted sum of inputs in Equation 1: \[ z = \sum (x_i \cdot w_{ij}) \tag{1}\]

In plain terms, each input feature is scaled by how important it is (its weight), and the results are summed into a single score. This is the dot product of two vectors—the fundamental operation that hardware accelerators are designed to execute at maximum throughput, and the reason neural network performance is measured in multiply-accumulate (MAC) operations per second.

A bias term \(b\) shifts the linear output up or down, giving the model additional flexibility to fit the data. Thus, the intermediate linear combination computed by the perceptron including the bias becomes Equation 2: \[ z = \sum (x_i \cdot w_{ij}) + b \tag{2}\]

Each neuron thus requires \(N\) multiply-accumulate operations and \(2N+2\) memory accesses (loading \(N\) inputs and \(N\) weights, plus the bias and output). A layer of \(M\) neurons repeats this \(M\) times, so the layer’s total cost is \(M \times N\) MACs—exactly the matrix multiplication \(\mathbf{x}\mathbf{W}\) that hardware must execute.

Activation functions are critical nonlinear transformations that enable neural networks to learn complex patterns by converting linear weighted sums into nonlinear outputs. Without activation functions, multiple linear layers would collapse into a single linear transformation, severely limiting the network’s expressive power. Three commonly used element-wise activation functions and one vector-level function (softmax) each exhibit distinct mathematical characteristics that shape their effectiveness in different contexts (Figure 12).

The choice of activation function affects both learning effectiveness and computational efficiency—and the history of that choice reveals why systems constraints shape algorithmic design. ReLU (\(\max(0, x)\)) became the default hidden-layer activation for many feed-forward and convolutional networks, and it remains a useful baseline for good reason: it is computationally trivial (a single comparison), its gradient never vanishes for positive inputs, and it introduces natural sparsity. ReLU’s dominance, however, only makes sense against the backdrop of what came before and the alternatives used in modern architectures, such as the Gaussian Error Linear Unit (GELU), Sigmoid-weighted Linear Unit (SiLU), and gated variants in transformer feed-forward blocks. The earliest networks used sigmoid and tanh activations, whose smooth S-curves seemed mathematically elegant but created a systems nightmare: gradients that shrank exponentially through deep layers, killing learning before it could begin. Understanding why sigmoid and tanh fail in deep networks is essential for understanding why ReLU succeeded and what its own limitations imply for modern architectures.

Sigmoid

The sigmoid function20 maps any input value to a bounded range between 0 and 1, as defined in Equation 3: \[ \sigma(x) = \frac{1}{1 + e^{-x}} \tag{3}\]

20 Sigmoid: From Greek sigma + eidos (“sigma-shaped”), referring to the S-curve that maps inputs to the bounded (0, 1) range. The mapping requires a floating-point exponential (\(e^{-x}\)), which costs ~2,500 transistors and 20–40 CPU cycles per evaluation, vs. ReLU’s single comparator at ~50 transistors and one cycle—a 50\(\times\) silicon cost difference per activation. This arithmetic penalty scales with every neuron in every layer, making sigmoid’s replacement by ReLU as much a hardware efficiency decision as a gradient stability one.

The S-shaped curve produces outputs interpretable as probabilities, making sigmoid particularly useful for binary classification tasks. For large positive inputs, the function approaches one; for large negative inputs, it approaches 0. The smooth, continuous nature of sigmoid makes it differentiable everywhere, which is necessary for gradient-based learning.

Sigmoid has a significant limitation: for inputs with large absolute values (far from zero), the gradient becomes extremely small, a phenomenon called the vanishing gradient problem21. During backpropagation, these small gradients multiply together across layers, causing gradients in early layers to become exponentially tiny. This effectively prevents learning in deep networks, as weight updates become negligible.

21 Vanishing Gradient Problem: The chain rule’s multiplication of gradients across layers causes this failure mode when using activations like sigmoid, whose derivative is always less than 1. With sigmoid’s maximum derivative of 0.25, the gradient in a 10-layer network shrinks by a factor of nearly one million (\(0.25^{10} \approx 10^{-6}\)), preventing weights in early layers from updating.

Sigmoid outputs are not zero-centered (all outputs are positive). This asymmetry can cause inefficient weight updates during optimization, as gradients for weights connected to sigmoid units will all have the same sign.

Tanh

The hyperbolic tangent function22 addresses sigmoid’s zero-centering limitation by mapping inputs to the range \((-1, 1)\), as defined in Equation 4: \[ \tanh(x) = \frac{e^x - e^{-x}}{e^x + e^{-x}} \tag{4}\]

22 Tanh (Hyperbolic Tangent): By centering its output range on zero, tanh allows weight gradients to be both positive and negative, fixing the all-positive update bias that slows sigmoid-based training. While its computational cost is similar to sigmoid, this zero-centering is critical in recurrent architectures like LSTMs to prevent runaway activation values across many time steps, where unbounded activations would quickly exceed hardware floating-point limits.

Tanh produces an S-shaped curve similar to sigmoid but centered at zero: negative inputs map to negative outputs and positive inputs to positive outputs. This symmetry balances gradient flow during training, often yielding faster convergence than sigmoid.

Like sigmoid, tanh is smooth and differentiable everywhere, and it still suffers from the vanishing gradient problem for inputs with large magnitudes. When the function saturates (approaches -1 or 1), gradients shrink toward zero. Despite this limitation, tanh’s zero-centered outputs make it preferable to sigmoid for hidden layers in many architectures, particularly in recurrent neural networks where maintaining balanced activations across time steps is important.

Both sigmoid and tanh share a critical limitation: gradient saturation at extreme input values. The search for an activation function that avoids this problem while remaining computationally efficient led to one of deep learning’s most important innovations.

ReLU

The Rectified Linear Unit (ReLU)23 function was known for decades before deep learning, but Nair and Hinton (2010) demonstrated that ReLU enabled more effective training of deep networks. Combined with GPU computing, dropout24, and other innovations, ReLU helped enable the AlexNet breakthrough in 2012 (Krizhevsky et al. 2012). The ReLU function is defined in Equation 5: \[ \text{ReLU}(x) = \max(0, x) = \begin{cases} x & \text{if } x > 0 \\ 0 & \text{if } x \leq 0 \end{cases} \tag{5}\]

23 ReLU (Rectified Linear Unit): Unlike the costly exponential operations in prior activation functions, ReLU’s max(0, x) operation compiles to a single, fast hardware instruction. This efficiency, and its solution to the vanishing gradient problem, were essential for making the deep architectures of the AlexNet era computationally tractable. The resulting 5–10\(\times\) faster activation computation per element was a key enabler of the 2012 breakthrough.

24 Dropout: Randomly deactivating neurons during training forces a network to learn redundant representations, a key innovation that helped enable the AlexNet breakthrough. This creates a systems-level divergence between the computational graphs for training (stochastic) and inference (deterministic). Failing to switch from the training to the inference graph is a common bug that silently degrades accuracy by 5–15 percent.

ReLU’s characteristic shape—a straight line for positive inputs and zero for negative inputs—provides three advantages that explain its dominance. First, gradient flow remains intact: for positive inputs, ReLU’s gradient is exactly one, allowing gradients to propagate unchanged through many layers and preventing the vanishing gradient problem that plagues sigmoid and tanh in deep architectures. Second, ReLU introduces natural sparsity by zeroing all negative activations. Typically, about 50 percent of neurons in a ReLU network output zero for any given input, reducing overfitting and improving interpretability. Third, computational efficiency improves dramatically: unlike sigmoid and tanh, which require expensive exponential calculations, ReLU is computed with a single comparison, output = (input > 0) ? input : 0, translating to faster execution and lower energy consumption, particularly important on resource-constrained devices.

ReLU is not without drawbacks. The dying ReLU problem—neurons that permanently output zero and cease learning—occurs when neurons become stuck in the inactive state. If a neuron’s weights evolve during training such that the preactivation \(z = \mathbf{w}^T\mathbf{x} + b\) is consistently negative across all training examples, the neuron outputs zero for every input. Since ReLU’s gradient is also zero for negative inputs, no gradient flows back through this neuron during backpropagation: the weights cannot update, and the neuron remains dead. This can happen with large learning rates that push weights into unfavorable regions. From a systems perspective, dead neurons represent wasted capacity: parameters that consume memory and compute during inference but contribute nothing to the output. In extreme cases, 10–40 percent of a network’s neurons can die during training, effectively reducing model capacity without reducing resource consumption. Careful initialization (He et al. 2015), moderate learning rates, and architectural choices (leaky ReLU variants or batch normalization25 (Ioffe and Szegedy 2015)) help mitigate this issue.

25 Batch Normalization Systems Cost: BatchNorm adds two learned parameters per feature (scale \(\gamma\) and shift \(\beta\)), a synchronization barrier during training (requiring all-reduce across the batch dimension), and diverges in computational graph structure between training (live mean/variance from the batch) and inference (frozen running statistics). The critical failure mode is small-batch sensitivity: batch sizes below 8–16 produce noisy mean/variance estimates that degrade accuracy by 3–8 percent, forcing larger batches and more memory. This coupling between a regularization technique and hardware batch-size constraints is why LayerNorm replaced BatchNorm in transformers—LayerNorm normalizes across features, not batch, making its statistics independent of batch size.

Softmax

Unlike the previous activation functions that operate element-wise (independently on each value), softmax26 is a vector-level function: it considers all values simultaneously to produce a probability distribution. In simple classifiers, softmax is commonly used in the output layer rather than as an element-wise hidden activation; in modern architectures it is also used internally, most notably to normalize attention weights. The softmax function is defined in Equation 6: \[ \text{softmax}(z_i) = \frac{e^{z_i}}{\sum_{j=1}^K e^{z_j}} \tag{6}\]

26 Softmax: The name reflects its role as a “soft” or differentiable version of argmax, a function that must evaluate an entire vector to find its maximum value. This vector-wise operation is not used as a drop-in replacement for element-wise nonlinearities such as ReLU, but it is central whenever a model must normalize a vector of scores, including classification heads and attention layers. Critically, its use of exponentiation creates a numerical stability hazard: inputs greater than ~88 will overflow standard 32-bit floats, a common source of silent NaN failures in production.

27 Logits (log-odds units): Short for “log-odds units,” these raw scores preserve the relative ordering of class evidence before softmax normalizes them into probabilities. Because argmax over logits and argmax over softmax probabilities always select the same class, optimized inference pipelines skip the softmax computation entirely when only the top prediction is needed, saving \(K\) exponentiations per sample.

For a vector of \(K\) values (often called logits27), softmax transforms them into \(K\) probabilities that sum to 1. One component of the softmax output appears in Figure 12 (bottom-right); in practice, softmax processes entire vectors where each element’s output depends on all input values.

In multi-class classifiers, softmax is usually used in the output layer. By converting arbitrary real-valued logits into probabilities, softmax enables the network to express confidence across multiple classes. The class with the highest probability becomes the predicted class. The exponential function ensures that larger logits receive disproportionately higher probabilities, creating clear distinctions between classes when the network is confident.

The mathematical relationship between input logits and output probabilities is differentiable, allowing gradients to flow back through softmax during training. When combined with cross-entropy loss (discussed in Section 1.3.3), softmax produces particularly clean gradient expressions that guide learning effectively. Beyond their mathematical properties, the choice of activation functions has direct consequences for hardware efficiency.

Systems Perspective 1.3: Activation functions and hardware

The transistor tax: Logic unit cost

The hardware dominance extends beyond speed to silicon area. In computer architecture, we measure the Logic Unit Cost in terms of transistor count and energy per operation.