Network Architectures

Purpose

Why is choosing a neural network architecture an infrastructure commitment rather than a modeling decision?

Selecting a neural network architecture is not a modeling decision but a contract with physics. A convolutional network commits the system to spatially local computation that parallelizes naturally across hardware cores. A transformer commits the system to attention mechanisms whose memory grows quadratically with sequence length. A recommendation model commits the system to enormous embedding tables that dominate memory and turn every training step into a bandwidth-bound lookup. These are not abstract trade-offs resolved during model selection; they are physical consequences that propagate through the entire system stack. The architecture determines whether the model fits in mobile device memory or requires a data center, whether training completes in days or months, whether inference meets millisecond latency targets, and whether deployment is economically viable at scale. More critically, the choice is irreversible in practice: data pipelines are built around the architecture’s input format, training infrastructure is provisioned for its compute profile, serving systems are optimized for its inference pattern, and monitoring dashboards are calibrated to its failure modes. Changing the architecture means rebuilding all of this, which is why architecture decisions made early in a project persist long after better alternatives emerge. The architecture is not what the model does but what the hardware must do, and every downstream engineering decision inherits the physical contract it imposes.

Learning Objectives

- Distinguish the computational characteristics of major neural network architectures (MLPs, CNNs, RNNs, Transformers, and Deep Learning Recommendation Model (DLRM))

- Explain how inductive biases enable architectures to exploit structure in different data types

- Analyze computational complexity and memory scaling behaviors across architectural families

- Identify the architectural building blocks (skip connections, normalization, gating) that enable training deep networks and transfer across architectural families

- Apply the architecture selection framework to match data characteristics with appropriate designs

- Evaluate how computational, memory access, and data movement primitives determine hardware mapping efficiency across architectures

- Assess system-level deployment constraints including latency, bandwidth, and parallelization requirements

- Critique common architectural selection fallacies using systems engineering principles

Architectural Principles

The mathematical operators established in Neural Computation (matrix multiplication, activation functions, and gradient computation) form the “verbs” of neural networks. Those operators are the atoms; this chapter examines how they assemble into architectures: specialized structures optimized for specific data types and computational constraints. As defined in the silicon contract (Principle 4) (Iron Law of ML Systems), every architecture makes an implicit agreement with hardware, trading computational patterns for efficiency on particular problem classes.

Every neural network architecture answers one central question: how should we structure computation to match the structure in our data? Images have spatial locality, language has sequential dependencies, and tabular records have no inherent structure at all. The architecture encodes assumptions about these patterns directly into the computational graph, and those assumptions determine everything from parameter count to hardware utilization to deployment feasibility. Architecture selection is therefore a systems engineering problem that directly determines the iron law terms: the number of operations \(O\) and the volume of data movement \(D_{\text{vol}}\).

The structural assumptions that each architecture encodes are known as inductive biases1, and they serve as the unifying concept for this entire chapter.

1 Inductive Bias: From Latin inducere, “to lead into,” encoding a structural assumption “leads” the model toward a smaller solution space, which is why this concept unifies the entire chapter: every architecture discussed here—multilayer perceptron (MLP), convolutional neural network (CNN), recurrent neural network (RNN), and transformer—is defined by its choice of bias. A CNN’s locality bias cuts parameters by orders of magnitude vs. an equivalent MLP, directly shrinking the iron law’s \(O\) and \(D_{\text{vol}}\) terms, while a transformer’s lack of spatial bias demands quadratic memory in exchange for flexible long-range connectivity.

Definition 1.1: Inductive bias

Inductive Bias is a structural constraint built into a model architecture that restricts the hypothesis space, enabling generalization from finite data by encoding domain-specific assumptions (such as spatial locality or sequential ordering) directly into the computational graph.

- Significance (quantitative): Inductive bias directly reduces the data volume \((D_{\text{vol}})\) required for generalization. A CNN’s spatial locality bias reduces the hypothesis space from \(O(P^2)\) (fully connected) to \(O(P \cdot K^2)\) (local filters), where \(K \ll P\): for a \(224{\times}224\) image, a \(3{\times}3\) CNN kernel needs roughly 5,575\(\times\) fewer parameters than an equivalent dense input connection, cutting both the memory footprint and the data required to avoid overfitting by the same factor.

- Distinction (durable): Unlike Regularization (which penalizes hypothesis complexity at training time via L1/L2 terms), Inductive Bias eliminates entire hypothesis classes at architecture design time: a CNN cannot represent arbitrary nonlocal functions regardless of training data, while regularization merely discourages them.

- Common pitfall: A frequent misconception is that stronger inductive bias is always better. A strong locality bias (CNN) excels on spatial data but fails to represent long-range dependencies in language, where a transformer’s lack of spatial bias—at the cost of \(O(N^2)\) memory scaling—is necessary to achieve state-of-the-art performance.

A convolutional neural network (CNN) encodes an inductive bias of spatial locality: nearby pixels matter more than distant ones. A transformer’s inductive bias is that any element may attend to any other, enabling flexible long-range relationships at the cost of quadratic memory scaling. These biases are not incidental design choices; they are the mechanism through which architectures achieve efficiency by restricting the space of functions they can represent. Without these biases, the hypothesis space is so large that learning even simple tasks would require effectively infinite data and compute. We formalize how inductive biases unify all architectural families in Section 1.10.4, after examining how each architecture’s bias manifests in practice.

Machine learning systems face a core engineering trade-off: representational power vs. computational efficiency. Under the iron law of ML systems (Principle 3) (Iron Law of ML Systems), architectural choice is the primary determinant of the Ops term. A transformer’s attention mechanism enables global relationships but scales as \(O(N^2)\) operations with sequence length \(N\); a CNN exploits spatial locality to reduce operations to linear scaling in the number of spatial positions. Matching the right inductive biases to a workload’s data while setting a manageable Ops budget defines the practice of neural architecture selection.

Five architectural families define modern neural computation, each optimized for different data characteristics. Table 1 maps each family to its data domain, core innovation, and dominant system bottleneck:

| Architecture | Data Type | Core Innovation | System Bottleneck |

|---|---|---|---|

| MLPs | Tabular/Unstructured | Dense connectivity | Memory bandwidth |

| CNNs | Spatial (images) | Local filters + weight sharing | Compute throughput |

| RNNs | Sequential (time series) | Recurrent state | Sequential dependencies |

| Transformers | Relational (language) | Dynamic attention | Memory capacity \((N^2)\) |

| DLRM | Categorical (recommendations) | Embedding tables | Memory capacity (TB+) |

Each architectural choice creates distinct computational signatures that propagate through every level of the implementation stack.

Throughout this book, we use five specific model architectures as recurring Lighthouse Models. These serve as consistent reference points to ground abstract concepts in concrete systems reality. Their system-level characteristics appear here, covering both qualitative roles and quantitative profiles, before each architecture receives detailed examination in its respective section. These examples are concrete implementations of the Workload Archetypes (Compute Beast, Bandwidth Hog, etc.) introduced in Workload archetypes.

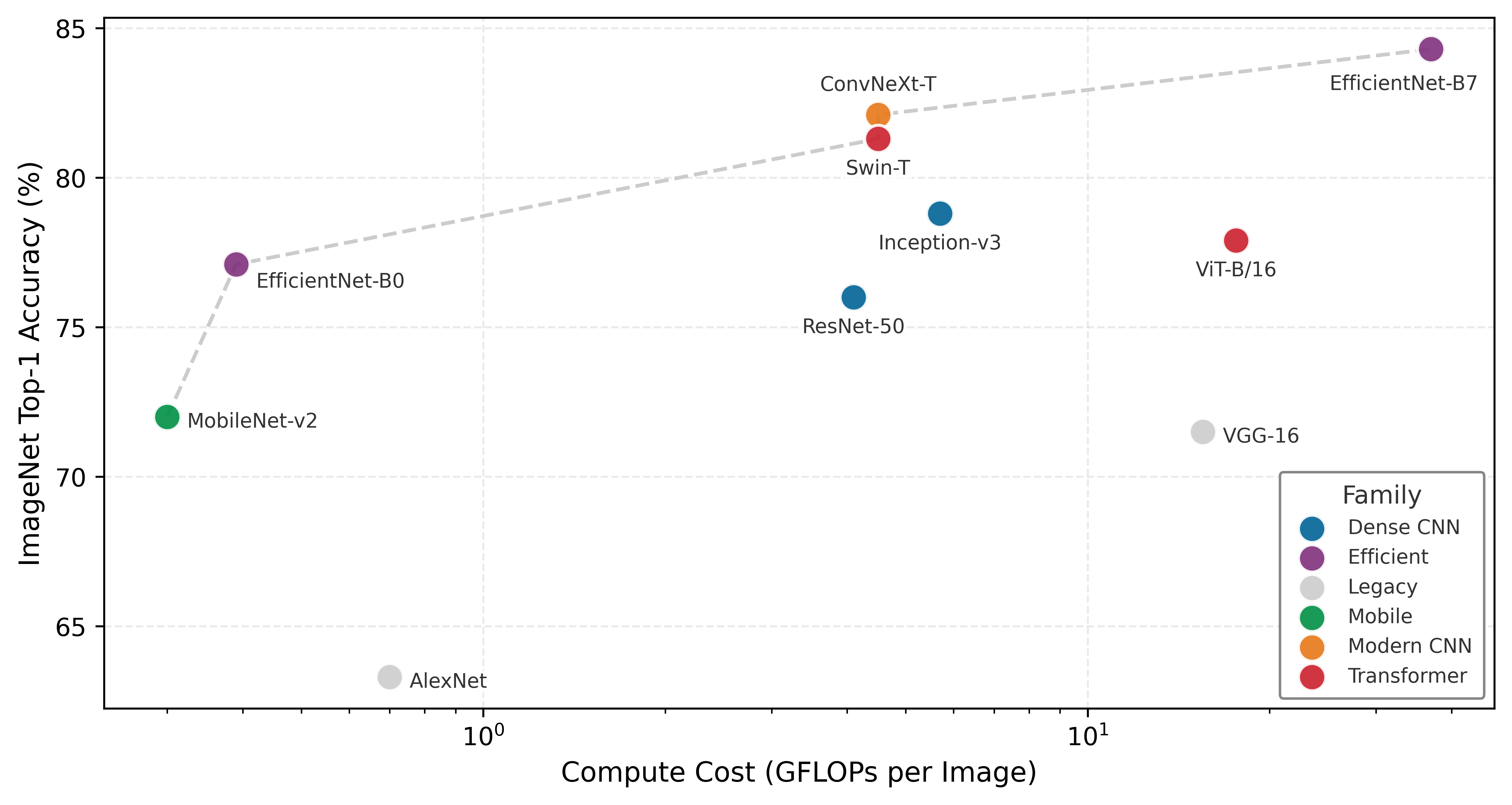

To understand why these specific models were chosen, consider the history of model evolution through the lens of the efficiency frontier (Figure 1).

These models serve as more than convenient examples; they form a set of canonical workloads for understanding system constraints. Each occupies a distinct position on the trade-off between accuracy and computational cost, as mapped in Figure 1. The Pareto frontier reveals three distinct eras of architectural thinking: the original dense CNNs that pushed accuracy at any cost, the efficiency revolution of MobileNets that minimized compute per unit accuracy, and the transformer era that trades massive computational cost for unprecedented capability. The architectural choices made at design time determine where a system lands on this frontier.

Lighthouse 1.1: Canonical workloads

We choose these specific models because they isolate distinct system bottlenecks:

- ResNet-50 isolates Compute (Dense Matrix Math).

- GPT-2 isolates Memory Bandwidth (Data Movement).

- DLRM isolates memory capacity (Random Access).

- MobileNet isolates edge latency (Efficient Convolution).

- KWS isolates always-on power (Tiny Inference).

By studying these “Lighthouses,” we learn engineering principles (roofline analysis, arithmetic intensity, memory hierarchies) that remain valid even as the specific “State of the Art” model architectures evolve.

Lighthouse roster: Model biographies

Before using these models as engineering benchmarks, we review their historical context and why they became standards.

ResNet-50 (He et al. 2016) The Residual Network (ResNet) addressed the degradation problem in very deep plain networks: adding layers could increase training error despite sufficient capacity. By introducing “skip connections” that improve optimization and gradient flow, it enabled networks of 50, 100, or even 1000 layers. The ResNet architecture won the ImageNet 2015 competition (with very deep 152-layer models), and ResNet-50 later became the standard “backbone” for computer vision. From a systems perspective, it is a highly regular, compute-intensive workload composed almost entirely of dense convolutions, making it the ideal test for GPU floating-point throughput.

2 Autoregressive Generation: A decoding strategy where each output token is conditioned on all previously generated tokens, requiring a full model forward pass per token. For a 1.5B-parameter model in FP16, generating one token loads about 3.0 GB of weights from HBM yet performs only a matrix-vector multiply, yielding an arithmetic intensity of about 1.0 FLOP/byte. This token-by-token serial dependency is what makes LLM inference fundamentally bandwidth-bound rather than compute bound, and why the KV cache, which stores prior key-value vectors to avoid recomputation, becomes the dominant memory consumer during generation.

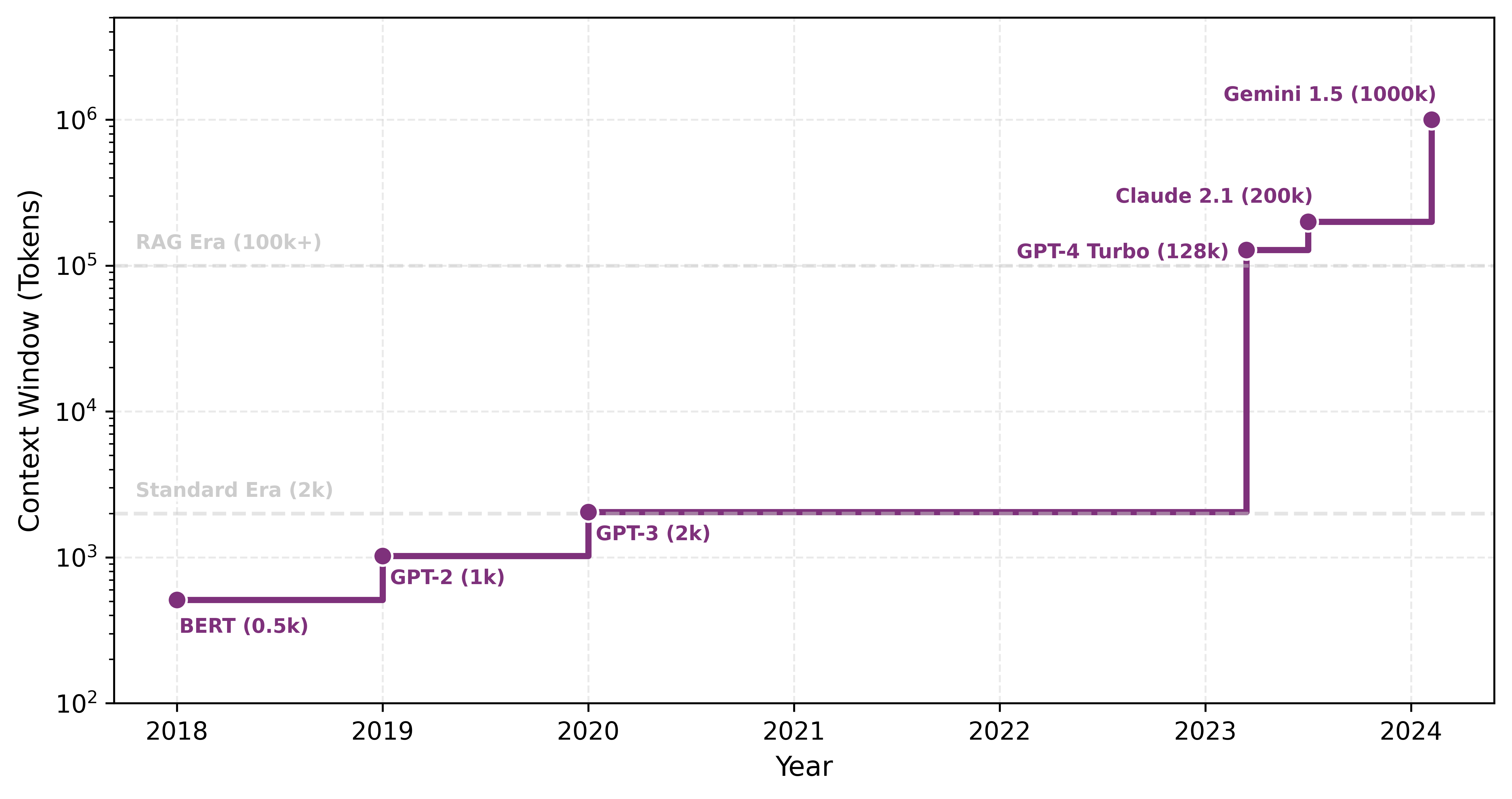

GPT-2 (Radford et al. 2019) Generative Pre-trained Transformer 2 (GPT-2) demonstrated that scaling up a simple architecture (the Transformer Decoder) on massive datasets could produce coherent text generation. Unlike BERT (which processes text bidirectionally), GPT-2 generates text sequentially (autoregressively2), creating a unique memory bandwidth bottleneck where the entire model must be loaded to generate just one token. It serves as our archetype for modern Large Language Models (LLMs) like Llama and ChatGPT.

DLRM (Naumov et al. 2019) The Deep Learning Recommendation Model (DLRM) was open-sourced by Meta to expose a workload that differs from CNNs and transformers in a critical way. While vision and language models are compute-heavy, recommendation systems are memory-heavy. They must look up user and item preferences in massive embedding tables that can reach terabytes in size, creating unique challenges for latency-critical serving (Model Serving). DLRM is the standard benchmark for memory capacity and sparse memory access patterns in the data center.

MobileNet (Howard et al. 2017) MobileNet challenged the trend of ever-larger models by prioritizing efficiency. It popularized depthwise separable convolutions for efficient vision models, an architectural innovation that reduced computational cost (FLOPs) by 8–9\(\times\) for \(3{\times}3\) kernels with minimal accuracy loss, making it a prime candidate for quantization techniques covered in Model Compression. It proved that model architecture could be co-designed with hardware constraints, becoming the standard for running vision models on smartphones and embedded devices where battery life and latency are critical.

Keyword Spotting (KWS) (Warden 2018) Keyword Spotting models (like those detecting “Hey Siri” or “Ok Google”) represent the extreme end of efficiency. Designed to run on “always-on” microcontrollers with kilobyte-scale memory and milliwatt power budgets, these models (often Depthwise Separable CNNs) exemplify the constraints of TinyML. They force engineers to count every byte and cycle, driving innovations in extreme quantization (int8/int4) and specialized hardware.

Arithmetic intensity spectrum

The quantitative characteristics of these Lighthouse models expose a critical engineering constraint established in Neural Computation: arithmetic intensity. As we saw, this ratio of operations performed per byte of data moved determines whether a workload is compute bound or memory bound.

Workload signatures: The arithmetic intensity table

These bottlenecks are not accidental; they are the “signatures” of the underlying math. We quantify these signatures using arithmetic intensity \((I)\), defined as the ratio of floating-point operations performed per byte of data moved from main memory.

Table 2 compares the signatures of our three primary Lighthouses, exposing the roughly 80\(\times\) gap between ResNet and GPT-2:

| Model Family | Lighthouse | Intensity \((I)\) | Hardware Affinity |

|---|---|---|---|

| Dense CNN | ResNet-50 | ~40.0 | Compute-Rich (GPUs/TPUs) |

| Efficient Vision | MobileNetV2 | ~21.4 | Balanced (Mobile NPUs) |

| Transformer | GPT-2 (Inf) | ~0.50 | Bandwidth-Rich (HBM3/H100) |

This table provides the quantitative justification for architecture selection: one chooses a transformer not because it is “better” in the abstract, but because the project can afford the bandwidth pressure diagnosed by the arithmetic intensity law (Principle 6) in exchange for its relational flexibility. Conversely, MobileNet is the right choice when the “Machine” axis lacks the bandwidth to sustain a denser signature.

The “Bottleneck” column in Table 3 deserves particular attention: it identifies which system resource (compute throughput, memory bandwidth, memory capacity, latency, or power) limits performance for each workload class. In iron law terms (Iron Law of ML Systems), the bottleneck identifies whether \(O\) (operations) or \(D_{\text{vol}}\) (data movement) dominates the runtime. These distinctions determine which optimization strategies prove effective, a theme we return to throughout subsequent chapters.

| Model | Domain | Params | FLOPs/Inf | Memory | Bottleneck | Role in Textbook |

|---|---|---|---|---|---|---|

| ResNet-50 | Vision | 25.6 M | 4.1 GFLOPs | 102 MB | Compute | Parallelism, quantization, and batching |

| GPT-2 XL | Language | 1.5 B | 3.0 GFLOPs/token | 6.0 GB | Mem. Bandwidth | Autoregressive generation and KV caching |

| DLRM | Recommender | 25 B | Low | 100 GB | Mem. Capacity | Embedding tables and scale-out systems |

| MobileNetV2 | Edge Vision | 3.5 M | 300 MFLOPs | 14 MB | Latency | Depthwise convolutions and efficiency |

| KWS (DS-CNN) | Audio | 200 K | 20 MFLOPs | 800 KB | Power | Extreme quantization and always-on ops |

Architecture selection is ultimately an engineering trade-off between math \((O)\) and memory movement \((D_{\text{vol}})\). By comparing our Lighthouses, we can see how architectural choices shift a model’s position on the intensity spectrum:

- ResNet-50 (Compute Bound): High intensity (roughly 40 FLOPs/byte at the whole-model level in Table 2, with deeper bottleneck layers reaching 100–200+ FLOPs/byte). Convolutional layers reuse each weight many times across the spatial dimensions of an image. Its performance is limited by how fast the hardware can do math.

- GPT-2 (Bandwidth Bound): Low intensity (\(\approx 0.5\) FLOPs/byte). Each token produces only a matrix-vector multiplication rather than the matrix-matrix operations of batch processing, so the system must load massive weights from memory for a single token’s math. Its performance is limited by how fast memory can move bits.

- MobileNet (Memory Bound on GPUs): Moderate whole-model intensity (~21.4 FLOPs/byte in Table 2), with individual depthwise layers falling lower once activation traffic is included. MobileNet reduces total \(O\) through depthwise separable convolutions, but it moves more data relative to that work. It fits mobile hardware perfectly but often “starves” high-end GPUs optimized for dense math.

This spectrum determines whether the system needs a faster processor or faster memory to improve performance. The Roofline Model (The roofline model) provides the analytical framework for quantifying these limits on specific hardware, with applied examples in Hardware Acceleration.

Checkpoint 1.1: Arithmetic intensity and architecture

Match the architectural choice to its systems implication:

The preceding quantitative reference points set the stage for a detailed examination of each architectural family, starting with the foundational multilayer perceptron, the architecture that established the computational patterns underlying all modern neural networks. From there, we progress through increasingly specialized designs: CNNs that exploit spatial structure, recurrent neural networks (RNNs) that capture temporal dependencies, attention mechanisms that enable dynamic relevance weighting, transformers that build entire architectures from attention, and finally DLRM that handles massive categorical features. Each architecture represents a different answer to the same fundamental question: how should we structure computation to match the patterns in our data?

For each family, we follow a consistent analysis: what data patterns the architecture targets (pattern processing needs), how it computes (algorithmic structure), how those computations map to hardware (computational mapping), and what system bottlenecks emerge (system implications). This four-part lens ensures that every architecture is evaluated for what it costs to run, not only for what it learns.

Self-Check: Question

A team must choose between an MLP and a CNN for classifying 224-by-224 pixel medical images. A dense first layer would need 150,528 input weights per output unit, so a 1,000-unit layer would already carry roughly 150 million weights; the CNN uses filters with fewer than 10,000 weights shared across positions. Using the chapter’s framing of inductive bias, which statement best explains why the CNN is the better starting point?

- The CNN’s locality-and-weight-sharing assumption matches the spatial structure of images, which simultaneously reduces sample complexity and cuts per-layer memory traffic by orders of magnitude.

- The CNN is more expressive than the MLP, so it can fit any function the MLP can fit with fewer parameters.

- The MLP cannot represent image-classification functions at all, so the CNN is the only viable choice.

- The CNN eliminates the need for training entirely by using handcrafted filters, which avoids the gradient-descent cost of the MLP.

A dense MLP layer on a single-sample forward pass reports roughly 0.5 FLOPs per byte, while a 3-by-3 convolution in ResNet-50 reuses each filter weight across more than 50,000 spatial positions. Using arithmetic intensity, explain why these two architectures sit in opposite regimes on the roofline and what that implies for which hardware upgrade helps each.

A team profiles a production workload and finds that a single model’s embedding tables occupy roughly 1 TB of DRAM, that each request performs a handful of random row lookups, and that matrix-multiply kernels use less than 5 percent of accelerator time. Which lighthouse model best represents this workload’s dominant bottleneck?

- ResNet-50, because the workload spends most of its time in convolution kernels that benefit from dense matrix hardware.

- GPT-2 XL, because autoregressive generation is the canonical example of a bandwidth-limited serving workload.

- DLRM, because the binding constraint is memory capacity for terabyte-scale embedding tables accessed via irregular sparse gathers.

- MobileNetV2, because the low compute utilization signature is diagnostic of depthwise-separable convolutions.

A 3-by-3 convolution filter in a ResNet layer is applied at more than 50,000 spatial positions in a single forward pass, while a dense matrix-vector multiply uses each weight exactly once per sample. The ratio of math done to bytes moved — the ____ — is what places these two workloads on opposite sides of the roofline and dictates whether faster HBM or more TFLOPS is the correct hardware response.

Why does the chapter frame architecture selection as ‘signing a contract with physics’ rather than as a modeling preference?

- Because the chosen architecture fixes compute patterns (locality, quadratic attention, sparse lookups) that propagate into training-cluster provisioning, serving memory, and deployment feasibility — commitments that cannot be undone by clever optimization.

- Because the Python framework a team uses (PyTorch, TensorFlow, JAX) permanently binds a model to one vendor’s hardware.

- Because an architecture’s optimizer cannot be changed after the first training step without restarting training from scratch.

- Because the chapter’s theoretical analysis deliberately ignores real engineering constraints in favor of abstract mathematical results.

True or False: A stronger inductive bias is always preferable to a weaker one because it reduces the parameter count and the amount of data the model needs to learn from.

MLPs: Dense Pattern Processing

Consider a smartphone’s spam filter: given a set of features extracted from an email (sender reputation score, number of links, presence of certain keywords), the model must output a single probability: spam or not. This classification task, where every input feature connects to every output, is the domain of fully connected networks.

We begin with the simplest architecture in our spectrum. Multilayer perceptrons3 (MLPs) represent the fully-connected architectures introduced in Neural Computation, now examined through the four-part systems lens established earlier.

3 Perceptron: A portmanteau of “perception” and “electron,” coined by Rosenblatt (1957) for the atomic unit of neural computation: a weighted sum followed by a nonlinear activation. MLPs are composed entirely of these units arranged in fully-connected layers, so the efficiency of this single operation, a multiply-accumulate, determines system throughput. Modern accelerators execute over \(10^{14}\) of these operations per second, making the perceptron the computational primitive that the entire ML hardware ecosystem is optimized around.

MLPs embody an inductive bias: they assume no prior structure in the data, allowing any input to relate to any output. This architectural choice enables maximum flexibility by treating all input relationships as equally plausible, making MLPs versatile but computationally intensive compared to specialized alternatives. Their computational power was established theoretically by the Universal Approximation Theorem (UAT)4 (Cybenko 1989; Hornik et al. 1989), which we encountered as a footnote in Neural Computation. This theorem states that a sufficiently large MLP with nonlinear activation functions can approximate any continuous function on a compact domain, given suitable weights and biases. That combination of theoretical universality and dense connectivity is the architectural concept captured by the multilayer perceptron.

4 Universal Approximation Theorem (UAT): This theorem provides the mathematical guarantee for the MLP’s “no prior structure” inductive bias by proving a sufficiently wide network can approximate any continuous function. The systems-level catch is that “sufficiently wide” can require a number of neurons that grows exponentially with input dimensionality, rendering the theoretical guarantee practically unattainable for even moderately-sized inputs like a \(256{\times}256\) image.

Definition 1.2: Multilayer perceptrons

Multilayer Perceptrons are feed-forward neural network architectures that apply fully connected layers in sequence, where every neuron in one layer connects to every neuron in the next, encoding no structural assumption about the input domain.

- Significance (quantitative): The lack of structural prior incurs \(O(d^2)\) parameter scaling per layer (where \(d\) is layer width): a single layer mapping 1,024 inputs to 1,024 outputs requires 1,048,576 parameters and about 2 MB of weight memory in FP16. A \(3{\times}3\) convolution mapping 1,024 input channels to 1,024 output channels has about 9.4 million weights; convolution’s advantage for images comes from spatial weight sharing across positions, not from reducing the channel-mixing matrix itself. This makes MLPs inefficient for high-dimensional structured inputs like images.

- Distinction (durable): Unlike Convolutional Neural Networks, which exploit spatial locality to reduce parameter count, MLPs treat all input elements symmetrically, making them the architecture of choice for tabular data where no spatial or sequential structure is present.

- Common pitfall: A frequent misconception is that MLPs are too simple for modern tasks. Every other architecture (CNN, transformer) can be viewed as an MLP with additional structural constraints and weight sharing—the MLP is the universal baseline against which all inductive biases are measured.

In practice, the UAT explains why MLPs succeed across diverse tasks while revealing the gap between theoretical capability and practical implementation. The theorem guarantees that some MLP can approximate any function, yet provides no guidance on requisite network size or weight determination. While MLPs can theoretically solve any pattern recognition problem, doing so may demand impractically large networks or prohibitive computation. This theoretical power drives the selection of MLPs for tabular data, recommendation systems, and problems where input relationships are unknown. At the same time, these practical limitations motivated the development of specialized architectures that exploit data structure for computational efficiency, as the subsequent CNN, RNN, and transformer sections demonstrate.

Learnability gap

The UAT sounds definitive, yet a fundamental gap separates what MLPs can represent from what they can learn in practice. The answer lies in a critical distinction between what a network can represent and what it can learn.

Representation capacity refers to the functions an architecture can express given unlimited resources; the UAT established earlier guarantees MLPs have universal representation capacity. This capacity is particularly effective because of the manifold hypothesis5, which suggests that high-dimensional data actually occupies a much simpler structure. Learnability refers to whether gradient descent can find good weights given finite training samples and computational budgets. A function may be representable yet practically unlearnable.

5 Manifold Hypothesis: The assumption that high-dimensional data lies on a low-dimensional surface embedded within the full space. A \(256{\times}256\) image lives in a 65,536-dimensional space, but “valid cat images” occupy a tiny structured region. Deep networks progressively unfold this crumpled manifold into linearly separable representations. The systems consequence: if data truly occupied the full space, no architecture could learn from feasible dataset sizes; the manifold structure is what makes finite training budgets sufficient.

This distinction resolves what appears to be a paradox: if MLPs are universal approximators, why has architectural innovation (ResNets, transformers) driven deep learning progress? Specialized architectures improve learnability by embedding inductive biases that match data structure, even when doing so restricts representational capacity.

Three factors create the learnability gap:

Sample complexity: The UAT provides no bounds on training examples needed. For \(28{\times}28\) images, an MLP treats 784 pixels independently, requiring exponentially many samples to learn spatial correlations. A CNN embeds locality bias, drastically reducing sample requirements. Mathematically, sample complexity can scale as \(O(\exp(d))\) for MLPs but \(O(\text{poly}(d))\) for architectures matching data structure.

Parameter efficiency: The UAT guarantees some width suffices, but provides no constructive bounds. Required width can be exponential in input dimension: approximating \(\sin(x_1) + \cdots + \sin(x_d)\) may require \(O(\exp(d))\) MLP neurons vs. \(O(d)\) for architectures processing dimensions independently.

Optimization difficulty: Even when optimal weights exist, gradient descent may not find them. MLP loss surfaces exhibit complex topology without the regularizing effect of architectural constraints. Specialized architectures reduce the search space, introducing symmetries that gradient descent exploits.

The classic MNIST handwritten digit benchmark illustrates this gap between representation and learnability concretely.

Example 1.1: MNIST: Representation vs. learnability

MLP Approach:

- Architecture: 784 → 4096 → 4096 → 10

- Parameters: \((784{\times}4096) + (4096{\times}4096) + (4096{\times}10)\) ≈ 20M parameters

- Training: 60,000 examples (standard MNIST training set)

- Test Accuracy: ~97–98 percent

- Rationale: Treats every pixel independently. Must learn all spatial correlations from data alone. No prior knowledge about spatial structure.

CNN Approach:

- Architecture: Conv(32, \(3{\times}3\)) → Pool → Conv(64, \(3{\times}3\)) → Pool → FC(128) → 10

- Parameters: (\(3{\times}3{\times}1{\times}32\)) + (\(3{\times}3{\times}32{\times}64\)) + (\(64{\times}7{\times}7{\times}128\)) + (\(128{\times}10\)) ≈ 421K parameters

- Training: 60,000 examples (same data)

- Test Accuracy: ~99 percent+

- Rationale: Embeds locality bias (nearby pixels are related) and translation invariance (digit patterns are meaningful regardless of position). These structural assumptions reduce parameter count and improve generalization.

Comparison:

- Parameter Efficiency: CNN uses 47\(\times\) fewer parameters

- Sample Efficiency: CNN achieves better accuracy with the same training data

- Systems Implications: CNN requires 47\(\times\) less memory, trains faster, and runs faster at inference

For this task, both architectures can represent an effective digit classifier. The difference is learnability: the CNN’s inductive bias matches the spatial structure of images, enabling efficient learning with limited data and compute while using a more constrained hypothesis space than an unconstrained MLP.

The learnability gap motivates the core design principle of this chapter: embed inductive biases that match data structure. Each architecture sacrifices theoretical generality for practical learnability. The No Free Lunch theorem6 (Wolpert 1996) formalizes this trade-off: the bias that helps one task may hurt another. CNN’s translation invariance aids image classification but hurts tasks where absolute position matters. Architecture selection is fundamentally the act of matching inductive bias to data structure.

6 No Free Lunch Theorem: Wolpert and Macready’s 1997 result proved that no optimization algorithm outperforms random search across all possible problems: averaged over every conceivable function, all algorithms are equivalent. The ML systems consequence: every inductive bias (locality, equivariance, attention) improves performance on problems matching that bias while necessarily degrading performance on problems that violate it, making architecture selection an irreversible engineering commitment to a problem class.

These theoretical insights translate directly into engineering decisions. Appropriate inductive biases reduce parameter counts (enabling edge deployment), accelerate convergence (reducing training costs), and produce structured computation patterns that map efficiently to specialized hardware (Hardware Acceleration). A 20M-parameter MLP infeasible for edge deployment becomes a 421K-parameter CNN that fits comfortably, a 47\(\times\) reduction achieved by matching architecture to data structure. The next question is what specific pattern processing requirements dense architectures address.

Pattern processing needs

Deep learning models frequently encounter problems where any input feature may influence any output without inherent constraints. In financial market analysis, any economic indicator may affect any market outcome. In natural language processing, word meaning may depend on any other word in the sentence. These scenarios demand an architectural pattern capable of learning arbitrary relationships across all input features . The architecture must provide unrestricted feature interactions where each output can depend on any combination of inputs, learned feature importance where the system determines which connections matter rather than relying on prescribed relationships, and adaptive representation where the network reshapes internal representations based on the data itself.

The MNIST digit recognition task illustrates this uncertainty concretely. While humans might focus on specific parts of digits (loops in ‘six’ or crossings in ‘eight’), the pixel combinations critical for classification remain indeterminate. A ‘seven’ written with a serif may share pixel patterns with a ‘two’, and variations in handwriting mean discriminative features may appear anywhere in the image. This uncertainty about feature relationships requires a dense processing approach where every pixel can potentially influence the classification decision—an architectural commitment that leads directly to the mathematical foundation of MLPs.

Algorithmic structure

These pattern processing needs demand an architecture capable of relating any input to any output. MLPs solve this with complete connectivity between all nodes. This connectivity requirement manifests through a series of fully-connected layers, where each neuron connects to every neuron in adjacent layers, the “dense” connectivity pattern introduced in Neural Computation.

Dense connectivity translates directly into matrix multiplication operations, the mathematical basis introduced in Matrix multiplication formulation that makes MLPs computationally tractable. Trace through Figure 2 to see how each layer transforms its input through the core operation:

The dense layer computation follows Equation 1: \[ \mathbf{h}^{(l)} = f\big(\mathbf{h}^{(l-1)}\mathbf{W}^{(l)} + \mathbf{b}^{(l)}\big) \tag{1}\]

Recall that \(\mathbf{h}^{(l)}\) represents the layer \(l\) output (activation vector), \(\mathbf{h}^{(l-1)}\) represents the input from the previous layer, \(\mathbf{W}^{(l)}\) denotes the weight matrix for layer \(l\), \(\mathbf{b}^{(l)}\) denotes the bias vector, and \(f(\cdot)\) denotes the activation function; Nonlinear activation functions develops ReLU and related nonlinearities in detail. This layer-wise transformation, while conceptually simple, creates computational patterns whose efficiency depends critically on how we organize these operations for different problem structures.

The dimensions of these operations reveal the computational scale of dense pattern processing. The input vector \(\mathbf{h}^{(0)} \in \mathbb{R}^{d_{\text{in}}}\) (treated as a row vector in this formulation) represents all potential input features. Weight matrices \(\mathbf{W}^{(l)} \in \mathbb{R}^{d_{\text{in}} \times d_{\text{out}}}\) capture all possible input-output relationships. The output vector \(\mathbf{h}^{(l)} \in \mathbb{R}^{d_{\text{out}}}\) produces transformed representations. A four-pixel example turns this bookkeeping into arithmetic.

Example 1.2: Concrete computation example

Input: \(\mathbf{h}^{(0)} = [0.8, 0.2, 0.9, 0.1]\) (4 pixel intensities)

Weight matrix: \(\mathbf{W}^{(1)} = \begin{bmatrix} 0.5 & 0.1 & -0.2 \\ -0.3 & 0.8 & 0.4 \\ 0.2 & -0.4 & 0.6 \\ 0.7 & 0.3 & -0.1 \end{bmatrix}\) (\(4{\times}3\) matrix)

Computation: \[\begin{gather*} \mathbf{z}^{(1)} = \mathbf{h}^{(0)}\mathbf{W}^{(1)} = \begin{bmatrix} 0.5{\times}0.8 + (-0.3)\times 0.2 + 0.2{\times}0.9 + 0.7{\times}0.1 \\ 0.1{\times}0.8 + 0.8{\times}0.2 + (-0.4)\times 0.9 + 0.3{\times}0.1 \\ (-0.2)\times 0.8 + 0.4{\times}0.2 + 0.6{\times}0.9 + (-0.1)\times 0.1 \end{bmatrix} = \begin{bmatrix} 0.59 \\ -0.09 \\ 0.45 \end{bmatrix} \end{gather*}\] After ReLU: \(\mathbf{h}^{(1)} = [0.59, 0, 0.45]\) (negative values zeroed)

Each hidden neuron combines ALL input pixels with different weights, demonstrating unrestricted feature interaction.

The MNIST example makes this scale concrete. The 784-dimensional input connects to every neuron in the first hidden layer. A hidden layer with 100 neurons requires a \(784{\times}100\) weight matrix (78,400 parameters), where each weight represents a learnable relationship between an input pixel and a hidden feature. This single layer anchors the computational analysis throughout this chapter.

This algorithmic structure enables arbitrary feature relationships while creating specific computational patterns that computer systems must accommodate. Dense connectivity provides the universal approximation capability established earlier but introduces computational redundancy: while the theoretical power of MLPs enables modeling of any continuous function given sufficient width, this flexibility requires numerous parameters to learn relatively simple patterns. Every input feature influences every output, yielding maximum expressiveness at the cost of maximum computational expense. These trade-offs motivate optimization techniques that reduce computational demands while preserving model capability. Strategies including pruning and quantization are examined in Model Compression, with Hardware Acceleration exploring hardware-specific implementations that exploit regular matrix operation structure.

Computational mapping

The preceding algorithmic structure defines what an MLP computes; computational mapping reveals how that computation translates to hardware operations. Listing 1 demonstrates how this mapping progresses from mathematical abstraction to computational reality.

def mlp_layer_matrix(X, W, b):

"""MLP forward pass using framework-level matrix operations."""

# X: input matrix (batch_size x num_inputs)

# W: weight matrix (num_inputs x num_outputs)

# b: bias vector (num_outputs)

# Single GEMM call: frameworks dispatch to optimized BLAS/cuBLAS

# For MNIST: 784 x 100 = 78,400 MACs per sample

H = activation(matmul(X, W) + b)

return HThe function mlp_layer_matrix directly mirrors the mathematical equation, employing high-level matrix operations (matmul) to express the computation in a single line while abstracting the underlying complexity. This implementation style characterizes deep learning frameworks, where optimized libraries manage the actual computation.

To understand the system implications of this architecture, we must look “under the hood” of the high-level framework call. The elegant one-line matrix multiplication output = matmul(X, W) is, from the hardware’s perspective, a series of nested loops that expose the true computational demands on the system. This translation from logical model to physical execution reveals critical patterns that determine memory access, parallelization strategies, and hardware utilization.

The second implementation in Listing 2 exposes the actual computational pattern through nested loops, revealing what really happens when we compute a layer’s output: we process each sample in the batch, computing each output neuron by accumulating weighted contributions from all inputs.

This translation from mathematical abstraction to concrete computation exposes how dense matrix multiplication decomposes into nested loops of simpler operations. The outer loop processes each sample in the batch, while the middle loop computes values for each output neuron. Within the innermost loop, the system performs repeated multiply-accumulate operations7, combining each input with its corresponding weight.

7 Multiply-Accumulate (MAC): The atomic operation of neural networks: multiply two values and add to a running sum. Data center accelerators sustain \(10^{14}\)–\(10^{15}\) MAC/s on dense kernels, while mobile chips reach \(10^{12}\)–\(10^{13}\) MAC/s. The critical systems insight: a MAC itself costs ~1 pJ, but fetching its operands from off-chip DRAM costs ~200 pJ, a 200\(\times\) energy gap that makes data movement, not arithmetic, the dominant constraint in ML system design.

8 BLAS (Basic Linear Algebra Subprograms): This standard API for matrix operations enables the use of highly optimized libraries (for example, cuBLAS) to accelerate the 784 multiply-accumulates per neuron. These libraries are tuned for large, square matrices and hit an “efficiency cliff” with the \(784{\times}100\) matrix of the MNIST example. This nonstandard shape fails to saturate the hardware’s parallel compute units, yielding utilization far below the 80–95 percent of peak throughput achieved in larger transformer layers.

9 Tensor Cores: Specialized units in NVIDIA GPUs that accelerate the thousands of multiply-accumulate operations described by fusing them into single, highly parallelized matrix instructions. Tensor Cores are most efficient when matrix dimensions meet datatype- and architecture-specific alignment multiples; modern cuBLAS/cuDNN can still use Tensor Cores for many nonaligned cases, often with lower efficiency or internal padding. On an A100 GPU, this creates roughly a 16\(\times\) performance gap between the 312 TFLOPS from Tensor Cores and the 19.5 from standard CUDA cores.

In our reference MNIST layer, each output neuron requires 78,400 divided by 100, or 784, multiply-accumulate operations and at least 1,568 memory accesses (784 for inputs, 784 for weights). While actual implementations use optimizations through libraries like Basic Linear Algebra Subprograms (BLAS)8 or cuBLAS, these patterns drive key system design decisions. The hardware architectures that accelerate these matrix operations, including GPU Tensor Cores9 and specialized AI accelerators, are covered in Hardware Acceleration.

def mlp_layer_compute(X, W, b):

"""Explicit loop structure exposing MLP computational patterns."""

# Loop 1: Process each sample independently (parallelizable)

for batch in range(batch_size):

# Loop 2: Compute each output neuron

for out in range(num_outputs):

Z[batch, out] = b[out] # Initialize with bias

# Loop 3: Accumulate weighted inputs (innermost loop)

# This is the MAC operation: result += input * weight

for in_ in range(num_inputs):

Z[batch, out] += X[batch, in_] * W[in_, out]

# Total per output: num_inputs MACs +

# num_inputs memory reads

H = activation(Z) # Element-wise nonlinearity

return HSystem implications

The preceding computational mapping showed how MLP operations decompose into nested loops of multiply-accumulate operations. The system-level constraints that emerge from these patterns span three dimensions: memory requirements, computation needs, and data movement.

Memory requirements

For dense pattern processing, memory usage is dominated by parameter storage. Our reference MNIST layer \((784{\times}100)\) requires only 78,400 parameters, but this \(O(M \times N)\) scaling becomes prohibitive for high-dimensional inputs. A typical 2048-unit layer connected to a 2048-unit layer requires 4194304 parameters (17 MB at FP32). Since every weight is used exactly once per input vector, there is no opportunity for weight reuse within a single sample processing, making the workload heavily dependent on memory capacity and bandwidth.

Computation needs

The core computation is dense matrix-vector multiplication (GEMV) or matrix-matrix multiplication (GEMM) when batched. While regular and parallelizable, the arithmetic intensity (FLOPs/byte) is low for small batch sizes (the batch size is the number of input samples processed together in one forward pass; larger batches amortize weight-loading cost over more computations). Modern processors optimize this via specialized SIMD (Single Instruction, Multiple Data) units (for example, AVX-512 on CPUs) or systolic arrays (on Tensor Processing Units (TPUs)/GPUs) that amortize control overhead over massive blocks of parallel arithmetic.

Data movement

The all-to-all connectivity pattern creates a fundamental data movement bottleneck. To compute 100 hidden values from 784 inputs, the system must move \(784{\times}100\) weights from memory to the compute units. Applying the arithmetic intensity framework from Section 1.1.2 to this layer yields roughly 0.5 FLOPs/byte (assuming FP32) if batch size is one, as shown in Equation 2: \[ \text{Intensity} \approx \frac{2 \cdot M \cdot N \text{ (Ops)}}{4 \cdot M \cdot N \text{ (Bytes)}} = 0.5 \text{ FLOPs/byte} \tag{2}\]

Since modern accelerators (like the A100) require intensities around 153 FLOPs/byte to saturate FP16 Tensor Cores, dense layers are almost always memory-bandwidth-bound unless batch sizes exceed several hundred. This explains why “fully connected” layers are often the performance bottleneck in inference workloads, despite performing fewer total FLOPs than convolutional layers.

Dense connectivity thus moves maximum data for minimum compute. For data with inherent structure, spatial locality in images or temporal order in sequences, specialized architectures can exploit that structure for both better accuracy and better efficiency. The most established such architecture is the convolutional neural network.

Self-Check: Question

A 2,048-unit dense layer connected to another 2,048-unit layer stores roughly 4.2 million weights, consuming about 16 MB in FP32 — and every weight is used exactly once per input sample. A team considering this layer as the front end of an image classifier asks why CNN-based classifiers typically use thousands of times fewer parameters for the same task. Which statement best captures the systems consequence of the MLP’s architectural assumption?

- The MLP treats every input feature as potentially relevant to every output feature, so it pays O(MN) memory and O(MN) bytes-moved per sample regardless of whether any spatial structure exists in the data.

- The MLP’s activation function is more expensive than a convolution, which is why its total memory footprint is higher.

- The MLP uses a fundamentally different optimizer that requires more state per parameter than a CNN’s optimizer.

- The MLP’s bias vector grows quadratically with input dimension, which dominates the parameter count.

A team cites the Universal Approximation Theorem to argue that a sufficiently wide MLP could solve any image classification task. They plan to train a 3-layer MLP on 224-by-224 ImageNet images. Explain why UAT does not justify this plan and what the practical learnability gap looks like in both statistical and systems terms.

A 2,048-to-2,048 dense layer processing a single FP32 input sample reports roughly 0.5 FLOPs per byte on an A100, and the kernel runs at 4 percent of the advertised Tensor Core peak. Which optimization path is most directly aligned with the section’s analysis of this regime?

- Increase the batch size so weights are reused across many samples, raising arithmetic intensity above the ridge point and letting the Tensor Cores stay fed.

- Upgrade to an accelerator with 2x the advertised TFLOPS while keeping batch size 1, because the workload is compute-bound.

- Replace the matrix multiply with an element-wise activation to reduce total FLOPs to near zero.

- Disable the BLAS library and route the computation through a scalar Python loop to improve cache locality.

Order the following steps in a dense layer’s forward pass for one output neuron: (1) apply the activation function to the accumulated pre-activation, (2) initialize the output neuron with its bias value, (3) accumulate input-times-weight products across all input features.

A team ports an MNIST-style 784-by-100 dense layer to an A100 and measures throughput far below the advertised FP16 Tensor Core peak. The layer is small and has awkward dimensions for Tensor Core tiling. Which explanation is most consistent with the section’s discussion of Tensor Core alignment?

- Tensor Core peak assumes hardware-friendly tile shapes and enough work to amortize overhead; awkward small matrices may need padding or less efficient kernels, so their realized throughput can be far below peak.

- Small dense layers are never executed on GPUs and are silently dispatched to the CPU by the runtime.

- The activation function on a 100-dimensional output vector is the dominant cost and hides the GEMM’s throughput.

- The 784-by-100 layer has excessive arithmetic intensity that saturates memory and leaves compute units idle.

True or False: Because MLPs are universal approximators, they are the most practical architecture for any high-dimensional structured input such as a 224-by-224 image.

CNNs: Spatial Pattern Processing

The MLP’s assumption that all input features interact equally with all outputs proves particularly costly for spatially structured data like images. As the earlier MNIST comparison demonstrated, a CNN achieves higher accuracy with 47\(\times\) fewer parameters by exploiting spatial locality rather than treating every pixel independently.

Convolutional Neural Networks10 emerged as the solution to this challenge (LeCun et al. 1998; Krizhevsky et al. 2012). Consider what happens when viewing a photograph: the visual system does not perceive every pixel simultaneously in relation to every other pixel. Instead, it detects local patterns (edges, textures, corners) and composes them into objects. CNNs encode this same insight architecturally.

10 Convolution: From Latin convolvere (“to roll together”), describing a filter that slides across an input, combining local elements at each position. This “rolling together” enforces a locality constraint that is the source of the operation’s efficiency: a single \(5{\times}5\) kernel reuses its 25 weights at every spatial position, reducing one feature detector for a 1-megapixel single-channel image from roughly 1,000,000 weights to 25, about 40,000\(\times\) fewer parameters than a fully connected detector.

Spatial locality produces two key innovations that enhance efficiency for spatially structured data. Parameter sharing allows the same feature detector to be applied across different spatial positions, reducing parameters from millions to thousands while improving generalization. Local connectivity restricts connections to spatially adjacent regions, reflecting the insight that spatial proximity correlates with feature relevance. Together, these innovations define convolutional neural networks as an architectural family.

Definition 1.3: Convolutional neural networks

Convolutional Neural Networks (CNNs) are architectures defined by Translation Equivariance and Spatial Locality.

- Significance (quantitative): They exploit weight sharing to decouple parameter count from input size, enabling \(O(1)\) scaling for high-dimensional grid data (for example, images) while maximizing Compute Density \((R_{\text{peak}})\).

- Distinction (durable): Unlike MLPs, which have Global Connectivity, CNNs restrict connections to spatially adjacent regions, reflecting the insight that proximity correlates with feature relevance.

- Common pitfall: A frequent misconception is that CNNs are “vision-only” models. In reality, they are a Symmetry-Aware Architecture: they can be applied to any data with a grid-like topology, including audio (spectrograms) and text (1D-convolutions).

The trade-off is explicit: CNNs sacrifice the theoretical generality of MLPs for practical efficiency gains when data exhibits known structure. Where MLPs treat each input element independently, CNNs exploit spatial relationships to achieve both computational savings and improved accuracy on vision tasks.

Pattern processing needs

Spatial pattern processing addresses scenarios where the relationship between data points depends on their relative positions or proximity. Consider processing a natural image: a pixel’s relationship with its neighbors is important for detecting edges, textures, and shapes. These local patterns then combine hierarchically to form more complex features: edges form shapes, shapes form objects, and objects form scenes.

This hierarchical processing appears across many domains: local pixel patterns forming edges that combine into objects (computer vision), nearby time-segment correlations identifying phonemes (speech), proximate sensor correlations (sensor networks), and tissue pattern recognition (medical imaging). The approach succeeds not because it mimics the brain, but because it mirrors the compositional structure of the data itself.

Focusing on image processing to illustrate these principles, if we want to detect a cat in an image, certain spatial patterns must be recognized: the triangular shape of ears, the round contours of the face, the texture of fur. These patterns maintain their meaning regardless of where they appear in the image. A cat is still a cat whether it appears in the top-left or bottom-right corner. This indicates two key requirements for spatial pattern processing: the ability to detect local patterns and the ability to recognize these patterns regardless of their position11. As Figure 3 illustrates, convolutional neural networks meet both requirements through hierarchical feature extraction, where simple patterns compose into increasingly complex representations at successive layers.

11 ImageNet: The dataset that validated these two spatial processing requirements at scale. AlexNet’s 2012 victory in the ImageNet Large Scale Visual Recognition Challenge (ILSVRC) reduced top-5 error from 26.2 percent to 15.3 percent on the 1000-class ImageNet challenge with roughly 1.2 million training images; the broader ImageNet database contained more than 14 million images across over 20,000 synsets. The enduring systems lesson: every subsequent accuracy gain (VGG, ResNet, vision transformer (ViT)) required proportionally larger datasets and compute budgets, establishing the scaling relationship between architectural inductive bias and infrastructure cost.

CNNs put these spatial processing principles into practice through parameter sharing, local connectivity, and translation equivariance12, the key innovations pioneered by Yann LeCun13 and LeCun et al. (1989).

12 Translation Equivariance: An inherent property of the convolution operation where shifting the input guarantees a corresponding spatial shift in the resulting feature map. This is distinct from true invariance, which is forced by a subsequent pooling layer that intentionally discards this precise positional data. The system design choice is stark: preserve equivariant data for segmentation or discard it via pooling, reducing downstream feature map size by 75 percent for classification.

13 Yann LeCun and LeNet: LeCun’s architecture directly addressed the intractable scaling of applying dense networks to images by enforcing the principles of local connectivity and parameter sharing. These constraints reduced the parameter count for an image-like input layer by over 95 percent, enabling LeNet-5 to achieve production-grade accuracy on commercial tasks like check reading with only ~60,000 total parameters.

Algorithmic structure

The core operation in a CNN can be expressed mathematically as Equation 3: \[ \mathbf{H}^{(l)}_{i,j,k} = f\left(\sum_{di}\sum_{dj}\sum_{c} \mathbf{W}^{(l)}_{di,dj,c,k}\mathbf{H}^{(l-1)}_{i+di,j+dj,c} + \mathbf{b}^{(l)}_k\right) \tag{3}\]

This equation describes how CNNs process spatial data. \(\mathbf{H}^{(l)}_{i,j,k}\) is the output at spatial position \((i,j)\) in channel \(k\) of layer \(l\). The triple sum iterates over the filter dimensions: \((di,dj)\) scans the spatial filter size, and \(c\) covers input channels. \(\mathbf{W}^{(l)}_{di,dj,c,k}\) represents the filter weights, capturing local spatial patterns. Unlike MLPs that connect all inputs to outputs, CNNs only connect local spatial neighborhoods.

Breaking down the notation further, \((i,j)\) corresponds to spatial positions, \(k\) indexes output channels, \(c\) indexes input channels, and \((di,dj)\) spans the local receptive field14. Unlike the dense matrix multiplication of MLPs, this operation:

14 Receptive Field: The input region influencing a particular output neuron. With \(3{\times}3\) filters, receptive fields grow by 2 pixels per layer, so a neuron at layer 3 “sees” a \(7{\times}7\) region. This growth rate constrains architecture depth: detecting objects spanning 100+ pixels in a \(224{\times}224\) image requires either deep stacks of small filters (more layers, more memory for activations) or larger kernels (more parameters per layer), a fundamental depth-vs.-width trade-off in CNN design.

Convolutional layers process local neighborhoods (typically \(3{\times}3\) or \(5{\times}5\)), reuse the same weights at each spatial position, and maintain spatial structure in the output.

To illustrate, consider applying a CNN to the same MNIST images used in our MLP analysis. Each convolutional layer applies a set of filters (for example, \(3{\times}3\)) that slide across the \(28{\times}28\) input, computing local weighted sums. With 32 filters and padding to preserve dimensions, the layer produces a \(28{\times}28{\times}32\) output, where each spatial position contains 32 different feature measurements of its local neighborhood. This contrasts sharply with the MLP approach, where the entire image is flattened into a single vector before processing.

This algorithmic structure directly implements the requirements for spatial pattern processing, creating distinct computational patterns that influence system design. Unlike MLPs, convolutional networks preserve spatial locality, using the hierarchical feature extraction principles established earlier. These properties drive architectural optimizations in AI accelerators, where operations such as data reuse, tiling, and parallel filter computation are important for performance.

The property of translation equivariance is central to understanding why CNNs work effectively for spatial data: shifting the input shifts the output feature map correspondingly. We examine this property in four stages: the equivariance-invariance distinction, the mathematical formulation, the group theory generalization, and the systems implications for deployment.

Equivariance and invariance are related but distinct concepts that determine how architectures handle transformations. Equivariance means that transforming the input produces the same transformation in the output, as defined in Equation 4: \[ f(T(\mathbf{x})) = T(f(\mathbf{x})) \tag{4}\]

For CNNs with translation \(T_v\) (shift by vector \(v\)), under stride-1 convolution away from boundary effects (and before any pooling or strided downsampling), if the input shifts by five pixels right, the feature maps also shift by five pixels right. Position information is preserved through the transformation. Invariance, by contrast, means transforming the input does not change the output, as defined in Equation 5: \[ f(T(\mathbf{x})) = f(\mathbf{x}) \tag{5}\]

Global average pooling over an entire feature map exhibits translation invariance: shifting the input does not change the averaged output. Position information is discarded.

Equivariance matters for learning because it preserves information needed for structured representations. Consider spatial relationships: a feature detector responding to an eye at position \((x, y)\) will respond to the same eye at position \((x+5, y)\), but the response moves to reflect the new position. The network can learn spatial relationships like “eye above nose” that matter for face detection. Full invariance would lose this relational information, leaving only “eye and nose both present somewhere,” which proves insufficient for many tasks.

Object detection illustrates why equivariance is essential for localization. Detection outputs bounding boxes like “car at \((100, 200)\) with size \(50{\times}80\)”, requiring equivariant layers to track position through the network while invariant final layers determine class. This architectural choice matches task structure: equivariance for localization, invariance for classification.

Equivariance also supports hierarchical composition. Early layers detect edges equivariantly at all positions, middle layers combine edges into shapes while maintaining equivariance, and final layers may use partial invariance through pooling for classification. This hierarchy works precisely because intermediate features maintain spatial structure for composition.

Systems Perspective 1.1: Equivariance formalism

Applying translation \(T_v\) (shift by \(v = (v_1, v_2)\)) to the input: \[ (T_v \mathbf{x})[i, j] = \mathbf{x}[i - v_1, j - v_2] \]

The convolution of the translated input becomes: \[\begin{gather*} (f * \mathbf{w})[T_v \mathbf{x}][i, j] = \sum_{m,n} \mathbf{w}[m, n] \cdot \mathbf{x}[(i - v_1) + m, (j - v_2) + n] \\ = \sum_{m,n} \mathbf{w}[m, n] \cdot \mathbf{x}[(i + m) - v_1, (j + n) - v_2] = (f * \mathbf{w})[\mathbf{x}][i - v_1, j - v_2] = T_v((f * \mathbf{w})[\mathbf{x}])[i, j] \end{gather*}\]

This proves translation equivariance: \(f(T_v \mathbf{x}) = T_v(f(\mathbf{x}))\).

A concrete example illustrates these properties. Consider detecting whisker patterns in a cat image where the cat face appears at position \((50, 50)\). An equivariant convolutional layer applies a \(3{\times}3\) filter to detect whisker textures, producing whisker features at position \((50, 50)\) in the feature map. If the input shifts so the cat face appears at \((55, 55)\), the whisker features shift correspondingly to position \((55, 55)\) in the feature map. The feature position tracks the input position, preserving spatial information.

An invariant global pooling layer behaves entirely differently. Average pooling over the entire spatial dimensions produces a scalar output (say, average whisker strength of \(0.8\)) with no position information. Whether the cat face appears at \((50, 50)\) or \((55, 55)\), the output remains \(0.8\). The layer ignores spatial position entirely.

The equivariant layers preserve where features occur, enabling the network to learn that “whiskers near mouth” and “ears above eyes” matter for cat classification. Invariant final layers discard absolute position for classification.

Example 1.3: Equivariance: Feature detection

Vertical edge detector filter: \[ \mathbf{w} = \begin{bmatrix} -1 & 0 & 1 \\ -1 & 0 & 1 \\ -1 & 0 & 1 \end{bmatrix} \]

Convolving original image:

Output feature map shows positive activation where the filter transitions from dark to bright (left side of edge) and negative activation where it transitions from bright to dark (right side): \[ f(\mathbf{x}) = \begin{bmatrix} 3 & 0 & -3 & 0 & 0 \\ 3 & 0 & -3 & 0 & 0 \\ 3 & 0 & -3 & 0 & 0 \\ 3 & 0 & -3 & 0 & 0 \\ 3 & 0 & -3 & 0 & 0 \end{bmatrix} \]

Shifted input (edge moved to column 5): \[ T_2 \mathbf{x} = \begin{bmatrix} 0 & 0 & 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 0 & 1 & 0 & 0 \end{bmatrix} \]

Convolving shifted image: \[ f(T_2 \mathbf{x}) = \begin{bmatrix} 0 & 0 & 3 & 0 & -3 \\ 0 & 0 & 3 & 0 & -3 \\ 0 & 0 & 3 & 0 & -3 \\ 0 & 0 & 3 & 0 & -3 \\ 0 & 0 & 3 & 0 & -3 \end{bmatrix} = T_2(f(\mathbf{x})) \]

The feature activation shifts by the same amount as the input, demonstrating equivariance. The network knows the edge is at column 5 in the shifted image, not just that an edge exists somewhere.

Equivariance carries systems implications that extend beyond mathematical elegance. Parameter efficiency is the most immediate benefit: equivariance through parameter sharing produces dramatic reductions in model size. Consider processing a \(224{\times}224\) RGB image. An MLP would require each hidden neuron to connect to all \(224{\times}224{\times}3\) = 150,528 input pixels. A CNN with a \(3{\times}3\) filter needs only \(3{\times}3{\times}3\) = 27 parameters per filter, reused across all \(224{\times}224\) positions. This represents approximately 5,575\(\times\) fewer parameters per feature detector, and the memory savings enable larger models and bigger batches on fixed hardware.

The computational structure created by equivariance proves equally valuable for systems optimization. The sliding window pattern applies the same operation at every spatial position, creating regular computation that hardware can exploit. Input pixels are used by multiple filter positions, enabling im2col optimizations that restructure data for efficient matrix operations. The resulting computation is inherently SIMD-friendly, as modern GPUs can execute identical instructions across spatial positions simultaneously. This structural regularity explains why TPUs and AI accelerators include specialized units for convolution: the operation maps efficiently to silicon precisely because equivariance creates predictable, parallelizable patterns.

Equivariance also improves sample efficiency in ways that benefit the entire training pipeline. When a network learns an edge detector at one position, equivariance ensures that same detector works at all positions automatically. Training no longer requires examples with edges at every possible location, providing a form of built-in data augmentation. The systems benefits cascade: less training data means reduced storage requirements, faster training, and lower bandwidth consumption during data loading.

From a group theory perspective, convolution’s equivariance to translations represents one instance of a general principle. The translation group \((\mathbb{R}^2, +)\) consists of all 2D translations, closed under composition (translating by \(v\) then \(u\) equals translating by \(v + u\)). Convolution is equivariant to this group. Recent research extends this framework to other symmetry groups. Cohen and Welling (2016) developed Group-Equivariant CNNs that handle rotations and reflections by constructing filters equivariant to rotation groups. This allows learning rotation-invariant features for tasks like satellite imagery or medical imaging where orientation does not determine meaning.

The mathematical framework generalizes cleanly: for group \(G\) acting on input space \(X\) and output space \(Y\), a function \(f: X \to Y\) is \(G\)-equivariant if: \[ f(g \cdot \mathbf{x}) = g \cdot f(\mathbf{x}) \quad \forall g \in G, \mathbf{x} \in X \]

Standard CNNs are translation-equivariant, while rotation-equivariant networks extend this to rotation groups. The architectural principle generalizes: data symmetries should be embedded as equivariances in the architecture. For systems engineering, this means that identifying data symmetries directly informs architecture choice, that more constrained architectures with stronger symmetries often produce smaller models, and that specialized equivariances may require custom operations like rotation convolutions that need either hardware support or efficient software implementations.

In practice, perfect equivariance is often sacrificed for computational efficiency or training stability. Asymmetric padding at image boundaries breaks perfect translation equivariance, as does strided downsampling, which introduces quantization where a one-pixel shift in input produces a noninteger shift in output. Batch normalization, when computing statistics per position in some implementations, also breaks equivariance. Modern networks accept these deviations as necessary trade-offs, and the slight loss of theoretical purity rarely impacts practical performance.

Different tasks impose different requirements on where equivariance should be maintained vs. where invariance should be introduced. Image classification needs only the final class label to be invariant; intermediate layers benefit from staying equivariant to preserve spatial information for hierarchical feature learning. Object detection requires equivariance throughout the network because bounding box coordinates must track object positions. Semantic segmentation demands full equivariance to the output layer since per-pixel labels must align with input positions. Image generation similarly requires equivariance to maintain spatial structure in the output. The architectural decision of where to introduce invariance through pooling or global averaging vs. maintaining equivariance reflects these task requirements and directly shapes network design.

The preceding task-specific requirements illustrate the inductive bias principle defined in Section 1.1: by restricting connectivity to local neighborhoods and sharing parameters across spatial positions, CNNs encode prior knowledge about the structure of visual data—that important features are local and translation-invariant. This architectural constraint reduces the hypothesis space that the network must search, enabling more efficient learning from limited data compared to fully connected networks.

Checkpoint 1.2: Spatial inductive bias

CNNs succeed because they match the structure of image data. Verify you understand how:

CNNs naturally implement hierarchical representation learning (Bengio et al. 2013) through their layered structure. Early layers detect low-level features like edges and textures with small receptive fields, while deeper layers combine these into increasingly complex patterns with larger receptive fields. This hierarchical organization enables CNNs to build compositional representations: complex objects are represented as compositions of simpler parts. The mathematical foundation for this emerges from stacking convolutional layers, which creates a tree-like dependency structure where each deeper neuron depends on a progressively larger input region; with fixed small kernels, receptive-field side length grows roughly linearly with depth and receptive-field area grows roughly quadratically until it covers the image.

The parameter sharing introduced earlier dramatically reduces complexity compared to MLPs. This sharing embodies the assumption that useful features can appear anywhere in an image, making the same feature detector valuable across all spatial positions.

The preceding architectural properties make CNNs highly amenable to systems-level analysis, and one model in particular has become the standard reference point for compute-bound vision workloads: the ResNet-50 architecture.

Lighthouse 1.2: ResNet-50 (vision lighthouse)

Why it matters: ResNet-50 is the standard reference point for regular, convolution-heavy vision workloads. Its architecture consists almost entirely of dense convolutional layers, making it highly regular and efficient on GPUs. Under batched execution with good data reuse, ResNet-50 performance is typically limited by floating-point throughput (FLOPs), making it a useful lighthouse for explaining data parallelism, quantization, and batching strategies. Table 4 summarizes the quantitative properties and their system consequences:

| Property | Value | System Implication |

|---|---|---|

| Parameters | 25.6 million | 102 MB model size at FP32; fits comfortably in GPU memory. |

| FLOPs/Image | 4.1 GFLOPs \((224{\times}224)\) | \(3{\times}3\) convolutions are the largest single kernel class at roughly 48 percent of MACs. |

| Constraint | Compute-heavy when batched | Limited by raw FLOPs when weight and activation reuse are high; small-batch inference can move toward the memory-bound regime. |

| Bottleneck | FP Throughput | Benefits maximally from specialized Matrix Units (Tensor Cores). |

| Profile | High effective arithmetic intensity under reuse | Arithmetic intensity depends on batch size, convolution algorithm, and materialized memory traffic. |

ResNet-50’s compute-bound profile assumes abundant hardware resources, yet the deployment target may be a smartphone rather than a data center GPU. At the opposite end of the efficiency spectrum, MobileNet demonstrates that architectural innovation can achieve similar accuracy with a fraction of the computational cost.

Lighthouse 1.3: MobileNet (efficiency lighthouse)

Why it matters: MobileNet represents latency-constrained edge workloads. Its depthwise separable convolutions trade channel mixing capacity for speed, making it the standard baseline for mobile apps, embedded vision, and neural architecture search (NAS). Table 5 summarizes the efficiency lighthouse’s quantitative properties:

| Property | Value | System Implication |

|---|---|---|

| Parameters | 3.5 million | 14 MB at FP32; 7\(\times\) smaller than ResNet-50. |

| FLOPs/Image | 300 MFLOPs | 14\(\times\) fewer than ResNet-50 for similar accuracy. |

| Constraint | Latency Bound | Single-image inference speed is the priority. |

| Bottleneck | Overhead/Serial Ops | Kernel launch overhead often dominates actual compute. |

| Profile | Low Arithmetic Intensity | Memory access and control logic matter more than raw FLOPs. |

The contrast between ResNet-50 and MobileNet highlights a counterintuitive lesson that trips up many practitioners.

Misconception: “MobileNet has 14\(\times\) fewer FLOPs than ResNet-50, so it must run 14\(\times\) faster.”

Reality: On high-end GPUs, MobileNet often runs slower than ResNet-50 despite using far fewer operations. MobileNet’s depthwise separable convolutions have low arithmetic intensity: they move more data relative to computation. GPUs optimized for dense matrix operations (high arithmetic intensity) cannot saturate their compute units on MobileNet’s memory-bound kernels. FLOPs measure work; throughput depends on how well that work maps to hardware. This is why MobileNet excels on mobile CPUs (where memory bandwidth matches compute) but underperforms on data center GPUs (where compute far exceeds bandwidth). We revisit this hardware-architecture mismatch as a general fallacy in Section 1.11.

The architectural efficiency of CNNs allows further optimization through specialized techniques like depthwise separable convolutions and pruning, detailed in Model Compression. These optimization strategies build on spatial locality principles, with Hardware Acceleration detailing how modern processors exploit convolution’s inherent data reuse patterns.

Study the mechanics in Figure 4: a small filter slides over the input image, computing a dot product at each position to generate a feature map. This sliding window captures local structures while maintaining translation equivariance—the same filter detects the same pattern regardless of where it appears. For an interactive visual exploration of convolutional networks, the CNN Explainer (Wang et al. 2021) project provides an insightful demonstration of how these networks are constructed.

Computational mapping

Convolution operations create computational patterns distinct from MLP dense matrix multiplication. While high-level frameworks abstract this as a sliding window, the underlying hardware implementation typically transforms the problem to exploit highly optimized matrix multiplication units.

The most common transformation is im2col (image-to-column), which rearranges the input image patches into columns of a large matrix, allowing the convolution to be executed as a single General Matrix Multiplication (GEMM). (The im2col transformation is illustrated later in this chapter when we discuss computational primitives.)

def conv_layer_spatial(input, kernel, bias):

"""Framework-level convolution.

Single call dispatches to optimized kernel (often via im2col + GEMM).

"""

# Convolution applies shared weights across all positions

# For a 3x3 kernel on 28x28 input (padded):

# 9 MACs per position x 784 positions

output = convolution(input, kernel) + bias

return activation(output)The bridge between the logical model and physical execution becomes critical for understanding CNN system requirements. While Listing 3 shows the framework-level abstraction as a simple function call, the hardware must orchestrate complex data movement patterns and exploit spatial locality for efficiency.

Listing 4 reveals the logical computational pattern: seven nested loops that process each spatial position. While functionally correct, this naive implementation is rarely used in practice due to poor memory locality. Instead, the im2col approach trades memory (duplicating overlapping input pixels) for computational regularity, converting the messy nested loops into a streamlined matrix multiplication that saturates hardware FP units.