Deployment Paradigm Framework

ML Systems

Purpose

Why does deploying the same model to a phone vs. a data center demand fundamentally different engineering?

The defining insight of ML systems engineering is that constraints drive architecture. The speed of light sets an absolute floor on how quickly distant servers can respond. Thermodynamics limits how much computation can occur in a given volume before heat becomes unmanageable. Memory physics makes moving data often more expensive than processing it. These are not engineering limitations awaiting better technology; they are permanent physical boundaries that partition the world into fundamentally distinct operating regimes. A data center can train billion-parameter models but cannot guarantee low-latency responses to users thousands of miles away. A smartphone can respond instantly but has a fraction of the memory budget. A microcontroller can run on a coin-cell battery for years but has barely enough compute for a simple keyword detector. The same model—the same algorithm applied to the same data—demands radically different engineering in each regime, not because of design preferences but because different physics governs each environment. Teams that treat deployment as an afterthought, training a model in the cloud and then asking “how do we ship this?”, discover too late that the physics of their target environment invalidates months of architectural decisions. Understanding these regimes transforms deployment from an operational detail into a first-order engineering decision: the question is never simply “how do I make this model work?” but rather “which physical constraints govern my problem, and how do they shape what is even possible?”

Learning Objectives

- Explain how physical constraints (speed of light, power wall, memory wall) necessitate the deployment spectrum from cloud to TinyML

- Apply the iron law and bottleneck principle to determine whether a workload is compute bound, memory bound, or I/O-bound

- Map workload archetypes to deployment paradigms using Lighthouse Model examples

- Distinguish the four deployment paradigms (Cloud, Edge, Mobile, TinyML) by their operational characteristics and quantitative trade-offs

- Apply the decision framework to select deployment paradigms based on privacy, latency, computational, and cost requirements

- Analyze hybrid integration patterns to determine which combinations address specific system constraints

- Evaluate deployment decisions by identifying common fallacies (including Amdahl’s Law limits) and assessing architecture-requirement alignment

- Identify universal principles (data pipelines, resource management, architecture) that span deployment paradigms and explain why optimizations transfer between scales

Where an ML model runs shapes what is possible in ways no algorithmic choice can override. Yet deployment is far harder than it appears, and the reason is not the model itself. In production ML systems, the model is often only a small part of the overall system (Sculley et al. 2015). The surrounding infrastructure consists of data collection, feature processing, serving infrastructure, monitoring, and resource management. All of this surrounding infrastructure changes dramatically depending on where the model executes.

Consider two extremes: a wake-word detector on a smartwatch and a recommendation engine in a data center. The wake-word detector represents a TinyML workload operating under milliwatt power budgets and kilobyte memory limits; the recommendation engine exemplifies a cloud ML workload requiring terabytes of embedding tables and megawatt-scale infrastructure. These systems solve different problems under opposite physical constraints, and the infrastructure that supports them shares almost nothing in common. This reality transforms deployment from an operational afterthought into a first-order engineering decision, one that the D·A·M taxonomy shown in The D·A·M Taxonomy helps us reason about by foregrounding infrastructure alongside data and algorithms.

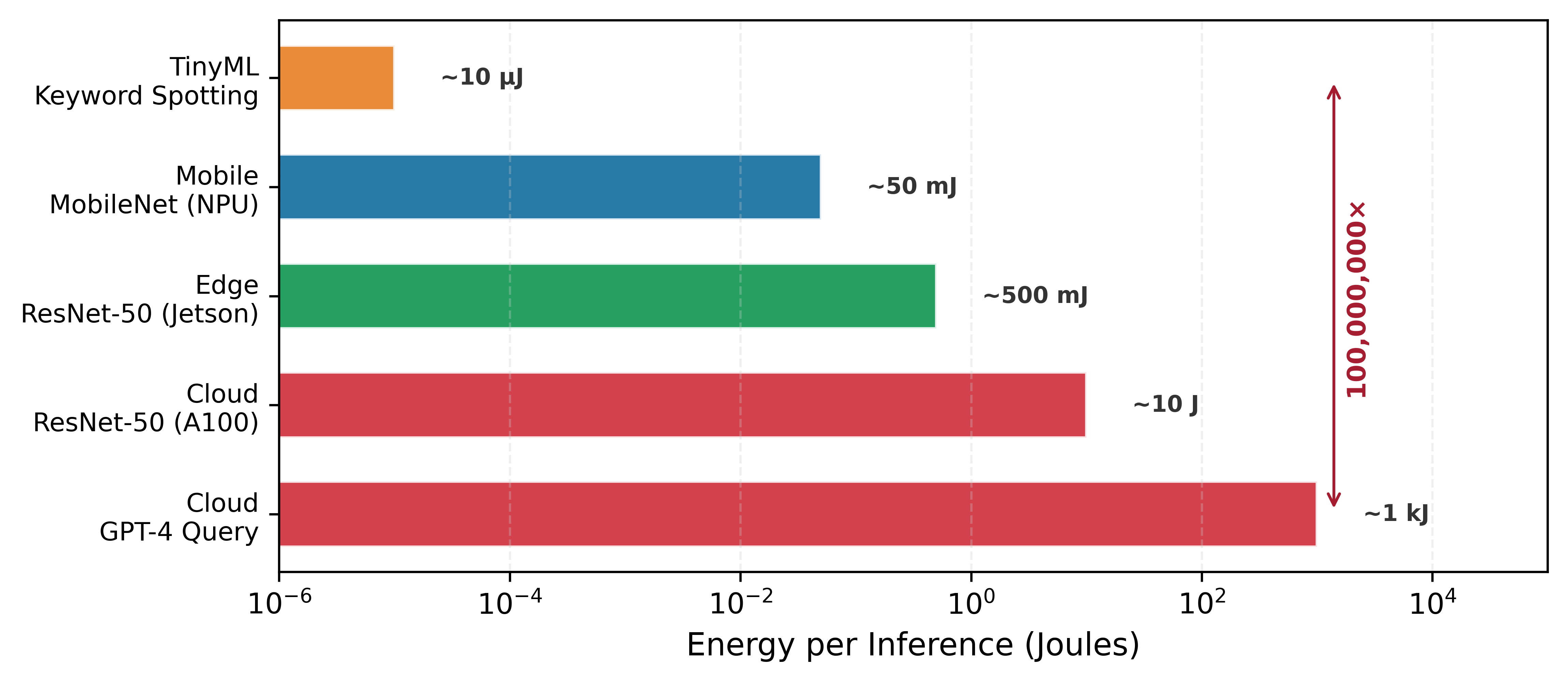

The physical constraints that govern each environment (latency, power, and memory) force ML deployment into four distinct paradigms, each with its own engineering trade-offs and system design patterns. Cloud ML aggregates computational resources in data centers, offering virtually unlimited compute and storage at the cost of network latency. Edge ML moves computation closer to where data originates, including factory floors, retail stores, and hospitals, achieving lower latency and keeping sensitive data on-premises. Mobile ML brings intelligence directly to smartphones and tablets, balancing computational capability against battery life and thermal constraints. TinyML pushes intelligence to the smallest devices: microcontrollers costing dollars and consuming milliwatts, enabling always-on sensing that runs for months on a coin-cell battery. These four paradigms span nine orders of magnitude in power consumption (megawatts to milliwatts) and memory capacity (terabytes to kilobytes), a range so vast that the engineering principles governing one end of the spectrum barely apply at the other.

These four paradigms exist not because of engineering choices but because of physical laws that no amount of optimization can overcome. Three fundamental constraints carve the deployment landscape into distinct operating regimes: the speed of light (establishing latency floors), thermodynamic limits on power dissipation (capping computation per watt), and the energy cost of memory signaling (creating the memory wall). These are not design preferences but physical boundaries: a self-driving car cannot be served from a data center 36 ms away, and a 1.5-billion-parameter model cannot be trained on a microcontroller.

The Architectural Anchor: The Single-Node Stack

To navigate these operating regimes, we anchor our engineering decisions in a four-layer architectural model of the Single-Node Stack. This model provides the foundational framework for analyzing any ML system before it is projected onto a larger distributed fleet. Understanding how these layers interact within a single machine is the technical prerequisite for mastering larger scales.

- Application (The Mission): The top layer where high-level requirements (throughput for training loops, latency for inference serving) are defined. This is where the “Dual Mandate” of accuracy and physics is managed (Model Training, Model Serving).

- ML Framework (The Compiler): The translation layer (PyTorch, JAX) that maps high-level math to hardware-specific execution plans. It manages the computational graph, automatic differentiation, and memory scheduling (ML Frameworks).

- Operating System (The Runtime): The interface between framework and hardware, responsible for the low-level orchestration of resources. This includes the CUDA Runtime for kernel management and PCIe DMA (Direct Memory Access) for efficient data movement between host and device.

- Hardware (The Silicon): The physical foundation where bits are transformed. This layer is defined by HBM (High Bandwidth Memory) capacity and high-speed intra-node interconnects like NVLink (900 GB/s). Here, the memory wall acts as the primary physical constraint (Hardware Acceleration).

Every chapter in the first half of this text interrogates one or more of these layers. Mastery of this single-node regime establishes the “silicon contract” that governs all subsequent optimization and scaling efforts.

These physical constraints interact with the iron law of ML systems (Iron Law of ML Systems), which decomposes end-to-end latency into data movement, computation, and overhead. Different deployment environments stress different terms of this equation: cloud systems are typically compute bound, mobile systems hit power walls, and TinyML devices are memory-capacity-limited. By pairing the physical constraints with the iron law, we develop a quantitative vocabulary for reasoning about which paradigm fits a given workload and why. To anchor this analysis concretely, the chapter introduces five Lighthouse Models (ResNet-50, GPT-2, Deep Learning Recommendation Model (DLRM), MobileNet, and a Keyword Spotter) that span the deployment spectrum and isolate distinct system bottlenecks. These reference workloads recur throughout the book, providing a consistent basis for comparing optimization techniques across chapters.

The physics that creates these paradigm boundaries comes first, followed by the analytical tools (iron law, bottleneck principle, workload archetypes) for mapping workloads to deployment targets. Each paradigm then receives an in-depth treatment covering infrastructure, trade-offs, and representative applications. Figure 1 orients the discussion by showing where each paradigm sits along the centralization spectrum. The chapter closes with a comparative decision framework and the hybrid architectures that combine paradigms when no single deployment target satisfies all requirements.

These four paradigms function as distinct operating envelopes, each defined by how much power, memory, and network connectivity is available. Every ML application must fit within at least one of these envelopes, and that fit determines which algorithms, hardware, and engineering trade-offs apply. The four paradigms span a continuous spectrum from centralized cloud infrastructure to distributed ultra-low-power devices. Figure 1 traces this spectrum visually, mapping where each paradigm sits along the centralization axis, while Table 1 pins down the quantitative trade-offs:

| Paradigm | Where | Latency | Power | Memory | Best For |

|---|---|---|---|---|---|

| Cloud ML | Data centers | 100-500 ms | MW | TB | Training, complex inference |

| Edge ML | Local servers | 10-100 ms | 100 W | GB | Real-time inference, privacy |

| Mobile ML | Smartphones | 5-50 ms | 3-5 W | GB | Personal AI, offline |

| TinyML | Microcontrollers | 1-10 ms | mW | KB | Always-on sensing |

The nine-order-of-magnitude span in Table 1 is not an accident of engineering history—it is a consequence of physics. No amount of optimization can make a data center respond faster than light can travel, or make a microcontroller dissipate more heat than its surface area allows. The existence of four paradigms, rather than a single universal solution, follows from three physical laws.

Self-Check: Question

Order the following layers of the Single-Node Stack from the point where high-level requirements are expressed to the point where bits are physically transformed: (1) Hardware (HBM + NVLink), (2) Application (throughput and latency goals), (3) Operating System (CUDA runtime + PCIe DMA), (4) ML Framework (PyTorch / JAX computational graph).

An engineer writes 20 lines of PyTorch defining a Transformer block and a cross-entropy loss. Before any kernel runs on the accelerator, some component must translate this high-level math into a device-specific execution plan: a computational graph, autodiff tape, memory schedule, and selected kernels. Which layer of the Single-Node Stack owns that translation?

- The hardware layer, because the silicon rewrites the computational graph internally before executing any instruction.

- The operating system layer, because the CUDA runtime and PCIe DMA engine set throughput and accuracy goals for the application.

- The ML framework layer, because it constructs the computational graph, performs autodifferentiation, and schedules memory and kernels for the target device.

- The application layer, because business-level throughput and latency requirements are what directly decide kernel launch order.

An engineer inherits a 512-GPU distributed training job that delivers only 22 percent of its expected throughput. Before touching the cluster’s interconnect or scheduler, the section advises reasoning about the single-node stack first. Using the Silicon Contract framing, explain why the single-node diagnosis must come before the distributed one, and name two specific single-node bottlenecks that 512 GPUs would amplify rather than resolve.

A student is deciding which Lighthouse Model from this chapter (ResNet-50, GPT-2, DLRM, MobileNet, Keyword Spotter) to use as the primary example in a lesson on memory-capacity limits versus memory-bandwidth limits in the iron law. Which model is the best pedagogical anchor, and why?

- ResNet-50, because its fixed-size 224×224 inputs make it compute-bound at every batch size, which cleanly isolates the R_peak term.

- DLRM, because its massive sparse embedding tables make the \(D_{vol}\) / capacity dimension the binding constraint — the model cannot execute until the right embedding rows are fetched, regardless of raw FLOPs.

- MobileNet, because its mobile deployment target means all bottlenecks trace to battery energy rather than to memory behavior.

- A Keyword Spotter, because its few-kilobyte footprint eliminates every memory-related iron-law term and leaves only the latency term.

Physical Constraints: Why Paradigms Exist

The physical laws of speed of light, power thermodynamics, and memory signaling dictate that no single “ideal” computer exists. Where a system runs reshapes the contract between model and hardware. These three constraints, which we call the light barrier, power wall, and memory wall, govern the engineering trade-offs ahead.1

1 Deployment Paradigm: A distinct operating regime whose boundaries are set by physics, not convention. The Cloud-to-TinyML spectrum spans nine orders of magnitude in power because thermodynamic and electromagnetic constraints create hard walls that no software optimization can cross, forcing qualitatively different system architectures at each tier. Misidentifying the paradigm boundary wastes engineering effort: optimizing a cloud model for 5 percent higher throughput is pointless if the application’s 10 ms latency budget demands edge deployment.

The light barrier

The light barrier establishes the absolute latency2 floor. The minimum round-trip time is governed by Equation 1: \[\text{Latency}_{\min} = \frac{2 \times \text{Distance}}{c_{\text{fiber}}} \approx \frac{2 \times \text{Distance}}{200{,}000 \text{ km/s}} \tag{1}\]

2 Latency: The time between issuing a request and receiving a result, corresponding to \(L_{\text{lat}}\) in the iron law. The light barrier makes this floor irreducible: the speed of light in fiber imposes a ~36 ms minimum round trip across the continental US, consuming the entire latency budget of a 10 ms safety-critical system before any computation begins. Every millisecond consumed by distance is a millisecond unavailable for model inference, which is why the light barrier forces paradigm selection rather than mere optimization.

California to Virginia (~3,600 km straight-line) requires ~36 ms minimum before any computation begins. Actual cloud services typically add 60–150 ms of software overhead. Applications requiring sub-10 ms response cannot use distant cloud infrastructure—physics forbids it. This constraint creates the need for edge ML and TinyML: when latency budgets are tight, computation must move closer to the data source.

The power wall

The power wall emerged because thermodynamics limits how much computation can occur in a given volume. Under classical Dennard scaling3 (which held until approximately 2006), the relationship between power and frequency was cubic. Here \(C\) is effective capacitance, \(V\) is voltage, and \(f\) is clock frequency. As voltage tracks frequency \((V \propto f)\), power rises as \(f^3\), as Equation 2 shows: \[\text{Power} \propto C \times V^2 \times f \quad \text{where } V \propto f \implies \text{Power} \propto f^3 \tag{2}\]

3 Dennard Scaling: Named after Dennard et al. (1974) at IBM, who showed that as transistors shrink, voltage and current scale proportionally, keeping power density constant. This held for three decades, delivering “free” performance gains each chip generation. When leakage current made further voltage reduction impossible around the 90 nm node (2005–2006), power density began rising with each generation—ending single-core frequency scaling and forcing the industry toward the parallelism and specialization (multi-core, GPU, TPU) that now defines ML hardware.

Doubling clock frequency required approximately 8\(\times\) more power. The breakdown of this scaling relationship ended the era of “free” speedups via frequency scaling and forced the industry toward the parallelism (multi-core) and specialization (GPUs, Tensor Processing Units (TPUs)) that defines modern ML. Mobile devices hit hard thermal limits at 3-5 W; exceeding this causes “throttling,” where the device reduces performance to prevent overheating. In practice, this means a mobile model that runs at 60 FPS for 1 minute may throttle to 15 FPS as the device heats up. This physical limit gives rise to mobile ML: battery-powered devices cannot simply run cloud-scale models locally.

The memory wall

The memory wall (Wulf and McKee 1995) reflects the widening bandwidth4 gap: \[\frac{\text{Compute Growth Rate}}{\text{Memory Bandwidth Growth Rate}} \approx \frac{1.6}{1.2} \approx 1.33 \tag{3}\]

4 Memory Bandwidth (The memory wall): The term “memory wall” was coined by Wulf and McKee in 1995, who predicted that the processor-memory performance gap would eventually dominate system performance—a prediction that proved prescient for ML workloads where weight loading, not arithmetic, is the typical bottleneck. In the iron law, bandwidth \((\text{BW})\) appears in the denominator of the data term \(D_{\text{vol}}/\text{BW}\), so every doubling of model size that is not matched by a doubling of memory bandwidth directly increases wall-clock time. This asymmetry, growing at roughly 1.33\(\times\) per year, is why modern ML systems are more often memory-bound than compute bound.

Equation 3 quantifies this divergence: processors have doubled in compute capacity roughly every 18 months, but memory bandwidth has improved only ~20 percent annually. This widening gap makes data movement the dominant bottleneck and energy cost for most ML workloads. This constraint affects all paradigms but is especially acute for TinyML, where devices have only kilobytes of memory to work with. We examine the hardware architectural responses to the memory wall, including HBM and on-chip SRAM hierarchies, in detail in Understanding the AI memory wall.

Checkpoint 1.1: Physical constraints and deployment

Deployment choices are governed by physics, not just preference. Check your understanding:

These physical laws explain why the four paradigms exist. Physics creates the boundaries; privacy regulation, economic incentives, and data sovereignty requirements reinforce and sharpen them. We examine these additional drivers within each paradigm section, but the central insight is that the paradigms would exist even without those concerns. No regulation can make the speed of light faster, and no economic model can repeal thermodynamics.

Knowing that these barriers exist is necessary but not sufficient. Given a specific ML workload (say, a recommendation engine or a wake-word detector), we need to determine which paradigm fits and which barrier the workload will hit first. The answer requires analytical tools that connect workload characteristics to these physical constraints: the iron law to decompose latency, the bottleneck principle to identify the dominant constraint, and a set of workload archetypes to classify where each model falls on the spectrum.

Self-Check: Question

A safety-critical control loop has a 10 ms end-to-end latency budget, and the nearest cloud data center is 3,600 km away across a direct fiber path. Applying the section’s light-barrier analysis, what follows?

- Cloud deployment is feasible if the model inference itself takes less than 1 ms.

- Cloud deployment is infeasible because round-trip propagation delay alone is roughly 36 ms, before any compute or software overhead.

- Cloud deployment is feasible if enough parallel GPUs hide the network delay.

- Cloud deployment is blocked only by software overhead, not by physics.

A smartphone runs an image-enhancement model at 60 FPS for the first 90 seconds of recording, then drops to 15 FPS for the rest of the session even though the user has not changed any settings. Using the section’s Dennard-scaling-breakdown and power-wall argument, walk through the mechanism behind this failure and explain why the mobile regime chose efficiency and parallelism over raw clock speed as a response.

A profiler shows a new accelerator generation delivering 3× the peak FP16 TFLOPS of the previous one, but a production inference pipeline’s end-to-end latency improves by only 8 percent. A GPU-busy-time counter reads 91 percent, and HBM bandwidth utilization reads 94 percent. Which interpretation matches the section’s memory-wall argument?

- The workload is still compute-bound, so the remedy is to raise the accelerator’s clock frequency and unlock more FLOPs.

- The immediate constraint is SSD capacity, so a larger disk will let the pipeline cache more weights and restore scaling.

- Compute capability has grown faster than memory bandwidth, so data movement now sets the latency ceiling; the 94 percent HBM figure confirms the kernel is bandwidth-starved, not FLOP-starved.

- The memory wall is a database-query phenomenon and does not bind neural-network kernels, so the 8 percent improvement must come from unrelated software overhead.

Given the memory-wall argument — compute has grown much faster than memory bandwidth — explain which class of optimization techniques becomes disproportionately valuable for ML inference, and why raw accelerator upgrades deliver diminishing returns on memory-bound kernels.

True or False: The four ML deployment paradigms (Cloud, Edge, Mobile, TinyML) are product-marketing categories that solidified because different engineering teams chose different deployment styles over time.

Analyzing Workloads

The central analytical tool for this chapter is the iron law of ML systems, established in Iron Law of ML Systems and restated here as Equation 4: \[T = \frac{D_{\text{vol}}}{\text{BW}} + \frac{O}{R_{\text{peak}} \cdot \eta_{\text{hw}}} + L_{\text{lat}} \tag{4}\]

This equation decomposes total latency into three terms: data movement \((D_{\text{vol}}/\text{BW})\), compute \((O/(R_{\text{peak}} \cdot \eta_{\text{hw}}))\), and fixed overhead \((L_{\text{lat}})\). For a single inference, these costs simply add up—each is paid sequentially. In production systems, however, tasks are processed continuously as a stream, and the question shifts from “how long does one task take?” to “which of these three terms actually limits the system?” The answer depends entirely on the deployment environment: a model that is compute bound during training may become memory bound during inference; a system that runs efficiently in the cloud may hit power limits on mobile devices. To determine which term dominates, we need a companion principle.

The bottleneck principle

The iron law tells us the cost of each term. The bottleneck principle tells us which term matters. Unlike traditional software where optimizing the average case works, ML systems are dominated by their slowest component: optimizing fast operations yields zero benefit while the slowest stage remains unchanged. Modern accelerators use pipelined execution to overlap data movement with computation: while the accelerator computes on batch \(n\), the memory system prefetches batch \(n+1\). With this overlap, whichever operation is slower determines the system’s throughput—the faster one “hides” behind it. The iron law’s sum becomes a maximum, as Equation 5 formalizes: \[ T_{\text{bottleneck}} = \max\left(\frac{D_{\text{vol}}}{\text{BW}}, \frac{O}{R_{\text{peak}} \cdot \eta_{\text{hw}}}, T_{\text{network}}\right) + L_{\text{lat}} \tag{5}\]

- \(\frac{D_{\text{vol}}}{\text{BW}}\) (Memory): Time to move data between memory and processor.

- \(\frac{O}{R_{\text{peak}} \cdot \eta_{\text{hw}}}\) (Compute): Time to execute calculations.

- \(T_{\text{network}}\): Time for network communication (if offloading).

- \(L_{\text{lat}}\) (Overhead): Fixed latency (kernel launch, runtime overhead).

This principle dictates that if a system is memory bound \((D_{\text{vol}}/\text{BW} > O/(R_{\text{peak}} \cdot \eta_{\text{hw}}))\), buying faster processors \((R_{\text{peak}})\) yields exactly 0 percent speedup—just as widening a six-lane highway yields no benefit when all traffic must funnel through a two-lane bridge. Engineers must identify the dominant term before optimizing. This trade-off is governed by the energy of transmission.

Napkin Math 1.1: The energy of transmission

Variables:

- Data \((D_{\text{vol}})\): 1 MB (for example, one second of audio).

- Transmission energy \((E_{\text{tx}})\): 100 mJ/MB (Wi-Fi/LTE).

- Compute energy \((E_{\text{op}})\): 0.1 mJ/inference (MobileNet on NPU).

Math:

- Cloud approach: \(E_{\text{cloud}} \approx D_{\text{vol}} \times E_{\text{tx}}\) = 1 MB\(\times\) 100 mJ/MB = 100 mJ.

- Local approach: \(E_{\text{local}} \approx\) Inference = 0.1 mJ.

Systems insight: Transmitting raw data is 1,000\(\times\) more expensive than processing it locally. Even if the cloud had infinite speed \((\text{Time} \approx 0)\), the energy wall makes cloud offloading physically impossible for always-on battery devices. The “machine” constraint (battery) dictates the “algorithm” choice (TinyML).

The iron law’s variables interact differently across deployment scenarios. Before specific workload archetypes are examined, these core performance determinants need a compact definition.

Definition 1.1: The iron law

The iron law is the fundamental physical constraint governing all machine learning performance, expressed as the total time \(T\) required for a workload:

\[T = \frac{D_{\text{vol}}}{\text{BW}} + \frac{O}{R_{\text{peak}} \cdot \eta_{\text{hw}}} + L_{\text{lat}}\]

- Significance (quantitative): It defines the Physical Ceiling for any system by quantifying the relationship between data volume \((D_{\text{vol}})\), compute capacity \((R_{\text{peak}})\), and communication overhead \((L_{\text{lat}})\).

- Distinction (durable): Unlike Amdahl’s Law, which focuses on Parallel Speedup, the iron law addresses the Total Energy and Time required to move and transform data.

- Common pitfall: A frequent misconception is that these terms are independent. In reality, they are Trade-off Axes: for example, increasing batch size may improve the duty cycle \((\eta_{\text{hw}})\) but also increase the data volume \((D_{\text{vol}})\) per request, potentially shifting a compute-bound problem to a memory-bound one.

The iron law quantifies the cost of each ingredient; the bottleneck principle identifies the speed of the assembly line. As a rule of thumb, use the additive form in Equation 4 when analyzing the latency of a single task, and the max form in Equation 5 when analyzing the throughput of a continuous stream of tasks.

Workload archetypes

The bottleneck principle reduces optimization to a single diagnostic: identifying which constraint dominates for a given workload. The answer depends on the D·A·M taxonomy in The D·A·M Taxonomy, which decomposes every ML system into Data, Algorithm, and Machine. Different deployment environments create different bottlenecks along these axes—a cloud server with terabytes of memory faces algorithm constraints, while a microcontroller with kilobytes faces machine constraints.

To navigate these constraints systematically, we categorize ML workloads into four Archetypes5. These represent the primary physical bottlenecks, not just specific model architectures. We introduce each archetype briefly here; the Lighthouse Models that follow will ground each category in concrete, recurring examples.

5 Workload Archetype: A classification of ML workloads by their dominant iron law bottleneck rather than their model family. The distinction matters because the optimization strategy differs fundamentally: a compute-bound workload benefits from faster arithmetic \((R_{\text{peak}})\), while a bandwidth-bound workload benefits only from wider memory buses \((\text{BW})\). Misidentifying the archetype wastes optimization effort on the wrong term of the iron law, as when teams add accelerator FLOPS to a memory-bound inference pipeline and observe zero speedup.

The first archetype, the Compute Beast, describes workloads that perform many calculations per byte of data loaded. The binding constraint is raw computational throughput. Training large neural networks falls into this category.

The second archetype, the Bandwidth Hog, describes workloads that spend more time loading data than computing. Memory bandwidth becomes the binding constraint. Autoregressive text generation (like ChatGPT producing one token at a time) falls into this category.

The third archetype, the Sparse Scatter, describes workloads with irregular memory access patterns and poor cache locality. Memory capacity and access latency constrain performance. Recommendation systems with massive embedding tables are canonical examples.

The fourth archetype, the Tiny Constraint, describes workloads operating under extreme power envelopes (\(< 1\) mW) and memory limits (\(< 256\) KB). The binding constraint is energy per inference—efficiency, not raw speed. Always-on sensing operates in this regime.

These archetypes map naturally to deployment paradigms: compute beasts and sparse scatter workloads gravitate toward cloud ML where resources are abundant. Bandwidth hogs span cloud and edge depending on latency requirements. Tiny constraint workloads are exclusively TinyML territory. To make these abstractions concrete, we anchor each archetype to a specific model that recurs throughout this book as one of five reference workloads.

Lighthouse 1.1: Five reference workloads

Throughout this book, we use the five Lighthouse Models summarized in Table 2: concrete workloads that span the deployment spectrum and isolate distinct system bottlenecks. Network Architectures provides full architectural details and model biographies.

| Lighthouse | Archetype | Deployment Paradigm |

|---|---|---|

| ResNet-50 | Compute Beast | Cloud training, edge inference |

| GPT-2/Llama | Bandwidth Hog | Cloud inference |

| DLRM | Sparse Scatter | Cloud only (distributed) |

| MobileNet | Compute Beast (efficient) | Mobile, edge |

| Keyword Spotting (KWS) | Tiny Constraint | TinyML, always-on |

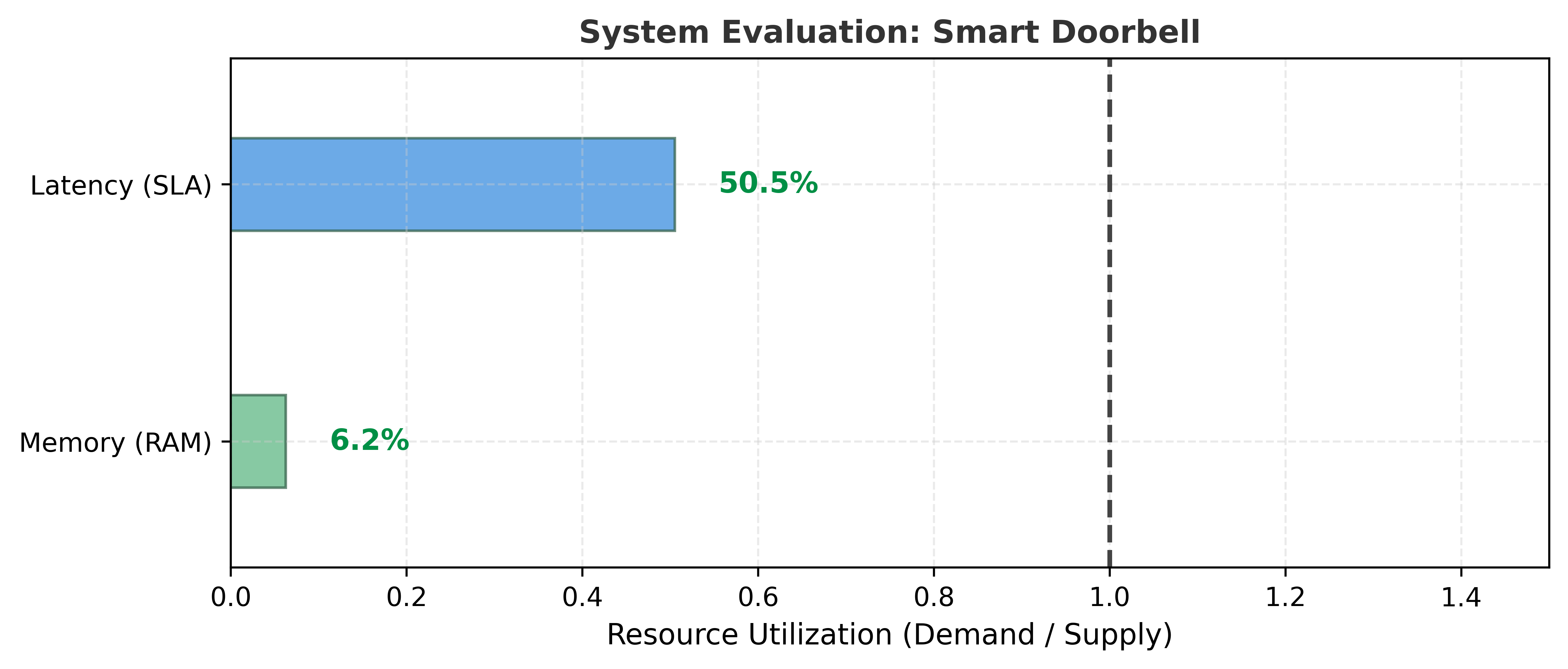

To ground the abstract interdependencies of the iron law in concrete practice, we analyze the Lighthouse Models summarized in Table 2. The following summaries recap each workload from a systems perspective, connecting them to the specific iron law bottlenecks they exemplify, as visualized in the scorecard for our central Smart Doorbell narrative (Figure 2).

The first lighthouse, ResNet-50, classifies images into one thousand categories, processing each image through approximately 4.1 billion floating-point operations using 25.6 million parameters (102 MB at FP32). Used in medical imaging diagnostics, autonomous vehicle perception pipelines, and as the backbone for content moderation systems, its regular, compute-dense structure makes it the canonical benchmark for hardware accelerator performance.

The language models GPT-2/Llama power chatbots, code assistants, and content generation tools. These models generate text one token at a time, requiring the model to read its full parameter set (1.5 billion for GPT-2, 7 to 70 billion for Llama) from memory for each output token. This sequential memory access pattern creates the autoregressive bottleneck that dominates serving costs.

The recommendation lighthouse, DLRM (Deep Learning Recommendation Model), powers the “You might also like” recommendations on platforms like Meta and Netflix. It maps users and items to embedding vectors stored in tables that can exceed 100 GB, making memory capacity rather than computation the binding constraint.

The mobile lighthouse, MobileNet, runs in smartphone camera apps for real-time photo categorization and on-device visual search. It performs the same image classification task as ResNet but uses depthwise separable convolutions to reduce computation by 14\(\times\), enabling real-time inference on smartphones at 2 to 5 watts.

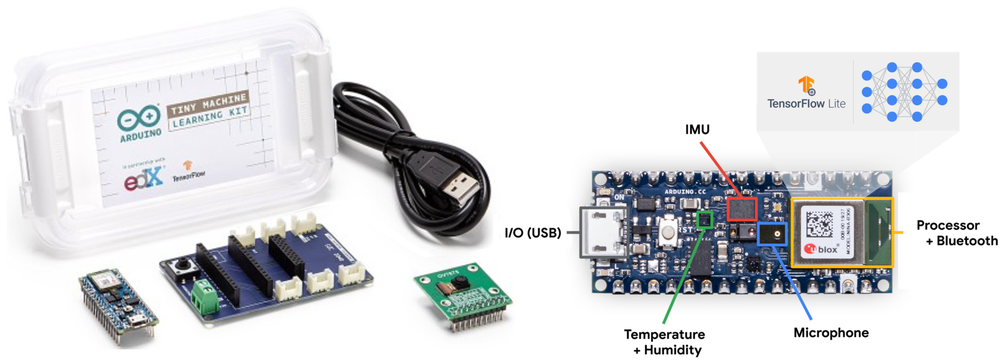

The TinyML lighthouse, Keyword Spotting (KWS), represents the always-on sensing archetype. Used in applications like Smart Doorbells, it detects wake words (“Ding Dong”, “Hello”) using a depthwise separable convolutional neural network (CNN) with approximately 200K parameters (small variants; the DS-CNN benchmark in MLPerf Tiny uses ~200K) fitting in about 800 KB, running continuously at under one milliwatt (Zhang et al. 2017).

The range in compute requirements and memory footprints explains why no single deployment paradigm fits all workloads. A keyword spotter can operate with roughly 20 MFLOPs and 800 KB, while ResNet-50 requires about 4.1 GFLOPs and roughly 102 MB per image. The reference DLRM example already reaches 100 GB of embeddings, and production DLRM-style recommendation systems can exceed 100 TB. Language models add a bandwidth-dominated regime: billions of parameters streamed repeatedly from memory during autoregressive inference. These five Lighthouse Models serve as concrete anchors throughout the book, each isolating a distinct system bottleneck revisited in every chapter.

Analytical tools alone remain abstract until grounded in real silicon. The next step translates the iron law, bottleneck principle, and workload archetypes into quantitative engineering decisions by examining how system balance (the interplay of compute, memory, and I/O) varies across real hardware platforms.

Self-Check: Question

Two engineers are analyzing the same inference service on the same hardware. Engineer A asks ‘what is the 99th-percentile end-to-end latency of a single request arriving when the queue is empty?’, and Engineer B asks ‘what is the sustained queries-per-second this service delivers when fully loaded with overlapped preprocessing, transfer, and compute?’. Which pair of iron-law formulations matches these two questions?

- Both questions use the additive iron law, because time is always a sum of the three terms regardless of context.

- Engineer A’s single-request-latency question uses the additive form (data + compute + latency add because the one request waits at every stage), while Engineer B’s steady-state throughput question uses the max form (overlapped stages make the slowest one — the bottleneck — set the rate).

- Both questions use the max-form Bottleneck Principle, because deployment systems always pipeline their stages.

- Neither form applies to inference; the iron law is a training-only framework in this chapter.

An inference pipeline has three stages measured per request: preprocessing on a CPU at 50 ms, host-to-device PCIe transfer at 10 ms, and GPU compute at 80 ms. A team doubles the accelerator’s FLOPS by buying a newer GPU; the compute stage falls to 40 ms, but pipelined throughput improves by only 60 percent rather than doubling. Use the Bottleneck Principle to explain the result and identify the optimization that would actually move the needle.

A battery-powered acoustic sensor can either transmit 1 MB of raw audio to a cloud classifier at roughly 100 mJ per megabyte, or run one local inference pass that costs roughly 0.1 mJ. Applying the section’s Energy of Transmission argument, what is the correct conclusion for always-on operation?

- Cloud offloading is usually more energy-efficient because the wireless radio amortizes compute costs across many devices.

- The two approaches are close enough that latency — not energy — should be the deciding factor.

- Local and cloud processing consume energy in the same order of magnitude, so either is viable for multi-month battery operation.

- Local processing is roughly 1,000× more energy-efficient per inference, so always-on battery-constrained sensing is pushed toward TinyML rather than cloud offload regardless of the cloud’s compute capability.

Which pairing of Lighthouse Model and Workload Archetype correctly reflects the section’s mapping?

- GPT-2 / Llama → Sparse Scatter, because autoregressive decoding scatters attention across irregular token positions.

- DLRM → Sparse Scatter, because massive embedding tables create irregular-access, capacity-dominated memory patterns.

- Keyword Spotting → Compute Beast, because always-on classification demands sustained peak arithmetic throughput.

- MobileNet → Bandwidth Hog, because depthwise-separable convolutions saturate HBM bandwidth on every layer.

True or False: A workload’s archetype is primarily determined by its model family (e.g., all language models are one archetype, all vision models are another), so teams can pick optimization strategies by architecture type alone without profiling.

System Balance and Hardware

Physical constraints translate into engineering decisions through concrete numbers. Table 3 provides order-of-magnitude latencies that should inform every deployment decision—spanning eight orders of magnitude from nanosecond compute operations to hundreds of milliseconds for cross-region network calls. Detailed hardware latencies and bandwidth constraints are covered in Hardware Acceleration. The key decision rule is simple: an operation with latency \(> X\) cannot appear on the critical path of a system whose latency budget is \(X\) ms.6

6 Critical Path: The longest sequential chain of dependent operations in a pipeline. The decision rule in the triggering sentence is strict: if a 200 ms cross-region network call appears anywhere on the critical path, a system with a 100 ms total budget is guaranteed to fail regardless of how fast every other stage runs. In practice, ML inference is rarely the longest stage; data preprocessing and postprocessing often dominate, making the critical path longer than the model execution time alone suggests.

These latencies, organized by category in Table 3, span eight orders of magnitude:

| Operation | Latency | Deployment Implication |

|---|---|---|

| Compute | ||

| GPU matrix multiply (per op) | ~1 ns | Compute is rarely the bottleneck |

| NPU inference (MobileNet) | 5–20 ms | Mobile can do real-time vision |

| LLM token generation | 20–100 ms | Perceived as “typing speed” |

| Memory | ||

| L1 cache hit | ~1 ns | Keep hot data in registers |

| HBM read (GPU) | 20–50 ns | 20–50\(\times\) slower than compute |

| DRAM read (mobile) | 50–100 ns | Memory bound on most devices |

| Network | ||

| Same data center | 0.5 ms | Microservices feasible |

| Same region | 1–5 ms | Edge servers viable |

| Cross-region | 50–150 ms | Batch processing only |

| ML Operations | ||

| Wake-word detection (TinyML) | 100 μs | Always-on feasible at <1 mW |

| Face detection (mobile) | 10–30 ms | Real-time at 30 FPS |

| GPT-4 first token | 200–500 ms | User notices delay |

| ResNet-50 training step | 200–400 ms | Throughput-optimized |

The four deployment paradigms gain precision when grounded in concrete hardware. While Table 1 defined the paradigms conceptually, Table 6 (which appears later in this section, after the System Balance discussion) provides specific devices, processors, and quantitative thresholds that practitioners use to select deployment targets.78 The nine-order-of-magnitude range in power (MW cloud facilities vs. mW TinyML devices) and the wide cost spread ($millions vs. $10) determine which paradigm can serve a given workload economically.

7 ML Hardware Cost Spectrum: AI infrastructure spans six orders of magnitude in cost, from $10 microcontrollers to multi-million-dollar GPU clusters. This million-fold range means deployment paradigm selection is simultaneously a physics decision and an economics decision. Even within individual-device choices, the same accuracy target may be achievable on a $2 microcontroller (via aggressive quantization) or a $30,000 GPU (at full precision), with fundamentally different latency, power, and operational cost profiles.

8 Power Usage Effectiveness (PUE): This metric isolates the energy overhead (for example, cooling) that determines the economic viability of the “MW cloud” paradigm. For a data center, the remaining 6 percent overhead of an elite 1.06 PUE still translates to megawatts of noncompute cost. This entire cost category does not exist for the “mW TinyML” paradigm, explaining a key part of the six-order-of-magnitude economic range.

These hardware differences translate directly into performance bottlenecks. To understand which constraint dominates in each paradigm, we apply the bottleneck principle (Section 1.3.1) using the pipelined form of the iron law.

Systems Perspective 1.1: System balance across paradigms

The pipelined form of the iron law of ML systems from Iron Law of ML Systems states that execution time is bounded by the slowest resource, as Equation 6 formalizes: \[T = \max\left( \frac{O}{R_{\text{peak}} \cdot \eta_{\text{hw}}}, \frac{D_{\text{vol}}}{\text{BW}}, \frac{D_{\text{vol}}}{\text{BW}_{\text{IO}}} \right) + L_{\text{lat}} \tag{6}\]

Here, \(O\) represents total operations, \(R_{\text{peak}}\) is peak compute rate, \(\eta_{\text{hw}}\) is hardware utilization efficiency, \(D_{\text{vol}}\) is data volume, \(\text{BW}\) is memory bandwidth, \(\text{BW}_{\text{IO}}\) is I/O bandwidth (storage or network), and \(L_{\text{lat}}\) is fixed overhead. The equation identifies which resource (compute, memory, or I/O) limits performance. For a systematic diagnostic guide to identifying these bottlenecks, consult the D·A·M taxonomy (The D·A·M Taxonomy).

The dominant term varies by paradigm and workload, shifting the optimization strategy entirely; Table 4 names which iron-law term limits each paradigm and the resulting optimization focus.

| Paradigm | Dominant Constraint | Why | Optimization Focus |

|---|---|---|---|

| Cloud Training | \(O/R_{\text{peak}}\) (Compute) | Abundant memory/network; FLOPS limit throughput | Maximize accelerator utilization, batch size |

| Cloud LLM Inference | \(D_{\text{vol}}/\text{BW}\) (Memory BW) | Autoregressive: ~1 FLOP/byte, memory-bound | KV-caching, quantization, batching |

| Edge Inference | \(D_{\text{vol}}/\text{BW}\) (Memory BW) | Limited HBM; models often memory-bound | Model compression, operator fusion |

| Mobile | Energy (implicit) | Battery = \(\int \text{Power} \cdot dt\); thermal throttling | Reduced precision, duty cycling |

| TinyML | \(D_{\text{vol}}/\text{Capacity}\) | 256 KB total; model must fit on-chip | Extreme compression, binary networks |

The same ResNet-50 model is compute-bound during cloud training (high batch size, high arithmetic intensity) but memory-bound during single-image inference (batch=1, low arithmetic intensity) (Williams et al. 2009). Deployment paradigm selection must account for this shift.

This shift between training and inference is critical to understand. Recall the D·A·M taxonomy from The D·A·M Taxonomy: every ML system comprises Data, Algorithm, and Machine. Table 5 shows how each component behaves differently depending on whether the system is training (learning patterns) or serving (applying them).

| Component | Training (Mutable) | Inference (Immutable) |

|---|---|---|

| Data | Massive throughput: large batches, shuffling, augmentation | Low latency: single samples, freshness, speed |

| Algorithm | Bidirectional: forward + backward pass, optimizer state | Unidirectional: forward pass only, weights frozen |

| Machine | Throughput-optimized: high-bandwidth clusters, large memory | Latency-optimized: edge devices, inference accelerators |

A quantitative comparison applies this analysis to ResNet-50 inference on a data-center GPU and a mobile NPU.

Napkin Math 1.2: ResNet-50 on cloud vs. mobile

Given (from Lighthouse Models):

- ResNet-50: 4.1 GFLOPs per inference, 25.6 M parameters (102 MB at FP32, 51 MB at FP16)

Analysis:

(a) Cloud: NVIDIA A100 (batch=1, FP16)

- Peak compute: 312 TFLOPS (FP16)

- Memory bandwidth: 2 TB/s (HBM2e)

- Compute time: \(T_{\text{comp}}\) = \(\frac{4.10 \times 10^{9}}{3.12 \times 10^{14}}\) = 0.013 ms

- Memory time: \(T_{\text{mem}}\) = \(\frac{5.12 \times 10^{7}}{2.04 \times 10^{12}}\) = 0.025 ms

- Bottleneck: Memory (2\(\times\) slower than compute)

- Arithmetic intensity: \(\frac{4.10 \times 10^{9}}{5.12 \times 10^{7}}\) = 80 FLOPs/byte—this ratio of compute operations to bytes loaded measures how efficiently a workload uses the hardware. When arithmetic intensity exceeds the hardware’s compute-to-bandwidth ratio \((R_{\text{peak}}/\text{BW})\), the workload is compute bound; below it, the workload is memory bound. For single-image inference, the low batch size yields low arithmetic intensity, explaining why even powerful GPUs are memory bound at batch=1.

(b) Mobile: Flagship NPU (batch=1, INT8)

- Peak compute: ~35 TOPS (INT8)—representative of modern mobile NPUs

- Memory bandwidth: ~100 GB/s (LPDDR5)

- Model size: 26 MB (INT8 quantized)

- Compute time: \(T_{\text{comp}}\) = \(\frac{4.10 \times 10^{9}}{3.50 \times 10^{13}}\) = 0.12 ms

- Memory time: \(T_{\text{mem}}\) = \(\frac{2.56 \times 10^{7}}{1.00 \times 10^{11}}\) = 0.26 ms

- Bottleneck: Memory (2\(\times\) slower than compute)

Key Insight: Both platforms are memory bound for single-image inference! The A100’s faster memory bandwidth (2 TB/s vs. 100 GB/s = 20\(\times\)) translates to roughly 10\(\times\) faster inference; peak compute alone is not the limiting comparison. This explains why quantization (reducing bytes) often beats faster hardware (increasing FLOPS) for deployment.

ResNet-50 becomes compute bound when batching and data reuse raise arithmetic intensity above the hardware balance point, \(\frac{\text{Ops}}{\text{Compute}} > \frac{\text{Bytes}}{\text{Memory BW}}\). The crossover is architecture- and implementation-dependent because activation traffic, input/output movement, cache reuse, and kernel fusion all change the effective bytes moved per inference.

As systems transition from Cloud to Edge to TinyML, available resources decrease dramatically. Table 6 quantifies this progression with concrete hardware examples: memory drops from 131 TB (cloud) to 520 KB (TinyML), a 250 million-fold reduction, while power budgets span nine orders of magnitude from megawatts to milliwatts9. This resource disparity is most acute on microcontrollers, the primary hardware platform for TinyML, where memory and storage capacities are insufficient for conventional ML models.

9 ML Hardware Cost Spectrum: AI infrastructure spans six orders of magnitude in cost, from $10 microcontrollers to multi-million-dollar GPU clusters. This million-fold range means deployment paradigm selection is simultaneously a physics decision and an economics decision. Even within individual-device choices, the same accuracy target may be achievable on a $2 microcontroller (via aggressive quantization) or a $30,000 GPU (at full precision), with fundamentally different latency, power, and operational cost profiles.

Table 6 grounds these paradigms in concrete hardware platforms and price points.

| Category | Example Device | Processor | Memory | Storage | Power | Price Range |

|---|---|---|---|---|---|---|

| Cloud ML | Google TPU v4 Pod | 4,096 TPU v4 chips, >1 EFLOP | 131 TB HBM2 | Cloud-scale (PB) | ~3 MW | Cloud service (rental) |

| Edge ML | NVIDIA DGX Spark | GB10 Grace Blackwell, 1 PFLOPS AI | 128 GB LPDDR5x | 4 TB NVMe | ~200 W | ~$3,000–5,000 |

| Mobile ML | Flagship Smartphone | Mobile SoC (CPU + GPU + NPU) | 8-16 GB RAM | 128 GB-1 TB | 2 to 5 W | USD 999+ |

| TinyML | ESP32-CAM | Dual-core @ 240 MHz | 520 KB RAM | 4 MB Flash | 0.05–1.2 W active board power | $10 |

These deployment paradigms emerged from decades of hardware evolution, from floating-point coprocessors in the 1980s through graphics processors in the 2000s to today’s domain-specific AI accelerators. Hardware Acceleration traces this historical progression and the architectural principles that drove it. Here, we focus on the consequences of this evolution: the deployment spectrum that results from having qualitatively different hardware available at different points in the infrastructure.

Each paradigm occupies a distinct region of the deployment spectrum, governed by the physical constraints (light barrier, power wall, memory wall) and quantified by the analytical tools (iron law, bottleneck principle) introduced earlier. The quantitative thresholds in Table 7 help practitioners determine which paradigm suits their workload. The following four sections progress from cloud to TinyML, tracing the gradient from maximum computational resources to maximum efficiency constraints.

| Paradigm | Compute | Memory BW | Power | Latency |

|---|---|---|---|---|

| Cloud ML | >1000 TFLOPS | >100 GB/s | MW class (PUE 1.1–1.3) | 100-500 ms |

| Edge ML | ~1 PFLOPS AI | >270 GB/s | 100 W class | 10-100 ms |

| Mobile ML | 15-45 TOPS | 60-100 GB/s | 2 to 5 W | 5-50 ms |

| TinyML | <1 TOPS | — | <1 mW always-on average target | 1-10 ms |

Each section follows a consistent structure: definition, key characteristics, benefits and trade-offs, and representative applications. This parallel treatment reveals both what distinguishes each paradigm and what principles they share, setting the stage for the hybrid architectures that combine them. We begin at the resource-rich end of the spectrum and progressively tighten the constraints.

Self-Check: Question

An application has a strict 30 ms end-to-end latency budget and must choose which operations can appear on its critical path. Using the section’s latency-table decision rule, which operation is automatically disqualified from the critical path regardless of what else happens?

- NPU inference at 5–20 ms.

- Cross-region network communication at 50–150 ms.

- Wake-word detection at 100 microseconds.

- Same-region network communication at 1–5 ms.

The same ResNet-50 model is compute-bound when trained on an A100 at batch 256 but memory-bound when used for single-image inference on the same A100. Explain why the dominant bottleneck flips despite the identical model and hardware, and what the optimization priorities must become in each phase.

ResNet-50 inference on a cloud A100 is only about an order of magnitude faster than on a mobile NPU in the worked example, even though the A100 has much higher peak compute and memory bandwidth. What explains the much smaller-than-expected cloud advantage?

- The A100 and the mobile NPU have similar compute throughput once INT8 quantization is enabled, so the peak-FLOPS gap is illusory.

- Batch-1 inference is memory-bandwidth-bound on both platforms, so the effective speedup tracks bytes moved through memory bandwidth rather than peak compute; the mobile case also uses INT8 weights, reducing the bytes it must move.

- The mobile NPU is compute-bound while the A100 is network-bound, so the bottlenecks are incomparable and no meaningful speedup exists.

- The A100 spends most of its batch-1 inference time on operating-system context switches and Python overhead, erasing its compute advantage.

In a pipelined inference server, one stage’s data-movement time exceeds the sum of all other stages’ compute times. Using the Bottleneck Principle, explain what happens to the accelerator’s realized throughput and utilization, and why adding a faster compute kernel does not fix the problem.

A team profiles batch-1 ResNet-50 inference and confirms memory-access time exceeds compute time on both cloud and mobile targets. Which next optimization aligns with the section’s memory-bound diagnosis?

- Double the accelerator’s peak FLOPS by moving to a newer GPU generation, leaving model precision and size unchanged.

- Apply INT8 weight quantization to shrink model bytes and cut the dominant data-movement term directly.

- Add more cross-region replicas so single-device memory pressure is distributed across the fleet.

- Enlarge the training dataset so the model learns a more efficient internal representation that uses less memory.

Cloud ML: Computational Power

Consider what it took to train GPT-3: 3,634 petaflop-days of computation, 10,000 GPUs running for approximately 15 days, consuming megawatts of power—at an estimated cost of ~$4.6M10. No smartphone, no edge server, no single machine on Earth could have performed this computation. Only a data center, with its virtually unlimited compute, memory, and storage, could aggregate enough resources to make this possible. This is the defining proposition of cloud ML: when latency can be tolerated, it offers computational scale that no other paradigm can match.

10 Large Language Model (LLM) Training Scale: GPT-3 required approximately 3,634 petaflop-days, 10,000 V100 GPUs, and an estimated $4.6M in compute at 2020 cloud rates. This scale illustrates the core cloud ML trade-off: only centralized infrastructure can aggregate enough \(R_{\text{peak}}\) for peta-scale training, but the resulting \(L_{\text{lat}}\) penalty (100–500 ms network round trip) makes that same infrastructure unsuitable for real-time inference.

11 Cloud as Utility Computing: The utility model allows providers to offer a specialized hardware portfolio that is economically infeasible for a single organization to maintain. This provides direct, on-demand access to the specific architectures required by each workload archetype: dense accelerator pods for Compute Beasts, HBM-equipped nodes for Bandwidth Hogs, and high-memory systems with fast interconnects for Sparse Scatter. A team can therefore rent a purpose-built, $10M+ supercomputing pod for a few hours rather than owning it.

Cloud ML aggregates computational resources in data centers11 to handle computationally intensive tasks: large-scale data processing, collaborative model development, and advanced analytics. This infrastructure serves as the natural home for three of the four workload archetypes: compute beasts like ResNet training that demand sustained TFLOPS across thousands of accelerators, bandwidth hogs like large language model inference that benefit from TB/s HBM bandwidth, and sparse scatter workloads like recommendation systems that require terabytes of embedding tables and high-bandwidth interconnects for all-to-all communication patterns.

Cloud deployments range from single-machine instances (workstations, multi-GPU servers, DGX systems) to large-scale distributed systems spanning multiple data centers. This book focuses on single-machine cloud systems, where the reader learns to build and optimize ML systems on individual powerful machines. Future studies can address distributed cloud infrastructure, where systems coordinate computation across multiple networked machines. This follows the principle of establishing foundations before adding complexity.

What unifies these diverse cloud workloads is a single defining trade-off:

Definition 1.2: Cloud ML

Cloud Machine Learning is the deployment paradigm that optimizes for Resource Elasticity by decoupling computational capacity from physical location.

- Significance (quantitative): It enables systems to scale resources \((R_{\text{peak}})\) proportional to workload variance, allowing for bursts of peta-flops that would be economically unfeasible to maintain locally.

- Distinction (durable): Unlike edge ML, which prioritizes data locality, cloud ML prioritizes computational density and centralized management.

- Common pitfall: A frequent misconception is that cloud ML is “unlimited compute.” In reality, it is constrained by the distance penalty \((L_{\text{lat}})\) and the ingestion bottleneck \((\text{BW})\), making it unsuitable for sub-10 ms real-time control loops.

Figure 3 breaks down cloud ML across several dimensions that define its computational paradigm. The Characteristics branch emphasizes centralization and dynamic scalability, which directly enables the Benefits of scalable data processing and global accessibility. This centralization, however, creates the Challenges of latency and internet dependence, shaping the kinds of Examples that thrive in the cloud: virtual assistants, recommendation systems, and fraud detection. The most fundamental of these challenges, network latency, is not an engineering limitation but a physics constraint. A quick calculation of the distance penalty after the figure makes this concrete.

Napkin Math 1.3: The distance penalty

Physics:

- Light in Fiber: ~200,000 km/s.

- Round-trip propagation: (1,500 km\(\times\) 2)/200,000 km/s = 15 ms.

- Result: The safety budget is already negative (-5 ms) before the model even starts its first calculation.

Systems insight: Physics has made cloud ML impossible for this application. The model must move to the Edge.

Cloud infrastructure and scale

Cloud ML aggregates computational resources in data centers at unprecedented scale. Figure 4 captures the physical scale behind this abstraction: Google’s Cloud TPU12 data center, where row upon row of specialized accelerators deliver petaflop-scale training throughput. Table 6 quantifies how cloud systems provide orders-of-magnitude more compute and memory bandwidth than mobile devices, at correspondingly higher power and operational cost. Modern cloud accelerator systems operate at petaflops to exaflops of peak reduced-precision throughput and require megawatt-scale facility power in large clusters. These facilities enable workloads that are impractical on resource-constrained devices, but their remote location introduces critical trade-offs: network round-trip latency of 100–500 ms eliminates real-time applications, and operational costs scale linearly with usage.

12 Tensor Processing Unit (TPU): A custom-built processor (ASIC) that delivers petaflop-scale throughput by hard-wiring its architecture for the matrix multiplication operations that dominate ML workloads. This extreme specialization trades general-purpose flexibility for a >10\(\times\) improvement in performance-per-watt compared to a general-purpose accelerator on the same ML task. The high cost of deploying these accelerators at data center scale is therefore only economical for massive, sustained ML computation.

The physical reality of petaflop-scale compute is visible in the infrastructure itself: a single facility floor houses thousands of accelerator chips organized into rows of liquid-cooled racks, each rack consuming kilowatts of power to sustain the aggregate throughput that no individual device can approach.

Cloud ML excels at processing massive data volumes through parallelized architectures, enabling training on datasets requiring hundreds of terabytes of storage and petaflops of computation—resources that remain impractical on constrained devices. The training techniques covered in Model Training and the hardware analysis in Hardware Acceleration explain how practitioners achieve this scale.

Beyond raw computation, cloud infrastructure creates deployment flexibility through cloud APIs, making trained models accessible worldwide across mobile, web, and IoT platforms. Shared infrastructure enables multiple teams to collaborate simultaneously with integrated version control, while pay-as-you-go pricing models13 eliminate upfront capital expenditure and scale elastically with demand.

13 Pay-as-You-Go Pricing: A cloud economic model where users pay for accelerator-hours consumed rather than hardware owned. Elastic pricing converts the fixed cost of idle \(R_{\text{peak}}\) into a variable cost proportional to actual utilization, but the inverse also holds: sustained 24/7 workloads (continuous inference serving) often cost 2–3\(\times\) more on cloud than equivalent on-premises hardware amortized over three years, a crossover that drives the total cost of ownership (TCO) analysis later in this section.

A common misconception holds that cloud ML’s vast computational resources make it universally superior. Exceptional computational power and storage do not automatically translate to optimal solutions for all applications. The Data Gravity Invariant in Napkin math: The physics of data gravity explains why: as data scales, the cost of moving it to compute \((C_{\text{move}}(D) \gg C_{\text{move}}(\text{Compute}))\) eventually dominates. The trade-offs listed in the preceding definition become concrete when we consider where edge and embedded deployments excel: real-time response with sub-10 ms decision-making in autonomous control loops, strict data privacy for medical devices processing patient data, predictable costs through one-time hardware investment vs. recurring cloud fees, or operation in disconnected environments such as industrial equipment in remote locations. The optimal deployment paradigm depends on specific application requirements rather than raw computational capability.

Cloud ML trade-offs and constraints

Cloud ML’s advantages carry inherent trade-offs that shape deployment decisions. Latency is the most consequential: network round-trip delays of 100–500 ms make cloud processing unsuitable for real-time applications requiring sub-10 ms responses, such as autonomous vehicles and industrial control systems. Unpredictable response times further complicate performance monitoring and debugging across geographically distributed infrastructure.

Privacy and security pose serious challenges for cloud deployment. Transmitting sensitive data to remote data centers creates vulnerabilities and complicates regulatory compliance. Organizations handling data subject to regulations like the General Data Protection Regulation (GDPR)14 or the Health Insurance Portability and Accountability Act (HIPAA)15 must implement comprehensive security measures including encryption, strict access controls, and continuous monitoring to meet stringent data handling requirements. Privacy-preserving ML techniques, including federated learning and differential privacy, address these challenges at the systems level.

14 GDPR (General Data Protection Regulation): The European privacy framework (2018) whose “Right to be Forgotten” provision creates a systems constraint unique to ML: deleting a user’s data may require retraining or fine-tuning any model that learned from it, because weight updates are not individually reversible. This transforms a legal requirement into a compute cost that scales with model size and retraining frequency.

15 HIPAA (Health Insurance Portability and Accountability Act): This US law translates the security measures from the context sentence—encryption, access controls, and monitoring—into direct systems-level costs like isolated compute, immutable logging for every inference, and end-to-end data encryption. These nonnegotiable safeguards are the source of the “stringent data handling requirements” and typically add 15–30 percent to infrastructure and operational overhead for a production ML system.

16 Total Cost of Ownership (TCO): This analysis quantifies the gap between sticker price and true system cost by including all direct and indirect costs (power, cooling, labor) over a system’s lifetime. The cloud vs. edge decision makes this explicit, trading high upfront capital expense (CapEx) for hardware against recurring operational expenses (OpEx) for cloud services. For an on-premise GPU, the initial purchase price is often only 30–40 percent of the three-year TCO, with the rest dominated by these operational costs.

Cost management introduces operational complexity requiring TCO16 analysis rather than naive unit comparisons. A worked cloud vs. edge TCO comparison illustrates the gap between sticker price and true system cost.

Napkin Math 1.4: Cloud vs. edge TCO

Cloud Implementation (illustrative public list pricing). Table 8 itemizes the annual GPU, network, load-balancer, and observability costs:

| Cost Component | Calculation | Annual Cost |

|---|---|---|

| GPU inference (A10G) | 4 instances \(\times\) 8,760 hrs \(\times\) $0.75/hr | ~$26,280 |

| Network egress | 100 GB/day \(\times\) 365 \(\times\) USD 0.09/GB | ~$3,285 |

| Load balancer | USD 0.025/hr + LCU charges | ~$3,723 |

| CloudWatch/logging | Monitoring, alerts | ~$2,000 |

| Total Cloud | ~$35,288/year |

Edge Implementation (On-premise NVIDIA T4 server). Table 9 itemizes the corresponding hardware, power, cooling, network, and labor costs:

| Cost Component | Calculation | Annual Cost |

|---|---|---|

| Hardware CAPEX | $15,000 server ÷ 3-year life | ~$5,000 |

| Power (24/7) | 300W \(\times\) 8,760 hrs \(\times\) $0.12/kWh | ~$315 |

| Cooling overhead | ~30 percent of power | ~$95 |

| Network (fiber) | Fixed line for remote management | ~$1,200 |

| DevOps labor | 0.1 FTE \(\times\) $150,000 salary | ~$15,000 |

| Total Edge | ~$21,610/year |

Break-even Analysis: Equation 7 determines when edge deployment becomes cost-effective. Edge Fixed Costs include hardware amortization and maintenance, Cloud Variable Cost per Unit is the per-inference cloud pricing, and Capacity is the maximum inference rate of the edge system: \[\text{Break-even utilization} = \frac{\text{Edge Fixed Costs}}{\text{Cloud Variable Cost per Unit} \times \text{Capacity}} \tag{7}\]

Under this steady-capacity scenario, edge reaches cost parity at roughly 612K inferences/day, or about 61% of the 1M/day operating point. At high, steady volume, edge wins by ~39%; below the crossover, cloud elasticity usually wins.

Systems insight: Edge TCO is dominated by labor (69%), not hardware. Organizations without existing DevOps capacity should factor in the full cost of maintaining on-premise infrastructure.

Unpredictable usage spikes complicate budgeting, requiring comprehensive monitoring and cost governance frameworks.

Network dependency creates a further constraint: any connectivity disruption directly impacts system availability, particularly where network access is limited or unreliable. Vendor lock-in compounds this problem, as dependencies on specific tools and APIs create portability challenges when transitioning between providers. Organizations must balance these constraints against cloud benefits based on their specific application requirements and risk tolerance.

Despite these trade-offs, cloud ML’s computational advantages make it indispensable for consumer applications operating at global scale.

Large-scale training and inference

Cloud ML’s computational advantages manifest most visibly in consumer-facing applications that require massive scale. Virtual assistants like Siri and Alexa illustrate the hybrid architectures that characterize modern ML systems: wake-word detection runs on dedicated low-power hardware (often sub-milliwatt) directly on the device, enabling always-on listening without draining batteries; initial speech recognition increasingly runs on-device for privacy and responsiveness; and complex natural language understanding and generation use cloud infrastructure for access to larger models and broader knowledge.

Economics drive this architecture as much as latency. Attempting to process voice interactions for billions of devices entirely in the cloud runs into both an economic and an infrastructure ceiling. Quantifying the voice assistant wall shows both limits at once.

Napkin Math 1.5: The voice assistant wall

Part 1: the economic wall

- Cloud cost: ~USD 0.50 per device/year → 1 B devices = USD 500,000,000/year. Economically prohibitive for a free feature.

- TinyML alternative: 0.1–1 mW local wake-word detection, <USD 0.01/year per device. Viable at any scale.

Part 2: the infrastructure wall

The economic argument is compelling, but the physics argument is decisive:

- Query volume: 1 B devices\(\times\) 20 queries/day = 20 billion queries/day.

- GPU demand: Each query requires ~200 ms of GPU time. Total: 1,111,111 GPU-hours/day.

- Data center capacity: A large data center (~10,000 GPUs) provides 240,000 GPU-hours/day.

- Average requirement: ~5 dedicated data centers just for voice inference.

- Peak reality: Queries cluster in waking hours (~4.5\(\times\) peak-to-average), requiring ~21 data centers at peak.

The bandwidth wall: Wake-word detection requires continuous audio monitoring. If devices streamed audio to the cloud (16 kHz, 16-bit), each transmits ~32 KB/s. Across 1 billion devices: 32 TB/s—a significant fraction of total global internet backbone capacity.

Systems insight: Cloud-only voice processing is not merely expensive; it is physically impossible at global scale. Local wake-word detection is an infrastructure necessity, not an optimization.

The voice assistant pipeline illustrates a core systems principle: deployment decisions are constrained by performance requirements, economic realities, and infrastructure physics. The hybrid approach reduces end-to-end latency relative to pure cloud processing while maintaining the computational power needed for complex language understanding, all within sustainable cost boundaries.

Recommendation engines deployed by Netflix and Amazon demonstrate another compelling application of cloud resources. These systems process massive datasets using collaborative filtering and deep learning architectures like the Deep Learning Recommendation Model (DLRM)17 to uncover patterns in user preferences. DLRM exemplifies a memory-capacity-bound workload: its massive embedding tables, representing millions of users and items, can exceed terabytes in size, requiring distributed memory across many servers just to store the model parameters. Cloud computational resources enable continuous updates and refinements as user data grows, with Netflix processing over 100 billion data points daily to deliver personalized content suggestions that directly enhance user engagement.

17 Deep Learning Recommendation Model (DLRM): Meta’s 2019 architecture that exemplifies the “Sparse Scatter” archetype. Embedding tables for production recommendation systems can exceed 100 TB, making DLRM constrained by memory capacity and communication \(\text{BW}\) rather than raw \(R_{\text{peak}}\). This inversion of the typical compute-bound assumption forces specialized cluster designs where memory, not arithmetic, is the scarce resource.

These applications share a common thread: they trade latency for scale, accepting hundreds of milliseconds of round-trip delay in exchange for access to computational resources that no other paradigm can provide. Fraud detection systems analyzing millions of transactions, recommendation engines processing terabytes of embedding tables, and language models generating text one token at a time all depend on this bargain. Yet as the voice assistant wall demonstrated, there exist applications where no amount of cloud compute can compensate for the physics of distance. When latency budgets drop below what the speed of light permits, or when data volumes exceed what networks can carry, the computation must move closer to the data source.

Self-Check: Question

Which statement most accurately captures the defining trade-off of the Cloud ML paradigm as framed in this chapter?

- Cloud ML trades latency tolerance for access to effectively unbounded centralized compute, memory, and storage — a bargain that fails precisely when the application cannot tolerate the round-trip time.

- Cloud ML is the right choice whenever privacy is not a regulatory requirement, because remote compute is always cheaper than local compute at any utilization level.

- Cloud ML is the best choice whenever a workload’s compute intensity exceeds local device limits, regardless of whether the latency budget is strict or relaxed.

- Cloud ML eliminates the need to reason about ingestion bandwidth and data movement, because the provider’s backbone makes capacity effectively free from the client’s perspective.

A robotic safety monitor has a 10 ms response budget and the nearest cloud data center is 1,500 km away. A proposal suggests ‘scale the cloud fleet 10× and the problem is solved.’ Using the light-barrier analysis, explain why no amount of cloud provisioning rescues this workload, and name the kind of investment that would actually help.

In the section’s worked cloud-vs-edge TCO example at roughly one million inferences per day, what is the most important engineering lesson for choosing where to deploy?

- Edge is always cheaper because hardware amortization dominates every other cost line.

- Cloud always wins because operational labor on cloud is negligible next to GPU rental.

- At sustained high utilization, edge compute can be cheaper per inference, but operational labor (DevOps, updates, monitoring) often dominates edge TCO enough that minimizing hardware spend alone is a misleading objective.

- Model accuracy is the main determinant of TCO, because higher accuracy reduces the number of servers needed.

The section’s ‘Voice Assistant Wall’ argument concludes that cloud-only voice processing is infeasible at global scale. Which pair of reasons captures the core argument?

- Speech models cannot be trained in the cloud quickly enough to keep up with new device launches.

- Both the annual cloud cost and the aggregate data-center plus bandwidth capacity required become prohibitive when billions of always-listening devices continuously rely on remote processing — the scaling is economic and infrastructural.

- Wake-word detection accuracy always degrades when the model is not co-located on the device.

- Mobile operating systems forbid persistent network connections for audio streaming.

True or False: Because Cloud ML offers effectively unbounded compute and storage, it is the universally best deployment paradigm for any team that can afford it.

Edge ML: Latency and Privacy

When latency budgets drop below 100 ms, cloud infrastructure hits a hard physical wall. The Distance Penalty means the speed of light alone imposes minimum latencies of 40–150 ms for cross-region requests—before any computation begins. When an autonomous vehicle needs to decide whether to brake, or an industrial robot needs to stop before hitting an obstacle, 100 ms is an eternity. The logical engineering response is to move the computation closer to the data source.

Edge ML emerged from this constraint, trading unlimited computational resources for sub-100 ms latency and local data retention. In archetype terms, edge deployment transforms the optimization target: a bandwidth hog workload like LLM inference that is memory bound in the cloud becomes latency-bound at the edge, where the 50–100 ms network penalty dominates the 10–20 ms compute time. Edge hardware with sufficient local memory can eliminate this penalty entirely, shifting the bottleneck back to the underlying memory bandwidth constraint. Recall the iron law from Equation 6: by processing locally, edge deployment eliminates the \(D_{\text{vol}}/\text{BW}_{\text{IO}}\) (network I/O) term entirely, collapsing the latency to \(\max(D_{\text{vol}}/\text{BW}, O/(R_{\text{peak}} \cdot \eta_{\text{hw}})) + L_{\text{lat}}\)—the same memory-vs.-compute trade-off, but without the network penalty that dominates cloud inference.

This paradigm shift is essential for applications where cloud’s 100–500 ms round-trip delays are unacceptable. Autonomous systems requiring split-second decisions and industrial IoT18 applications demanding real-time response cannot tolerate network delays. Similarly, applications subject to strict data privacy regulations must process information locally rather than transmitting it to remote data centers. Edge devices (gateways and IoT hubs) occupy a middle ground in the deployment spectrum, maintaining acceptable performance while operating under intermediate resource constraints.

18 Industrial IoT (IIoT): A domain where latency constraints are set by physical safety, not user perception. The 100+ ms round-trip delay mentioned is intolerable for a robotic arm that must halt within 5 ms of detecting a human. This forces computation to the edge, trading near-zero network latency for significant on-device compute \((R_{\text{peak}})\) constraints.

This locality-first trade-off defines the edge paradigm.

Definition 1.3: Edge ML

Edge Machine Learning is the deployment paradigm optimized for Latency Determinism and Data Locality by locating computation physically adjacent to data sources.

- Significance (quantitative): It circumvents the Distance Penalty \((L_{\text{lat}})\) of the cloud, trading elastic scale for a fixed Local Compute Capacity \((R_{\text{peak}})\).

- Distinction (durable): Unlike Cloud ML, which prioritizes Throughput, edge ML prioritizes Determinism and privacy. Unlike TinyML, edge ML may still use workstation-class accelerators (GPGPUs).

- Common pitfall: A frequent misconception is that edge ML refers to a specific hardware class. In reality, it is a Location Paradigm: it spans from IoT gateways to on-premise servers, unified by physical proximity to the data source.

Figure 5 organizes these trade-offs into four operational dimensions. The Characteristics branch highlights decentralized processing, which drives the key Benefit of reduced latency. This trade-off, however, introduces Challenges in maintenance and security, as the physical hardware is distributed and harder to secure than a centralized data center.

The benefits of lower bandwidth usage and reduced latency become stark when we examine real-world data rates. The defining characteristic of edge deployment is less about where processing occurs than about how much data that location must handle. When the data rate exceeds available network capacity, the resulting bandwidth bottleneck forces processing to the edge regardless of other considerations.

Napkin Math 1.6: The bandwidth bottleneck

Physics:

- Raw data rate per camera: 1920 \(\times\) 1080 \(\times\) 3 bytes\(\times\) 30 FPS ≈ 187 MB/s.

- Total data rate: 100 cameras\(\times\) 187 MB/s = 18.7 GB/s.