Sustainable AI

Purpose

Why does energy consumption determine what machine learning systems can exist, not just what they cost to operate?

Power is not merely an operational expense but a hard physical constraint that limits what can be built. A data center has a fixed power budget determined by its electrical infrastructure and cooling capacity; exceeding that budget is not expensive but impossible. Training runs that require more power than available cannot happen regardless of budget. Deployment locations are constrained by grid capacity and cooling feasibility, not just real estate prices. At frontier scale, the question shifts from “can we afford this” to “can this physically exist”—and the answer increasingly depends on energy efficiency rather than algorithmic capability. The organizations pushing machine learning forward are those that treat energy as a first-class engineering constraint alongside accuracy and latency, because sustainability is not about virtue but about the physics that determines which ambitious systems can actually be built and operated (Lam et al. 2023; Kurth et al. 2023).

Learning Objectives

- Explain the sustainability paradox where AI compute growth outpaces hardware efficiency gains

- Analyze how Jevons Paradox causes efficiency improvements to increase total resource consumption

- Calculate Power Usage Effectiveness (PUE) and lifecycle carbon footprints across training, inference, and manufacturing, differentiating operational emissions from embodied carbon

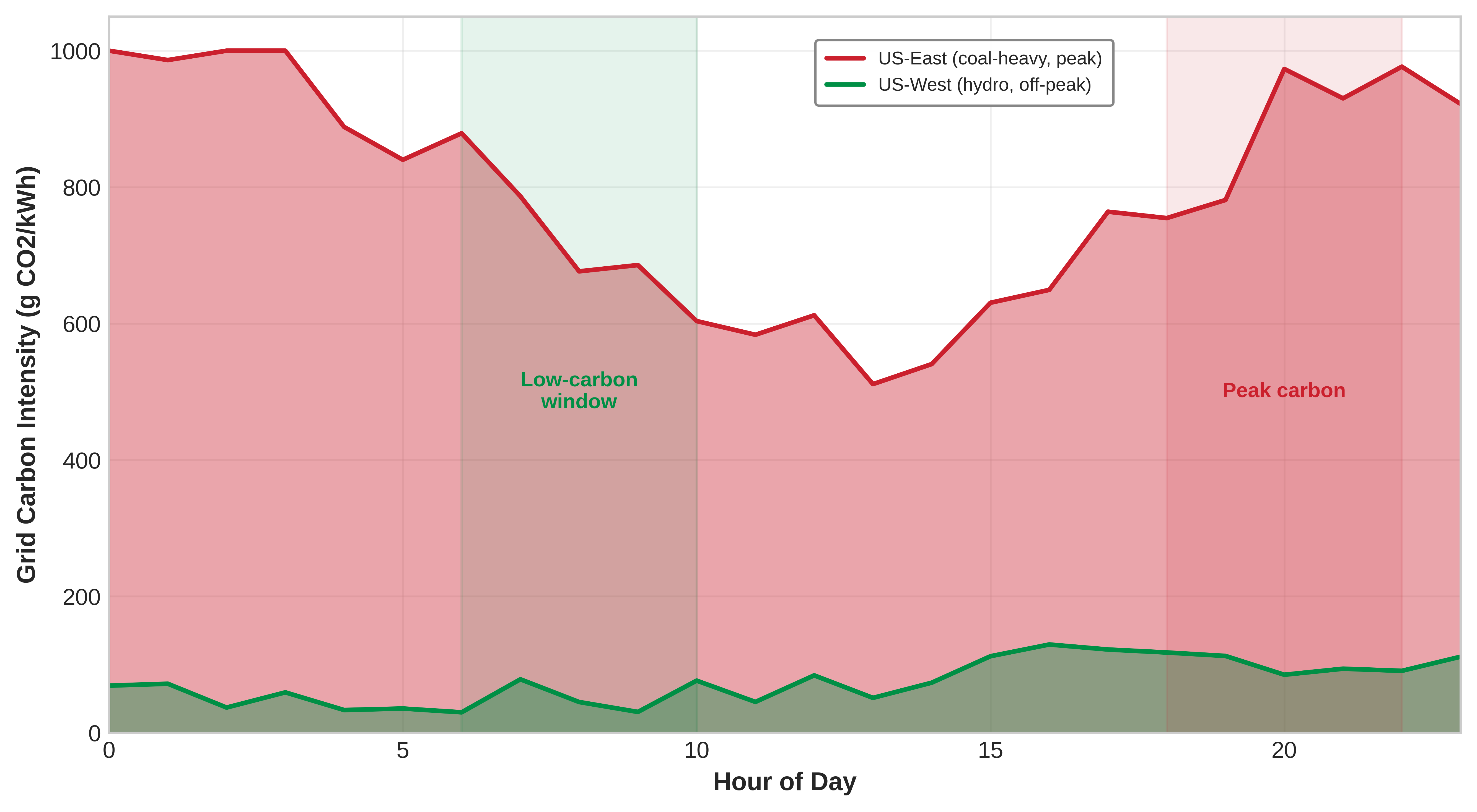

- Analyze geographic and temporal factors affecting carbon intensity and apply these insights to workload scheduling decisions

- Evaluate algorithmic optimizations (pruning, quantization, knowledge distillation) and edge deployment for accuracy-energy trade-offs and lifecycle sustainability

- Design carbon-aware scheduling that uses renewable energy and grid intensity to cut emissions 50–80 percent while meeting performance requirements

- Critique carbon offsets vs. actual emissions reductions and synthesize multi-layer plans integrating algorithmic, infrastructure, and policy levers

This chapter’s position in the book’s organizing framework, the fleet stack, clarifies why energy and environmental constraints are not external concerns but physical limits that bound what the entire system can achieve.

Systems Perspective 1.1: Fleet stack connection

The Energy Ceiling

When an engineer optimizes a database query to save 100 milliseconds, it is considered standard performance tuning. When that same query is executed billions of times a day across a global data center, however, that 100-millisecond savings translates to megawatts of electrical power and tons of avoided carbon emissions (Lacoste et al. 2019). Sustainable AI ceases to be a theoretical ethical concern once we recognize that power density is the absolute physical ceiling on data center computational capacity; energy is the ultimate currency of machine learning.1

1 Joule: The SI unit of energy (1 J = 1 Watt-second). To ground the scale of the fleet: a single A100 GPU at peak load consumes ~400 Joules every second. Large-model training runs are measured in billions to trillions of joules, so small per-operation inefficiencies scale into facility-level energy demand.

Security (Security & Privacy) protects ML systems from adversarial threats. Robustness (Robust AI) ensures they perform reliably under distribution shift. This chapter addresses a third operational concern that determines long-term viability: the resource constraints that govern whether systems remain economically and environmentally sustainable at scale.

Contemporary machine learning applications operate at industrial scales, with environmental impact now comparable to established heavy industries. Training a single frontier AI model can consume as much electricity as roughly 122 US homes do in an entire year. The exponential growth trajectory of computational demands outpaces efficiency improvements in underlying hardware by orders of magnitude, establishing the sustainability paradox in artificial intelligence (Sevilla et al. 2022). This chapter formalizes these constraints into an engineering discipline: Sustainable AI.

Definition 1.1: Sustainable AI

Sustainable AI is the systems engineering practice of measuring and optimizing the full environmental cost of ML systems (energy, water, and embodied carbon across training, inference, and hardware manufacturing) and incorporating those costs as explicit constraints in architecture decisions alongside performance and accuracy objectives (Lannelongue et al. 2021).

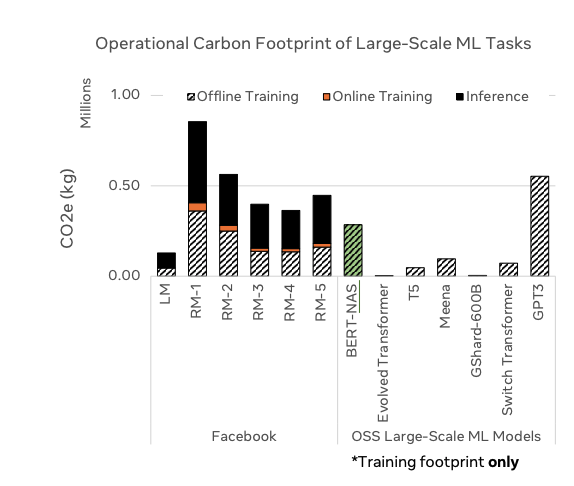

- Significance (quantitative): Training GPT-3 consumed approximately 1,287 MWh of energy (Li 2020), equivalent to roughly 122 US household-years of electricity. Fine-tuning a pretrained model on domain data consumes roughly 1–5 percent of full training cost, making transfer learning a 20–100\(\times\) more energy-efficient path to the same capability. At inference scale, a 175B-parameter model serving 10M queries/day at 100 ms per query can consume more cumulative energy in months of production service than its training, making inference optimization the dominant sustainability lever at production scale.

- Distinction (durable): Unlike corporate sustainability reporting (which aggregates energy usage into annual CO2 disclosures), sustainable AI engineering operates at the individual workload level—selecting hardware based on FLOP/watt efficiency, scheduling training during periods of high renewable availability, and choosing model architectures that minimize inference FLOPs rather than simply maximizing accuracy.

- Common pitfall: A frequent misconception is that switching to renewable energy solves the sustainability problem. For hardware-intensive ML, embodied carbon (the carbon emitted manufacturing the chips, servers, and cooling equipment before they ever run a training job) often equals or exceeds operational carbon; over 50 percent of an edge device’s lifecycle carbon can come from manufacturing, making hardware longevity and utilization rate as important as energy source.

The environmental impact of AI systems spans the complete lifecycle: from semiconductor manufacturing and data center construction to model training, inference deployment, and electronic waste (Jones et al. 2021). Treating this full lifecycle as an engineering problem rather than a corporate responsibility exercise transforms sustainability from a vague objective into a measurable engineering requirement. Before we can optimize this massive footprint, however, we must ground our intuition by calculating the raw physical energy required to produce frontier machine intelligence.

Checkpoint 1.1: The energy of intelligence

A 175B parameter model requires approximately \(3.14 \times 10^{23}\) FLOPs to train. Assuming a data center PUE of 1.1 and an end-to-end realized training efficiency of 50 GFLOPs/Watt:

- Calculate the total energy consumption in megawatt-hours (MWh).

- If the average US household consumes 10.6 MWh per year, how many “household-years” does this single training run represent?

- Discuss whether this metric captures the true environmental cost, considering the difference between energy consumption (MWh) and carbon intensity (g CO2/kWh).

The measurement, modeling, and mitigation frameworks presented in this chapter represent essential engineering competencies alongside traditional performance optimization. Mastering them requires understanding the scale of the problem, the physics that constrain solutions, and the system-level interventions that move the needle.

The scale of environmental impact

The numbers become visceral when translated into familiar physical quantities. To appreciate the scale of the problem, translate a single frontier training run into emissions and a familiar travel comparison.

Napkin Math 1.1: The carbon cost of training

Math:

- Energy: 1,287 MWh = 1,287,000 kWh.

- Carbon intensity (US average): \(\approx\) 0.429 kg CO\(_2\)/kWh.

- Total Emissions: \(1,287,000 \times 0.429 \approx\) 552,123 kg CO\(_2\).

- Comparison:

- One passenger, NY to London (round trip): \(\approx 1,000 \text{ kg CO}_2\).

- Ratio: 552,123 / 1,000 = 552 flights.

Systems insight: A single training run emits as much carbon as hundreds of trans-Atlantic passenger round trips. Optimization matters. Moving this job to a hydro-powered region (0.02 kg/kWh) would reduce emissions by 21\(\times\) to about 25 passenger round trips.

That arithmetic shows the carbon cost of one run; at frontier scale, the next constraint is whether enough power can be delivered to the cluster at all.

Lighthouse 1.1: Archetype A (GPT-4/Llama-3): The energy wall

AI systems consume resources at industrial scales that rival traditional heavy industries.

Napkin Math 1.2: Automated carbon-aware scheduling (Tier 3 optimizer)

Solution: We invoke the PlacementOptimizer to synthesize grid carbon intensity, regional electricity rates, and the carbon tax into a single optimization objective.

Result: The optimizer evaluates the design space and selects the global minimum:

- Optimal Region: Quebec

- Total Projected Cost: USD 0.79 million (Including carbon penalty)

Systems insight: In a pure energy-cost-only model, engineers might choose the region with the lowest raw electricity rate. However, once a carbon tax is introduced, the Externalities Wall becomes a first-class economic constraint. The optimizer proves that the hydro-powered grid in Quebec is the most cost-effective choice, as the massive carbon savings more than offset any marginal difference in electricity pricing.

That optimization result rests on a simpler physical fact: identical energy demand produces radically different emissions depending on the grid that supplies it.

Napkin Math 1.3: The geography of carbon

- Site A (Quebec): Hydropower, 20 g \(\text{CO}_2\)/kWh.

- Site B (Poland): Coal-heavy, 800 g \(\text{CO}_2\)/kWh. How does the location affect a model’s carbon footprint?

Math: Carbon = Energy \(\times\) Grid Intensity.

- Site A Emissions: 10,000,000 kWh \(\times\) 20 g = 200,000,000 g = 200 tonnes \(\text{CO}_2\).

- Site B Emissions: 10,000,000 kWh \(\times\) 800 g = 8,000,000,000 g = 8,000 tonnes \(\text{CO}_2\).

- Ratio: 8,000/200 = 40\(\times\) difference.

Systems insight: Site selection is the single most effective tool for sustainable AI. A 40\(\times\) difference in carbon emissions is larger than any possible algorithmic speedup. In the Machine Learning Fleet, Carbon-Aware Scheduling (moving nonurgent jobs to low-carbon regions or hours) is a first-class operational competency. Efficiency extends beyond FLOPs to the Carbon-Intensity of those FLOPs.

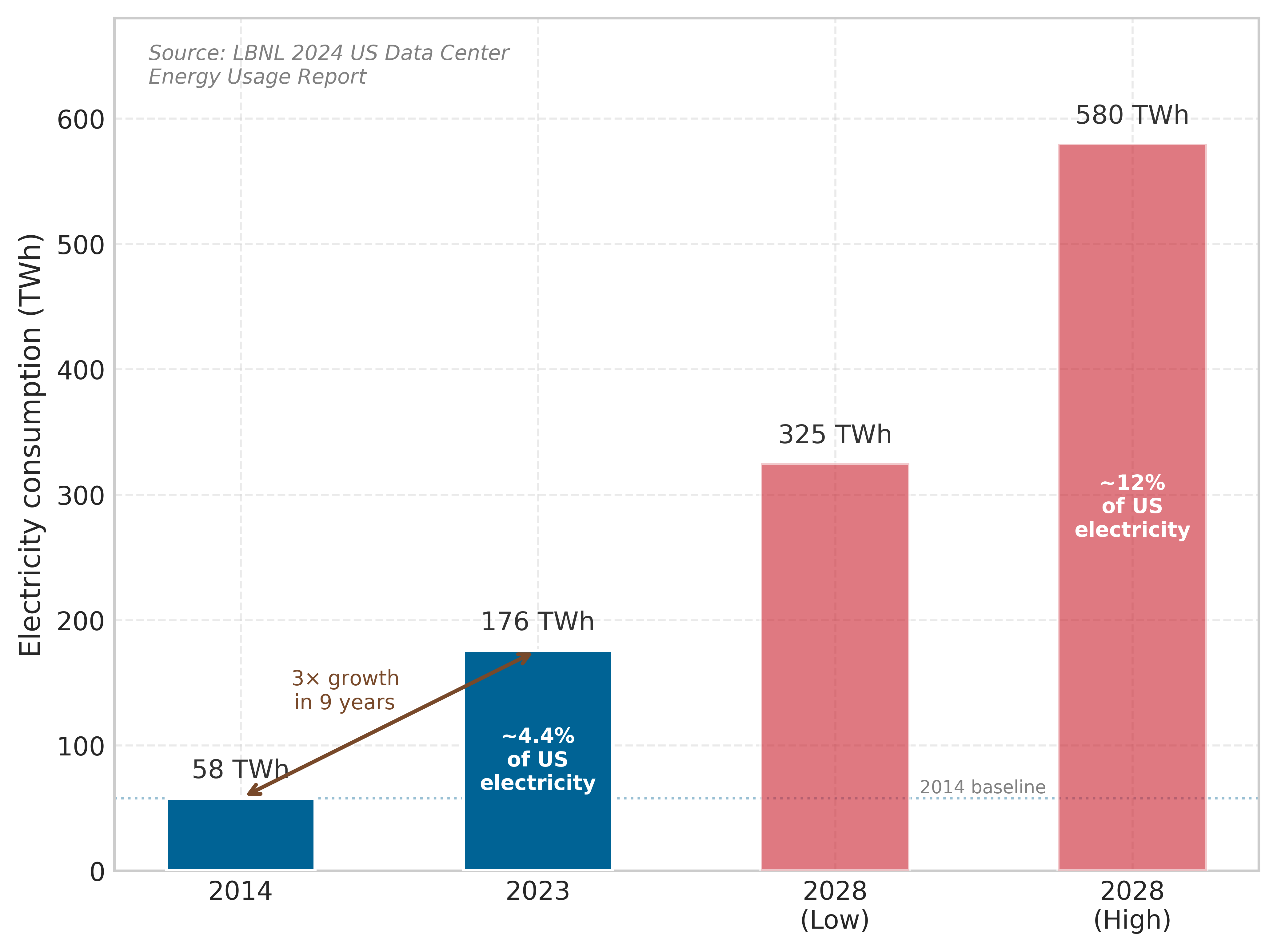

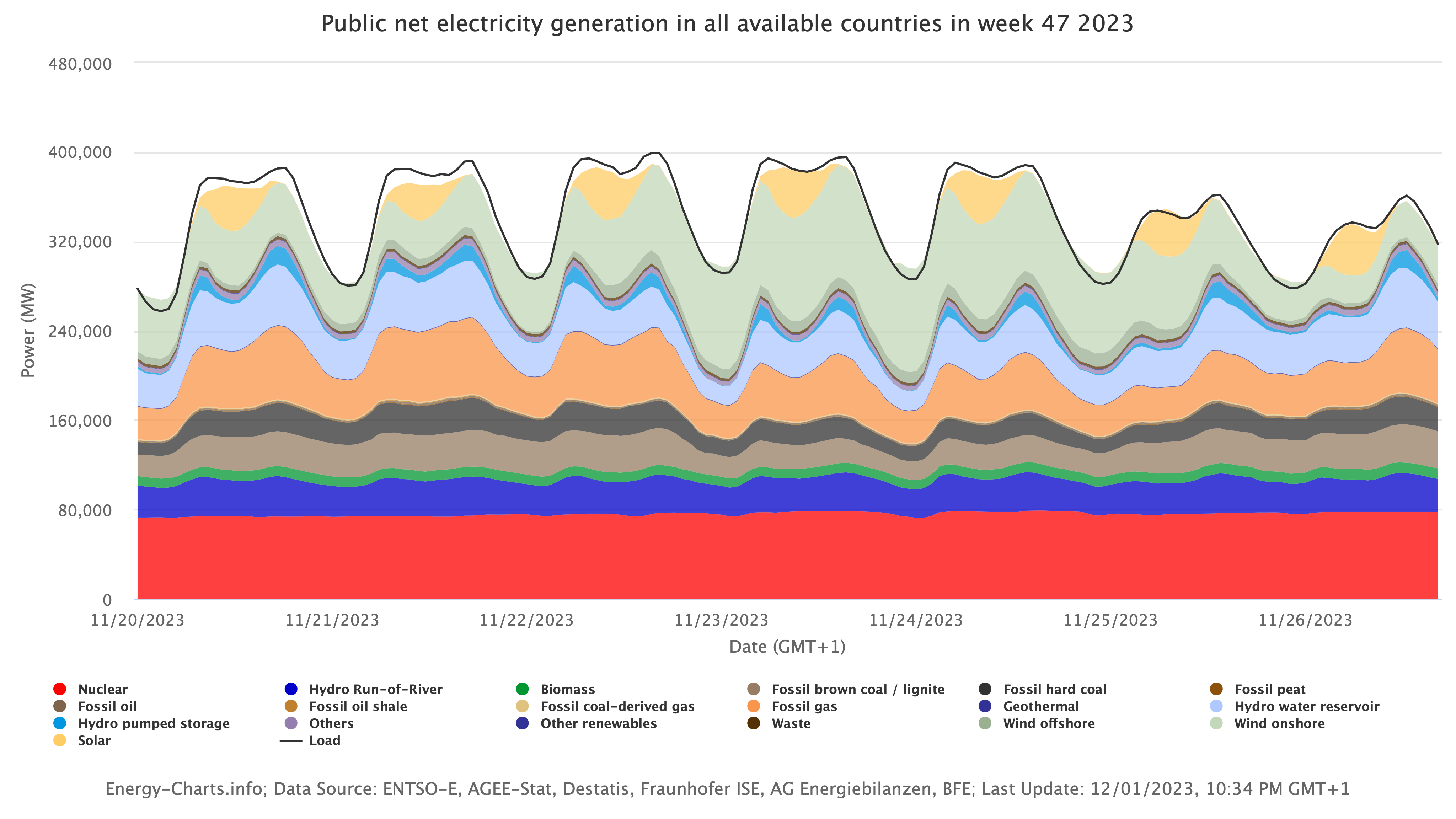

Training a single large language model consumes thousands of megawatt-hours of electricity, equivalent to powering hundreds of households for months.2 IEA projects global data-center electricity consumption to reach about 945 TWh by 2030, just under 3 percent of global electricity demand, with AI-accelerated servers driving much of the growth.3 Computational demands increased 350,000\(\times\) from 2012 to 2019 (Schwartz et al. 2020), while hardware efficiency improved at a far slower rate, creating an unsustainable growth trajectory.

2 Household Energy Baseline: The average U.S. household consumes 10,500 kWh annually. GPT-3’s verified 1,287 MWh training run equals 122 households’ annual electricity, and later frontier-scale runs can require substantially more compute. This comparison anchors an otherwise abstract energy figure to physical infrastructure: a single training run draws more grid capacity than a residential neighborhood.

3 Data Center Industrial Scale: IEA’s 2025 Energy and AI analysis projects data centers to consume about 945 TWh of electricity by 2030, just under 3 percent of global electricity demand. This is an electricity-demand metric, not directly comparable to aviation or cement shares of global emissions, but it still means AI infrastructure competes for grid capacity with heavy industry: regions that cannot expand power generation cannot expand AI deployment, regardless of demand.

4 GPU Manufacturing Embodied Carbon: A single H100 GPU embodies 150–200 kg CO2 from fabrication before computing its first FLOP. Advanced-node manufacturing also requires substantial ultrapure water, specialty gases, chemicals, and high-temperature process steps. This embodied cost means that in clean-grid regions (hydro, nuclear), manufacturing emissions can rival or exceed operational carbon, making hardware longevity and circular economy reuse critical sustainability levers.

5 AI Hardware E-Waste: Global e-waste reached 53.6 million metric tons in 2019, with computing equipment contributing 15 percent. AI accelerators compound this: 3-5 year obsolescence cycles driven by rapidly advancing architectures mean that a fleet of 10,000 GPUs generates 10–20 metric tons of toxic e-waste per refresh cycle, containing lead, mercury, and cadmium requiring specialized disposal.

Beyond direct energy consumption, AI systems drive environmental impact through hardware manufacturing and resource consumption. Training and inference workloads depend on specialized processors that require rare earth metals whose extraction and processing generate pollution.4 The growing demand for AI applications accelerates electronic waste production, with global e-waste reaching 54 million metric tons annually (Forti et al. 2020; Baldé et al. 2017). AI hardware rapidly becomes obsolete due to accelerating performance requirements.5

Addressing these environmental challenges demands a coordinated response across technical, policy, and ethical dimensions to ensure AI development remains viable and responsible.

Environmental impact and ethical foundations

When training a single language model consumes electricity equivalent to hundreds of homes annually, urgent questions arise about who benefits from AI progress and who bears its ecological costs. The intersection of exponential computational demands with finite planetary resources demands that the field confront difficult choices about sustainable development pathways balancing innovation with environmental responsibility.

Environmental justice and responsible development

The environmental impact of AI creates ethical responsibilities that extend beyond technical optimization. Environmental sustainability emerges as a critical component of trustworthy AI systems, extending the responsible AI principles examined in Responsible Engineering to include ecological stewardship (Vinuesa et al. 2020). The computational resources required for AI development concentrate environmental costs on specific communities while distributing benefits unequally across global populations. Data centers consume on the order of a few percent of global electricity and substantial water for cooling (Andrae and Edler 2015; Jones 2018), often in regions where energy grids rely on fossil fuels and water resources face stress from climate change.

6 Environmental Justice in Data center Siting: Data centers gravitate toward low-cost land and electricity, which often means economically disadvantaged areas. The result is an asymmetric externality: communities hosting AI infrastructure bear water depletion, heat island effects, and grid strain, while economic benefits concentrate in distant tech hubs. For ML systems engineers, this creates a design constraint: site selection must factor in social license alongside grid carbon intensity, because community opposition can block or delay facility expansion.

Geographic concentration of environmental burden creates questions of environmental justice that align with broader responsible AI frameworks.6 Fairness considerations require examining who benefits from AI systems and who bears their risks; environmental responsibility demands understanding who pays the ecological costs of AI advancement. Communities hosting AI infrastructure bear disproportionate environmental burdens while having limited access to AI’s economic benefits, exemplifying the need to extend ethical AI frameworks beyond algorithmic fairness to encompass environmental stewardship.

Exponential growth vs. physical constraints

Exponential growth in computational demands challenges the long-term sustainability of AI training and deployment. Over the past decade, AI systems have scaled faster than any prior computing workload, with compute requirements increasing 350,000\(\times\) from 2012 to 20197 (Schwartz et al. 2020). This trend continues as machine learning systems prioritize larger models with more parameters, larger training datasets, and higher computational complexity. Sustaining this trajectory poses sustainability challenges, as hardware efficiency gains fail to keep pace with rising AI workload demands.

7 AI Compute Growth Rate: The 350,000\(\times\) increase from 2012 to 2019 implies a doubling time of approximately 4.6 months, roughly 5.3\(\times\) faster than Moore’s Law’s 2-year doubling. This divergence is the root cause of the energy wall: no physically realizable improvement in silicon efficiency can match a doubling cadence measured in months, making algorithmic efficiency and carbon-aware scheduling the only viable sustainability levers at scale.

8 Moore’s Law: Gordon Moore’s 1965 observation that transistor density doubles every two years drove 60 years of “free” efficiency gains for the semiconductor industry. At 3nm process nodes, physical limits are ending this trajectory: individual atoms become the constraint. For AI sustainability, the end of Moore’s Law means that future efficiency gains must come from architectural specialization and algorithmic optimization rather than process shrinks.

9 Dennard Scaling: Robert Dennard observed in 1974 that smaller transistors could operate at constant power density by reducing voltage proportionally. This ended around 2005 when leakage current made further voltage reduction impractical. The consequence for AI sustainability is direct: without Dennard scaling, each new process node no longer delivers proportional power savings, forcing the shift to specialized accelerators—GPUs and Tensor Processing Units (TPUs)—that achieve efficiency through architectural parallelism rather than transistor physics.

Historically, computational efficiency improved with advances in semiconductor technology. Moore’s Law predicted that the number of transistors on a chip would double approximately every two years, leading to continuous improvements in processing power and energy efficiency.8 However, Moore’s Law is now reaching core physical limits, making further transistor scaling difficult and costly. Dennard scaling, which once ensured that smaller transistors would operate at lower power levels, has also ended, leading to stagnation in energy efficiency improvements per transistor.9

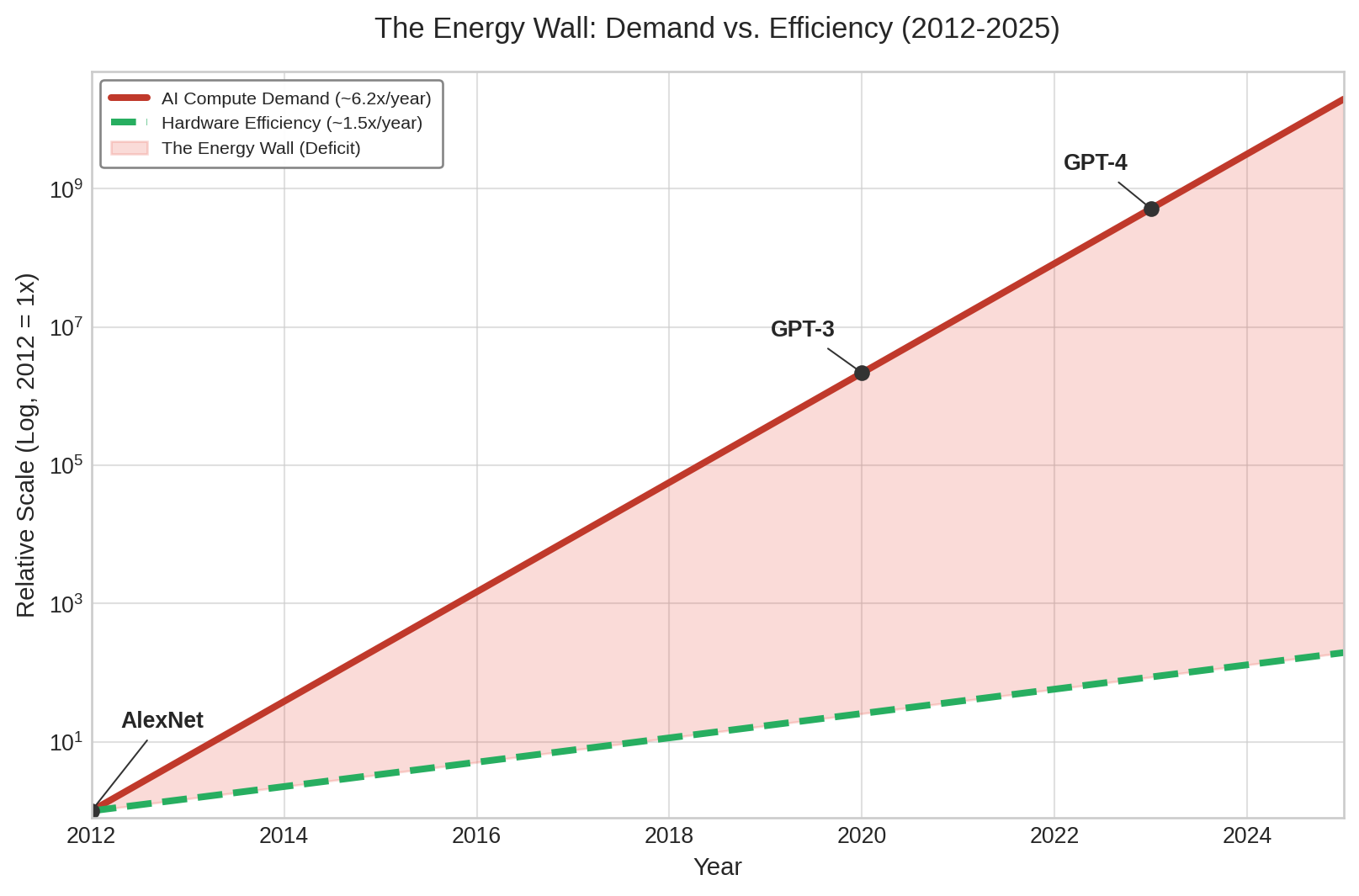

While AI models continue to scale in size and capability, the hardware running these models no longer improves at the same exponential rate. As Figure 1 illustrates, this growing divergence between computational demand and hardware efficiency creates an unsustainable trajectory where AI consumes ever-increasing amounts of energy. This technical reality underscores why sustainable AI development requires coordinated action across the entire systems stack, from individual algorithmic choices to infrastructure design and policy frameworks.

To make the uncertainty visible, Figure 2 shows high-growth sensitivity scenarios for data center electricity usage rather than the IEA baseline forecast above. The spread between best, expected, and worst cases illustrates how strongly the outcome depends on efficiency improvements and demand growth assumptions.

The energy wall: Divergent scaling

Figure 1 framed the energy wall as a divergence between compute demand and silicon efficiency. This section reframes the same divergence against a different ceiling, the physical energy infrastructure (battery density and grid efficiency), to show that the wall persists even if every accelerator hit its theoretical efficiency limit. AI sustainability presents a unique engineering challenge because it is a race between two fundamentally different physics: the exponential scaling of logic and the linear scaling of energy infrastructure.

As Figure 3 shows, AI compute grew ~350,000\(\times\) over the 2012–2019 period cited above while battery density and grid efficiency improve at only ~2–5 percent annually.

While AI logic follows the “iron law” of software optimization, energy follows the laws of chemistry and thermodynamics. Over the same seven-year interval, battery energy density would improve by only ~41 percent at a 5 percent annual rate, and grid efficiency by ~15 percent at a 2 percent annual rate. The 248,738\(\times\) gap between these curves is the Sustainability Wall—the point where we can no longer “buy our way out” of the efficiency problem with more power.

Data center grid dynamics

Sustainable AI requires looking beyond the server rack to the Electrical Grid Interface. Traditional data centers are “Steady-State” loads; they pull constant power 24/7. ML training clusters, however, are Transient Loads.

The power ramp and grid stability

As discussed in Power delivery, a 10,000-GPU cluster can swing its load by 5–10 megawatts in milliseconds during an AllReduce synchronization step. For an electrical utility, this is a noise event. When thousands of GPUs suddenly stop computing to wait for the network, they cause a voltage spike on the grid; when they resume, they cause a voltage sag. Managing these transients requires Energy Buffering: using on-site battery arrays or massive capacitors to smooth the training iterations, ensuring the ML Fleet does not destabilize the local municipal power grid.

Heat reuse: Turning waste into fuel

A data center is physically a system that converts high-quality energy (electricity) into low-quality energy (waste heat). In a sustainable fleet, this heat is not exhausted into the atmosphere but harvested. * District Heating: Modern facilities in Nordic regions (for example, Meta’s Odense facility) pipe waste heat into local municipal heating systems, providing enough thermal energy to warm thousands of homes. * Industrial Coupling: Using low-grade waste heat (~45°C) for greenhouse climate control or water desalination.

By treating heat as a byproduct rather than a pollutant, the fleet moves toward a circular energy economy10 (Un and Forum 2019).

10 PUE (Power Usage Effectiveness): In the early 2000s, PUE values of 2.0-2.5 were common, meaning more power went to cooling than to computing (Grid 2007). Google’s 2009 disclosure of PUE 1.21 proved that free-air cooling could halve data center overhead. The shift from PUE to CUE (Carbon Usage Effectiveness) and WUE (Water Usage Effectiveness) reflects a systems-level insight: optimizing watts alone is insufficient when water and carbon constraints bind independently.

11 GPT-3 Energy Scale: GPT-3’s 1,287 MWh training cost translates to roughly $130,000 in US electricity and 552 metric tons of CO2 at average grid intensity. The energy-per-parameter ratio of approximately 7.35 MWh per billion parameters reveals the co-design opportunity: optimized architectures using mixed precision and sparsity achieve sub-1 MWh per billion parameters, a several-fold efficiency gain that compounds across frontier-scale training runs.

12 Training Communication Overhead: Distributed training adds 15–30 percent energy overhead beyond raw computation due to gradient synchronization and checkpointing across nodes. For frontier models requiring thousands of GPUs, this communication tax alone can consume more energy than the entire training run of a mid-scale model, making parallelism strategy selection a first-order sustainability decision.

Training complex AI systems demands high levels of computing power, resulting in significant energy consumption. OpenAI’s GPT-3 exemplifies this scale: training required 1,287 megawatt-hours of electricity, equivalent to powering roughly 122 US homes for an entire year11 (Maslej et al. 2023). This energy consumption reflects the computation required to train modern large language models on large datasets.12

The scale of energy consumption makes efficiency improvements an engineering imperative. Generative AI models have proliferated in recent years, with each generation trained at larger parameter counts.

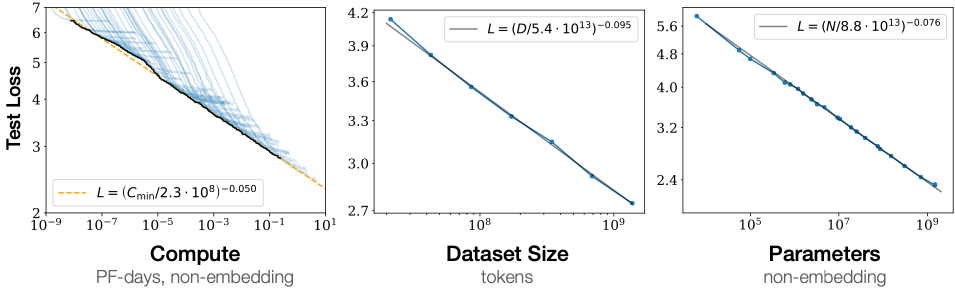

Research shows that increasing model size, dataset size, and compute used for training improves performance smoothly with no signs of saturation (Kaplan et al. 2020). Figure 4 demonstrates that test loss decreases predictably as each of these three factors increases, with no apparent ceiling in sight. Beyond training, AI-powered applications such as large-scale recommender systems and generative models require continuous inference at scale, consuming energy even after training completes. As AI adoption grows across industries from finance to healthcare to entertainment, the cumulative energy burden of AI workloads continues to rise, raising concerns about the environmental impact of widespread deployment.

Beyond electricity consumption, the sustainability challenges of AI extend to hardware resource demands and the energy efficiency limitations of current architectures. Different processor types affect environmental impact through their energy characteristics. Using pJ/FLOP as a common comparison point, central processing units consume approximately 100 pJ/FLOP, graphics processing units achieve roughly 10 pJ/FLOP for dense tensor operations, specialized tensor processors reach about 1–2 pJ/FLOP, and fixed-function low-precision accelerators approach 0.1 pJ/operation.13 These hardware platforms require rare earth metals and complex manufacturing processes with embodied carbon.

13 pJ/FLOP and pJ/MAC: Energy-efficiency specifications often mix floating-point operations and multiply-accumulate operations. One MAC performs a multiply and an add, so direct comparisons require converting the unit convention and precision. The simplified hierarchy used here aligns with Table 2: CPUs at roughly 100 pJ/FLOP, GPUs around 10 pJ/FLOP for dense tensor operations, TPUs around 1–2 pJ/FLOP, and custom low-precision ASICs approaching 0.1 pJ/operation. This hierarchy defines the sustainability opportunity: choosing the right hardware tier for a given workload can reduce energy consumption by 100–1,000\(\times\) without any algorithmic changes.

The production of AI chips is energy-intensive, involving multiple fabrication steps that constitute a major portion of Scope 3 emissions in the overall AI system lifecycle. As model sizes continue to grow, the demand for AI hardware increases, exacerbating the environmental impact of semiconductor production and disposal.

Theoretical efficiency limits as a sustainability model

To understand the scale of AI’s energy challenge, it helps to compare current systems with the theoretical limits of computational efficiency. Modern large language models (LLMs) operate with an energy efficiency gap of \(10^6\times\) compared to the most efficient known physical implementations of pattern recognition and reasoning. This disparity establishes a “Sustainability Wall” where industrial-scale energy infrastructure is required to achieve tasks that theoretically require only milliwatts of power.

Training a single model like GPT-3 creates a stark reminder of this gap: while silicon-based systems consume megawatts to process \(10^{12}\) tokens, theoretical models of distributed processing suggest that similar cognitive capabilities are achievable with power budgets comparable to a household light bulb. This motivates the search for alternative computing paradigms that prioritize energy-aware architecture over raw throughput.

Principles of high-efficiency computing

Physical efficiency in information processing stems from three key principles that differ from current AI systems:

Selective, Event-Driven Activation: Rather than processing all information continuously, high-efficiency systems are asynchronous. They activate only small portions of the network at any time and consume energy only when actively processing changing signals.14

Local Learning and Sample Efficiency: Current architectures require training on trillions of tokens to achieve competence. High-efficiency models use strong inductive biases and self-supervised local learning to acquire capabilities from 10,000\(\times\) less data, reducing the cumulative energy cost of the training phase.

Sparsity and Sparse Interconnects: In modern GPUs, the majority of energy is spent on data movement and global synchronization. High-efficiency systems use sparse representations where only 1-2 percent of parameters are active for any given task, reducing bandwidth and switching energy by 50–100\(\times\).

14 Event-Driven Computing: A paradigm where computation triggers only on input changes rather than continuous clock cycles. Neuromorphic chips like Intel’s Loihi exploit this to achieve 100–1,000\(\times\) energy reductions for temporal tasks (audio, video, sensor data) by drawing near-zero power when inputs are static. The trade-off: event-driven architectures sacrifice throughput on batch workloads where all data changes simultaneously.

15 Spiking Neural Networks (SNNs): Third-generation neural networks that communicate through discrete spikes rather than continuous activations. SNNs process information only when spikes occur, achieving 10–100\(\times\) energy savings on temporal data (audio, video, sensor streams). The sustainability trade-off: current SNN training algorithms remain immature compared to backpropagation, limiting accuracy on standard benchmarks, but hardware implementations like Intel Loihi 2 demonstrate the efficiency ceiling these architectures can approach.

The biological model points toward promising research directions for sustainable AI. Architectures that implement Spiking Neural Networks (SNNs) or sparse activation patterns can achieve significant energy reductions by mimicking sparse communication models15 (Prakash et al. 2023). Local learning algorithms and self-supervised approaches offer additional pathways toward more sample-efficient and energy-conscious systems.

Achieving sustainable AI requires a systematic shift in system design, moving from continuously active, dense architectures toward event-driven, sparse computation models. As compute demands outpace incremental efficiency improvements in silicon manufacturing, addressing AI’s environmental impact demands rethinking the fundamental “Physics” of the algorithm based on these efficiency principles.

Figure 5 shows how six successive intervention steps combine to reduce the energy gap by approximately 10,000\(\times\), transforming an intractable divergence into an engineering challenge. No single lever is sufficient; closing the gap requires simultaneous progress across algorithmic, hardware, and systemic fronts.

The convergence of exponential computational demands with hard physical efficiency limits creates an unsustainable trajectory that threatens the long-term viability of AI scaling. To alter this trajectory, we must move beyond back-of-the-envelope calculations and establish rigorous, systemic frameworks for measuring and assessing energy consumption across the entire ML infrastructure.

Self-Check: Question

A hyperscaler commits to a 500 MW campus for a new training cluster, but the local grid interconnect approval is capped at 320 MW for the next three years. The company’s credit line would cover the projected electricity bill five times over. Which framing best captures why the chapter treats sustainability as a first-class engineering constraint rather than a reporting concern?

- Carbon accounting rules require disclosing the full planned capacity before any portion can be energized, so the 180 MW gap creates a compliance problem the team must file before training begins.

- Power-delivery and grid-interconnect capacity impose a physical ceiling that dollars cannot resolve on the required timescale, so the 180 MW gap becomes an infeasibility the training plan must route around.

- Electricity price volatility makes the 180 MW gap a budgeting risk, so the primary response is to hedge power contracts and continue the original training plan.

- Public concern about AI ethics will force the company to match every unapproved megawatt with offsets, adding cost but not changing what can be built.

A team plans a 10,000 MWh training run. Their procurement team can route it to Quebec at roughly 20 gCO2/kWh or Poland at roughly 800 gCO2/kWh, or invest six engineer-months in a 15 percent algorithmic speedup that runs at the Poland site. Using the section’s carbon-intensity reasoning, justify which lever the team should pull first and quantify the difference.

True or False: Because specialized accelerators have delivered order-of-magnitude energy-efficiency gains each hardware generation, a team can plan a decade-long AI strategy that relies on continued silicon improvements alone to keep total datacenter power flat.

Order the following events during a synchronized gradient-update step in a large training cluster when they create a grid-side transient: (1) GPUs resume a compute phase and cluster draw returns toward peak, (2) on-site batteries or supercapacitors absorb the dip and smooth the voltage, (3) thousands of GPUs simultaneously pause for AllReduce, causing a sudden drop in cluster power draw.

A research group wants to cut AI energy use without assuming the grid will keep scaling. Which architectural direction most directly attacks the root cause of the inefficiency the section identifies?

- Keeping every layer and attention head active on every input so utilization is always high, because higher utilization always means better efficiency.

- Event-driven and sparse-activation architectures that compute only on changes or salient inputs, because most of today’s energy pays for dense, globally synchronized data movement.

- Replacing all ReLU activations with a different pointwise nonlinearity to shave a small fraction of arithmetic per layer.

- Deferring sustainability work until emissions reporting standards stabilize, because architectural choices cannot be justified without fixed metrics.

Energy Measurement and Modeling

Engineers cannot optimize what they cannot measure. A cluster consuming five megawatts during a large language model training run directs only a fraction of that power into matrix multiplications; the remainder is consumed by cooling fans removing the resulting heat. Effective energy modeling requires decomposing the monolithic data center power bill into granular, component-level metrics that engineers can target for optimization.

The data center infrastructure foundations from Compute Infrastructure established power and cooling as dominant engineering constraints. Systematic measurement transforms these constraints into actionable sustainability metrics across three critical areas: energy consumption tracking during training and inference, carbon footprint analysis across system lifecycles, and resource usage assessment for hardware and infrastructure. Just as performance engineering requires profiling before optimization, sustainable AI engineering requires measurement before mitigation.

Carbon footprint analysis

Carbon footprint analysis provides the foundation for making informed design decisions about AI system sustainability. As AI systems continue to scale, systematic measurement of energy consumption and resource demands enables proactive approaches to environmental optimization. Developers and companies that build and deploy AI systems must consider not only performance and efficiency but also the environmental consequences of their design choices.

A central ethical challenge lies in balancing technological progress with ecological responsibility. The pursuit of increasingly large models often prioritizes accuracy and capability over energy efficiency, creating exponential increases in carbon emissions. While optimizing for sustainability may introduce trade-offs such as 10 to 30 percent longer development cycles or 1 to 5 percent accuracy reductions through techniques like pruning and quantization, these costs are substantially outweighed by environmental benefits. Integrating environmental considerations into AI system design has become an ethical imperative. The shift demands new industry norms: energy-aware training techniques, low-power hardware designs, and carbon-conscious deployment strategies (Patterson et al. 2021).

The ethical imperative extends beyond sustainability to encompass broader concerns related to transparency, fairness, and accountability. Figure 6 frames these concerns as connected environmental-justice and accountability trade-offs: transparency gaps obscure energy and carbon costs, fairness failures distribute harms unevenly, and weak accountability makes resource consumption difficult to trace. These concerns extend to sustainability, as the environmental trade-offs of AI development are often opaque and difficult to quantify. The lack of traceability in energy consumption and carbon emissions can lead to unjustified actions, where companies prioritize performance gains without fully understanding or disclosing the environmental costs.

Addressing these concerns demands greater transparency and accountability from AI companies. Large technology firms operate extensive cloud infrastructures that power modern AI applications, yet their environmental impact remains opaque. Organizations must measure, report, and reduce their carbon footprint throughout the AI lifecycle, from hardware manufacturing to model training and inference. Voluntary self-regulation provides an initial step, but policy interventions and industry-wide standards may be necessary to ensure long-term sustainability. Reported metrics such as energy consumption, carbon emissions, and efficiency benchmarks can hold organizations accountable.

Ethical AI development requires open discourse on environmental trade-offs. Researchers must advocate for sustainability within their institutions and organizations, ensuring that environmental concerns are integrated into AI development priorities. The broader AI community has begun addressing these issues, as exemplified by an open letter advocating a pause on large-scale AI experiments, which highlights concerns about unchecked expansion (Future of Life Institute 2023). Fostering a culture of transparency and ethical responsibility allows the AI industry to align technological advancement with ecological sustainability.

AI has the potential to reshape industries and societies, but its long-term viability depends on responsible development practices. Ethical AI development involves preventing harm to individuals and communities while ensuring that AI-driven innovation does not occur at the cost of environmental degradation. As stewards of these technologies, developers and organizations must integrate sustainability into AI’s future trajectory.

Preventing environmental harm requires us to hold AI systems accountable for their resource usage with the same rigor we apply to latency or accuracy. To achieve this transparency, we must translate abstract power consumption metrics into the universally recognized metric of environmental impact: the carbon footprint calculation.

Checkpoint 1.2: Lifecycle carbon estimation

Parameters: 2,048 H100 GPUs, 30 days, 700 W TDP, PUE 1.3, grid intensity 400 g \(\text{CO}_2\)/kWh.

Operational: Power = 2,048 \(\times\) 0.7 kW \(\times\) 1.3 \(\approx\) 1,864 kW. Energy = 1,864 kW \(\times\) 24h \(\times\) 30 \(\approx\) 1.34 million kWh. Emissions \(\approx\) 537 metric tons \(\text{CO}_2\).

Embodied: Assume manufacturing footprint is \(\approx\) 164 kg \(\text{CO}_2\) per H100 GPU (NVIDIA’s product carbon footprint). Amortized for 1 month of a 3-year cycle: (2,048 \(\times\) 164 kg) / 36 months \(\approx\) 9.3 metric tons.

Total: 537 + 9.3 \(\approx\) 546 metric tons \(\text{CO}_2\).

Translating power consumption into carbon emissions is only the first measurement challenge. A systematic lifecycle assessment across the full hardware lifecycle reveals where carbon emissions concentrate and where engineering interventions yield the greatest returns.

Three-phase lifecycle assessment framework

Effective carbon footprint measurement requires systematic analysis across three distinct phases that collectively determine environmental impact:

For training-centric research workloads, the training phase often dominates operational emissions because mathematical optimization requires sustained parallel computation16. As demonstrated by the GPT-3 case study, large language model training runs exemplify this energy intensity. Geographic placement affects emissions: moving an identical workload between hydro-heavy and coal-heavy grids can create tens-fold differences in carbon intensity.17

16 Optimizer Memory as Energy Cost: Adaptive Moment Estimation (Adam) requires 3\(\times\) the memory of plain SGD because it stores per-parameter first and second moment estimates alongside the weights themselves. For a 70B model in FP32, this means 840 GB of optimizer state. The sustainability implication is direct: larger optimizer state means more HBM accesses per training step, and at 100 pJ/byte for DRAM, memory overhead can dominate the energy budget of parameter updates.

17 Carbon Intensity Variance: Grid carbon intensity spans two orders of magnitude: coal at 820 g CO2/kWh vs. hydro at 10–30 g CO2/kWh. Critically, intensity also varies temporally: Texas fluctuates 10\(\times\) within a single day based on wind generation. This dual geographic and temporal variance is what makes carbon-aware scheduling viable: identical training runs can differ by 40–75\(\times\) in emissions based solely on when and where they execute.

For high-volume production services, the inference phase can dominate lifetime emissions because model serving repeats continuously after the training run is complete. While individual inferences require less computation than training, the cumulative impact scales with deployment breadth and usage frequency. Models serving millions of users generate ongoing emissions that can exceed training costs over extended deployment periods.

The manufacturing phase contributes embodied carbon from hardware production, including semiconductor fabrication, rare earth mining, and supply chain logistics.18 Its share is smaller for long-running workloads on carbon-intensive grids, but it can reach 30–50 percent of lifetime emissions on clean grids or low-utilization hardware. Often overlooked, this phase represents irreducible baseline emissions independent of operational efficiency.

18 Embodied Carbon: The CO2 emitted during manufacturing, transport, and disposal before a device computes its first FLOP. A single H100 embodies 150–200 kg CO2 from fabrication alone; at 700 W on the average U.S. grid, continuous operation matches embodied carbon in roughly three to four weeks. As data centers shift to renewables, embodied carbon’s share of total lifetime emissions grows, potentially exceeding 30 percent, making hardware refresh cycles a first-order sustainability decision.

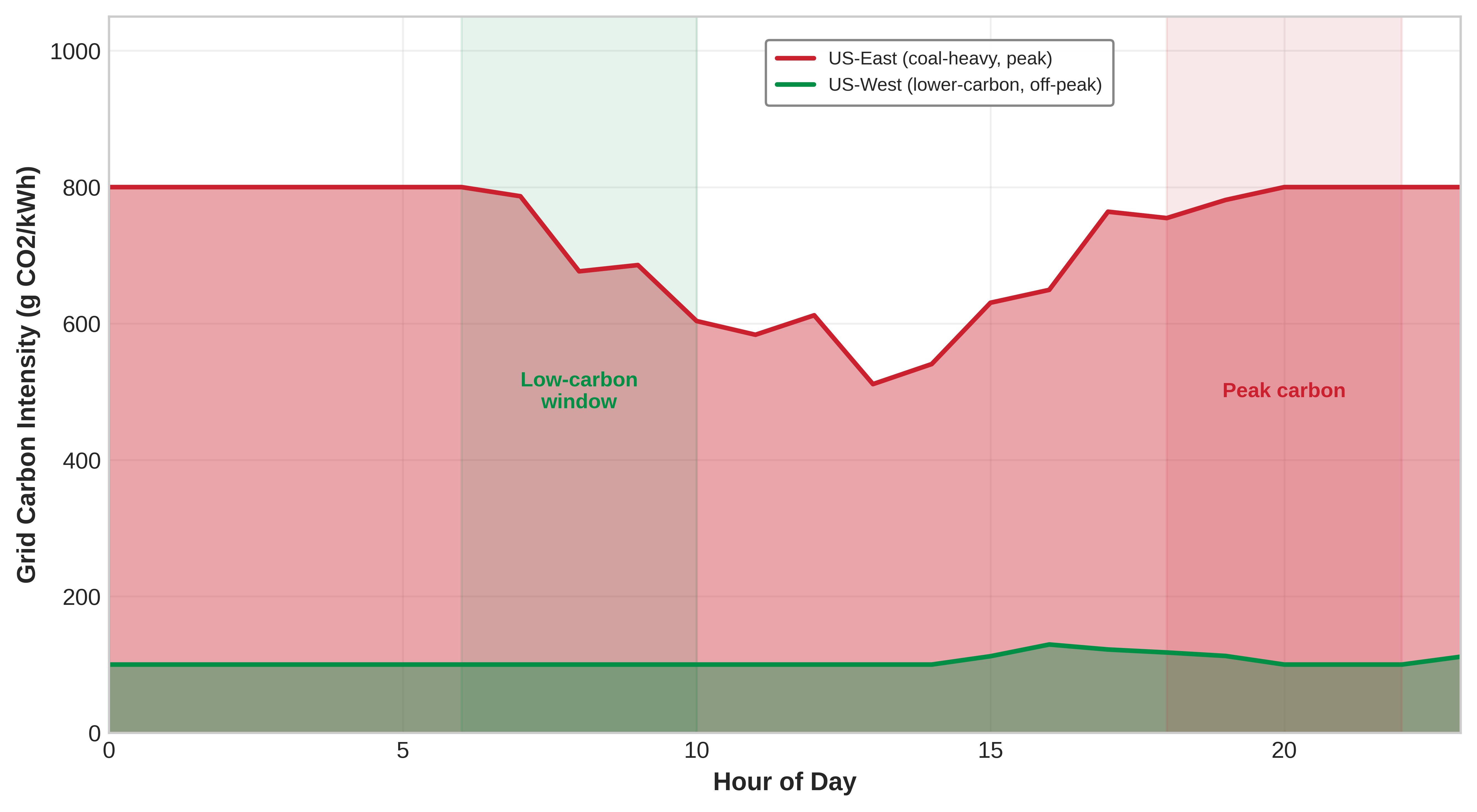

Geographic and temporal optimization

Carbon intensity varies across geographic locations and time periods, creating optimization opportunities. Temporal scheduling can reduce emissions by 50–80 percent by aligning compute workloads with renewable energy availability, such as peak solar generation during daylight hours (Patterson et al. 2022). Carbon-aware scheduling systems can automatically shift nonurgent training jobs to regions and times with lower carbon intensity.

Measuring carbon footprint during development requires integrating tracking tools into ML workflows. Listing 1 demonstrates how the CodeCarbon library wraps model training to capture real-time emissions data, enabling data-driven sustainability decisions.

from codecarbon import EmissionsTracker

import torch

# Initialize carbon tracking

tracker = EmissionsTracker()

tracker.start()

# Your model training code

model = torch.nn.Linear(100, 10)

optimizer = torch.optim.Adam(model.parameters())

for epoch in range(100):

# Training step

loss = model(data).mean()

loss.backward()

optimizer.step()

# Get emissions report

emissions = tracker.stop()

print(f"Training emissions: {emissions:.4f} kg CO2")Integration of energy tracking into the development workflow allows engineers to make informed decisions about model complexity vs. environmental impact during development.

Power modeling fundamentals

Understanding where energy goes in AI systems requires grounding in the physics of digital computation. The CMOS power equation provides the foundation for reasoning about energy consumption in modern processors, explaining why different optimization techniques achieve their efficiency gains and enabling quantitative comparison of architectural choices.

The CMOS power equation

Every digital circuit consumes power through two fundamental mechanisms. Dynamic power arises from switching transistors between states, while static power results from leakage current that flows even when transistors are nominally off. Equation 1 formalizes the total power consumption:

\[P_{\text{total}} = P_{\text{dynamic}} + P_{\text{static}} = \alpha C V^2 f + V I_{\text{leak}} \tag{1}\]

The dynamic power component \(P_{\text{dynamic}} = \alpha C V^2 f\) depends on four parameters. The switching activity factor \(\alpha\) represents the fraction of transistors changing state per clock cycle, ranging from 0 to 1. General-purpose CPUs typically exhibit \(\alpha \approx 0.1\) to \(0.3\) due to diverse instruction mixes, while specialized AI accelerators can achieve \(\alpha \approx 0.6\) to \(0.8\) through optimized dataflow that keeps more circuits active during computation. The load capacitance \(C\) scales with transistor count and interconnect length. Supply voltage \(V\) enters quadratically, making voltage reduction the highest-impact lever for energy efficiency. Clock frequency \(f\) determines operations per second.

The static power component \(P_{\text{static}} = V \cdot I_{\text{leak}}\) represents leakage current that increases exponentially with temperature, approximately doubling for every 10 degrees Celsius rise. This thermal dependence creates a feedback loop: higher power generates heat, which increases leakage, which generates more heat. Managing this thermal runaway constrains the power density achievable in modern processors and explains why cooling infrastructure represents such a significant fraction of data center energy consumption (Dayarathna et al. 2016).

The practical implications for AI systems follow directly from these physics. The quadratic voltage dependence means that reducing voltage from 1.0V to 0.8V decreases dynamic power by 36 percent, even before considering that lower voltages often enable frequency reduction with additional linear savings. This relationship explains why specialized AI accelerators operating at lower voltages but higher utilization can achieve order-of-magnitude efficiency improvements over general-purpose processors.

Why optimization techniques save energy

The power equation illuminates why specific optimization techniques achieve their efficiency gains. Quantization reduces numerical precision from 32-bit floating point to 8-bit integers, which directly reduces datapath capacitance \(C\) by approximately 4 times since narrower datapaths require fewer transistors and shorter interconnects. Additionally, lower precision arithmetic enables reduced supply voltage \(V\) because the circuits have larger noise margins. The combined effect yields 6 to 10 times energy reduction per operation, closely matching published measurements of INT8 vs. FP32 inference efficiency.

Pruning removes weights from neural networks, reducing the effective capacitance \(C\) by eliminating computation paths that would otherwise consume switching energy. Structured pruning, which removes entire channels or attention heads, achieves larger efficiency gains than unstructured pruning because it eliminates complete circuit paths rather than individual operations that the hardware must still orchestrate.

Specialized accelerators improve the activity factor \(\alpha\) by designing circuits specifically for matrix multiplication and convolution operations. Where a CPU might activate 10 percent of its transistors during typical ML workloads, a systolic array architecture can keep 70 percent or more of its compute units active, effectively performing more useful work per watt of power consumed.

Facility-level power metrics

Beyond chip-level power, data center infrastructure imposes additional energy overhead. Equation 2 captures this relationship through the Power Usage Effectiveness (PUE) metric:

\[\text{PUE} = \frac{P_{\text{total\_facility}}}{P_{\text{IT\_equipment}}} \tag{2}\]

Napkin Math 1.4: PUE: The cost of cooling

Math: Energy saved is the difference in infrastructure overhead \((\text{PUE}-1)\) across the IT load.

- Overhead Reduction: 1.58 - 1.10 = 0.48.

- Annual energy savings: \(2.0 \text{ MW} \times 0.48 \times 8,760 \text{ hours} \approx\) 8,410 MWh.

- Financial Savings: 8,410 MWh \(\times\) $70/MWh \(\approx\) $588,672.

Systems insight: Infrastructure optimization is as valuable as algorithmic optimization. Dropping PUE by 0.48 is equivalent to discovering an algorithmic “free lunch” that makes the entire model 30 percent more efficient without changing a single line of training code. For large operators, cooling efficiency is the primary economic lever for sustainability.

A PUE of 1.0 would indicate perfect efficiency where all energy powers computation, though this is physically impossible since cooling, power distribution, and lighting require nonzero energy. Industry-average data centers operate at PUE of 1.5 to 2.0, meaning that 50 percent to 100 percent additional energy beyond computation goes to infrastructure (Davis et al. 2022). Leading hyperscale facilities achieve PUE between 1.1 and 1.2 through advanced cooling techniques including free-air cooling in cold climates, liquid cooling for high-density GPU clusters, and optimized power distribution.

Equation 3 formalizes Water Usage Effectiveness (WUE), capturing the water consumption that evaporative cooling and other processes require:

\[\text{WUE} = \frac{W_{\text{annual\_water\_usage}}}{P_{\text{IT\_equipment\_energy}}} \tag{3}\]

The units are liters per kilowatt-hour, with typical values ranging from 0.5 to 2.0 L/kWh depending on climate and cooling technology. A data center with WUE of 1.8 L/kWh training a model requiring 10,000 MWh would consume 18 million liters of water, equivalent to roughly 40–50 US household-years of water use under a 380,000–450,000 L/year household baseline.

Facility-level metrics identify where engineering intervention yields the greatest returns. The following case study demonstrates how ML-driven optimization of PUE translates directly into measurable energy savings.

Case study: DeepMind energy efficiency

Google’s data centers form the backbone of services such as Search, Gmail, and YouTube, handling billions of queries daily (Centers 2023). These facilities require substantial electricity consumption, particularly for cooling infrastructure that ensures optimal server performance. Improving data center energy efficiency has long been a priority, but conventional engineering approaches faced diminishing returns due to cooling system complexity and highly dynamic environmental conditions (Buyya et al. 2010). To address these challenges, Google collaborated with DeepMind to develop a machine learning optimization system that automates and enhances energy management at scale.

After more than a decade of efforts to optimize data center design, energy-efficient hardware, and renewable energy integration, DeepMind’s AI approach targeted cooling systems, among the most energy-intensive aspects of data centers. Traditional cooling relies on manually set heuristics that account for server heat output, external weather conditions, and architectural constraints. These systems exhibit nonlinear interactions, so simple rule-based optimizations often fail to capture the full complexity of their operations. The result was suboptimal cooling efficiency, leading to unnecessary energy waste.

DeepMind’s team trained a neural network model using Google’s historical sensor data, which included real-time temperature readings, power consumption levels, cooling pump activity, and other operational parameters. Building on Jim Gao’s earlier work demonstrating that machine learning could predict data center PUE with 99.6 percent accuracy (Gao 2014), the model learned the intricate relationships between these factors and could dynamically predict the most efficient cooling configurations. Unlike traditional approaches that relied on human engineers periodically adjusting system settings, the AI model continuously adapted in real time to changing environmental and workload conditions.

19 PUE Optimization via ML: Google’s best facilities achieve PUE 1.08, meaning only 8 percent energy overhead for cooling and power distribution. DeepMind’s reinforcement-learning controller reduced cooling energy by 40 percent by exploiting nonlinear interactions between chillers, pumps, and ambient conditions that rule-based systems miss. This is a rare positive feedback loop where AI improves the efficiency of the infrastructure that powers AI.

The results demonstrated significant efficiency gains. When deployed in live data center environments, DeepMind’s AI-driven cooling system reduced cooling energy consumption by 40 percent, leading to an overall 15 percent improvement in PUE19 (Barroso et al. 2019; Evans and Gao 2016). For a facility operating at the industry-average PUE of 1.5 from Equation 2, a 15 percent improvement reclaims a substantial fraction of the energy lost to cooling overhead. These improvements were achieved without additional hardware modifications, demonstrating the potential of software-driven optimizations to reduce AI’s carbon footprint.

The DeepMind case study illustrates a rare positive feedback loop: machine learning optimizing the infrastructure that powers machine learning. The framework generalizes across facility designs and climate conditions, offering a scalable approach for global data center networks.

Carbon intensity and regional variation

The carbon impact of electricity consumption depends critically on the energy generation mix, quantified by carbon intensity measured in grams of CO2 equivalent per kilowatt-hour (g CO2eq/kWh). Table 1 quantifies how dramatically these intensities vary across energy sources:

| Energy Source | Carbon Intensity (g CO2eq/kWh) | Regional Examples |

|---|---|---|

| Coal | 820 to 1,200 | Poland, West Virginia |

| Natural Gas | 350 to 500 | Texas combined cycle plants |

| Solar PV | 20 to 50 | California, Arizona |

| Wind | 7 to 15 | Denmark, Scotland |

| Hydroelectric | 10 to 30 | Quebec, Norway |

| Nuclear | 5 to 20 | France, Ontario |

Geographic optimization can reduce carbon emissions by 10–50\(\times\) through strategic training location selection, as Figure 7 illustrates across representative regions.

Systematic energy metrics

Quantifying energy efficiency requires systematic metrics that enable comparison across hardware architectures and algorithmic approaches. These metrics provide the foundation for reasoning about optimization trade-offs and identifying bottlenecks in AI system energy consumption.

Energy per operation

The fundamental metric for computational energy efficiency is energy consumed per operation, typically measured in picojoules. For AI workloads, the most relevant metrics are energy per floating-point operation and energy per multiply-accumulate, where one MAC operation performs both a multiplication and addition, equivalent to two FLOPs.

Hardware architecture determines energy efficiency across orders of magnitude, spanning nearly four orders of magnitude from general-purpose CPUs to specialized analog accelerators. Table 2 quantifies these differences:

| Architecture | Energy Efficiency (pJ/FLOP or pJ/MAC) | Characteristics |

|---|---|---|

| CPU (general) | 100 pJ/FLOP | Low utilization, high flexibility |

| GPU (tensor cores) | 10 pJ/FLOP | High throughput, parallel execution |

| TPU (systolic array) | 1-2 pJ/FLOP | Specialized matrix operations, optimized dataflow |

| Google Edge TPU | 2-4 pJ/FLOP | On-device inference, INT8 optimized |

| ARM Ethos-U55 | 0.5-2 pJ/MAC | Microcontroller NPU, sub-watt TinyML |

| Maxim MAX78000 | 0.3-1 pJ/MAC | CNN accelerator with local weight storage |

| ASIC (INT8) | 0.1 pJ/operation | Fixed-function, low precision |

| Analog/In-Memory Compute | 0.01-0.1 pJ/MAC | Emerging technology, compute in memory array |

The four-order-of-magnitude spread reflects both circuit-level efficiency and architectural choices affecting utilization. CPUs execute diverse instruction mixes with low average utilization of arithmetic units. GPUs achieve higher utilization through massive parallelism. TPUs and ASICs maximize utilization through specialized datapaths optimized for specific operation types.

Precision directly affects energy per operation. INT8 integer arithmetic consumes approximately one-sixteenth the energy of FP32 floating-point at the same frequency and voltage. This combines reduced datapath capacitance of 4\(\times\) from bit width with lower voltage requirements of 2\(\times\) from larger noise margins and simpler control logic of 2\(\times\) from reduced complexity.

Energy per byte

Data movement often dominates energy consumption in modern AI systems. The energy cost of memory access spans five orders of magnitude across the storage hierarchy:

Table 3 reveals a critical insight: moving data from DRAM consumes 10 to 100 times more energy than performing arithmetic operations. For a GPU operating at 10 pJ/FLOP, accessing one FP32 operand from DRAM (4 bytes times 100 pJ/byte = 400 pJ) costs 40 times more than the computation itself. This energy gap drives architectural innovations including:

| Memory Level | Energy Cost (pJ/byte) | Access Latency |

|---|---|---|

| Register | 0.1 pJ/byte | 1 cycle |

| L1 Cache | 1 pJ/byte | 3-5 cycles |

| L2 Cache | 5 pJ/byte | 10-20 cycles |

| DRAM | 100 pJ/byte | 200-300 cycles |

| NVMe SSD | 1,000 pJ/byte | 50,000-100,000 cycles |

| Network | 10,000+ pJ/byte | Millions of cycles |

- On-chip memory for data reuse (NVIDIA tensor cores with shared memory)

- Optimized data layouts minimizing DRAM access (Google TPU systolic arrays)

- Compression reducing data movement (sparse tensor representations)

Arithmetic intensity and energy roofline

The balance between computation and data movement determines whether energy consumption is compute-bound or memory-bound. Equation 4 defines arithmetic intensity (AI), the ratio that determines which resource dominates energy consumption:

\[\text{AI} = \frac{\text{Total FLOPs}}{\text{Total Bytes Moved}} \tag{4}\]

Arithmetic intensity measured in FLOPs per byte determines the dominant energy consumer. Equation 5 expresses total energy as the sum of compute and memory contributions, while Equation 6 isolates the roofline-style dominant term:

\[E_{\text{total}} = E_{\text{compute}} + E_{\text{memory}} = \text{FLOPs} \times e_{\text{flop}} + \text{Bytes} \times e_{\text{byte}} \tag{5}\]

\[E_{\text{dominant}} = \max\left(E_{\text{compute}}, E_{\text{memory}}\right) = \max\left(\text{FLOPs} \times e_{\text{flop}}, \text{Bytes} \times e_{\text{byte}}\right) \tag{6}\]

where \(e_{\text{flop}}\) is energy per FLOP and \(e_{\text{byte}}\) is energy per byte moved. The maximum term identifies the dominant bottleneck for roofline reasoning; it is not the full energy in balanced cases. Equation 7 defines the crossover arithmetic intensity where compute and memory energy balance:

\[\text{AI}_{\text{crossover}} = \frac{e_{\text{byte}}}{e_{\text{flop}}} \tag{7}\]

For a GPU with \(e_{\text{flop}} = 10\) pJ/FLOP and \(e_{\text{byte}} = 100\) pJ/byte (DRAM access):

\[\text{AI}_{\text{crossover}} = \frac{100 \text{ pJ/byte}}{10 \text{ pJ/FLOP}} = 10 \text{ FLOPs/byte}\]

The energy roofline model (Figure 8) visualizes this relationship between arithmetic intensity and energy efficiency, revealing how different workload types are constrained by different bottlenecks.

To make this framework concrete, we can apply it to the most common operation in deep learning: matrix multiplication.

Example 1.1: MatMul energy analysis

Consider matrix multiplication \(C = A \times B\) for \(N{\times}N\) matrices in FP32 precision on a GPU with the energy characteristics above.

Step 1: Calculate FLOPs and bytes. - FLOPs: \(2N^3\) (one multiply-add for each of \(N^2\) output elements, accumulating over \(N\) elements) - Bytes: \(3N^2 \times 4\) bytes (read matrices \(A\) and \(B\), write matrix \(C\), each FP32 = 4 bytes) - Arithmetic intensity: \(\text{AI} = \frac{2N^3}{12N^2} = \frac{N}{6}\) FLOPs/byte

Step 2: Determine energy-limiting factor. For small matrices (\(N = 60\)):

- \(\text{AI} = 60/6 = 10\) FLOPs/byte (at crossover)

- Compute energy: \(2 \times 60^3 \times 10 \text{ pJ} = 4.32\,\mu\text{J} = 0.00432\) mJ

- Memory energy: \(3 \times 60^2 \times 4 \times 100 \text{ pJ} = 4.32\,\mu\text{J} = 0.00432\) mJ

- Balanced: both compute and memory contribute equally

For large matrices (\(N = 1000\)):

- \(\text{AI} = 1000/6 = 167\) FLOPs/byte (compute-bound)

- Compute energy: \(2 \times 10^9 \times 10 \text{ pJ} = 20\) mJ (dominates)

- Memory energy: \(3 \times 10^6 \times 4 \times 100 \text{ pJ} = 1.2\) mJ (negligible)

- Optimization priority: Focus on compute efficiency

For element-wise operations (\(N = 1000\), vector addition):

- FLOPs: \(N = 1000\) (one addition per element)

- Bytes: \(3N \times 4 = 12,000\) bytes (read two vectors, write one)

- \(\text{AI} = 1000/12000 = 0.083\) FLOPs/byte (memory-bound)

- Compute energy: \(1000 \times 10 \text{ pJ} = 0.00001\) mJ (negligible)

- Memory energy: \(12000 \times 100 \text{ pJ} = 0.0012\) mJ (dominates)

- Optimization priority: Reduce data movement through fusion

The energy roofline model reveals why different optimization strategies suit different workloads. Large dense matrix operations benefit from faster arithmetic units. Memory-bound operations like element-wise kernels benefit from data layout optimization, kernel fusion to reduce memory round-trips, and on-chip memory utilization. This framework guides architectural and algorithmic choices for sustainable AI system design.

Energy measurement techniques

Quantifying AI system energy consumption requires measurement at multiple levels of the hardware stack, from chip-level instrumentation to facility-wide monitoring. Each measurement approach offers different granularity, accuracy, and overhead trade-offs that practitioners must understand to select appropriate methods for their use case.

Hardware power counters

Modern processors include dedicated circuitry for power measurement that software can query through manufacturer-provided interfaces. These hardware counters measure actual power draw rather than estimating from activity, providing ground-truth energy consumption data at microsecond resolution.

Intel’s Running Average Power Limit (RAPL) interface exposes power measurements for CPU packages, DRAM, and integrated graphics through model-specific registers (MSRs). RAPL reports energy consumption in microjoules with updates every millisecond, enabling fine-grained attribution of energy to specific code regions. Listing 2 demonstrates how to read RAPL counters and calculate average power draw during a training loop:

import subprocess

import time

from mlsysim.core.constants import ureg

def read_rapl_energy():

"""Read current RAPL energy counters.

Requires root or perf permissions.

"""

result = subprocess.run(

[

"cat",

"/sys/class/powercap/intel-rapl/intel-rapl:0/energy_uj",

],

capture_output=True,

text=True,

)

return int(result.stdout.strip()) # Returns microjoules

# Measure training energy

start_energy = read_rapl_energy()

start_time = time.time()

# Training loop

for epoch in range(num_epochs):

train_one_epoch(model, dataloader, optimizer)

end_energy = read_rapl_energy()

end_time = time.time()

energy_joules = ((end_energy - start_energy) * ureg.microjoule).m_as(

ureg.joule

)

avg_power_watts = energy_joules / (end_time - start_time)

print(

f"Training energy: {energy_joules:.2f} J, Average power: {avg_power_watts:.2f} W"

)RAPL measurements exclude discrete GPUs, which require separate monitoring through vendor-specific interfaces.

GPU power monitoring

NVIDIA GPUs expose power measurements through the NVIDIA Management Library (NVML), accessible via the nvidia-smi command-line tool or programmatic bindings. GPU power monitoring reports instantaneous power draw, which can vary significantly during computation due to dynamic voltage and frequency scaling. Listing 3 implements a measurement loop that samples power at regular intervals, computing average and peak power over the inference workload:

import pynvml

import torch

import time

from mlsysim.core.constants import milliwatt, watt

pynvml.nvmlInit()

handle = pynvml.nvmlDeviceGetHandleByIndex(0) # First GPU

def measure_inference_power(model, input_data, num_iterations=100):

"""Measure average GPU power during inference."""

power_readings = []

model.eval()

with torch.no_grad():

for _ in range(num_iterations):

# Run inference

_ = model(input_data)

torch.cuda.synchronize()

# Sample power and convert mW → W via pint.

power_mw = pynvml.nvmlDeviceGetPowerUsage(handle)

power_readings.append((power_mw * milliwatt).m_as(watt))

avg_power = sum(power_readings) / len(power_readings)

return avg_power

avg_power = measure_inference_power(model, sample_input)

print(f"Average inference power: {avg_power:.1f} W")For accurate energy measurement rather than instantaneous power sampling, integrate power readings over time or use NVIDIA’s energy counter when available on data center GPUs.

Edge and mobile device energy measurement

The measurement techniques described earlier apply to data center hardware with built-in power monitoring capabilities. Edge devices and microcontrollers present fundamentally different measurement challenges: they lack built-in power counters, operate at milliwatt rather than kilowatt scales, and require external instrumentation for accurate energy profiling. As TinyML deployments expand to billions of devices, understanding edge energy measurement becomes essential for comprehensive sustainability assessment.

Hardware power monitors for embedded systems

Microcontrollers and edge processors require external current and voltage measurement to quantify energy consumption. Several instrumentation approaches provide different trade-offs between accuracy, resolution, and cost:

The INA219 and INA226 I2C-based current sensors provide affordable measurement for development and validation, sampling at rates sufficient to capture inference-level energy consumption. For research requiring nanosecond-resolution measurements of individual operations, instruments like the Joulescope JS220 measure current from sub-microamp sleep states through ampere-level active peaks, enabling characterization of the full dynamic range of edge AI workloads.

Mobile platform energy profiling

Mobile devices provide platform-specific APIs for energy attribution, though with less granularity than hardware monitors:

- Android PowerStats HAL: Provides per-component power attribution for CPU, GPU, NPU, and radio subsystems, enabling developers to identify which model operations dominate energy consumption.

- Qualcomm Trepn Profiler: Offers millisecond-resolution power measurement on Snapdragon platforms, correlating power traces with code execution for NPU workload optimization.

- ARM Streamline: Provides energy-annotated profiling for Cortex-A and Mali GPU platforms, enabling identification of inefficient kernel implementations.

- Apple Instruments Energy Log: Reports thermal state and energy impact scores for iOS applications, though without direct wattage measurements.

Mobile profiling tools integrate with development workflows, enabling iterative optimization of on-device inference energy consumption during model deployment. Table 4 summarizes the available instrumentation options across platforms, including resolution, accuracy, and integration requirements.

| Instrument | Resolution | Accuracy | Use Case |

|---|---|---|---|

| INA219/INA226 | 100 microsecond sampling | plus or minus 1 percent | Low-cost embedded profiling |

| PAC1934 | 1 millisecond, 4 channels | plus or minus 2 percent | Multi-rail MCU measurement |

| Joulescope JS220 | Sub-microsecond, nanoamp range | plus or minus 0.1 percent | Professional TinyML benchmarking |

| Otii Arc Pro | 10 microsecond, automation | plus or minus 0.5 percent | Automated battery life testing |

Edge measurement methodology

Edge energy measurement requires careful methodology to produce reproducible results:

Baseline characterization: Measure idle power consumption across all sleep states, as baseline power can vary from 1 microamp in deep sleep to 1 milliamp in idle active states on typical microcontrollers.

Warm-up Period: Execute 100 or more inference iterations before measurement to reach thermal equilibrium, as initial iterations may exhibit different power characteristics due to cache warming and voltage regulator settling.

Duty Cycle Accounting: Edge devices typically operate with significant idle periods between inferences. Report both peak inference power and average power at realistic duty cycles. Equation 8 expresses this relationship:

\[P_{\text{average}} = P_{\text{active}} \times D + P_{\text{idle}} \times (1 - D) \tag{8}\]

where \(D\) is the duty cycle (fraction of time performing inference).

- Peripheral Isolation: Disable or account for peripheral power consumption (sensors, radios, displays) when measuring model inference energy, as these can dominate total system power.

System-level energy profiling

Comprehensive energy accounting requires combining chip-level measurements with infrastructure overhead. Equation 9 formalizes total energy as the sum of component contributions scaled by facility overhead:

\[E_{\text{total}} = (E_{\text{CPU}} + E_{\text{GPU}} + E_{\text{memory}} + E_{\text{network}}) \times \text{PUE} \tag{9}\]

System-level profilers like Intel VTune, NVIDIA Nsight Systems, and open-source tools such as PowerJoular aggregate measurements across components. For production deployments, smart power distribution units (PDUs) at the rack level provide facility-verified measurements that include cooling overhead.

Equation 10 expresses the relationship between measured component power and total facility energy:

\[P_{\text{facility}} = P_{\text{IT}} \times \text{PUE} = (P_{\text{servers}} + P_{\text{network}} + P_{\text{storage}}) \times \text{PUE} \tag{10}\]

For a cluster consuming 1 MW of IT power in a facility with PUE of 1.4, total facility power consumption reaches 1.4 MW, with the additional 400 kW powering cooling, power conversion, and infrastructure systems.

Understanding that a PUE of 1.4 means an automatic 40 percent overhead on all computational power highlights the critical role of facility efficiency. However, operational power consumption is only one piece of the equation; to capture the true environmental cost of our systems, we must formalize how we convert raw kilowatts into tons of carbon emissions.

Self-Check: Question

A profiling run on an accelerator with approximately 10 pJ per FLOP of compute energy and approximately 100 pJ per byte of DRAM energy reports an arithmetic intensity of 3 FLOPs per byte for an attention kernel. Which optimization family is most likely to move this workload closer to the energy roofline?

- Replacing the accelerator with one that advertises 2x the peak FLOPS per watt while keeping the memory subsystem unchanged, because raising the compute ceiling always lowers energy.

- Fusing operators and tiling to keep intermediate activations in on-chip SRAM, because the kernel sits far to the left of the energy crossover at about 10 FLOPs per byte and pays most of its joules in DRAM traffic.

- Prioritizing a PUE reduction on the facility because chip-level bottlenecks do not affect per-query energy.

- Raising numerical precision from FP16 to FP32, because higher precision does more useful work per byte read.

A 2 MW cluster drops its PUE from 1.58 to 1.10 without changing any model code or hardware SKU. Explain why the chapter counts this as a first-order sustainability intervention, and quantify roughly what the facility saves per year.

An engineer must profile energy for a battery-powered microcontroller running a wake-word detector that sleeps most of the second. The device has no internal power counters and draws microwatts during deep sleep. Which measurement approach best matches the section’s edge methodology?

- Sample a tool such as nvidia-smi at 10 Hz and integrate the series, because server-grade sampling tools work across platforms.

- Use an external current-sense monitor such as an INA219 or Joulescope, sample at a rate that resolves the active burst and deep-sleep transitions, and explicitly account for duty cycle, warm-up, and peripherals.

- Estimate total energy by multiplying parameter count by a fixed J-per-parameter constant, because compute energy is the dominant term in TinyML.

- Rely on CPU-package RAPL counters, because they generalize from server CPUs to microcontroller-class devices.

A facility reports 4.2 MW of compute IT load and 6.3 MW of total site draw over the same hour. The sustainability team wants a single scalar that captures how much the non-IT infrastructure contributes to the total, so they can compare the site to peers year over year. Which metric gives them exactly that ratio, and what does a drop in it imply?

- Grid carbon intensity; a drop means the grid has decarbonized.

- Arithmetic intensity; a drop means the workload has become more memory-bound.

- PUE, computed as 6.3 / 4.2 = 1.5; a drop means every joule of useful IT work now carries less cooling and power-distribution overhead.

- Model FLOPs utilization; a drop means the accelerators are underused.

A profiling sweep across a training workload shows element-wise normalization and activation kernels spending roughly 8x more joules on HBM reads than on arithmetic. The service owner proposes four follow-ups. Which best matches the energy model this section develops?

- Upgrading to a newer accelerator with 2x peak tensor-core FLOPS, because more FLOPS always lowers total energy per step.

- Fusing the normalization and activation into adjacent matrix-multiply kernels so intermediate tensors stay in on-chip SRAM and round-trips to HBM collapse.

- Ignoring the kernel and investing only in carbon-aware scheduling, because chip-level energy is negligible once the grid is considered.

- Raising numerical precision to FP32 to make each byte of DRAM carry more useful arithmetic.

A team proposes to report total AI system energy as the simple sum of CPU, GPU, memory, and network component measurements. Explain why the section rejects this accounting and what form the corrected total must take.

Carbon Footprint Calculation

Consider a data center running on 100 percent renewable hydroelectric power. Its operational carbon emissions are effectively zero, but AI trained there is not carbon-free. Mining the silicon, manufacturing the GPUs, and pouring the concrete for the data center released thousands of tons of CO2 before the servers were ever turned on. A true carbon footprint calculation must account for both the energy consumed during operation and the “embodied carbon” emitted during construction.

Operational carbon calculation

Operational carbon emissions result from electricity consumption during training and inference, scaled by grid carbon intensity. Equation 11 quantifies this as the product of energy, grid carbon intensity, and facility overhead:

\[C_{\text{operational}} = E_{\text{total}} \times \text{CI}_{\text{grid}} \tag{11}\]

where \(E_{\text{total}}\) is the facility-level energy from Equation 9 (component energy already scaled by \(\text{PUE}\)) and \(\text{CI}_{\text{grid}}\) is the carbon intensity of the electricity grid. The facility overhead enters once, through \(E_{\text{total}}\), so it does not appear again in the carbon equation. A concrete training emissions calculation illustrates this framework.

Example 1.2: Training emissions calculation

Step 1: Compute energy. - GPU power: 400 W per A100 at typical training utilization - Training time: 14 days times 24 hours = 336 hours - GPU energy: 64 GPUs times 400 W times 336h = 8,601,600 Wh = 8,602 kWh

Step 2: Apply PUE. - Facility PUE: 1.2 (efficient hyperscale data center) - Total facility energy: 8,602 kWh times 1.2 = 10,322 kWh

Step 3: Calculate emissions. - Grid carbon intensity: 429 g CO2/kWh (US average) - Operational emissions: 10,322 kWh times 429 g/kWh = 4,428 kg CO2 = 4.4 metric tons

Comparison: Same training in low-carbon region

- Quebec grid intensity: 20 g CO2/kWh

- Emissions: 10,322 kWh times 20 g/kWh = 206 kg CO2

The geographic choice alone produces a 21-fold difference in training emissions.

Embodied carbon assessment