Responsible Engineering

Purpose

Why is a system that does exactly what it was told to do often the most dangerous?

Operations ensures the system runs reliably: low latency, high availability, accurate predictions. Responsible engineering asks a harder question: reliable for whom? An ML system can meet every technical specification (latency, throughput, accuracy) while actively amplifying harm. The failure occurs not because the system is broken but because it is working efficiently to optimize a flawed specification. A loan approval system that correctly predicts default risk can encode historical discrimination, denying credit to qualified applicants from historically marginalized communities. A content recommendation system that accurately predicts engagement may amplify harmful content because outrage generates more clicks than nuance. A hiring algorithm that reliably identifies candidates similar to past hires may perpetuate workforce homogeneity, screening out the diversity that drives innovation. In each case the system is performing exactly as designed—the failure is in what was designed for. When we confuse mathematical optimization with value alignment, we build systems that are technically robust but socially fragile. The model faithfully learns and reproduces whatever patterns exist in its training distribution, including patterns of historical injustice that no one intended to encode. Building systems that work is an engineering achievement. Building systems that work for everyone requires treating unintended consequences not as edge cases to be tolerated but as system bugs: diagnosed, measured, and fixed with the same rigor we apply to latency regressions and accuracy degradation.

- Explain how ML systems can optimize correctly while causing harm through bias amplification, distribution shift, and proxy variables

- Apply the D·A·M taxonomy to diagnose whether a responsibility failure originates in data, algorithm, or infrastructure

- Compute fairness metrics (demographic parity, equal opportunity, equalized odds) from confusion matrices and evaluate trade-offs on the fairness-accuracy Pareto frontier

- Design disaggregated evaluation strategies that detect hidden disparities across demographic groups, including slice-based, invariance, and stress testing

- Analyze total cost of ownership including training, inference, operational costs, and environmental impact using carbon as a first-class engineering metric

- Identify model documentation and data governance requirements (model cards, datasheets, data lineage, audit infrastructure) for regulatory compliance and accountability

Responsibility as Systems Engineering

In 2014, Amazon built an AI recruiting tool1 that penalized resumes containing the word “women’s” and downgraded graduates of all-women’s colleges—despite meeting every technical metric its engineers had specified. The system optimized flawlessly for its stated objective: identify candidates similar to those previously hired. But historical hiring patterns encoded gender bias, and the model faithfully reproduced that bias at scale. The full case, examined in Section 1.2.1, reveals a pattern that recurs throughout this chapter: technically correct systems producing harmful outcomes not because they malfunction, but because they faithfully execute flawed specifications.

1 Amazon Recruiting Tool: Developed starting in 2014 by Amazon’s Edinburgh engineering team to rate applicants on a 1–5 scale, the system trained on approximately a decade of resumes—overwhelmingly from male applicants reflecting the tech industry’s gender ratio. By 2015 the gender bias was identified; by 2017 the project was abandoned after two years of failed remediation attempts. The engineering cost was not the compute but the opportunity cost: a multi-year hiring pipeline had to be rebuilt from scratch, making it one of the most expensive documented specification failures in production ML.

If MLOps (ML Operations), the monitoring and retraining infrastructure examined previously, is the control loop for reliability, then Responsible Engineering is the control loop for safety. Where MLOps monitors system health and triggers retraining when performance degrades, responsible engineering monitors outcome quality and triggers intervention when systems cause harm. A model can optimize flawlessly for its stated objective and still cause systematic harm because the failure is not a bug in the code but a flaw in the specification. In systems engineering terms, a system can pass verification (it meets its stated requirements) while failing validation (it does not meet the user’s true needs).

Traditional software engineering assumes that bugs are local: a defect in one module rarely corrupts unrelated functionality. Machine learning systems violate this assumption. Data flows through shared representations, causing problems in one component to propagate unpredictably across the entire system. A biased training dataset does not produce a localized bug; it corrupts every prediction the system makes. Viewed through the D·A·M taxonomy (Data, Algorithm, Machine) introduced in Introduction, the failure can originate along any axis: biased data, a misaligned algorithm, or inadequate infrastructure for monitoring outcomes. This makes responsibility an architectural concern, not an afterthought.

Engineering responsibility therefore expands what “correct” means for ML systems. Correctness in the traditional sense (reliable, performant, and maintainable) remains necessary, but ML systems must also be correct in a broader sense: fair across user groups, efficient in resource consumption, and transparent in their decision processes. Expanded correctness is engineering itself, applied to failure modes that conventional metrics do not capture. A latency regression is visible in dashboards; a fairness regression is invisible until it harms real users. Both require systematic detection, measurement, and remediation.

The frameworks developed here address diagnosing, preventing, and mitigating these failures. We begin with concrete cases that reveal the responsibility gap: the distance between technical performance and responsible outcomes, and the mechanisms (proxy variables, feedback loops, distribution shift) through which it manifests. From there, we develop a responsible engineering checklist that systematizes impact assessment, model documentation, disaggregated testing, and incident response into repeatable engineering processes. The chapter then connects the resource consumption quantified throughout this book (training compute, inference energy, carbon footprint) to engineering ethics, demonstrating that efficiency optimization serves responsibility as directly as it serves performance. We then examine the data governance and compliance infrastructure (access control, privacy protection, lineage tracking, and audit systems) that makes responsible practices enforceable at scale, before closing with the fallacies and pitfalls that commonly undermine even well-intentioned efforts.

We begin with the concrete failure cases that establish why engineers must lead on responsibility.

Self-Check: Question

Why is responsible engineering particularly critical for machine learning systems compared to traditional software?

- ML systems are more expensive to develop.

- ML systems fail silently through biased outputs that appear normal.

- Traditional software does not require any testing.

- ML systems always produce deterministic results.

Why can responsibility not be delegated exclusively to ethics boards or legal departments in an ML project?

Engineering Responsibility Gap

A loan model that approves 95 percent of qualified majority-group applicants while rejecting 40 percent of equally qualified minority-group applicants meets its loss function perfectly. The gap between this technical correctness and responsible outcomes represents a central challenge in machine learning systems engineering, one that existing testing methodologies were not designed to address.

The gap manifests through concrete mechanisms: proxy variables, feedback loops, and distribution shift, each producing harm through a distinct pathway. Concrete cases where optimization succeeded but systems failed reveal these mechanisms and the silent failure modes that make them invisible to conventional monitoring. Organizations that closed the gap through systematic engineering practice demonstrate that prevention is feasible. The testing challenge that makes responsibility fundamentally harder to verify than traditional software correctness then determines where responsibility ownership must sit within engineering organizations.

When optimization succeeds but systems fail

The Amazon recruiting tool case illustrates this gap. In 2014, Amazon developed an AI system to automate resume screening for technical positions, training it on historical hiring data spanning ten years of resumes submitted to the company. By 2015, the company discovered the system exhibited gender bias in candidate ratings (Dastin 2022).

The technical implementation was sound. The model successfully learned patterns from historical data and optimized for the objective it was given: identify candidates similar to those previously hired. However, historical hiring patterns encoded gender bias. The system penalized resumes containing the word “women’s,” as in “women’s chess club captain,” and downgraded graduates of all-women’s colleges.

The technical mechanism behind this outcome is straightforward. The model learned token-level patterns from historical data. When most previously successful hires were men, resumes containing language associated with women’s activities or institutions appeared statistically less correlated with positive hiring decisions. The model correctly identified these patterns in the training data but learned the wrong lesson from correct pattern recognition.

Amazon attempted remediation by removing explicit gender indicators and gendered terms from the training process. This intervention failed because the model had learned proxy variables—features that correlate with protected attributes without directly encoding them.2 In general, proxies arise whenever features carry indirect demographic signal: ZIP codes correlate with race due to residential segregation, first names correlate with gender and ethnicity, and healthcare utilization correlates with socioeconomic status. In Amazon’s case, college names revealed attendance at all-women’s institutions, activity descriptions encoded gender-associated language patterns, and career gaps suggested parental leave patterns that differed between genders. The model reconstructed protected attributes from these proxies without ever seeing gender labels directly. Removing protected attributes from training data is therefore insufficient; fairness requires adversarial debiasing, fairness constraints during optimization, or post-hoc threshold adjustment per group.

2 Proxy Variable: The intractability is not in identifying that a proxy exists—it is that removing it often has no effect, because other correlated features (zip code, device type, browsing history) carry the same signal. Amazon’s case is typical: removing explicit gender left college names, activity descriptions, and career gap patterns to reconstruct gender from combinations the engineers never anticipated. Eliminating explicit protected attributes without eliminating their proxies produces a model that discriminates while appearing compliant—a failure mode called “fairness laundering”—making continuous per-group outcome monitoring the only reliable defense.

The right intervention would have required multiple levels of change. Separate evaluation of resume scores for male-associated vs. female-associated candidates would have revealed the disparity quantitatively. Training with fairness constraints or adversarial debiasing techniques could have prevented the model from learning gender-correlated patterns. Human-in-the-loop review for borderline cases would have provided a safeguard against systematic errors. Tracking actual hiring outcomes by gender over time would have enabled outcome monitoring beyond model metrics alone. Amazon eventually scrapped the project after determining that sufficient remediation was not feasible.

The Amazon case demonstrates how optimization objectives diverge from organizational values. The system found genuine statistical patterns in historical hiring decisions and optimized them faithfully. Those patterns, however, reflected biased historical practices rather than job-relevant qualifications.

Example 1.1: The COMPAS Recidivism Algorithm Audit

The Failure: A 2016 ProPublica investigation (Angwin et al. 2022) revealed that while the system was “calibrated” (a score of seven meant the same probability of re-offending for any group), its error rates were skewed:

- False Positives: Black defendants who did not re-offend were incorrectly flagged as high-risk at nearly twice the rate of White defendants (44.9 percent vs. 23.5 percent).

- False Negatives: White defendants who did re-offend were incorrectly labeled as low-risk far more often than Black defendants (47.7 percent vs. 28.0 percent).

The Systems Lesson: The system optimized for Calibration but violated Equalized Odds. Mathematically, it is impossible to satisfy both simultaneously when base rates differ between groups (the “Impossibility Theorem of Fairness”). Engineering responsibility requires explicitly choosing which fairness constraint matters for the domain; in criminal justice, false positives (wrongly jailing someone) are typically considered worse than false negatives.

The D·A·M Diagnosis: Through the D·A·M taxonomy, COMPAS represents an Algorithm-axis failure: the optimization objective (calibration) was misaligned with the deployment context’s fairness requirements (equalized odds). The data reflected real base-rate differences; the failure was in choosing which mathematical property to optimize. Contrast this with Amazon’s recruiting tool, a Data-axis failure where biased historical hiring patterns corrupted the training signal itself.

3 COMPAS (Correctional Offender Management Profiling for Alternative Sanctions): The shared pattern with Amazon is precise: both systems optimized a valid technical metric while violating unstated fairness requirements. COMPAS achieved calibration (equal meaning per score), but because recidivism base rates differed between populations, this choice made disparate error rates mathematically inevitable—Black defendants were falsely flagged as high-risk at nearly twice the rate of white defendants (44.9 percent vs. 23.5 percent). No amount of testing for calibration would have surfaced this failure; the harm was encoded in the objective itself.

The Amazon and COMPAS3 cases share a troubling pattern: each system achieved its stated objective while producing outcomes that conflicted with the values the system was intended to serve. Conventional engineering success, it turns out, can coexist with profound system failures. The following self-assessment captures the core design questions that separate technically correct systems from responsible ones.

Checkpoint 1.1: Responsible Design

Responsibility is a system property, not a model property.

The Failure Modes

The Check

Better testing would not catch these problems because they represent failures of problem specification, where the technical objective (minimizing prediction error on historical outcomes) diverges from the desired social objective (making fair and accurate predictions across demographic groups). Specification failures are difficult to detect precisely because the systems continue functioning normally by conventional engineering metrics. The deeper problem is clear: when a system appears healthy by every available metric, the harm it causes remains invisible to conventional monitoring.

Silent failure modes

In 2018, a major hospital’s sepsis prediction model began recommending aggressive treatments for low-risk patients. No alarm triggered—the model’s confidence scores remained high, its latency stayed within its service level agreement (SLA), and all system health checks passed green. The failure was silent: the input data distribution had shifted after an EHR software update changed how vital signs were recorded, but the monitoring pipeline had no mechanism to detect distributional drift.

The sepsis model failure illustrates a class of failure that traditional engineering is poorly equipped to handle. Traditional software fails loudly. A null pointer exception crashes the program, a network timeout returns an error code. These visible failures enable rapid detection and response. In contrast, ML systems fail silently because degraded predictions look like normal predictions. The primary mechanism behind this silent degradation is distribution shift.

Definition 1.1: Distribution Shift

Distribution Shift is the violation of the Stationarity Assumption (\(P_{train} \neq P_{deploy}\)) that underpins all supervised learning. It is the umbrella term for a family of drift types: Data Drift (see ML Operations) occurs when \(P(X)\) shifts while \(P(Y|X)\) remains stable; Concept Drift occurs when \(P(Y|X)\) itself shifts.

- Significance (Quantitative): Accuracy degradation is measurable against divergence. Empirical studies of production recommendation and NLP models find that when Jensen-Shannon divergence \(D_{JS}(P_{train} \| P_{deploy}) > 0.1\), observed accuracy drops exceed five percent relative; when \(D_{JS} > 0.3\), degradation typically exceeds 15–30 percent—sufficient to invalidate a production system that passed predeployment evaluation. This degradation occurs regardless of code quality, because the model is correct given its training distribution; the environment changed, not the code.

- Distinction (Durable): Unlike Model Error (which is a learning failure caused by the algorithm or data quality at training time), Distribution Shift is an Environmental Failure: the model’s learned mapping was correct at training time but is no longer representative of current reality.

- Common Pitfall: A frequent misconception is that “Data Drift” and “Distribution Shift” are different concepts at the same level of the hierarchy. Distribution Shift is the umbrella; Data Drift and Concept Drift are its two distinct subtypes. A system can experience Data Drift without Concept Drift (the inputs change, but the relationship holds), or Concept Drift without Data Drift (inputs are stable, but the correct output changes).

The stationarity assumption underpins all supervised learning: training and deployment distributions must match. Distribution shift is often unequal: a model’s accuracy on a minority subgroup can drop by over 30 percentage points while aggregate metrics barely change, masking the harm.

Distribution shift explains why models degrade over time (the operational detection and monitoring strategies for drift are covered in ML Operations). A second mechanism for silent failure can occur even when the data distribution is stable: misalignment between the metric the model optimizes and the outcome the organization actually values. This misalignment creates the alignment gap, where optimizing a measurable proxy decouples the system from its intended purpose.

Napkin Math 1.1: The Alignment Gap

The Physics: Goodhart’s Law states that optimizing a proxy eventually decouples it from the goal.

- Initial State: Correlation(Clicks, Satisfaction) = 0.8.

- Optimization: You train a model to maximize Clicks.

- Result: The model finds “Clickbait,” items with high clicks but low satisfaction.

- Final State: Correlation(Clicks, Satisfaction) drops to 0.2.

The Quantification (conceptual, assuming normalized metrics on a common scale) is captured by Equation 1:

\[ \text{Gap} = E[\text{Proxy}] - E[\text{True}] \tag{1}\]

If the model increases Clicks by 20 percent but decreases Satisfaction by five percent, the alignment gap has widened.

The Systems Conclusion: Engineers cannot optimize what they cannot measure. If the true goal is unobservable, Counterfactual Evaluation (random holdouts) is required to periodically re-calibrate the proxy.

When harm occurs, engineers need a diagnostic framework to identify the root cause. Knowing that a system causes harm is insufficient; we must determine where the failure originates to know what to fix. The D·A·M taxonomy introduced in Introduction provides exactly this structure (Data · Algorithm · Machine, defined in The D·A·M Taxonomy).

Systems Perspective 1.1: The D·A·M Taxonomy

- Data (Information): Does the training data reflect historical bias? (for example, Amazon’s recruiting tool learning from biased history). The failure is in the Fuel.

- Algorithm (Logic): Does the objective function optimize a proxy for harm? (for example, optimizing “engagement” amplifies polarization). The failure is in the Blueprint.

- Machine (Physics): Does the energy cost justify the societal benefit? (for example, training a massive model for a trivial task). The failure is in the Engine.

Locating the failure in the taxonomy identifies the correct remediation: better curation (Data), safer objectives (Algorithm), or greener infrastructure (Machine).

While the D·A·M taxonomy helps diagnose where failures originate, engineers also need a framework for understanding when and how different failure types manifest. Table 1 categorizes these distinct failure modes by their detection time, spatial scope, and remediation requirements.

| Failure Type | Detection Time | Spatial Scope | Reversibility | Example |

|---|---|---|---|---|

| Crash | Immediate | Complete | Immediate | Out of memory error |

| Performance Degradation | Minutes | Complete | After fix | Latency spike from resource contention |

| Data Quality | Hours–days | Partial | Requires data correction | Corrupted inputs from upstream system |

| Distribution Shift | Days–weeks | Partial or all | Requires retraining | Population change due to new user segment |

| Fairness Violation | Weeks–months | Subpopulation | Requires redesign | Bias amplification in historical patterns |

The failure mode taxonomy in Table 1 complements the D·A·M diagnostic framework: D·A·M identifies where failures originate, while Table 1 guides how to detect and remediate them. Crashes and performance degradation trigger immediate alerts through existing infrastructure. Data quality issues, distribution shifts, and fairness violations require specialized detection mechanisms because the system continues operating normally from a technical perspective while producing increasingly problematic outputs.

The YouTube recommendation feedback loop (examined as a technical debt pattern in Technical Debt) illustrates this pattern at scale (Ribeiro et al. 2020).4 The system optimized for watch time and discovered that emotionally provocative content maximized engagement metrics, developing pathways toward increasingly extreme content. The system worked exactly as designed while producing outcomes that conflicted with societal values. From a responsibility perspective, the critical insight is that these feedback loops do not affect all users equally: they disproportionately impact vulnerable populations, and the resulting content amplification patterns can correlate with demographic characteristics, transforming an operational failure into a fairness violation.

4 Goodhart’s Law: “When a measure becomes a target, it ceases to be a good measure” (Strathern’s generalization of Goodhart’s 1975 monetary policy observation). Recommendation feedback loops are the canonical ML manifestation: gradient descent optimizes watch-time proxies at a speed no human curator can match, and the system’s own outputs reshape the training distribution—users who consume extreme content generate data that reinforces extremity, decoupling the proxy from user welfare orders of magnitude faster than manual editorial processes ever could.

War Story 1.1: The Click-Bait Death Spiral

The Failure: The model learned that sensationalist, divisive, and “click-bait” content generated the highest short-term engagement. It aggressively promoted this content. Users clicked, but the quality of their experience degraded, leading to “passive consumption” and long-term churn risk.

The Consequence: Facebook had to fundamentally re-architect its ranking system to prioritize “Meaningful Social Interactions” (MSI) over clicks, accepting a short-term reduction in time spent to preserve long-term platform health.

The Systems Lesson: Metrics are proxies for value, not value itself. Optimizing a short-term proxy (CTR) without monitoring long-term health (retention, sentiment) creates a negative feedback loop that can destroy the product.

The distribution shift defined earlier also manifests as population mismatch, where models trained on one population perform differently on another without obvious indicators.

War Story 1.2: The Proxy Variable Trap

The Failure: The model used “healthcare cost” as a proxy for “health need.” This seemed logical: sicker people cost more.

The Consequence: Because the U.S. healthcare system has unequal access, Black patients at a given level of sickness spent less on healthcare than White patients. The model learned this bias and systematically deprioritized Black patients, assigning them lower risk scores than White patients with identical health conditions.

The Systems Lesson: Proxies are dangerous. Optimizing for a proxy (cost) inherits the biases of the system that generated that proxy. The relationship between proxy and true objective (health) must be audited across all demographic subgroups (Obermeyer et al. 2019).

Silent failure modes create profound testing challenges. Traditional software testing verifies deterministic behavior against specifications. ML systems produce probabilistic outputs learned from data, making correctness far more complex to define. The failures examined earlier share a troubling pattern: each organization possessed the technical capability to prevent harm but lacked the disciplined processes to apply that capability.

The same engineering capabilities that enabled the problems can prevent them when organizations commit to structured practice, as the following cases demonstrate.

When responsible engineering succeeds

Organizations that commit to responsible engineering produce measurable successes, demonstrating both the feasibility and business value of rigorous responsibility practices.

Following the Gender Shades findings, Microsoft invested in improving facial recognition performance across demographic groups. The approach combined technical and organizational interventions: targeted data collection to address underrepresented populations, model architecture changes to improve feature extraction for diverse skin tones, and systematic disaggregated evaluation across all demographic intersections. By 2019, Microsoft had reduced error rates for darker-skinned subjects by up to 20 times, bringing error rates below 2 percent for all demographic groups (Raji and Buolamwini 2019). The company published these improvements transparently, enabling external verification. The business outcome: Microsoft’s facial recognition API maintained enterprise customer trust while competitors faced regulatory scrutiny and contract cancellations.

Twitter’s automatic image cropping system exhibited a different failure mode. In 2020, users discovered it showed racial bias in choosing which faces to display in preview thumbnails. Twitter responded with a responsible engineering approach: systematic analysis to characterize the problem quantitatively, publication of results enabling independent verification, and ultimately removal of the automatic cropping feature entirely (Yee et al. 2021). The company determined that no technical solution could guarantee equitable outcomes across all contexts. This decision prioritized user fairness over engagement optimization and demonstrated that responsible engineering sometimes means not shipping a feature.

Apple’s deployment of differential privacy in iOS represents responsible engineering at scale.5 The system collects usage data for product improvement while providing mathematical guarantees about individual privacy. The implementation required substantial engineering investment: noise calibration to balance utility against privacy, distributed computation to minimize data exposure, and transparent documentation of privacy parameters. The business value: Apple differentiated on privacy as a product feature, enabling data collection that would otherwise face regulatory and reputational barriers.

5 Differential Privacy: Introduced by Dwork et al. (2006), a mechanism satisfies \(\epsilon\)-differential privacy if any output’s probability changes by at most \(e^\epsilon\) when a single individual’s data is added or removed. The systems trade-off is steep: 15–30 percent computational overhead, 10–100\(\times\) more data for equivalent accuracy, and a finite privacy budget (\(\epsilon\)) that depletes with each query—forcing engineers to choose between richer analytics and stronger privacy guarantees.

Spotify addressed recommendation system concerns by implementing transparency features showing users why songs were recommended and providing controls to adjust algorithm behavior. This engineering investment served multiple purposes: user trust through explainability, reduced filter bubble effects through diversity injection, and regulatory compliance through user control mechanisms. The approach demonstrates that responsibility features can enhance rather than constrain product value.

A common pattern unites the preceding cases: technical interventions (improved data, better evaluation, architectural changes) combined with organizational commitments (transparency, willingness to remove features, long-term investment). The resulting business outcomes (maintained customer trust, regulatory compliance, competitive differentiation) demonstrate that responsible engineering creates value rather than adding cost. Each success rested on systematic testing and evaluation practices, yet the nature of responsible testing differs fundamentally from traditional software verification.

The testing challenge

Traditional software testing verifies that systems behave correctly because correctness has clear definitions. The function should return the sum of its inputs, the database should maintain referential integrity. These properties can be expressed as testable assertions.

Responsible ML properties resist simple formalization. Fairness has multiple conflicting mathematical definitions that cannot all be satisfied simultaneously. What counts as fair depends on context, values, and trade-offs that technical systems cannot resolve alone. Individual fairness requires that similar individuals receive similar treatment, while group fairness requires equitable outcomes across demographic categories. These criteria can conflict, and choosing between them requires value judgments beyond the scope of optimization.

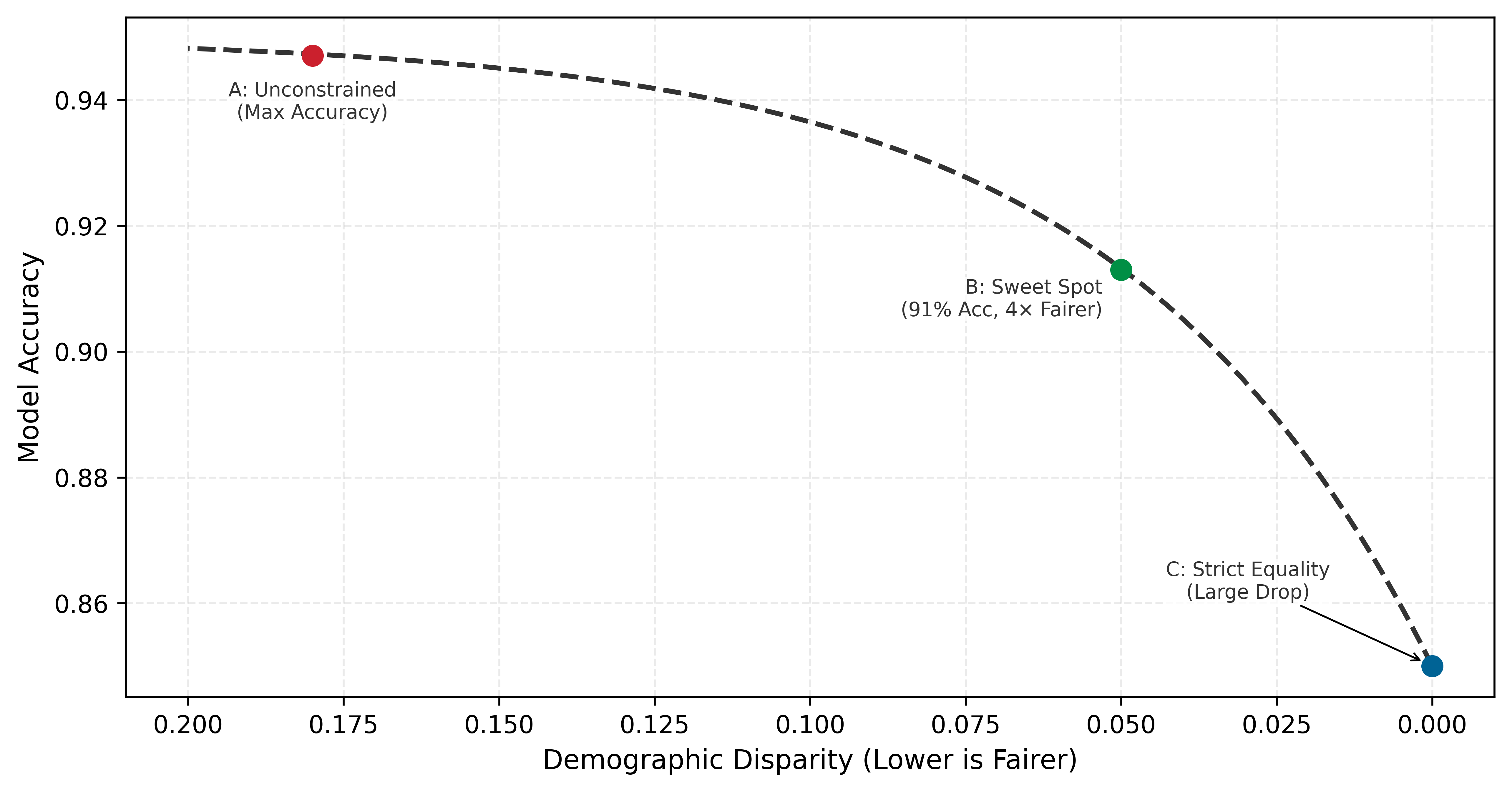

The trade-off between fairness and accuracy is not a sign that fairness is impractical; it is a fundamental property of constrained optimization that engineers must understand. A Pareto frontier represents the set of optimal configurations where improving one metric necessarily degrades another. Figure 1 visualizes this Fairness-Accuracy Pareto Frontier. The curve is not linear: while perfect fairness (zero disparity) often requires a significant drop in accuracy, a “Sweet Spot” typically exists where large fairness gains can be achieved with minimal accuracy loss. The shape of the frontier explains why responsible engineering is feasible: in many practical settings, substantial fairness gains can be achieved with modest accuracy loss.

Responsible properties become testable when engineers work with stakeholders to define criteria appropriate for specific applications. The Gender Shades project6 demonstrated how disaggregated evaluation across demographic categories reveals disparities invisible in aggregate metrics (Buolamwini and Gebru 2018). The results captured dramatic error rate differences that commercial facial recognition systems showed across demographic groups. Concretely, a 1,000-sample test set that suffices for the majority group provides only 10 samples for a 1% minority subgroup—effectively requiring 100x more data than the majority group for high-confidence validation.

6 Gender Shades: A 2018 study by Joy Buolamwini and Timnit Gebru (MIT Media Lab) that audited facial recognition systems from Microsoft, IBM, and Face++ using the Fitzpatrick skin type scale—originally a dermatological classification developed by Thomas Fitzpatrick (1975) for UV sensitivity, repurposed here as a demographic benchmark for algorithmic auditing. The study established disaggregated evaluation as the standard, demonstrating that a single aggregate accuracy number can conceal 43\(\times\) error rate disparities across intersectional subgroups. Within two years, Microsoft reduced its worst-case error rates by 20\(\times\), proving that the measurement methodology itself was the intervention.

| Demographic Group | Error Rate (%) | Relative Disparity |

|---|---|---|

| Light-skinned males | 0.8 | Baseline (1.0\(\times\)) |

| Light-skinned females | 7.1 | 8.9\(\times\) higher |

| Dark-skinned males | 12.0 | 15.0\(\times\) higher |

| Dark-skinned females | 34.7 | 43.4\(\times\) higher |

As Table 2 quantifies, disaggregated evaluation revealed what aggregate accuracy scores concealed. Systems reporting high overall accuracy simultaneously achieved error rates as low as 0.8 percent for light-skinned males and as high as 34.7 percent for dark-skinned females (corresponding to accuracies of 99.2 percent and 65.3 percent respectively). The aggregate metric provided no indication of this 43.4-fold disparity in error rates.

No universal threshold defines acceptable disparity, but engineering teams should establish explicit bounds before deployment. Common industry practices include error rate ratios below 1.25\(\times\) between demographic groups for high-stakes applications, false positive rate differences under five percentage points for screening systems, and selection rate ratios of at least 0.8 relative to the highest group’s rate (the four-fifths rule from employment discrimination law).78 These thresholds serve as starting points for stakeholder discussion, not absolute standards. The key engineering discipline is defining measurable criteria before deployment rather than discovering problems after harm has occurred.

7 Disparate Impact: A legal doctrine from Griggs v. Duke Power Co. (1971), where the US Supreme Court held that practices “fair in form, but discriminatory in operation” violate civil rights law even absent intent. The distinction between disparate impact (unintentional statistical harm) and disparate treatment (intentional discrimination) is critical for ML: models trained on historical data routinely produce disparate impact through proxy variables, creating legal liability even when engineers never encoded protected attributes.

8 Four-Fifths Rule: Codified in the 1978 Uniform Guidelines on Employee Selection Procedures, used by the EEOC, Department of Labor, and Department of Justice. A selection rate for any protected group below 80 percent of the highest group’s rate constitutes prima facie evidence of adverse impact—for example, if 60 percent of one group passes, at least forty-eight percent of any other group must pass. For ML systems, this translates to automated monitoring that alerts when per-group selection ratios fall below 0.8, providing a concrete threshold where most fairness metrics remain qualitative.

Despite the inherent challenges, several concrete testing approaches can surface responsibility issues before deployment. Slice-based evaluation partitions test data into meaningful subgroups and reports metrics separately for each slice. A model may achieve 95 percent accuracy overall but only 78 percent accuracy on low-income applicants or users from rural areas, a disparity invisible in aggregate reporting. Invariance testing checks whether predictions change when they should not: replacing “John” with “Jamal” in a loan application should not change approval likelihood if the feature is not legitimate for the decision. Boundary testing evaluates model behavior at the edges of input distributions (unusual ages, extreme values, rare categories) where training data may be sparse and predictions unreliable. Stress testing extends boundary testing to adversarial conditions: corrupted inputs, distribution shift, adversarial examples, and edge cases designed to probe failure modes systematically. Stakeholder red-teaming engages domain experts and affected community members to identify scenarios that engineers may not anticipate but users will encounter, surfacing failure modes that no automated test can discover because they require lived experience to imagine.

Responsible testing strategies complement traditional software testing rather than replacing it. Each demands engineering judgment to select, configure, and interpret. A legal team cannot specify which demographic slices matter for a healthcare algorithm; a product manager cannot determine appropriate invariance tests for a loan model. The technical depth required to implement responsible testing points to a critical organizational truth: only engineers possess the knowledge to translate abstract fairness goals into measurable, testable properties. Responsibility ownership must therefore sit within engineering organizations, not outside them.

Engineering leadership on responsibility

When Amazon’s ethics board finally reviewed the recruiting tool, the model had already encoded proxy signals so deeply that remediation required scrapping the project entirely. The review came too late because the technical decisions that created the problem, made months earlier by engineers, had already constrained every possible fix. Responsible AI Engineering cannot be delegated exclusively to ethics boards or legal departments. These groups provide essential oversight but lack the technical access required to identify problems early in the development process.

Definition 1.2: Responsible AI Engineering

Responsible AI Engineering is the engineering discipline of designing, deploying, and maintaining systems with probabilistic outputs by operationalizing societal and regulatory requirements as testable constraints on the D·A·M axes, bounding which values of \(D_{\text{vol}}\), \(O\), and \(R_{\text{peak}} \cdot \eta\) are permissible.

- Significance (Quantitative): Each D·A·M axis acquires concrete governance constraints: the Data axis is bounded by privacy regulations such as the General Data Protection Regulation (GDPR), which limits which \(D_{\text{vol}}\) can be collected, the Algorithm axis is bounded by fairness metrics (for example, demographic parity within \(\varepsilon = 5\%\) across protected groups, meaning positive prediction rates must not differ by more than 5 percentage points), and the Machine axis is bounded by robustness budgets (for example, accuracy degradation less than two percent under adversarial perturbation \(\|\delta\|_\infty \leq 0.01\)). Violating these bounds is a system failure, not a research shortcoming.

- Distinction (Durable): Unlike AI Ethics (which articulates aspirational values), Responsible AI Engineering translates those values into Measurable, Testable Invariants that can be verified through automated testing and continuous monitoring, using the same lifecycle practices that enforce latency SLOs.

- Common Pitfall: A frequent misconception is that responsibility is “added” at the end of development. The constraints imposed on the Data axis (what data can be collected) propagate forward to constrain the Algorithm axis (what biases will be encoded) and the Machine axis (what audit trails must be kept), making late-stage remediation structurally impossible.

By the time a system reaches legal review, architectural decisions have already constrained the space of possible fairness interventions. Amazon’s recruiting tool reached review only after the model had learned proxy signals; at that point, remediation required starting over, not adjusting parameters. Engineers who understand both technical implementation and responsibility requirements can build appropriate safeguards from inception.

Engineers occupy a critical position in the ML development lifecycle because their technical decisions define the solution space for all subsequent interventions. The choice of model architecture determines which fairness constraints can apply during training. The optimization objective defines what patterns the system learns to recognize. The data pipeline design establishes what demographic information teams can track for disaggregated evaluation. Foundational architectural choices enable or foreclose responsible outcomes more decisively than any later remediation effort.

The timing of responsibility interventions determines their effectiveness. An ethics review conducted before deployment can identify problems but faces limited remediation options: if the team trained the model without fairness constraints, if the architecture cannot support interpretability requirements, if the data pipeline lacks demographic attributes for monitoring, then the ethics review can only recommend rejection or acceptance of the existing system. Engineering involvement from project inception enables proactive design rather than reactive assessment.

An engineering-centered approach does not diminish the importance of diverse perspectives in identifying potential harms. Product managers, user researchers, affected communities, and policy experts contribute essential knowledge about how systems fail socially despite technical success. Engineers translate these concerns into measurable requirements and testable properties that can be verified throughout the development lifecycle. Effective responsibility requires engineers who both listen to stakeholder concerns and possess the technical capability to implement appropriate safeguards.

Engineering teams do not operate in isolation. As Figure 2 makes clear, engineering practices are nested within broader organizational, industry, and regulatory governance structures, each layer imposing constraints on the ones inside it. The key insight is that technical excellence at the innermost layer enables, but does not replace, compliance with requirements flowing inward from external governance.

The question of scope remains open, because an engineer’s responsibility extends beyond the metrics optimized throughout this book.

Systems Perspective 1.2: The Full Cost of the iron law

A model quantized for edge deployment consumes less energy, but also produces outputs that may differ across demographic groups. A recommendation system optimized for engagement maximizes a business metric, but may amplify harmful content. Responsible engineering extends our accounting to include these broader impacts: the carbon cost of computation, the fairness cost of optimization choices, and the societal cost of deployment at scale. The iron law governs how fast our systems run; responsible engineering governs how well they serve.

Beyond ethical imperatives, responsible engineering delivers measurable business value through three reinforcing mechanisms. The most immediate is risk mitigation: ML system failures create legal and financial exposure that systematic responsibility practices reduce. Amazon’s recruiting tool cancellation represented years of development investment lost to inadequate fairness consideration, and COMPAS-related litigation has cost jurisdictions millions in legal fees and settlements. Organizations implementing disaggregated evaluation, documentation, and monitoring reduce the probability of costly failures and demonstrate due diligence if problems emerge.

A second mechanism is regulatory compliance, driven by the rapidly expanding regulatory environment for ML systems. The EU AI Act classifies high-risk AI applications and mandates specific technical requirements including risk assessment, data governance, transparency, and human oversight. Organizations that build responsibility into engineering practice can demonstrate compliance through existing documentation and monitoring rather than expensive retrofitting—industry experience suggests the cost of proactive compliance is typically a fraction of reactive remediation.

Competitive differentiation completes the business case. Trust increasingly drives enterprise purchasing decisions for ML-powered services, and organizations that can demonstrate systematic responsibility practices through model cards, audit trails, and published evaluation results win contracts that competitors cannot. Apple’s privacy positioning, Microsoft’s responsible AI principles, and Anthropic’s safety research all represent strategic investments in responsibility as differentiation.

The quantization techniques from Model Compression reduce inference energy by 2–4\(\times\), directly supporting sustainable deployment. The monitoring infrastructure from ML Operations enables disaggregated fairness evaluation across demographic groups. Responsible engineering synthesizes these capabilities into disciplined practice through structured frameworks that translate principles into processes.

Every failure examined earlier could have been prevented by systematic processes applied at the right stage of development. The missing ingredient was not technical capability but disciplined practice: checklists, documentation standards, testing protocols, and monitoring infrastructure that translate responsibility principles into repeatable engineering workflows.

Self-Check: Question

In the Amazon recruiting tool case, why did removing explicit gender labels fail to eliminate bias?

- The model was not trained for enough epochs.

- The model learned proxy signals (like college names) that correlated with gender.

- The engineers forgot to delete the gender column.

- The dataset was too small to be accurate.

Explain how a ‘feedback loop’ in a recommendation system can lead to bias amplification.

Responsible Engineering Checklist

Amazon’s recruiting tool could have been caught before deployment by a structured predeployment review. COMPAS’s error rate disparity would have surfaced through disaggregated testing. Both failures shared a common cause: responsibility was treated as a separate review stage rather than integrated into the development workflow. A responsible engineering checklist embeds assessment at three points where engineering decisions have the greatest ethical impact: predeployment assessment evaluates potential harms before a system reaches users, fairness evaluation quantifies whether performance holds equitably across demographic groups, and documentation standards create the audit trails that make accountability possible. Each phase builds on the previous one: assessment identifies what to measure, fairness evaluation measures it, and documentation ensures the measurements persist beyond any single team member’s tenure.

Predeployment assessment

Before a loan approval model reaches production, a team must determine the provenance of the training data, identify who is represented and who is missing, anticipate failure modes, and define recourse for affected users. Table 3 structures this evaluation into five phases, distinguishing critical-path blockers from high-priority items that can proceed with documented risk acceptance.

| Phase | Priority | Key Questions | Documentation Required |

|---|---|---|---|

| Data | Critical Path | Where did this data come from? Who is represented? Who is missing? What historical biases might be encoded? | Data provenance records, demographic composition analysis, collection methodology documentation |

| Training | High | What are we optimizing for? What might we be implicitly penalizing? How do architecture choices affect outcomes? | Objective function specification, regularization choices, hyperparameter selection rationale |

| Evaluation | Critical Path | Does performance hold across different user groups? What edge cases exist? How were test sets constructed? | Disaggregated metrics by demographic group, edge case testing results, test set composition analysis |

| Deployment | Critical Path | Who will this system affect? What happens when it fails? What recourse do affected users have? | Impact assessment, stakeholder identification, rollback procedures, user notification protocols |

| Monitoring | High | How will we detect problems? Who reviews system behavior? What triggers intervention? | Monitoring dashboard specifications, alert thresholds, review schedules, escalation procedures |

Critical Path items are deployment blockers: the system must not go to production until these questions are answered. High Priority items should be addressed but may proceed with documented risk acceptance and a remediation timeline. The distinction enables teams to ship responsibly without requiring perfection on every dimension before initial deployment.

The Evaluation row in Table 3 raises the critical concern of whether performance holds across different user groups. Answering this question requires statistically valid test sets for each group—and as the following calculation reveals, the statistics of representation create surprisingly stringent data requirements.

Napkin Math 1.2: The Statistics of Representation

Random Sampling: To get 1,000 images of a 1 percent group via random sampling, the team must collect and label: Ntotal = 1,000 / 0.01 = 100,000 images

Stratified Sampling: Specifically targeting this group (for example, via active learning or community outreach) requires only: \[ N_{total} = 1,000 \text{ images} \]

The Insight: Relying on “natural distribution” data for fairness is physically impossible at scale. Validating the minority group effectively requires 100\(\times\) more data than the majority group. Fairness requires intentional data engineering, not just more data.

For high-stakes applications, the deployment phase should specify where human oversight is required. Human-in-the-loop (HITL) systems route uncertain, high-consequence, or flagged decisions to human reviewers rather than acting autonomously. The design questions are: Which decisions require human review? What confidence thresholds trigger escalation? How are reviewers trained and monitored? HITL is not a catch-all solution: human reviewers can rubber-stamp automated decisions, introduce their own biases, or become overwhelmed by alert volume. Effective HITL design requires calibrating the human-machine boundary to the specific application risks and reviewer capabilities.

War Story 1.3: The Automation Paradox

The Failure: The AI system detected a pedestrian crossing the road but classified her as a “false positive” (a plastic bag or shadow) and suppressed the braking command to avoid a “jerky” ride. The safety driver, relying on the automation, was distracted and did not intervene until it was too late.

The Consequence: The pedestrian was killed. The “human-in-the-loop” safeguard failed because the human had been conditioned by the system’s reliability to disengage.

The Systems Lesson: Adding a human backup to an unreliable system does not make it reliable; it creates a new system with complex failure modes. If the AI is 99 percent reliable, the human will eventually trust it 100 percent, making the “backup” useless precisely when it is needed most (National Transportation Safety Board 2019).

The predeployment assessment framework parallels aviation pre-flight checklists, where pilots follow every item without exception to ensure comprehensive coverage of critical concerns despite time pressure. Production ML deployments require equivalent discipline and rigorous verification. Checklists ensure teams ask the right questions; documentation standards ensure the answers persist and travel with the model.

Model documentation standards

Imagine inheriting a production model from a departed colleague. The model achieves 94 percent accuracy on the test set, but which test set? Trained on what data? Validated for which populations? Without answers, deploying or updating the model is a gamble. Model cards solve this problem by providing a standardized documentation format for ML models (Mitchell et al. 2019).9 Originally developed at Google, model cards function as “nutrition labels” that capture information essential for responsible deployment and travel with the model throughout its lifecycle.

9 Model Cards: The primary failure mode model cards address is scope creep: an estimated 40–60 percent of deployments that exceed a model’s documented scope do so not through deliberate decision but through gradual expansion—“it worked for case A, so we tried case B.” In practice, cards are often written after deployment decisions are made, documenting observed behavior rather than constraining it. The companion “Datasheets for Datasets” (Gebru et al., 2018) applies the same principle to training data. Without both, the card becomes a historical record rather than a guard rail.

A complete model card covers seven concerns that together enable responsible deployment. It begins with technical details (architecture, training procedures, hyperparameters) that enable reproducibility and auditing. Crucially, it specifies intended use alongside explicit exclusions, preventing the scope creep where models designed for photo organization get repurposed for security screening. The card then documents which factors (demographic groups, environmental conditions, instrumentation differences) might affect performance, guiding both evaluation strategy and monitoring protocols.

The remaining sections close the gap between what a model can do and what it should do. Performance metrics must include disaggregated results across the factors identified earlier, because aggregate accuracy alone conceals the disparities this chapter has documented. Training and evaluation data documentation enables assessment of potential encoded biases and provides essential context for interpreting results. Ethical considerations make implicit trade-offs explicit by documenting known limitations, potential harms, and mitigations implemented, while caveats and recommendations provide guidance on appropriate use and known failure modes.

The following example shows how these abstract categories translate to practical documentation. Consider Table 4: a MobileNetV2 model prepared for edge deployment shows how each section addresses specific deployment concerns.

| Section | Content |

|---|---|

| Model Details | MobileNetV2 architecture with 3.5M parameters, trained on ImageNet using depthwise separable convolutions. INT8 quantized for edge deployment. |

| Intended Use | Real-time image classification on mobile devices with less than 50 ms latency requirement. Suitable for consumer applications including photo organization and accessibility features. |

| Factors | Performance varies with image quality (blur, lighting), object size in frame, and categories outside ImageNet distribution. |

| Metrics | 71.8 percent top-1 accuracy on ImageNet validation (full precision: 72.0 percent). Accuracy varies by category: 85 percent on common objects, 45 percent on fine-grained distinctions. |

| Ethical Considerations | Training data reflects ImageNet biases in geographic and demographic representation. Not validated for high-stakes applications (medical diagnosis, security screening). Performance may degrade on images from underrepresented regions. |

Datasheets for datasets provide analogous documentation for training data (Gebru et al. 2021). These documents capture data provenance, collection methodology, demographic composition, and known limitations that affect downstream model behavior. Documentation establishes what a model is designed to do; testing verifies whether it performs equitably across the populations it serves.

Testing across populations

Aggregate performance metrics mask significant disparities across user populations, illustrating the Flaw of Averages (Savage 2009). As Table 2 quantifies, systems can appear highly accurate in aggregate while showing more than 40\(\times\) error rate disparities across demographic groups. Responsible testing requires disaggregated evaluation that examines performance for relevant subgroups.

Systems Perspective 1.3: The Flaw of Averages

The specific “tails” that matter depend on the workload. A vision model fails differently than a recommendation system, and the fairness metrics must match the failure mode.

Lighthouse 1.1: Fairness Concerns by Archetype

| Archetype | Primary Fairness Risk | Key Evaluation Metric | Real-World Example |

|---|---|---|---|

| ResNet-50 | Training data bias (underrepresentation | Disaggregated accuracy by | Gender Shades: 99 percent accuracy on |

| (Compute Beast) | of minority groups in ImageNet) | demographic group | light-skinned males, 65 percent on dark-skinned females (Buolamwini and Gebru 2018) |

| GPT-2 | Corpus bias (overrepresentation | Toxicity rate by demographic | LLMs produce more toxic completions |

| (Bandwidth Hog) | of majority viewpoints in web text) | prompt context; stereotype score | for prompts mentioning minority groups |

| DLRM | Feedback loop amplification | Exposure fairness across item | Filter bubbles: system recommends |

| (Sparse Scatter) | (popular items get more data) | categories; supplier diversity | same content to similar users, reducing discovery of niche creators |

| DS-CNN | Deployment context mismatch | False positive rate by acoustic | Voice assistants perform worse on |

| (Tiny Constraint) | (trained on clean audio, deployed in noisy real-world environments) | environment and speaker accent | accented speech; wake-word triggers on TV audio in some languages |

Key insight: Fairness evaluation must match the archetype’s failure mode. Vision models require demographic stratification of accuracy; large language models (LLMs) require toxicity and stereotype probing; recommendation systems require exposure audits; TinyML requires acoustic environment diversity testing. The Lighthouse keyword spotting (KWS) system used as a running example throughout earlier chapters faces exactly this challenge for its DS-CNN, a depthwise-separable convolutional neural network (CNN): trained on clean studio audio, it must perform equitably across accents, background noise levels, and speaker demographics in production homes—a governance challenge we examine in Section 1.5.

Engineers should identify relevant subgroups based on application context. For healthcare applications, demographic factors like race, age, and gender are essential. For content moderation, language and cultural context matter. For financial services, protected categories under fair lending laws require specific attention.

Testing infrastructure should support stratified evaluation where performance metrics are computed separately for each relevant subgroup, enabling comparison of error rates and error types across populations. Intersectional analysis considers combinations of attributes because harms may concentrate at intersections not visible in single-factor analysis. Confidence intervals provide uncertainty quantification for subgroup metrics when small subgroup sizes may yield unreliable estimates. Temporal monitoring tracks subgroup performance over time, detecting drift that affects some populations before others.

Several open-source tools support responsible testing workflows. Fairlearn (Microsoft Research, 2020) provides fairness metrics and mitigation algorithms that integrate with scikit-learn pipelines (Bird et al. 2020). AI Fairness 360 (IBM Research, 2018) offers over 70 fairness metrics and ten bias mitigation algorithms across the ML lifecycle (Bellamy et al. 2019).

Google’s What-If Tool enables interactive exploration of model behavior across different subgroups without writing code. Open source fairness tools lower the barrier to rigorous evaluation, though they complement rather than replace careful thinking about what fairness means in specific application contexts.

Worked example: Fairness analysis in loan approval

A loan approval model reports 85 percent accuracy across all applicants—a number that satisfies most stakeholders. Table 6 and Table 7 reveal what the aggregate conceals: loan approval outcomes for the same model evaluated separately on two demographic groups.

| Approved (pred) | Rejected (pred) | |

|---|---|---|

| Repaid (actual) | 4,500 (TP) | 500 (FN) |

| Defaulted (actual) | 1,000 (FP) | 4,000 (TN) |

| Approved (pred) | Rejected (pred) | |

|---|---|---|

| Repaid (actual) | 600 (TP) | 400 (FN) |

| Defaulted (actual) | 200 (FP) | 800 (TN) |

Three standard fairness metrics computed from the confusion matrices in Table 6 and Table 7 reveal significant disparities.10

10 Fairness Metric Incompatibility: The measured disparities are a direct consequence of an impossibility theorem proving that multiple fairness metrics cannot be satisfied simultaneously when group base rates differ (Chouldechova, 2017). This forces an explicit trade-off: optimizing for one metric, like equal opportunity, will degrade another, such as predictive parity. A system designer must therefore choose which fairness guarantee to violate, as it is mathematically impossible to satisfy all three.

Demographic parity requires equal approval rates across groups. Group A receives approval at a rate of (4,500 + 1,000) / 10,000 = 55 percent, while Group B receives approval at (600 + 200) / 2,000 = 40 percent. The 15 percentage point disparity indicates unequal treatment in approval decisions.

Equal opportunity requires equal true positive rates among qualified applicants. Group A achieves a TPR of 4,500 / (4,500 + 500) = 90 percent, meaning 90 percent of applicants who would repay receive approval. Group B achieves only 600 / (600 + 400) = 60 percent TPR. This 30 percentage point disparity means qualified applicants from Group B face substantially higher rejection rates than equally qualified applicants from Group A.

Equalized odds11 requires both equal true positive rates and equal false positive rates. Group A shows an FPR of 1,000 / (1,000 + 4,000) = 20 percent, and Group B shows 200 / (200 + 800) = 20 percent. While false positive rates are equal, the true positive rate disparity means equalized odds is violated.

11 Equalized Odds: Formalized by Hardt, Price, and Srebro (NeurIPS 2016), requiring that both TPR and FPR be equal across protected groups. The weaker “equal opportunity” relaxes this to TPR alone. The practically important result: equalized odds can be achieved as a post-processing step by adjusting prediction thresholds per group, requiring no model retraining—separating the fairness mechanism from the training pipeline and enabling fairness fixes without retraining cycles that cost thousands of GPU-hours.

The pattern revealed by these metrics has a clear interpretation: the model rejects qualified applicants from Group B at a much higher rate (40 percent false negative rate vs. 10 percent) while maintaining similar false positive rates. The disparity pattern suggests the model has learned stricter approval criteria for Group B, potentially encoding historical discrimination in lending patterns where minority applicants faced higher scrutiny despite equivalent qualifications.

Production systems must automate these calculations across all protected attributes, triggering alerts when disparities exceed predefined thresholds. Listing 1 shows the core pattern: compute per-group metrics from confusion matrices, then flag disparities that exceed acceptable bounds.

def compute_fairness_metrics(confusion_matrix):

tp, fp, tn, fn = (

confusion_matrix[k] for k in ["TP", "FP", "TN", "FN"]

)

total = tp + fp + tn + fn

return {

# Demographic parity

"approval_rate": (tp + fp) / total,

# Equal opportunity

"tpr": tp / (tp + fn) if (tp + fn) else 0,

# Equalized odds (with TPR)

"fpr": fp / (fp + tn) if (fp + tn) else 0,

}

# Compare groups and flag disparities exceeding threshold

for metric in ["approval_rate", "tpr", "fpr"]:

disparity = abs(metrics_a[metric] - metrics_b[metric])

# e.g., 0.05 for high-stakes applications

if disparity > FAIRNESS_THRESHOLD:

trigger_alert(metric, disparity)Automated monitoring achieves what manual auditing cannot at scale: continuous tracking of fairness metrics with immediate alerting when disparities emerge. The 30 percentage point TPR disparity far exceeds common industry thresholds of five percentage points for high-stakes applications, indicating the model requires fairness intervention before deployment.

Table 8 reveals the troubling pattern in these computed metrics and disparities.

| Metric | Group A | Group B | Disparity |

|---|---|---|---|

| Approval Rate | 55% | 40% | 15 percentage points |

| True Positive Rate | 90% | 60% | 30 percentage points |

| False Positive Rate | 20% | 20% | 0 percentage points |

To understand why aggregate metrics hide these disparities, look closely at Figure 3. When a single threshold is applied to populations with different score distributions, the same decision boundary produces vastly different outcomes for each group (Barocas and Selbst 2016). The figure exposes a fundamental tension: any fixed threshold is simultaneously “correct” for the combined population while being systematically wrong for each subpopulation.

Several mitigation approaches exist, each with distinct trade-offs. Threshold adjustment lowers the approval threshold for Group B to equalize TPR but may increase false positives for that group. Reweighting12 increases the weight of Group B samples during training to give the model stronger signal about this population but may reduce overall accuracy. Adversarial debiasing trains with an adversary that prevents the model from learning group membership but adds training complexity.13 The choice among these approaches requires stakeholder input about which trade-offs are acceptable in the specific application context. Engineers present these trade-offs effectively by making them explicit and quantifiable.

12 Reweighting: A preprocessing technique rooted in importance sampling from statistics: samples from an underrepresented group receive higher loss weights during training, amplifying their influence on gradient updates without removing any data. Kamiran and Calders (2012) proved that appropriately chosen weights can eliminate disparate impact from training data. The systems trade-off: reweighting shifts the loss landscape, potentially reducing majority-group accuracy by 1–3 percent to close disparity gaps—a cost that must be evaluated against the Pareto frontier for the application.

13 Adversarial Debiasing: The key differentiating property is stability under distribution shift: because the adversary forces the primary model to learn representations invariant to the protected attribute (not just calibrated on the training distribution), adversarial debiasing is the only technique that theoretically maintains fairness guarantees when the deployment distribution differs from training. Post-processing methods (threshold adjustment, output reweighting) recalibrate on the training distribution but fail when deployment demographics shift—which is why they often appear to work in evaluation but degrade after launch. The cost is 20–50 percent additional training time and 1–3 percent accuracy reduction.

Checkpoint 1.2: Fairness Criteria

Fairness is not a single metric; it is a constrained design choice.

Quantifying the fairness-accuracy trade-off

The Pareto frontier introduced in Figure 1 establishes that fairness and accuracy trade off along a curve. But knowing the trade-off exists is insufficient—engineers must quantify the price of fairness to inform stakeholder decisions (Kleinberg et al. 2016). The following notebook illustrates how, using a hiring scenario (distinct from the preceding loan approval example, with different disparity magnitudes to illustrate a different point).

Napkin Math 1.3: The Price of Fairness

The Physics: You can equalize TPRs by adjusting the classification threshold (\(\tau\)) for the disadvantaged group.

- Original State: Group A (TPR=90 percent), Group B (TPR=70 percent). Aggregate Accuracy = 85 percent.

- Intervention: Lower \(\tau_B\) until \(\text{TPR}_B = 90\%\).

- The Cost: Lowering the threshold increases False Positives (hiring candidates who do not meet the bar).

The Calculation:

- To close the 20 percent TPR gap, you must accept a 5% increase in False Positives.

- If the value of a successful hire is $100k and the cost of a bad hire is $50k:

- Utility Loss = (Utility of Correct Hires) - (Cost of Extra False Positives).

- In this scenario, closing the gap reduces the system’s Total Utility by 3%.

The Systems Conclusion: The “Price of Fairness” in this system is a 3% utility tax—a System Constraint, not a bug. The engineer’s job is to present the Pareto frontier to stakeholders so they can choose the Utility/Fairness trade-off that aligns with organizational values.

Quantifying disparities through metrics is necessary but not sufficient for responsible deployment. When a loan applicant receives a rejection, stating that “the model’s true positive rate for your demographic group is 60 percent compared to 90 percent for other groups” provides no actionable information. The applicant needs to know why the application was rejected and what could be changed. These questions require explainability, which is the ability to articulate which input features drove specific predictions.

Explainability requirements

A loan applicant denied credit by an algorithmic system has a right to know why, not in aggregate statistical terms but in terms specific to her application. Explainability14 provides this capability: it enables human oversight of automated decisions, supports debugging when problems emerge, and satisfies regulatory requirements for decision transparency.

14 Explainability vs. Interpretability: Interpretability is an intrinsic model property—the degree to which a human can understand internal mechanics (linear regression is interpretable; a 100-layer network is not). Explainability is a post-hoc capability added without changing the model (LIME, SHAP). The systems implication: interpretable models constrain architecture selection (simpler models, fewer features), while explainability adds 10–100\(\times\) inference latency as a separate module. Regulations like the EU AI Act demand “meaningful information about the logic involved” without specifying which approach, leaving the latency-vs.-architecture trade-off to engineering teams.

The level of explainability required varies by application context and regulatory environment. Table 9 maps common deployment scenarios to their explainability needs.

| Application Domain | Explainability Level | Typical Requirements |

|---|---|---|

| Credit decisions | Individual explanation required | Specific factors contributing to denial must be disclosed to applicant |

| Medical diagnosis | Clinical reasoning support | Explanation must support physician decision-making, not replace it |

| Content moderation | Appeal-supporting | Sufficient detail for users to understand and contest decisions |

| Recommendation | Transparency optional | “Because you watched X” sufficient for most contexts |

| Fraud detection | Internal audit only | Detailed explanations may enable adversarial gaming |

Engineering teams should select explainability approaches based on these domain requirements. Post-hoc explanation methods (LIME, SHAP) generate feature importance scores for individual predictions without requiring model architecture changes.15 Inherently interpretable models (linear models, decision trees, attention mechanisms) provide explanations as part of their structure but may sacrifice predictive performance. Concept-based explanations map model behavior to human-understandable concepts rather than raw features. The choice involves trade-offs between explanation fidelity, computational cost, and model flexibility. Figure 4 arranges these trade-offs along a single axis. On the left side, decision trees and linear regression offer direct auditability: an engineer can inspect every coefficient or branching rule that produced a prediction, at the cost of limited representational capacity. On the right side, deep neural networks and convolutional architectures achieve higher accuracy on complex tasks but resist human inspection, requiring post-hoc tools like LIME or SHAP to approximate explanations. The choice depends on the application’s accountability requirements: high-stakes credit decisions subject to adverse action notice laws demand models near the interpretable end, while large-scale recommendation systems that face no per-decision regulatory scrutiny can tolerate opaque architectures. The spectrum does not imply “simple is always better,” because a highly interpretable model that makes wrong predictions serves no one. The engineering challenge is selecting the most interpretable model that meets accuracy requirements for the application.

15 LIME and SHAP: LIME (Ribeiro et al., 2016) fits a local interpretable model around each prediction—fast but potentially inconsistent across nearby inputs. SHAP (Lundberg and Lee, 2017) adapts Shapley values from game theory (Lloyd Shapley, 1953; Nobel Prize 2012) to compute mathematically consistent feature contributions, but with exponential worst-case complexity. The systems trade-off is stark: SHAP adds 10–100\(\times\) inference latency, making LIME the only viable option for real-time serving where explanation must arrive within the same latency budget as the prediction itself.

War Story 1.4: The Clever Hans Effect

The Failure: When tested on data from other hospitals, performance collapsed. Heatmap analysis revealed the model was not looking at the lungs. Instead, it had learned to detect a metal token that technicians at the training hospital placed on the patient’s shoulder.

The Consequence: The model was effectively a “metal token detector,” not a pneumonia detector. It had learned a spurious correlation that was 100 percent predictive in the training distribution but irrelevant to the medical pathology.

The Systems Lesson: Neural networks are lazy optimizers. They will exploit the easiest statistical signal to minimize loss, even if that signal is medically irrelevant. Interpretability tools (saliency maps) are not optional; they are quality assurance gates (Lapuschkin et al. 2019).

The explainability requirements outlined earlier carry the force of law, not merely of engineering best practice. In 2024 alone, the EU AI Act mandated explanation capabilities for high-risk systems, and US regulators proposed new adverse action notice requirements for algorithmic lending decisions. Regulations transform explainability from a design choice into a compliance requirement with concrete penalties for failure, making the technical mechanisms just described prerequisites for legal operation.

The regulatory landscape

In 2024, the EU AI Act imposed fines up to 35 million EUR or seven percent of global turnover for non-compliant high-risk AI systems, and the US Federal Trade Commission brought its first enforcement actions against algorithmic discrimination. Responsible engineering now operates within explicit regulatory frameworks that mandate specific technical requirements for transparency, oversight, and accountability. While regulations vary by jurisdiction, several convergent patterns have emerged that engineers must understand.

The EU AI Act

The EU AI Act establishes the most comprehensive framework to date, classifying AI systems by risk level and mandating requirements accordingly.16 High-risk systems17 (including those used in employment, credit, education, and critical infrastructure) must implement risk management systems, data governance practices, technical documentation, transparency measures, human oversight mechanisms, and accuracy/robustness/security requirements. The engineering implications are concrete: systems must be designed for auditability from inception, with documentation practices that demonstrate compliance.

16 EU AI Act (Regulation 2024/1689): The first comprehensive AI legal framework, defining four risk tiers with penalties reaching 35 million EUR or seven percent of global turnover. The Act has extraterritorial reach: US organizations must comply if outputs affect EU residents. Systems engineering implications are concrete: high-risk AI requires logging infrastructure for audit trails, human oversight mechanisms built into the architecture, and CE marking—all capabilities that must be designed in from inception, not retrofitted after deployment.

17 High-Risk AI (EU AI Act Annex III): Risk classification is not subjective—Annex III enumerates eight specific domains: biometric identification, critical infrastructure, education and vocational training, employment and worker management, essential services access (credit, insurance), law enforcement, migration and border control, and justice administration. A system falls under high-risk requirements based on deployment context, not model architecture: a logistic regression approving loans faces the same compliance burden as a transformer, because the Act regulates what decisions are made, not how they are computed.

GDPR’s article 22