Three Framework Problems

ML Frameworks

Purpose

Why does the framework—the layer between your math and your hardware—silently constrain every decision that follows?

Neural networks are defined by mathematics (matrix multiplications, gradient computations, activation functions), but mathematics does not execute itself. Between the equations and the silicon lies a translation layer that decides how operations are scheduled on hardware, how memory is allocated across the compute hierarchy, and how gradients flow backward through the computational graph. The framework is this translation layer, and the translation is not neutral. An eager-mode framework that prioritizes debugging flexibility sacrifices the graph-level optimizations that can halve inference latency. A framework lacking support for the target accelerator renders the hardware investment useless. A framework with a rich training API but no export path to edge devices means the model cannot reach the deployment target it was designed for. Architecture choices are at least visible: engineers debate model size, layer count, and attention mechanisms explicitly. Framework choices are more insidious because they operate below the level of daily attention, silently determining which optimizations are possible, which hardware is reachable, and which deployment paths exist. In the AI Triad (Introduction), the framework is the invisible mediator between Algorithm and Machine, and its design choices—baked into its compilation stack, memory management, and operator libraries—are difficult to reverse. Migrating between frameworks invalidates data pipelines, serving infrastructure, model checkpoints, and team expertise, typically requiring months of engineering effort for production systems. Framework selection is therefore an infrastructure commitment that determines what the system can do on the hardware it must run on.

- Explain how ML frameworks solve three core problems: execution (computational graphs), differentiation (automatic differentiation), and abstraction (hardware-optimized operations)

- Compare eager, static, and hybrid (JIT) execution strategies using the Compilation Continuum and Dispatch Overhead principles to determine when compilation benefits outweigh costs

- Describe the nn.Module abstraction pattern for hierarchical composition, automatic parameter discovery, and mode-dependent behavior

- Analyze how the memory wall drives framework optimization strategies including kernel fusion, mixed-precision training, and activation checkpointing

- Evaluate major framework architectures (TensorFlow, PyTorch, JAX) based on their execution models, differentiation approaches, and deployment trade-offs

- Evaluate framework selection trade-offs by matching model requirements, hardware constraints, and deployment targets across the cloud-to-edge spectrum

Two lines of code: model = transformer(...) followed by loss.backward(). Between them, invisible to the programmer, the framework orchestrates billions of floating-point operations across memory hierarchies, computes exact gradients through millions of parameters using automatic differentiation (the systematic application of the chain rule to compute derivatives), schedules thousands of GPU kernel launches, and manages gigabytes of intermediate state. The simplicity is an illusion. Those two lines trigger machinery as complex as a compiler, because that is exactly what a modern ML framework is.

The architectures defined in Network Architectures specify what computations neural networks perform, but knowing what to compute is entirely different from knowing how to compute it efficiently. A transformer’s attention mechanism (introduced in Network Architectures) requires coordinating computation across memory hierarchies and accelerator cores in patterns that naive implementations would execute 100\(\times\) slower than optimized ones. Implementing these operations from scratch for every model would make deep learning economically infeasible. ML frameworks exist to bridge this gap by translating high-level model definitions into hardware-specific execution plans that extract maximum performance from silicon.

A framework is to machine learning what a compiler is to traditional programming. A C compiler translates human-readable code into optimized machine instructions, managing register allocation, instruction scheduling, and memory layout. An ML framework translates high-level model definitions into hardware-specific execution plans, managing operator fusion, memory reuse, and device placement. This analogy is more than metaphor: modern frameworks literally include compilers, as we will see throughout this chapter.

Every ML framework, regardless of API or design philosophy, must solve three core problems. First, the execution problem: when and how should computation happen? Should operations execute immediately as written (eager execution1), or should the framework build a complete description first—a computational graph2 (a structured representation of operations and their dependencies)—and optimize before executing (graph execution)? This choice shapes debugging capability, optimization potential, and deployment flexibility. Second, the differentiation problem: how should the framework compute gradients automatically? As established in Neural Computation, training (the complex orchestration detailed in Model Training) requires derivatives of a loss function with respect to millions or billions of parameters, and manual differentiation is error-prone at this scale. Frameworks must implement automatic differentiation systems that compute exact gradients for arbitrary compositions of operations while managing the memory overhead of storing intermediate values. Third, the hardware abstraction problem: how should the framework target diverse hardware from a single interface? The same model definition should run on CPUs, GPUs, Tensor Processing Units (TPUs), and mobile devices, each with different memory constraints and optimal execution patterns.

1 Eager Execution: This mode executes each operation sequentially and immediately, which enables direct debugging with standard tools but sacrifices the global view needed for graph-level optimizations. Without seeing the full sequence of computations, the framework cannot fuse operations or pre-plan memory, forfeiting potential speedups of over 30 percent that compilers like torch.compile can provide.

2 Computational Graph: The “optimize before executing” distinction in the triggering sentence is the key design choice. By capturing the full program as a data structure (pioneered by Theano in 2010), the framework can fuse multiple operations into a single GPU kernel before any code runs, reducing overhead by over 10\(\times\). The engineering cost of this visibility is that the executed program differs from the source code, making debugging significantly harder, a trade-off every graph-based framework must justify against the performance gain.

These three problems are deeply interconnected. The execution model determines when differentiation occurs and what optimizations are possible. The abstraction layer must support both execution styles across all hardware targets. Solving any one problem in isolation leads to frameworks that excel in narrow contexts but fail in broader deployment. Because these problems are ultimately about translating mathematics into efficient hardware execution, a useful perspective is to view frameworks not as libraries but as compilers.

Systems Perspective 1.1: The ML Compiler

Your “Source Code” is the model architecture (the \(O\) term). The framework’s job is to take this high-level math and compile it into a series of hardware-specific kernel launches that:

- Minimize Data Movement (\(D_{\text{vol}}\)) through techniques like kernel fusion.

- Maximize Utilization (\(\eta\)) by matching operations to specialized hardware units like Tensor Cores.

- Minimize Overhead (\(L_{\text{lat}}\)) through efficient asynchronous dispatch and graph capture.

Choosing a framework means choosing the compiler that determines how efficiently a model uses hardware.

With these three problems in mind, we can now define what a machine learning framework fundamentally is.

Definition 1.1: Machine Learning Frameworks

Machine Learning Frameworks are software systems that translate high-level mathematical model definitions into hardware-optimized execution plans by managing the computational graph, automatic differentiation, kernel dispatch, and memory allocation across the hardware hierarchy.

- Significance (Quantitative): Frameworks directly determine the system efficiency (\(\eta\)) term in the iron law. XLA’s operator fusion, for example, eliminates intermediate memory writes between consecutive elementwise operations: fusing a matrix multiplication, bias add, and rectified linear unit (ReLU) into a single kernel reduces the total data movement (\(D_{\text{vol}}\)) by 2–3\(\times\) vs. three separate kernel launches, yielding observed end-to-end speedups of 1.5–2\(\times\) on transformer training without any model changes.

- Distinction (Durable): Unlike a numerical library such as NumPy, which executes each operation immediately (eager evaluation), an ML framework can defer execution to analyze the full computational graph and apply global optimizations: operator fusion, memory layout transformations, and parallel scheduling. These optimizations are impossible when operations are evaluated one at a time.

- Common Pitfall: A frequent misconception is that frameworks are interchangeable API wrappers. Framework choice determines which hardware optimizations are available: a PyTorch model using the default eager execution mode cannot benefit from graph-level fusion until explicitly compiled with torch.compile(), and the resulting throughput difference can exceed 2\(\times\) on the same hardware.

The compiler metaphor is not decorative. An ML framework translates logical intent into physical execution under the constraints of the iron law, deciding how to partition computation across memory hierarchies, when to trade numerical precision for throughput, and how to schedule operations so that the dominant term (data movement, computation, or overhead) is minimized. The framework is where the governing physics developed throughout this book becomes executable code.

The scale of this translation is not obvious from the API surface. A single call to loss.backward() triggers operation recording, memory allocation for gradients, reverse-order graph traversal, and hardware-optimized kernel dispatch—machinery that would require hundreds of lines of manual calculus for even a three-layer network. For a contemporary language model, the framework additionally orchestrates billions of floating-point operations across distributed hardware, coordinating memory hierarchies, communication protocols, and numerical precision. Building this from scratch would be economically prohibitive for most organizations, which is why the history of ML frameworks is a history of progressively automating these layers.

The three problems—execution, differentiation, and abstraction—did not emerge simultaneously. Each arose as a response to scaling limitations in the previous generation of tools. Tracing this evolution reveals why modern frameworks are designed as they are and why the particular trade-offs they embody were, in hindsight, inevitable.

The Ladder of Abstraction

In 1979, writing a matrix multiplication in Fortran that saturated the hardware required deep knowledge of cache lines, register scheduling, and vector units. By 2016, a single line of Python (torch.matmul(A, B)) achieved the same peak throughput without the programmer knowing anything about the silicon. That compression of effort did not happen in one step; it accumulated across four decades of abstraction, each layer solving a bottleneck that made the previous generation impractical for scaling. The result is a Ladder of Abstraction where each rung automates what the rung below exposed.

- Solving Performance (1979–1992): The Basic Linear Algebra Subprograms (BLAS)3 and LAPACK4 solved the problem of Hardware Primitives. They provided standardized, highly optimized implementations of matrix operations such as general matrix multiply (GEMM)5. This layer ensures that C = A @ B runs at near-peak silicon speed, regardless of the language calling it.

3 BLAS (Basic Linear Algebra Subprograms): The 1979 API specification that forms the bottom rung of the ladder described here. By decoupling C = A @ B from its hardware implementation, BLAS forced vendors to compete on optimized libraries (NVIDIA cuBLAS, Intel MKL) for a fixed set of primitives. Every framework above it on the ladder inherits this bargain: a single BLAS call from any language can approach an A100’s 312 TFLOPS peak for GEMM alone, without the framework knowing anything about the silicon.

4 LAPACK (Linear Algebra PACKage): Extends BLAS by providing a standard API for higher-level routines (SVD, eigendecomposition, least-squares) that vendors implement with chip-specific code layered on top of fast GEMM kernels. This layered design is the architectural pattern every ML framework inherits: high-level operations delegate downward to hand-tuned primitives, so a vendor-optimized LAPACK call can execute over 10\(\times\) faster than a naive implementation without the framework author writing a single line of hardware-specific code.

5 GEMM: The single operation that the “near-peak silicon speed” claim rests on. Hardware vendors hand-tune GEMM for their specific chips because every layer in a neural network reduces to matrix multiplication, making this one routine the performance floor for all frameworks above it on the ladder. The catch: GEMM achieves peak throughput only when matrix dimensions satisfy strict alignment constraints (multiples of eight for NVIDIA Tensor Cores), and violating these rules drops a framework from over 90 percent to roughly 30 percent of \(R_{\text{peak}}\).

- Solving Usability (2006): NumPy6 solved the problem of Developer Velocity. By wrapping low-level BLAS routines in high-level Python, it allowed scientists to write code in a friendly language while executing it in optimized C/Fortran. This “Vectorization” pattern, where the slow language handles logic and the fast language handles loops, became the standard contract for scientific computing.

6 NumPy (Numerical Python): In 2005, Travis Oliphant unified two competing Python array libraries (Numeric and Numarray) into a single package, giving the scientific computing community one BLAS-backed array standard at the moment it needed to scale. The “vectorization” contract this created (write logic in Python, execute loops in C/Fortran via BLAS) became the design template for every ML framework that followed: PyTorch tensors and TensorFlow arrays are direct descendants, extending the same n-dimensional array abstraction to GPUs. Python’s dominance in ML is a direct inheritance from this consolidation decision.

- Solving Differentiation (2015–present): Deep Learning Frameworks (Theano7, TensorFlow, PyTorch) solved the problem of Gradient Computation. While NumPy required manual derivation of backpropagation gradients (error-prone and slow), these frameworks introduced Automatic Differentiation via the computational graph (Rumelhart et al. 1986). This turned the chain rule into a software primitive, allowing researchers to define forward passes and get backward passes for free.

7 Theano: Developed at the Montreal Institute for Learning Algorithms (MILA) under Yoshua Bengio starting in 2007, Theano was the first framework to compile symbolic mathematical expressions into optimized CPU and GPU code via computational graphs (Bergstra et al. 2010). Its key insight – that a Python-defined computation graph could be compiled to CUDA without the researcher writing GPU code – became the architectural template for TensorFlow (2015) and influenced PyTorch’s autograd design. Theano was retired in 2017, but every modern framework inherits its core abstraction.

As Figure 1 illustrates, this progression reveals a critical insight: frameworks exist to bridge the gap between mathematical intent and silicon reality. As we move up the ladder, we gain productivity but lose transparency—a trade-off we explore in the Execution Problem (Section 1.2).

Each generation abstracted away details that consumed engineering effort in the previous one, yet each abstraction introduced new trade-offs. BLAS hid assembly-level optimization but fixed the interface. NumPy hid memory management but required manual differentiation. Modern frameworks hide gradient computation but introduce the execution model choice we examine next.

All modern frameworks converge on the same three core problems: how to execute computation, how to differentiate it, and how to abstract across hardware. We begin with the most visible of these: the execution problem, because its resolution determines what optimizations the other two problems can exploit.

Execution Problem

Consider two engineers writing the same neural network. The first debugs interactively, printing tensor shapes after each operation, inspecting intermediate values, and stepping through code with pdb. The second waits 30 seconds for compilation, then watches the model run 3\(\times\) faster with no ability to inspect any intermediate state. Both are correct; they have simply made different choices about the execution problem, the question of whether operations should execute immediately as written or be recorded for later execution. This choice creates a cascade of engineering trade-offs that shape every aspect of framework behavior, from debugging workflows to deployment options to peak hardware utilization.

Why execution strategy matters: The memory wall

To understand why execution strategy matters so much, consider the memory wall (first introduced in Neural Computation), the growing gap between processor computational speed and memory bandwidth. Modern GPUs can perform arithmetic far faster than they can fetch data. On an A100 GPU with 312 TFLOPS of compute and 2.0 TB/s of memory bandwidth, element-wise operations like ReLU achieve less than one percent of peak compute capacity, not because the hardware is slow, but because they spend nearly all their time waiting for data. The Roofline Model (The roofline model) formalizes this trade-off, showing exactly when operations are memory bound vs. compute bound.

The memory wall creates a critical classification: operations are either compute-bound (limited by arithmetic throughput, like large matrix multiplications) or memory-bound (limited by data movement, like activation functions and normalization). Most individual neural network operation types (activations, normalizations, element-wise operations) are memory bound, though the large matrix multiplications that dominate total compute time can be compute bound.
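The classification can be checked with a back-of-the-envelope roofline estimate. The sketch below is illustrative rather than a profiler: it assumes the A100 figures quoted above (312 TFLOPS, 2.0 TB/s), an FP32 ReLU that counts one FLOP per element with one read and one write, and an FP16 matrix multiply in which each matrix crosses memory exactly once. The function names are hypothetical.

```python
PEAK_FLOPS = 312e12      # A100 peak throughput quoted above (FLOP/s)
PEAK_BW = 2.0e12         # A100 memory bandwidth quoted above (bytes/s)


def attainable_flops(flops: float, bytes_moved: float) -> float:
    """Roofline bound: min(peak compute, arithmetic intensity * bandwidth)."""
    intensity = flops / bytes_moved          # FLOP per byte
    return min(PEAK_FLOPS, intensity * PEAK_BW)


def relu_cost(n: int, dtype_bytes: int = 4):
    # Assumption: 1 FLOP per element; one read and one write per element.
    return n, 2 * n * dtype_bytes


def matmul_cost(m: int, k: int, n: int, dtype_bytes: int = 2):
    # 2*m*k*n FLOPs; assumption: A, B, and C each cross memory exactly once.
    return 2 * m * k * n, (m * k + k * n + m * n) * dtype_bytes


relu_frac = attainable_flops(*relu_cost(1 << 20)) / PEAK_FLOPS
gemm_frac = attainable_flops(*matmul_cost(4096, 4096, 4096)) / PEAK_FLOPS
print(f"ReLU:   {relu_frac:.4%} of peak (memory bound)")
print(f"MatMul: {gemm_frac:.0%} of peak (compute bound)")
```

Under these assumptions the ReLU is capped far below one percent of peak by bandwidth alone, while the large matrix multiply has enough arithmetic per byte to reach the compute roof.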

The key optimization for memory-bound operations is kernel fusion, combining multiple operations into a single GPU function (called a kernel)8 to avoid intermediate memory traffic. Fusing a sequence of LayerNorm, Dropout, and ReLU into one kernel can yield 5\(\times\) speedup by eliminating intermediate writes between operations. FlashAttention9 fuses the entire attention computation, reducing HBM traffic by 10–20\(\times\) and achieving 2–4\(\times\) wall-clock speedup.

8 Kernel (GPU): In GPU programming, a kernel is the function dispatched to execute in parallel across thousands of threads. Each kernel launch incurs 5–20 \(\mu\)s of CPU-side overhead for parameter assembly and GPU signaling, which means that small, unfused operations spend more time on launch overhead (\(L_{\text{lat}}\)) than on useful arithmetic. Reducing kernel count through fusion is therefore a direct attack on the overhead term of the iron law.

9 FlashAttention: Kernel fusion taken to its logical extreme, fusing the core attention computation (the \(QK^\top\) score matrix, softmax, and the weighted sum over \(V\)) into a single kernel that tiles data to fit in SRAM (introduced in Network Architectures). By reducing HBM traffic 10–20\(\times\), FlashAttention transforms a memory-bound operation into a compute-bound one, demonstrating that framework-level fusion can shift an operation’s position on the Roofline Model from bandwidth-limited to throughput-limited.
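To see where the savings from fusion come from, it helps to count bytes. The following is a simplified traffic model, not a measurement: it assumes a chain of purely elementwise operations, that every unfused kernel reads its full input and writes its full output to device memory, and that a fused kernel keeps intermediates in registers. The function name and the tensor size are hypothetical.

```python
def elementwise_traffic_bytes(num_ops: int, num_elements: int,
                              dtype_bytes: int = 4,
                              fused: bool = False) -> int:
    """Bytes moved to and from device memory for a chain of elementwise ops.

    Assumption: each unfused kernel reads its input and writes its output
    in full; a fused kernel touches memory once on the way in and once on
    the way out.
    """
    kernels = 1 if fused else num_ops
    return kernels * 2 * num_elements * dtype_bytes


n = 64 * 1024 * 1024                       # hypothetical activation size
unfused = elementwise_traffic_bytes(3, n)
fused = elementwise_traffic_bytes(3, n, fused=True)
print(f"unfused: {unfused / 1e9:.2f} GB, fused: {fused / 1e9:.2f} GB, "
      f"reduction: {unfused / fused:.0f}x")
```

This model captures only memory traffic. It ignores per-kernel launch overhead and the reductions inside operations such as LayerNorm, so measured speedups can differ from the traffic ratio it predicts.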

A framework can only fuse operations it can see together. If operations execute immediately one at a time (eager execution), the framework cannot fuse them. If operations are recorded first into a graph (deferred execution), the framework can analyze and optimize the entire computation. This is why execution strategy matters so much: it determines what optimizations are even possible.

The computational graph

Kernel fusion is the key optimization for memory-bound operations, but fusion requires seeing multiple operations together. How do frameworks represent computation in a way that makes this visibility possible? The answer is the computational graph, a directed acyclic graph (DAG) where nodes represent operations and edges represent data dependencies. This graph is the framework’s internal model of the computation.

To ground this abstraction, examine Figure 2: computing \(z = x \times y\) maps onto two input nodes (\(x\) and \(y\)), one operation node (multiplication), and one output node (\(z\)). The execution problem asks: when is this graph constructed, and when is it executed?

Real machine learning models require much more complex graph structures. Figure 3 extends this representation to show a neural network computation graph alongside the system components that reason about it. In the left panel, notice how data flows through six operation nodes in a directed acyclic graph—each node’s output becomes the next node’s input. The right panel reveals what the framework gains by having this graph: it can query the structure to plan memory allocation for each tensor’s lifetime, and it can assign operations to devices based on data dependencies rather than execution order. The critical insight is that the graph exists independently of execution, enabling the framework to optimize before any arithmetic occurs.
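A minimal sketch of such a graph, written in plain Python, shows how the structure can be built and queried before any arithmetic runs. It is a toy under stated assumptions: the `Node` class and the `topological_order` and `run` helpers are hypothetical names for illustration and do not correspond to any framework's internal API.

```python
import operator
from typing import Callable, Dict, List, Optional, Tuple


class Node:
    """One vertex of the graph: an input (op is None) or an operation."""

    def __init__(self, name: str, op: Optional[Callable] = None,
                 inputs: Tuple["Node", ...] = ()):
        self.name, self.op, self.inputs = name, op, inputs


def topological_order(output: Node) -> List[Node]:
    """Depth-first post-order: every node appears after its inputs."""
    order: List[Node] = []
    seen = set()

    def visit(node: Node) -> None:
        if id(node) in seen:
            return
        seen.add(id(node))
        for parent in node.inputs:
            visit(parent)
        order.append(node)

    visit(output)
    return order


def run(output: Node, feed: Dict[str, float]) -> float:
    """Execute the graph only now, after it has been fully described."""
    values: Dict[str, float] = {}
    for node in topological_order(output):
        if node.op is None:
            values[node.name] = feed[node.name]
        else:
            args = (values[p.name] for p in node.inputs)
            values[node.name] = node.op(*args)
    return values[output.name]


# Construction: two input nodes and one multiplication node; no arithmetic.
x, y = Node("x"), Node("y")
z = Node("z", operator.mul, (x, y))

# The structure can be queried before any computation happens.
plan = [n.name for n in topological_order(z)]
result = run(z, {"x": 3.0, "y": 4.0})
print(plan, result)
```

Because `plan` is available before `run` is called, a real framework can use the same information to schedule operations, plan tensor lifetimes, and assign devices ahead of execution.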

This graph representation is more than a visualization; it is the data structure that enables both efficient execution and automatic differentiation. The answer to when this graph is constructed creates a design choice with cascading implications:

- For debugging: Can you print intermediate values? Step through code with a debugger?

- For optimization: Can the framework see multiple operations at once to fuse them?

- For deployment: Can the model run without a Python interpreter?

- For flexibility: Can control flow depend on computed tensor values?

No single execution model optimizes all these dimensions. Frameworks must choose their position in this trade-off space, and practitioners must understand these trade-offs to select appropriate tools and write efficient code. The following sections examine how different execution strategies navigate these constraints.

Three execution strategies

The computational graph representation enables global optimization, but it raises a critical design question: when should the framework build this graph? Consider a simple operation like y = x * 2. Two distinct approaches exist:

- Immediate execution: Perform the multiplication right now, storing the result in y. Natural and debuggable, but the framework sees only one operation at a time.

- Deferred execution: Record the intention to multiply, building a graph of operations. Execute later when explicitly requested. Less intuitive, but the framework sees the complete computation, enabling optimization.

Neither approach dominates; each embodies different trade-offs between flexibility and optimization potential. Modern frameworks have explored three primary execution strategies: eager execution with dynamic graphs, static computation graphs, and hybrid approaches that combine just-in-time (JIT) compilation with eager development. We examine each through its systems implications.

Eager execution with dynamic graphs

Example 1.1: Eager vs. Graph Execution Code Comparison

PyTorch (Eager Execution):

import torch

x = torch.tensor([1.0, 2.0])

y = x * 2

print(f"Intermediate value: {y}") # Works immediately

z = y.sum()

TensorFlow 1.x (Static Graph):

import tensorflow as tf

x = tf.placeholder(tf.float32)

y = x * 2

# print(y) -> Prints Tensor("mul:0"...), not value!

z = tf.reduce_sum(y)

with tf.Session() as sess:

    result = sess.run(z, feed_dict={x: [1.0, 2.0]})

Eager execution runs operations immediately as encountered, building the computation graph dynamically during execution. When a programmer writes y = x * 2, the multiplication happens instantly and the result is available for immediate use.

This provides the flexibility of normal programming: developers can print intermediate values, use conditionals based on computed results, and debug with standard tools. The framework records operations as they happen, constructing a dynamic graph that reflects the actual execution path taken.

For gradient computation, the framework records a history of operations in what is called an autograd tape10, a transient data structure built during execution. Each tensor operation creates a node that records: the operation performed, references to input tensors, and how to compute gradients. These nodes form a directed acyclic graph (DAG) of operations built during forward pass execution, not before.

10 Autograd Tape: A transient data structure built during forward execution, where each node records the operation type, input tensor references, saved intermediate values, and the backward function for chain rule application. The tape’s memory cost scales linearly with network depth and is destroyed after the backward pass. For deep models, this transient graph can consume more memory than the model weights themselves, which is why activation checkpointing (trading recomputation for memory) becomes necessary for training models that would otherwise exhaust accelerator memory.

Consider this example using PyTorch, which implements eager execution as its default mode. Listing 1 shows how operations are recorded as they execute.

import torch

x = torch.tensor([1.0], requires_grad=True)

y = x * 2 # Executes immediately; records MulBackward node

z = y + 1 # Executes immediately; records AddBackward node

# The autograd tape exists NOW, built during execution

After these two operations, the framework has constructed an autograd tape with two nodes: one for the multiplication and one for the addition. The tape records that z depends on y, and y depends on x.

Calling z.backward() traverses this tape in reverse topological order, applying the chain rule at each node:

- Compute \(\frac{\partial z}{\partial z} = 1\) (seed gradient)

- Call AddBackward0.backward() \(\rightarrow \frac{\partial z}{\partial y} = 1\)

- Call MulBackward0.backward() \(\rightarrow \frac{\partial z}{\partial x} = 2\)

- Accumulate gradient in x.grad

After backward() completes, the autograd tape is destroyed to free memory. The next forward pass builds a completely new tape. This design bounds memory use: the tape and its saved intermediates occupy memory only from the forward pass until the backward pass completes, rather than persisting across iterations.
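The record-then-replay mechanism can be sketched in a few lines of plain Python. This is a toy scalar tape under stated assumptions, not PyTorch's implementation: the `Var` class is hypothetical, it supports only multiplication and addition by Python constants, and it releases its recorded edges after the backward pass to mimic the tape being destroyed.

```python
class Var:
    """Scalar value that records how it was produced (a tape node)."""

    def __init__(self, value, parents=()):
        self.value = value
        self.grad = 0.0
        # Each entry is (parent Var, local derivative d(self)/d(parent)).
        self.parents = parents

    def __mul__(self, c):
        return Var(self.value * c, ((self, c),))

    def __add__(self, c):
        return Var(self.value + c, ((self, 1.0),))

    def backward(self):
        # Post-order walk so that, once reversed, each node is processed
        # before the nodes it depends on.
        order, seen = [], set()

        def visit(v):
            if id(v) not in seen:
                seen.add(id(v))
                for parent, _ in v.parents:
                    visit(parent)
                order.append(v)

        visit(self)
        self.grad = 1.0                         # seed: dz/dz = 1
        for v in reversed(order):
            for parent, local in v.parents:
                parent.grad += v.grad * local   # chain rule, accumulated
            v.parents = ()                      # release the tape after use


x = Var(1.0)
y = x * 2      # executes immediately and records a multiply node
z = y + 1      # executes immediately and records an add node
z.backward()
print(z.value, x.grad)
```

The reverse loop mirrors the steps listed above: seed the output gradient, apply each node's local derivative, and accumulate the result on the leaf.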

War Story 1.1: The Silent Gradient Killer

x += 1 instead of x = x + 1.

The Failure: In-place operations modify the data directly in memory. However, the autograd tape (the computational graph) often needs the original value of x to compute gradients for previous layers. By overwriting x, the engineer destroyed the history needed for the chain rule.

The Consequence: The framework did not crash. Instead, it computed gradients using the modified value of x, resulting in mathematically incorrect updates. The model trained, but its loss plateaued at a high value. The team spent weeks debugging hyperparameters, never suspecting that a “memory optimization” had silently corrupted the calculus.

The Systems Lesson: Frameworks are graph construction engines, and in-place operations violate the immutability required for automatic differentiation. Writing x += 1 does not merely add a number: it sabotages the graph’s history (Paszke et al. 2019).

Follow this “define-by-run” execution model step by step in Figure 4. Notice the alternating pattern: define, execute, define, execute. Each operation completes entirely before the next begins, which is why standard Python debuggers work—a developer can set a breakpoint between any two operations and inspect the actual tensor values. This contrasts sharply with static graphs, where all operations must be defined before any execution occurs.

Systems implications: Flexibility

The dynamic autograd tape enables capabilities impossible with static graphs. Conditionals and loops can depend on tensor values computed during execution, enabling algorithms like beam search, dynamic recurrent neural network (RNN) lengths, or adaptive computation that adjust their behavior based on intermediate results. Different iterations can process tensors of different sizes without redefining the computation—essential for natural language processing where sentence lengths vary. Because operations execute immediately in standard Python, developers can print tensors, inspect values, and use standard debuggers (pdb, breakpoints) to diagnose errors in the same way they would debug any Python program.
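A short PyTorch sketch illustrates data-dependent control flow. The function `adaptive_steps` and its threshold are hypothetical, chosen only so that the loop count depends on a tensor value computed at run time; the example assumes a standard PyTorch installation.

```python
import torch


def adaptive_steps(x: torch.Tensor, threshold: float = 10.0,
                   max_steps: int = 20):
    """Doubles x until its norm reaches a threshold (illustrative only).

    The number of loop iterations depends on values computed at run time,
    so the recorded graph differs from input to input.
    """
    steps = 0
    while x.norm() < threshold and steps < max_steps:
        x = x * 2
        steps += 1
    return x, steps


a = torch.tensor([1.0, 1.0], requires_grad=True)
out, steps_a = adaptive_steps(a)
out.sum().backward()          # gradients follow the path actually taken

_, steps_b = adaptive_steps(torch.tensor([3.0, 0.0]))
print(steps_a, steps_b, a.grad)
```

The two inputs take different numbers of iterations, and the gradient for `a` reflects exactly the multiplications that ran for that input, with no graph redefinition in between.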

Systems implications: Overhead

This flexibility comes with performance costs that map directly to the iron law (Iron Law of ML Systems). Each forward pass rebuilds the autograd tape from scratch, adding Python object creation, reference counting, and node linking overhead to \(L_{\text{lat}}\) on every iteration. Every operation goes through Python dispatch (function lookup, argument parsing, type checking), costing approximately 10 \(\mu\)s per operation, which becomes significant for models with thousands of operations. Because the graph is built during execution, the framework cannot see across operations to fuse kernels, so each operation launches its own GPU kernel, inflating both \(O\) and \(D_{\text{vol}}\). The autograd tape itself stores references to all intermediate tensors and Function nodes, increasing memory consumption by 2–3\(\times\) compared to forward-only execution and adding pressure to \(D_{\text{vol}}\). Together, these costs create a performance ceiling that becomes visible as individual operations grow smaller and dispatch overhead dominates computation.

For a typical ResNet-50 forward pass, eager execution overhead adds approximately 5–10 ms compared to an optimized compiled version, with the majority spent in Python dispatch and tape construction rather than actual computation.

The dispatch tax: Python overhead vs. GPU reality

Eager execution’s performance ceiling is driven by a fundamental systems mismatch: the speed of the host-side interpreter vs. the speed of the device-side silicon. We quantify this using the dispatch tax, defined as the fraction of an operation’s wall-clock time spent in host-side orchestration (Python) rather than in device execution (GPU): \(t_{\text{dispatch}} / (t_{\text{dispatch}} + t_{\text{kernel}})\).

Every operation in an eager framework (like standard PyTorch) must pay a fixed tax of approximately 10 \(\mu\)s for Python to look up the function, check tensor types, and launch the kernel.

- For small operations (for example, a ReLU on a small vector), the kernel might execute in only 1 \(\mu\)s. The dispatch tax is 90 percent, meaning the GPU spends the vast majority of its time waiting for the next command.

- For large operations (for example, a massive \(4096\times4096\) matrix multiply), the kernel executes for 100 \(\mu\)s. The dispatch tax drops to 9 percent, and the system becomes compute bound.

The dispatch tax explains why models with many small layers run significantly slower than their raw FLOP count predicts. To reach the “Titan” standard of efficiency, frameworks must move from Kernel-by-Kernel Dispatch to Graph-Level Execution, where the dispatch tax is paid once for the entire graph rather than per operation. The hybrid JIT and compilation strategies in Section 1.2.4.2 exist precisely to address this overhead.
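The arithmetic behind these figures can be reproduced with a few lines of Python. The sketch assumes an idealized model in which dispatch and kernel execution do not overlap and the 10 \(\mu\)s dispatch cost is fixed; real eager frameworks launch kernels asynchronously, so part of the host cost can be hidden. The helper names are hypothetical.

```python
def dispatch_tax(kernel_us: float, dispatch_us: float = 10.0) -> float:
    """Fraction of per-operation wall time spent on host-side dispatch.

    Assumption: dispatch and kernel execution do not overlap.
    """
    return dispatch_us / (dispatch_us + kernel_us)


def graph_dispatch_tax(kernel_times_us, dispatch_us: float = 10.0) -> float:
    """Same ratio when one dispatch is paid for a whole captured graph."""
    return dispatch_us / (dispatch_us + sum(kernel_times_us))


small = dispatch_tax(kernel_us=1.0)            # small ReLU
large = dispatch_tax(kernel_us=100.0)          # large matrix multiply
captured = graph_dispatch_tax([1.0] * 1000)    # 1,000 small kernels, one dispatch
print(f"small op: {small:.0%}, large op: {large:.0%}, graph: {captured:.1%}")
```

The last line shows why graph-level execution matters most for models built from many small operations: paying the tax once across a thousand 1 \(\mu\)s kernels reduces it from the dominant cost to roughly one percent in this model.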

The overhead costs of eager execution raise a natural question: what if we could see the entire computation before executing any of it? This is precisely what static computation graphs provide.

Static computation graphs

Static graph execution defines the complete computational graph as a symbolic representation first, then executes it separately. This “define-then-run” execution model means the graph exists before any computation occurs, enabling aggressive ahead-of-time optimization. The key insight is that if the framework sees the entire computation before running it, the framework can analyze, transform, and optimize the graph globally—a visibility impossible when operations execute immediately one at a time.

Two-phase execution

Static graphs implement a clear separation between graph construction and execution. Listing 2 illustrates the two phases using TensorFlow 1.x, which pioneered this approach: symbolic definition creates placeholders and operations without computation, while explicit execution triggers actual arithmetic:

# Phase 1: Graph Construction (symbolic, no computation)

import tensorflow.compat.v1 as tf

tf.disable_v2_behavior()

# Define graph symbolically

x = tf.placeholder(tf.float32, shape=[1]) # Just a placeholder

y = x * 2 # Not executed, just recorded

z = y + 1 # Still no execution

# At this point, nothing has been computed

# Phase 2: Graph Execution (actual computation)

with tf.Session() as sess:

    result = sess.run(z, feed_dict={x: [1.0]})

    # Now computation happens: result = [3.0]

Compare this with the dynamic model by examining Figure 5. Notice the clear boundary between phases: in the definition phase (left), the framework builds a complete blueprint without touching any data; in the execution phase (right), data flows through an already-optimized graph. This separation enables the framework to answer questions during the definition phase that are impossible to answer operation-by-operation: “Which intermediate tensors can share memory?” “Which operations can fuse into a single kernel?” “What is the total memory footprint?” By the time execution begins, these optimizations are already baked in.

The key difference from eager execution is that during construction, x, y, and z are not tensors containing values but rather symbolic nodes in a graph. Operations like * and + add nodes to the graph definition without performing any arithmetic. Adding a print(y) call after the definition would reveal this distinction: it would print tensor metadata, not a computed value. Execution is triggered explicitly through sess.run(), at which point the framework analyzes the complete graph, optimizes it, and executes the optimized version with the provided input data.

Ahead-of-time optimization

Because the framework has the complete graph before execution, it can perform optimizations impossible in eager mode. The kernel fusion opportunity introduced in Section 1.2.1 becomes actionable here: because the framework sees y = x * 2 and z = y + 1 together in the graph, it can fuse them into z = x * 2 + 1, eliminating the intermediate y and halving memory traffic. With the full graph visible, the compiler can also calculate exact memory requirements for all tensors before execution, pre-allocating memory in a single pass and reusing buffers where lifetimes do not overlap. Tensor layouts can be transformed globally (for example, NCHW to NHWC) to match hardware preferences without runtime copying. Dead-code elimination (DCE)11 removes operations whose results are never consumed, and constant folding pre-computes operations on constant values at graph construction time, so the cost is paid once rather than on every forward pass.

11 Dead Code Elimination (DCE): Removes graph nodes whose results are never consumed by any downstream operation. In ML graphs, dead code arises from debugging operations left in production (print nodes, assertions), unused conditional branches, and gradient computations for frozen layers. For large transformer models, DCE eliminates 5–15 percent of graph nodes, reducing both \(O\) (fewer operations) and \(L_{\text{lat}}\) (fewer kernel launches). The DAG structure makes this safe: the framework verifies no downstream node depends on a candidate before removing it.

These optimizations map directly to iron law terms: kernel fusion reduces \(D_{\text{vol}}\) by eliminating intermediate memory writes, constant folding reduces \(O\) by computing values once, memory pre-allocation reduces \(L_{\text{lat}}\) by avoiding runtime allocation overhead, and dead code elimination reduces both \(O\) and \(D_{\text{vol}}\). Concretely, in large transformer models, constant folding and dead code elimination can reduce total FLOPs by 5–10 percent before the first batch even arrives.
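To make constant folding and dead code elimination concrete, here is a minimal sketch on a toy graph stored as a Python dictionary. The graph encoding, the node names, and the helper functions are all assumptions made for illustration; real frameworks use far richer representations and many more passes.

```python
# Toy graph: node name -> (op, payload). "const" nodes hold a literal value,
# "input" nodes are fed at run time, other nodes list their input names.
graph = {
    "x":     ("input", []),
    "two":   ("const", 2.0),
    "three": ("const", 3.0),
    "scale": ("mul", ["two", "three"]),  # constant subexpression: 2 * 3
    "y":     ("mul", ["x", "scale"]),
    "debug": ("add", ["x", "two"]),      # result is never consumed
    "z":     ("add", ["y", "two"]),
}
OPS = {"mul": lambda a, b: a * b, "add": lambda a, b: a + b}


def fold_constants(g):
    """Replace any op whose inputs are all constants with a constant node."""
    out = dict(g)
    changed = True
    while changed:
        changed = False
        for name, (op, args) in list(out.items()):
            if op in OPS and all(out[a][0] == "const" for a in args):
                out[name] = ("const", OPS[op](*(out[a][1] for a in args)))
                changed = True
    return out


def eliminate_dead_code(g, output):
    """Keep only nodes reachable by walking backward from the output."""
    live, stack = set(), [output]
    while stack:
        node = stack.pop()
        if node in live:
            continue
        live.add(node)
        op, args = g[node]
        if op in OPS:
            stack.extend(args)
    return {n: v for n, v in g.items() if n in live}


def count_ops(g):
    return sum(1 for op, _ in g.values() if op in OPS)


optimized = eliminate_dead_code(fold_constants(graph), "z")
print(count_ops(graph), "->", count_ops(optimized))  # prints: 4 -> 2
```

Folding turns `scale` into the constant 6.0 once, at graph construction time, and the backward walk from `z` never reaches `debug`, so it is dropped. Both passes rely on seeing the whole graph before execution, which is exactly what eager mode cannot offer.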

Compilation frameworks like XLA (Accelerated Linear Algebra)12 (Google 2025) take this further, compiling the TensorFlow graph to optimized machine code for specific hardware. For a transformer encoder block, XLA can achieve 1.5–2\(\times\) speedup over unoptimized execution through aggressive fusion and hardware-specific code generation.

12 XLA (Accelerated Linear Algebra): “Optimized machine code” here means that XLA fuses an entire subgraph into one kernel, eliminating both launch overhead (\(L_{\text{lat}}\)) and intermediate memory writes (\(D_{\text{vol}}\)). The 1.5–2\(\times\) speedup for transformer blocks is modest because their large GEMM operations are already compute bound, leaving little overhead for fusion to remove. Memory-bound models see 3–10\(\times\) gains, because fusion removes the launch and memory-traffic cost of many small, sequential operations.

Systems implications

Static graphs achieve high performance through ahead-of-time optimization. Kernel fusion reduces memory bandwidth requirements (often the bottleneck for ML workloads), and hardware-specific compilation enables near-peak utilization.

The cost of this performance is reduced flexibility. Standard Python control flow (if, for) cannot depend on computed tensor values in static graphs. TensorFlow provides graph-level control flow primitives (tf.cond and tf.while_loop) that support data-dependent conditions, but these require special syntax that diverges from standard Python, making code harder to write and reason about. Debugging is difficult because stack traces point to graph construction code, not execution code. Error messages often reference symbolic node names rather than the actual operations that failed.

Hybrid approaches: JIT compilation

Can we have both eager debugging and graph optimization? JIT compilation attempts this by capturing computation at runtime. The core trade-off is fidelity vs. generality. Tracing captures the exact execution path taken during a sample run, producing high fidelity to that specific input but missing branches not taken. Source-level compilation (scripting) analyzes the full program structure, preserving all control flow branches but requiring a restricted language subset. Both approaches produce an intermediate representation (IR)13 that enables the same ahead-of-time optimizations available to static graphs: operator fusion, constant folding, dead code elimination, and buffer reuse.

13 Intermediate Representation (IR): The “intermediate” captures this format’s architectural role: a language-independent layer that decouples the frontend (Python capture) from the backend (hardware code generation), exactly as LLVM IR decouples C/Rust/Swift frontends from x86/ARM backends. ML frameworks adopted this compiler pattern because it reduces the \(O(M \times N)\) cost of supporting \(M\) frontends and \(N\) backends to \(O(M + N)\): a single graph capture mechanism (TorchDynamo, tf2xla) can target multiple hardware backends without rewriting the capture logic.

The eager-vs.-compiled trade-off has a direct iron law consequence. JIT compilation amortizes the \(L_{\text{lat}}\) (dispatch overhead) across the compiled region. Longer compiled regions mean more overhead amortized per operation, which explains why graph breaks are performance-critical: each break forces a return to eager dispatch, resetting the amortization.

PyTorch’s TorchScript exemplifies both strategies. Tracing executes a function once with example inputs and records every tensor operation into a static computation graph. Listing 3 demonstrates the approach: the traced module becomes a compiled artifact that can be serialized, optimized, and executed independently of the Python interpreter:

import torch

def forward(x):

    y = x * 2

    z = y + 1

    return z

# Trace the function by running it once

x_example = torch.tensor([1.0])

traced = torch.jit.trace(forward, x_example)

# traced is now a compiled TorchScript module

# Can serialize: torch.jit.save(traced, "model.pt")

# Can optimize: fusion, constant folding

# Can run without Python interpreter

The critical limitation of tracing reveals the fidelity-generality trade-off concretely. Because tracing records a single execution path, it cannot handle data-dependent control flow. Listing 4 illustrates a silent correctness failure.

def conditional_forward(x):

    if x.sum() > 0: # Data-dependent condition

        return x * 2

    else:

        return x * 3

traced = torch.jit.trace(conditional_forward, torch.tensor([1.0]))

# Tracing captures ONLY the x.sum() > 0 branch

# If input later has sum <= 0, traced version

# still executes x * 2 branch

Tracing records whichever branch executed during the example input. Subsequent executions always follow the traced path regardless of input values, silently producing incorrect results for inputs that would have taken the other branch. This failure mode is particularly dangerous because it produces no error, only wrong outputs. In production, such bugs can persist for months before anyone notices that a small fraction of inputs are being misclassified, and by then, debugging is a forensic exercise.
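The mechanism behind this silent failure can be reproduced without any framework. The sketch below is a toy tracer written for illustration only (the `Traced` proxy and `trace` helper are hypothetical and are not how TorchScript is implemented): operations on a proxy value are recorded on a tape, but the comparison returns an ordinary Python bool, so the branch decision never reaches the tape.

```python
class Traced:
    """Proxy that carries a concrete value and records multiplications."""

    def __init__(self, value, tape):
        self.value = value
        self.tape = tape

    def __mul__(self, k):
        self.tape.append(("mul", k))  # the operation is recorded
        return Traced(self.value * k, self.tape)

    def __gt__(self, k):
        # Returns a plain bool: the `if` that consumes it is invisible to the tape.
        return self.value > k


def trace(fn, example):
    """Run fn once on an example input and return a replay of the recorded ops."""
    tape = []
    fn(Traced(example, tape))

    def replay(x):
        for op, k in tape:
            if op == "mul":
                x = x * k
        return x

    return replay


def conditional_forward(x):
    if x > 0:        # data-dependent condition
        return x * 2
    return x * 3


traced = trace(conditional_forward, 1.0)  # example input takes the x * 2 branch

print(conditional_forward(-1.0))  # eager result: -3.0
print(traced(-1.0))               # traced result: -2.0, wrong and silent
```

The traced replay contains a single `mul` by 2 and nothing else, so every later input is doubled regardless of sign. No exception is raised at any point, which is what makes this class of bug hard to notice.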

The alternative, scripting, achieves generality by analyzing Python source code directly and compiling it to TorchScript IR without executing. The scripting compiler parses the abstract syntax tree (AST), converts supported operations to IR operations, and preserves the branching structure so that both branches of a conditional exist in the compiled representation. The cost of this generality is a restricted Python subset: type annotations are required where inference fails, arbitrary Python objects and standard library modules are excluded, and dynamic metaprogramming is forbidden.
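Why source-level analysis preserves both branches can be seen with Python's standard `ast` module. This is only a sketch of the first step a scripting compiler takes (parsing source into a syntax tree); it does not reproduce TorchScript's actual compiler, and the `SOURCE` string is a stand-in for a real model function.

```python
import ast

SOURCE = '''
def conditional_forward(x):
    if x.sum() > 0:
        return x * 2
    else:
        return x * 3
'''

tree = ast.parse(SOURCE)  # parse only: the function is never executed
if_node = next(n for n in ast.walk(tree) if isinstance(n, ast.If))

then_branch = ast.unparse(if_node.body[0])    # requires Python 3.9+
else_branch = ast.unparse(if_node.orelse[0])

print(then_branch)  # return x * 2
print(else_branch)  # return x * 3
```

Because the tree is built from the source text rather than from a sample run, both arms of the conditional are present without any example input. The price is that the compiler must understand every construct it meets in the tree, which is where the restricted language subset comes from.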

Tracing suits feed-forward models without conditionals (ResNet, VGG, Vision Transformer) and models where control flow depends only on hyperparameters fixed at trace time. Scripting suits models with data-dependent control flow (RNN variants, recursive networks, adaptive computation) and deployment to environments without a Python interpreter. The following examples demonstrate scripting syntax (Listing 5), control flow preservation (Listing 6), IR inspection (Listing 7), and language restrictions (Listing 8).

@torch.jit.script

def forward(x):

    y = x * 2

    z = y + 1

    return z

# Compiles Python source code to TorchScript IR

# No example inputs needed

# Preserves control flow structure

The key advantage of scripting appears when handling conditionals. Unlike tracing, which captures only one branch, scripting preserves both paths in the IR.

@torch.jit.script

def conditional_forward(x: torch.Tensor) -> torch.Tensor:

    if x.sum() > 0:

        return x * 2

    else:

        return x * 3

# Both branches preserved in IR

# Correct branch executes based on runtime input values

To understand what the compiler produces, we can inspect the generated intermediate representation directly.

@torch.jit.script

def example(x: torch.Tensor) -> torch.Tensor:

    return x * 2 + 1

# Inspect generated IR:

print(example.graph)

# graph(%x : Tensor):

# %1 : int = prim::Constant[value=2]()

# %2 : Tensor = aten::mul(%x, %1)

# %3 : int = prim::Constant[value=1]()

# %4 : Tensor = aten::add(%2, %3, %3)

# return (%4)

However, scripting imposes constraints on what Python constructs are supported.

@torch.jit.script

def invalid_script(x):

    import numpy as np # ERROR: Cannot import arbitrary modules

    result = np.array([1, 2, 3]) # ERROR: NumPy not supported

    print(f"Debug: {x}") # ERROR: f-strings not supported

    return result

# Valid alternative:

@torch.jit.script

def valid_script(x: torch.Tensor) -> torch.Tensor:

    # Use TorchScript-compatible operations

    result = torch.tensor([1, 2, 3], dtype=x.dtype, device=x.device)

    return result

Scripting requires a restricted Python subset because TorchScript must statically analyze code that Python normally interprets dynamically. Function signatures and variables need explicit type annotations when type inference fails, and only tensor operations, numeric types, and standard containers (lists, dicts, tuples) are permitted: no arbitrary Python objects, no standard library modules like os or sys, and no dynamic class modification or metaprogramming. These constraints are the price of compilation: every feature that makes Python flexible also makes it unpredictable for a compiler.

The TorchScript IR represents operations using the aten namespace for core tensor operations, the prim namespace for primitives and control flow, static types for every value, and static single-assignment (SSA) form, where each variable is assigned exactly once to simplify compiler analysis. This IR enables optimizations independent of Python: operator fusion combines adjacent operations into single kernels, constant folding evaluates constant expressions at compile time, dead code elimination removes unused operations, and memory optimization reuses buffers when possible. Table 1 summarizes the key trade-offs between these two approaches.

| Aspect | Tracing | Scripting |
|---|---|---|
| Input requirement | Example inputs needed | No inputs needed |
| Control flow | Cannot handle data-dependent | Supports data-dependent |
| Conversion ease | Simpler (just run function) | Harder (restricted Python) |
| Type annotations | Not required | Required when inference fails |
| Error detection | Runtime (wrong results) | Compile time (syntax errors) |
| Best for | Feed-forward models | Models with conditionals |

Modern compilation: torch.compile

The previous approaches force a choice: write flexible code (eager execution) or fast code (static graphs). Modern JIT compilation attempts to eliminate this trade-off by automatically compiling eager code into optimized graphs with minimal developer intervention.

PyTorch 2.0’s torch.compile (Ansel et al. 2024) represents this approach: developers write natural Python code that executes eagerly during development, but the framework automatically captures and compiles hot paths into optimized kernels for production. Listing 9 shows the basic usage pattern:

@torch.compile

def forward(x):

    return x * 2 + 1

# First call: captures execution, compiles optimized kernel (~100ms)

result1 = forward(torch.tensor([1.0]))

# Same input shape: reuses the compiled code

result2 = forward(torch.tensor([2.0]))

The cost profile in this example (approximately 100 ms to compile on the first call, microseconds to reuse afterward) shows the bargain torch.compile strikes: a one-time cost in exchange for cheaper calls thereafter. The deeper question is why compilation helps so much. The answer lies in understanding the physics of software overhead. Dispatch costs that seem negligible for a single operation (a few microseconds here and there) compound dramatically across the thousands of operations in a forward pass. The following analysis quantifies this phenomenon.

Napkin Math 1.1: The Physics of Software Overhead

The Constants of Latency:

- Python Dispatch: ~10 μs per operation.

- Kernel Launch: ~5 μs per operation.

- Memory Access (VRAM): ~1 μs.

Scenario one: Eager Mode (The “Tiny Op” Trap). Consider a simple activation block: y = relu(x + bias).

Operations: two (Add, ReLU).

Execution:

- Launch AddKernel: 15 µs overhead.

- Read/Write Memory: \(2N\) bytes.

- Launch ReLUKernel: 15 µs overhead.

- Read/Write Memory: \(2N\) bytes.

Total Overhead: 30 µs.

Total Memory Traffic: \(4N\) bytes.

Scenario two: Compiled Mode (Fusion). The compiler fuses this into one kernel: FusedAddRelu.

Execution:

- Launch FusedKernel: 15 µs overhead.

- Read/Write Memory: \(2N\) bytes (intermediate result stays in registers).

Total Overhead: 15 µs (2\(\times\) speedup).

Total Memory Traffic: \(2N\) bytes (2\(\times\) bandwidth efficiency).

The Conclusion: Compilation is not magic; it is overhead amortization. For small, element-wise operations such as LayerNorm, Gaussian Error Linear Unit (GELU), and Add, overhead often exceeds compute time by 10–100\(\times\). Fusing them is the only way to use the hardware effectively.
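The napkin math generalizes to any chain of element-wise operations. The sketch below encodes the same assumptions as the scenarios (15 µs of combined dispatch and launch overhead per kernel, and one read plus one write of \(N\) bytes per kernel); the function name and the cost model are illustrative simplifications, not measurements.

```python
LAUNCH_OVERHEAD_US = 15.0  # 10 us Python dispatch + 5 us kernel launch (from the text)


def elementwise_chain_cost(num_ops: int, fused: bool) -> tuple[float, int]:
    """Return (overhead in microseconds, memory traffic in units of N bytes)."""
    launches = 1 if fused else num_ops
    overhead_us = launches * LAUNCH_OVERHEAD_US
    traffic = launches * 2  # each kernel reads its input once and writes its output once
    return overhead_us, traffic


for n in (2, 10):
    eager = elementwise_chain_cost(n, fused=False)
    fused = elementwise_chain_cost(n, fused=True)
    print(f"{n} ops: eager={eager}, fused={fused}")
```

With two operations the model reproduces the 30 µs versus 15 µs and \(4N\) versus \(2N\) figures. With ten operations the eager cost grows to 150 µs and \(20N\) while the fused cost stays flat, which is why longer fusible chains benefit more.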

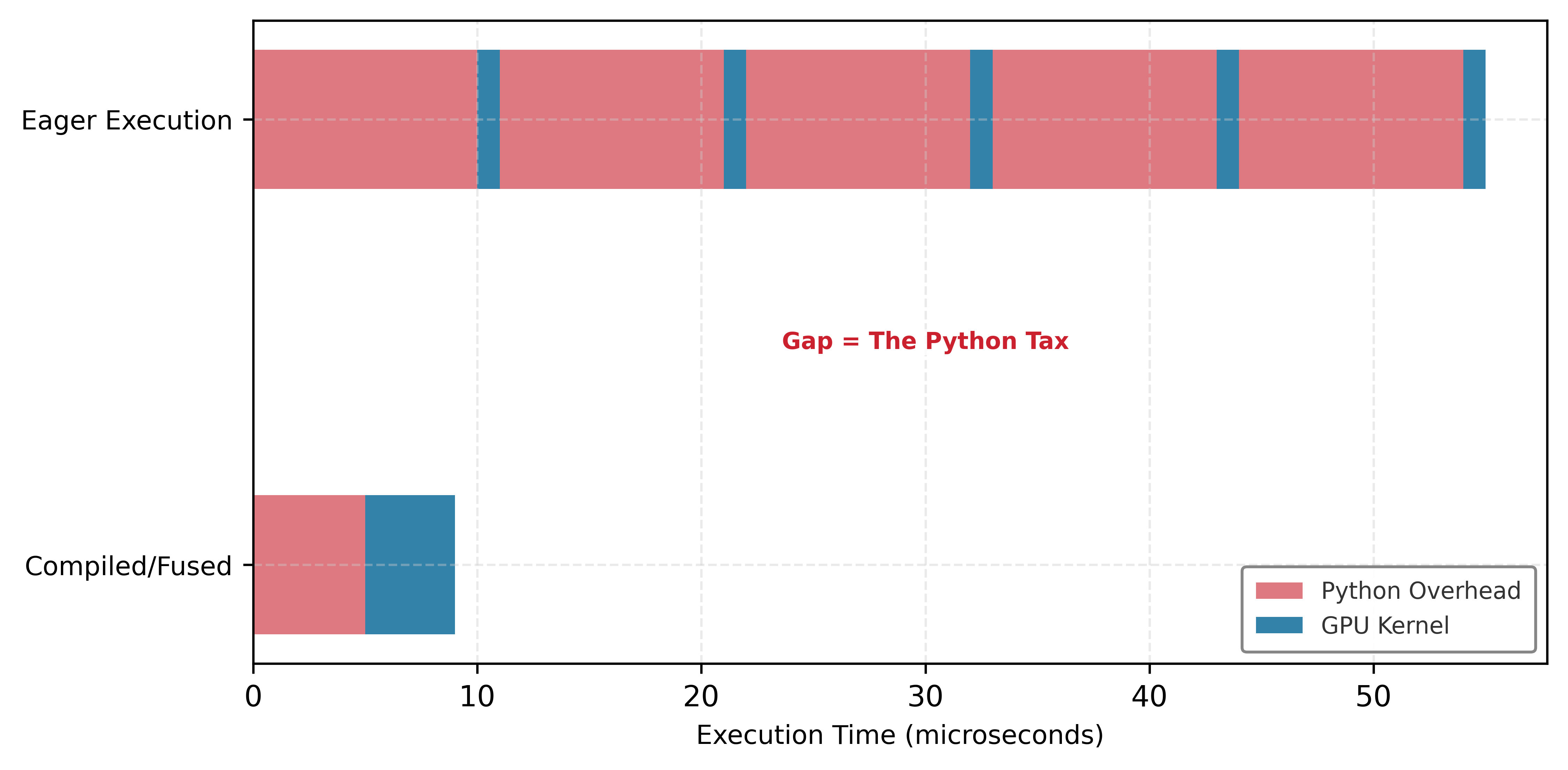

See this tax play out concretely in Figure 6. Notice how eager execution (top) creates “gaps” where the GPU sits idle while Python dispatches the next kernel. The blue compute regions are short; the red dispatch regions are comparatively long. Compilation (bottom) fuses these operations into a single kernel launch, eliminating the gaps entirely so the GPU spends nearly all its time computing rather than waiting.

The natural question is: can this fusion happen automatically? PyTorch 2.0’s torch.compile14 attempts exactly this by capturing eager code and compiling it into fused kernels without requiring users to write custom CUDA.15

14 torch.compile: It enables this automatic fusion by intercepting Python bytecode (via TorchDynamo) to extract a computational graph from unmodified eager code. This graph is then compiled into optimized kernels, trading a one-time compilation delay for a permanent 1.3–\(2\times\) throughput gain on transformer models by reducing kernel launch overhead.

15 CUDA (Compute Unified Device Architecture): NVIDIA’s parallel computing platform (2007) serving as the foundational layer between high-level Python operations and GPU silicon. When PyTorch executes torch.matmul(A, B), the call traverses the framework’s dispatcher, selects a cuBLAS kernel, and launches it on the GPU. Each launch incurs 5–20 \(\mu\)s of CPU-side overhead. For small operations, this dispatch overhead (\(L_{\text{lat}}\)) exceeds the useful compute time, which is why compilation (fusing \(N\) operations into one kernel launch) yields speedups proportional to the reduction in launch count rather than the reduction in arithmetic.

Architecture: Three-stage compilation pipeline

torch.compile consists of three coordinated components, each handling a distinct phase of the compilation process:

TorchDynamo (graph capture): Intercepts Python bytecode execution using CPython’s PEP 523 frame evaluation API. Unlike torch.jit.trace, which records a single execution path and silently ignores alternative branches, TorchDynamo also captures operations during execution but inserts graph breaks when it encounters unsupported code (print statements, arbitrary Python), ensuring correctness rather than silent failure. The current graph is finalized for compilation, unsupported code executes eagerly, and a new graph begins after.

FX Graph (intermediate representation): Operations captured by TorchDynamo are converted to FX graph format, PyTorch’s node-based directed acyclic graph where each node represents an operation with explicit inputs and outputs. The FX graph serves as PyTorch’s analog to LLVM IR: a standardized representation that separates frontend (Python code capture) from backend (hardware-specific code generation). This design allows different backends such as TorchInductor, Open Neural Network Exchange (ONNX) Runtime, and TensorRT to consume FX graphs and enables optimization passes such as dead code elimination, constant folding, and pattern matching for fusion opportunities.

TorchInductor16 (code generation): The default backend that compiles FX graphs to optimized machine code. For CUDA GPUs, TorchInductor generates kernels in Triton17, a Python-based GPU kernel language that compiles to Parallel Thread Execution (PTX)18. For CPUs, it generates C++ code with vectorization instructions (AVX2, AVX-512). TorchInductor applies three key optimizations: kernel fusion (combining operations to reduce memory traffic), memory layout optimization (choosing tensor layouts that minimize access overhead), and autotuning (measuring performance across implementation variants to select the fastest).

16 TorchInductor: The use of Triton to generate GPU code is a deliberate trade-off that prioritizes fast JIT compilation speed over achieving maximum hardware performance. This makes on-the-fly optimization practical for an eager-execution framework, even if the resulting kernels are 5–20 percent slower than highly optimized, hand-written CUDA.

17 Triton: TorchInductor generates Triton because its Python-like syntax provides a simpler, more stable compilation target than raw CUDA, making automated code generation tractable. This abstraction allows the compiler to handle complex GPU details like memory coalescing automatically, a requirement for performing kernel fusion. The accepted trade-off is achieving 80–95 percent of hand-tuned CUDA performance in exchange for enabling the compiler to effectively autotune kernels and reduce development time from weeks to hours.

18 PTX: An intermediate representation (IR) from NVIDIA that serves as a stable compilation target for high-level GPU languages like Triton. This allows TorchInductor to generate portable code, as the NVIDIA driver—not the framework—is responsible for the final translation to hardware-specific machine code (SASS). This forward compatibility, however, can result in performance that is 10–15 percent slower than kernels hand-tuned for a specific GPU architecture.

The generated code is cached on disk: TorchInductor maintains its own compilation cache, and Triton kernels are additionally cached in ~/.triton/cache/. Subsequent runs with the same input shapes can skip compilation and directly execute cached code.

Execution flow

The first execution follows a multi-step process: TorchDynamo intercepts bytecode and records operations into an FX graph, the FX graph is passed to TorchInductor for compilation (5–30 seconds for transformer models), and the compiled code is cached and executed. Subsequent executions with the same input shapes dispatch directly to compiled code with microseconds of overhead. If input shapes change, TorchInductor must recompile for the new shapes (shape specialization). PyTorch maintains separate compiled versions for each unique shape configuration.
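The compile-once, reuse-per-shape behavior described here is essentially a cache keyed by input shape. The sketch below is a toy model of that idea; the `ShapeKeyedCache` class and its placeholder "compiler" are hypothetical and say nothing about how PyTorch stores or looks up compiled code internally.

```python
class ShapeKeyedCache:
    """Toy stand-in for a compiler cache keyed by input shape (hypothetical)."""

    def __init__(self):
        self.compiled = {}      # shape tuple -> "compiled" callable
        self.compile_count = 0  # how many times the compile cost was paid

    def _compile(self, shape):
        # Placeholder for the expensive step (seconds in a real compiler).
        self.compile_count += 1
        return lambda values: [v * 2 + 1 for v in values]

    def run(self, shape, values):
        key = tuple(shape)
        if key not in self.compiled:       # cache miss: specialize for this shape
            self.compiled[key] = self._compile(key)
        return self.compiled[key](values)  # cache hit: direct dispatch


cache = ShapeKeyedCache()
cache.run((32, 128), [1.0])  # first call: compiles
cache.run((32, 128), [2.0])  # same shape: reuses compiled code
cache.run((64, 128), [3.0])  # new shape: compiles again
print(cache.compile_count)   # prints 2
```

The design choice this illustrates is the trade between specialization and reuse: keying on the exact shape lets the compiler assume fixed sizes, but every new shape pays the compile cost again.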

Graph breaks: Causes and detection

Graph breaks occur when torch.compile encounters code it cannot compile, forcing execution to fall back to eager mode. Understanding graph break causes provides the foundation for achieving good performance.

Data-dependent control flow requires tensor values unavailable at compile time, as shown in Listing 10.

@torch.compile

def conditional_compute(x):

    if x.sum() > 0: # Graph break: tensor value needed

        return x * 2

    else:

        return x * 3

# Creates two compiled regions: operations before

# and after the if statement

# The if statement itself executes eagerly

TorchDynamo creates a graph break: operations before the if statement are compiled, the if statement executes eagerly (evaluating which branch to take), and the chosen branch is compiled as a separate region.

Unsupported operations also cause graph breaks, as Listing 11 demonstrates.

A print call forces a graph break, splitting compiled code into two regions with eager execution in between.

@torch.compile

def debug_compute(x):

    y = x * 2

    print(f"y = {y}") # Graph break: I/O operation

    z = y + 1

    return z

# Creates two compiled regions: before and after print

Common unsupported operations include I/O (print, file operations), custom Python objects, and calls to non-PyTorch libraries. Each graph break incurs overhead: tensors must be marshalled from compiled code back to Python (possibly copying from GPU to CPU), the eager operation executes, and results are marshalled into the next compiled region.
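The way a single unsupported operation splits a function into regions can be modeled in a few lines. This is a toy partitioner over a list of operation names, written only to illustrate the idea; the `UNSUPPORTED` set and the `split_into_regions` helper are hypothetical and do not reflect TorchDynamo's internals.

```python
UNSUPPORTED = {"print", "file_write"}  # illustrative set of graph-breaking ops


def split_into_regions(ops):
    """Partition a linear op sequence into compiled and eager regions."""
    regions, current = [], []
    for op in ops:
        if op in UNSUPPORTED:
            if current:                       # finalize the graph captured so far
                regions.append(("compiled", current))
                current = []
            regions.append(("eager", [op]))   # the break itself runs eagerly
        else:
            current.append(op)
    if current:
        regions.append(("compiled", current))
    return regions


# Mirrors debug_compute: y = x * 2; print(...); z = y + 1
regions = split_into_regions(["mul", "print", "add"])
print(regions)
```

For this input the result is a compiled region holding `mul`, an eager region holding `print`, and a second compiled region holding `add`. Moving the `print` to the end of the list leaves a single compiled region, which is the effect of hoisting unsupported code out of the hot path.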

Shape changes prevent compiled code reuse, as Listing 12 illustrates.

@torch.compile

def variable_length(x, length):

    return x[:, :length] # Shape changes each call

# Each unique length can trigger recompilation

x = torch.randn(8, 16) # Example input (shape chosen for illustration)

for i in range(10):

    result = variable_length(x, i) # Up to 10 compilations if every shape is specialized

Detect graph breaks using Listing 13.

Setting TORCH_LOGS to graph_breaks prints each break location and reason during execution.

TORCH_LOGS="graph_breaks" python train.py

The log reports each break location and reason, for example: Graph break in user code at file.py:15/Reason: call to unsupported function print. Minimizing graph breaks is key to performance: move unsupported operations outside compiled regions, replace data-dependent control flow with conditional execution (torch.where), or accept eager execution for inherently dynamic sections.

Compilation modes and backends

As a project matures from prototyping to production, engineers progressively increase compilation aggressiveness. The default mode (mode='default') applies moderate optimization with fast compilation (5–30 seconds for transformer models), making it suitable for development and training where compilation overhead is amortized over many iterations. When deploying an inference server with fixed input shapes, mode='reduce-overhead' minimizes Python interpreter overhead by aggressively capturing operations and enabling CUDA graphs that batch kernel launches, improving throughput by 20–40 percent over the default. For production training that will run for days, mode='max-autotune' generates and benchmarks multiple implementation variants for each operation, increasing compilation time (minutes to hours for large models) but improving runtime performance by 10–30 percent. This progression—default for development, reduce-overhead for inference, max-autotune for long training runs—mirrors the Compilation Continuum principle we formalize later.

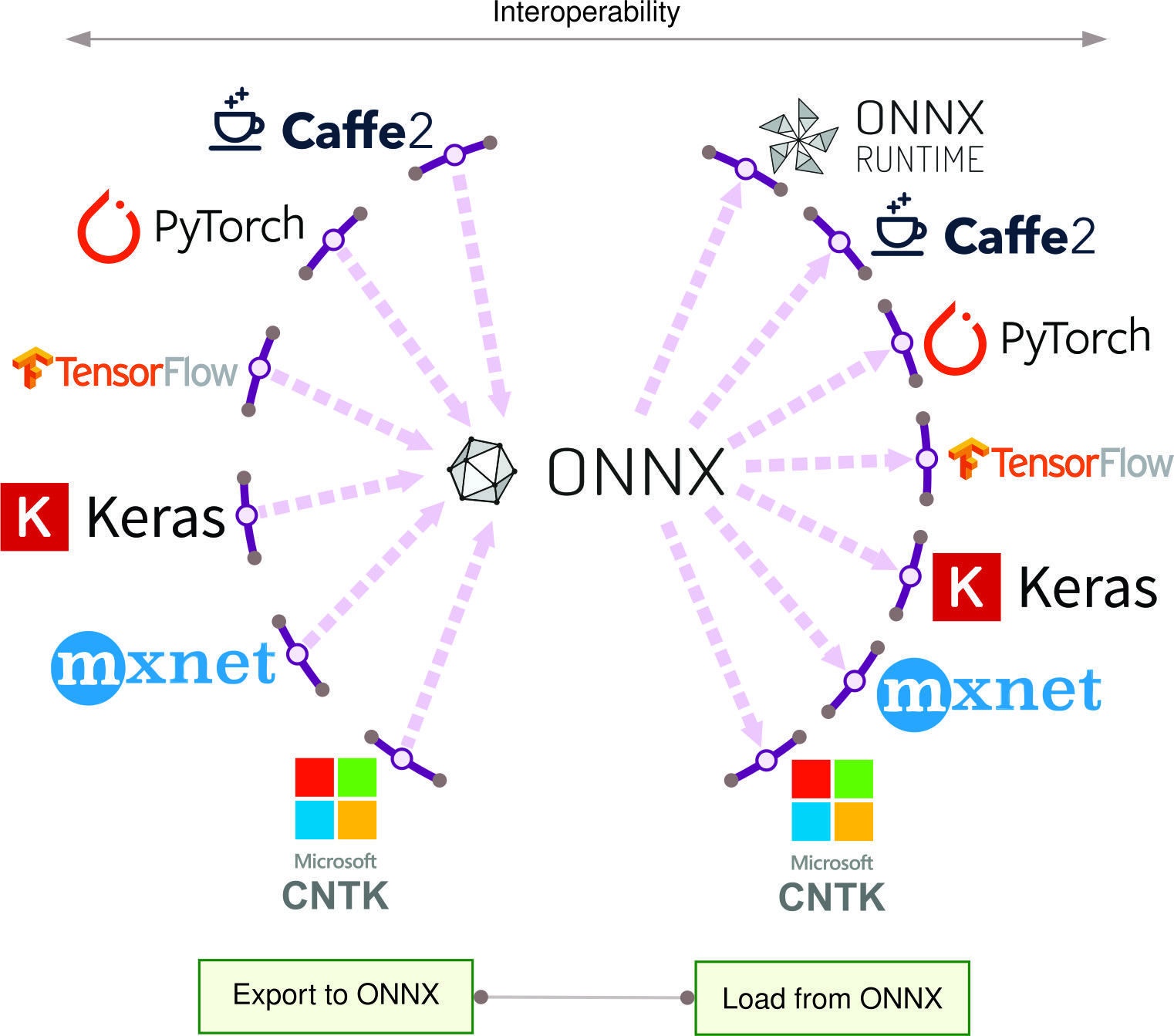

The compilation mode controls how aggressively to optimize; the backend controls what target to optimize for. TorchInductor (the default) generates Triton kernels for CUDA and C++ for CPU, providing the best general-purpose performance for both training and inference. When cross-platform deployment is required, the ONNX Runtime backend exports the FX graph to ONNX format, enabling execution on CPUs, GPUs, mobile, and edge devices—though limited ONNX operation coverage may cause more graph breaks. For maximum inference throughput on NVIDIA GPUs, the TensorRT backend compiles to NVIDIA’s inference engine with aggressive int8 quantization, layer fusion, and kernel autotuning, often achieving 1.5–2\(\times\) speedup over TorchInductor. The trade-off is clear: each backend narrows the target to unlock deeper optimization, echoing the flexibility-vs.-performance axis that distinguishes eager from graph execution.

Practical example: Measuring speedup

Listing 14 implements correct GPU benchmarking methodology, incorporating CUDA synchronization, warmup iterations to exclude compilation time, and sufficient iterations to amortize measurement overhead:

import torch

import time

def forward(x, w):

    return torch.matmul(x, w).relu()

x = torch.randn(1024, 1024, device="cuda")

w = torch.randn(1024, 512, device="cuda")

# Eager mode benchmark

forward(x, w) # Warmup: exclude one-time CUDA initialization

torch.cuda.synchronize() # Ensure GPU operations complete

start = time.time()

for _ in range(100):

    y = forward(x, w)

torch.cuda.synchronize() # Wait for GPU kernel completion

eager_time = time.time() - start

# Compiled mode benchmark

forward_compiled = torch.compile(forward)

forward_compiled(x, w) # Warmup: trigger compilation

torch.cuda.synchronize()

start = time.time()

for _ in range(100):

    y = forward_compiled(x, w)

torch.cuda.synchronize()

compiled_time = time.time() - start

print(f"Speedup: {eager_time/compiled_time:.2f}x")

# Typical: 2-5x speedup for matrix operations

Critical benchmarking details: (1) Use torch.cuda.synchronize() because CUDA operations are asynchronous; without synchronization, timing measures only kernel launch time, not execution time. (2) Warm up by calling each function once before timing, which excludes compilation and one-time initialization from the measurement. (3) Run 100+ iterations to amortize measurement overhead.
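The three benchmarking rules can be packaged into a small reusable helper. The sketch below is framework-agnostic: the `benchmark` function and its `sync` parameter are assumptions introduced for illustration, with `sync` standing in for a call such as torch.cuda.synchronize() when timing GPU work. Here it is exercised with a CPU-only workload and a counting stub so it runs anywhere.

```python
import time


def benchmark(fn, *, warmup=3, iters=100, sync=lambda: None):
    """Return mean seconds per call, excluding warmup and bracketing with sync()."""
    for _ in range(warmup):  # rule 2: warm up before timing
        fn()
    sync()                   # rule 1: drain pending async work before starting the clock
    start = time.perf_counter()
    for _ in range(iters):   # rule 3: many iterations amortize timer overhead
        fn()
    sync()                   # rule 1: wait for the last call to actually finish
    return (time.perf_counter() - start) / iters


# CPU-only demonstration with a stub in place of a device synchronize call.
calls = {"fn": 0, "sync": 0}


def work():
    calls["fn"] += 1
    sum(range(1000))


def fake_sync():
    calls["sync"] += 1


per_iter = benchmark(work, warmup=3, iters=100, sync=fake_sync)
print(f"{per_iter * 1e6:.1f} us per call")
```

Passing the synchronization step as a parameter keeps the timing logic identical for eager and compiled variants, so the only thing that differs between the two measurements is the function under test.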

Systems implications

First execution includes compilation time: 5–10 s for small models, 30–60 s for BERT-base transformers, 5–10 min for GPT-3 scale models. This overhead is amortized across training (compile once, train for thousands of iterations) but impacts development iteration time. Compiled kernels are cached on disk; subsequent runs skip compilation.

Compilation adds memory overhead: 100–500 MB for FX graph construction, 500 MB–2 GB peak during Triton compilation, 10–100 MB per compiled graph for storage. Runtime memory usage is similar to eager mode (kernel fusion can reduce intermediate tensors but compiled code may allocate temporary buffers). Compiled models typically use 90–110 percent of eager mode memory.

Errors in compiled code produce stack traces pointing to generated code, not source Python code. Print statements inside compiled regions cause graph breaks (executed eagerly, not compiled). For debugging, remove @torch.compile to revert to eager execution, fix bugs, then re-enable compilation. Use TORCH_COMPILE_DEBUG=1 for verbose compilation logs.

When to use torch.compile

The decision follows directly from the compilation cost model. Long training runs amortize compilation overhead across hundreds of iterations, and stable architectures with fixed control flow minimize graph breaks—making training the strongest use case. Inference is equally compelling: a deployed model compiles once at startup and serves thousands of requests, where mode='reduce-overhead' minimizes per-request overhead. Compilation should be deferred, however, during rapid prototyping, where the overhead slows iteration time and the architecture has not yet stabilized. Models with frequent graph breaks or dynamic shape changes prevent effective compilation, and debugging is harder in compiled mode because error locations point to generated code rather than source Python. The practical strategy is to develop in eager mode, stabilize the architecture, then enable compilation for training and deployment.
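The amortization argument can be turned into a rough break-even estimate: compilation pays off once the accumulated per-iteration saving exceeds the one-time compile cost. The sketch below is a simplified model introduced here for illustration (it ignores recompilation, graph breaks, and development-time iterations), and the timings in the example are made-up inputs, not measurements.

```python
import math


def break_even_iterations(t_compile_s: float, t_eager_s: float, t_compiled_s: float) -> float:
    """Iterations needed before compile time is recovered; inf if there is no saving."""
    saving_per_iter = t_eager_s - t_compiled_s
    if saving_per_iter <= 0:
        return math.inf  # compiled code is no faster, so compilation never pays off
    return math.ceil(t_compile_s / saving_per_iter)


# Hypothetical timings: 30 s to compile, 0.5 s eager step, 0.25 s compiled step.
n = break_even_iterations(t_compile_s=30.0, t_eager_s=0.5, t_compiled_s=0.25)
print(n)  # prints 120
```

A training run of tens of thousands of steps clears a threshold like this almost immediately, while a prototyping session that restarts every few dozen steps may never reach it, which matches the guidance to develop in eager mode and compile once the architecture stabilizes.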

Comparison of execution models

Table 2 contrasts the three execution models across six dimensions, revealing that hybrid JIT compilation achieves most of static graph performance while preserving much of eager execution’s flexibility:

| Aspect | Eager + Autograd Tape (PyTorch default) | Static Graph (TensorFlow 1.x) | JIT Compilation (torch.compile) |
|---|---|---|---|
| Execution Model | Immediate | Deferred | Hybrid |
| Graph Construction | During forward pass | Before execution | First execution (cached) |
| Optimization | None (per-operation) | Ahead-of-time | JIT compilation |
| Dynamic Control Flow | Full support | Limited (static unroll) | Partial (graph breaks) |
| Debugging | Easy (standard Python) | Difficult (symbolic) | Moderate (mixed) |
| Performance | Baseline | High (optimized) | High (compiled regions) |

Eager mode’s primary value is in the “Workflow Iteration” loop (ML Workflow): it allows using standard Python debuggers (like PDB) to inspect variables mid-execution, whereas graph-mode debugging often requires specialized framework tools. This immediate feedback accelerates the prototyping phase of the ML lifecycle.

Beyond these core execution trade-offs, Table 3 highlights additional systems-level distinctions between static and dynamic approaches:

| Aspect | Static Graphs | Dynamic Graphs |
|---|---|---|
| Memory Management | Precise allocation planning, optimized memory usage | Flexible but potentially less efficient |
| Hardware Utilization | Can generate highly optimized hardware-specific code | May sacrifice hardware-specific optimizations |
| Research Velocity | Slower iteration due to define-then-run requirement | Faster prototyping and model experimentation |
| Integration with Legacy Code | More separation between definition and execution | Natural integration with imperative code |

These trade-offs are not binary choices. Modern frameworks offer a spectrum of options, which raises the quantitative question of where on this spectrum a given project should operate.

Quantitative principles of execution

These execution models present a spectrum of trade-offs, but engineers need more than intuition to navigate them. Two quantitative principles formalize the decision. The Compilation Continuum Principle establishes when the performance gains from compilation justify its development cost, expressed as a ratio of production executions to development iterations. The Dispatch Overhead Law quantifies the per-operation cost of framework flexibility, revealing why small operations in eager mode can spend more time in Python overhead than in actual computation. Together, these principles transform framework selection from subjective preference into measurable engineering analysis.

The compilation continuum principle

The Execution Problem demands a quantitative principle: when should a project compile?

The execution models form a continuum from maximum flexibility to maximum optimization, visualized in Equation 1:

\[ \text{Eager} \xrightarrow{\text{tracing}} \text{JIT} \xrightarrow{\text{AOT}} \text{Static Graph} \xrightarrow{\text{synthesis}} \text{Custom Hardware} \tag{1}\]

Each step rightward sacrifices flexibility for performance. The practical question is where on this continuum a given project should operate. The optimal compilation strategy depends on the ratio of development iterations to production executions (Equation 2):

\[ \text{Compilation Benefit} = \frac{N_{\text{prod}} \cdot (T_{\text{eager}} - T_{\text{compiled}})}{T_{\text{compile}} + N_{\text{dev}} \cdot T_{\text{compile}}} \tag{2}\]

Where:

- \(N_{\text{prod}}\) = number of production executions (dimensionless count: inference requests, training steps)

- \(N_{\text{dev}}\) = number of development iterations requiring recompilation (dimensionless count)

- \(T_{\text{eager}}\) = time per execution in eager mode (seconds)

- \(T_{\text{compiled}}\) = time per execution in compiled mode (seconds)

- \(T_{\text{compile}}\) = one-time compilation cost (seconds)

Decision Rule: Compile when \(\text{Compilation Benefit} > 1\). The ratio is dimensionless.
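The decision rule can be sketched as a small calculation. The function below is a minimal illustration of Equation 2, not part of any framework API. The per-image times come from the ResNet-50 row of Table 4 with a 30-second compile, while the two scenarios (their `n_prod` and `n_dev` values) are hypothetical numbers chosen only to show both sides of the threshold.

```python
def compilation_benefit(n_prod, n_dev, t_eager, t_compiled, t_compile):
    """Equation 2: production time saved divided by total time spent compiling."""
    time_saved = n_prod * (t_eager - t_compiled)
    time_compiling = t_compile + n_dev * t_compile
    return time_saved / time_compiling


# Per-image times implied by Table 4 (ResNet-50), in seconds.
T_EAGER = 1 / 1450
T_COMPILED = 1 / 2150
T_COMPILE = 30.0

# Hypothetical prototyping session: few images processed, ten recompilations.
prototyping = compilation_benefit(
    n_prod=10_000, n_dev=10,
    t_eager=T_EAGER, t_compiled=T_COMPILED, t_compile=T_COMPILE,
)

# Hypothetical training run: one pass over 1.28M images, no recompilation.
training = compilation_benefit(
    n_prod=1_280_000, n_dev=0,
    t_eager=T_EAGER, t_compiled=T_COMPILED, t_compile=T_COMPILE,
)

for name, benefit in [("prototyping", prototyping), ("training", training)]:
    verdict = "compile" if benefit > 1 else "stay eager"
    print(f"{name}: benefit = {benefit:.3f} -> {verdict}")
```

Under these assumptions the prototyping scenario falls far below 1 and the training scenario lands well above it, which matches the regimes discussed later in this section.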

Table 4 provides representative throughput data across execution modes and model architectures:

| Model | Eager (img/sec) | torch.compile (img/sec) | TensorRT (img/sec) | Compile Time (seconds) |

|---|---|---|---|---|

| ResNet-50 | 1,450 | 2,150 | 3,800 | 15–30 |

| BERT-Base | 380 | 520 | 890 | 30–60 |

| ViT-B/16 | 620 | 950 | 1,650 | 25–45 |

| GPT-2 (124M) | 180 | 260 | 420 | 45–90 |
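To make the table easier to compare across models, the throughput columns can be converted into speedups relative to eager mode. The snippet below is a small sketch that only re-expresses the numbers in Table 4; the names `TABLE_4` and `speedups` are illustrative.

```python
# Throughput in img/sec copied from Table 4: (eager, torch.compile, TensorRT).
TABLE_4 = {
    "ResNet-50": (1450, 2150, 3800),
    "BERT-Base": (380, 520, 890),
    "ViT-B/16": (620, 950, 1650),
    "GPT-2 (124M)": (180, 260, 420),
}


def speedups(eager, compiled, tensorrt):
    """Return (torch.compile, TensorRT) throughput relative to eager mode."""
    return compiled / eager, tensorrt / eager


for model, row in TABLE_4.items():
    jit, trt = speedups(*row)
    print(f"{model:<13} torch.compile {jit:.2f}x   TensorRT {trt:.2f}x")
```

For ResNet-50 this gives roughly 1.48\(\times\) for torch.compile and 2.62\(\times\) for TensorRT.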

These throughput differences across execution modes raise a practical question—which framework execution strategy best serves each workload archetype.

Lighthouse 1.1: Framework Strategy by Archetype

| Archetype | Dominant Iron Law Term | Optimal Framework Strategy | Rationale |

|---|---|---|---|

| ResNet-50 (Compute Beast) | \(\frac{O}{R_{\text{peak}} \cdot \eta}\) (Compute) | TensorRT (inference); torch.compile (training) | Kernel fusion maximizes MFU; compute-bound workloads benefit most from optimization |

| GPT-2 (Bandwidth Hog) | \(\frac{D_{\text{vol}}}{BW}\) (Memory Bandwidth) | torch.compile | Kernel fusion reduces HBM round-trips; keeps data in cache to mitigate bandwidth |

| DLRM (Sparse Scatter) | \(\frac{D_{\text{vol}}}{BW}\) (Random Access) + \(T_{\text{network}}\) | Eager with specialized kernels (FBGEMM) | Embedding lookups are inherently irregular and dynamic; compilation gains are small |

| DS-CNN (Tiny Constraint) | \(L_{\text{lat}}\) (Overhead) | AOT compilation (TFLite, ONNX) | Sub-ms inference; every microsecond of Python overhead is unacceptable |

Key insight: Compilation benefits scale with how much of the workload is optimizable. Compute Beasts (Table 4: ResNet-50 sees 2.6\(\times\) speedup from TensorRT) benefit most. Sparse Scatter workloads gain little because their bottleneck (embedding lookups) is inherently irregular.

This principle has concrete implications across three regimes. In research prototyping (\(N_{\text{dev}} \gg N_{\text{prod}}\)), teams should stay eager. If the architecture changes every few minutes, compilation overhead dominates. A 30-second compile time with ten iterations/hour means five minutes lost to compilation per hour, often more than the runtime savings.

For training runs (\(N_{\text{prod}} \gg N_{\text{dev}}\)), compilation pays off. A typical training run executes millions of forward/backward passes, so even 60 seconds of compilation amortizes to microseconds per step. From Table 4, torch.compile provides ~48 percent speedup on ResNet-50 (2,150 vs. 1,450 img/sec); this pays off after the breakeven point in Equation 3:

\[ N_{\text{breakeven}} = \frac{T_{\text{compile}}}{T_{\text{eager}} - T_{\text{compiled}}} = \frac{30\text{s}}{(1/1450 - 1/2150)\text{s/img}} \approx 134{,}000 \text{ images} \tag{3}\]

For ImageNet (1.28M training images), compilation pays off within the first epoch.
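The breakeven arithmetic in Equation 3 can be reproduced with a few lines of Python. This is a minimal sketch using the ResNet-50 throughputs from Table 4 and the 30-second compile time used above; the function name `breakeven_items` is illustrative.

```python
def breakeven_items(t_compile_s, eager_throughput, compiled_throughput):
    """Equation 3: items needed before compile time is repaid by faster execution."""
    saving_per_item = 1 / eager_throughput - 1 / compiled_throughput
    if saving_per_item <= 0:
        raise ValueError("compiled mode must be faster than eager mode")
    return t_compile_s / saving_per_item


IMAGENET_TRAIN_IMAGES = 1_280_000

n_breakeven = breakeven_items(30.0, eager_throughput=1450, compiled_throughput=2150)
epoch_fraction = n_breakeven / IMAGENET_TRAIN_IMAGES

print(f"breakeven after {n_breakeven:,.0f} images "
      f"({epoch_fraction:.1%} of one ImageNet epoch)")
```

The result is about 134,000 images, roughly a tenth of one epoch, so every image after that point is processed at a net gain.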

For production inference (\(N_{\text{dev}} \approx 0\), \(N_{\text{prod}} \rightarrow \infty\)), teams should maximize compilation. With no development iterations and potentially millions of requests, every optimization matters. Using mode='max-autotune' despite hour-long compilation is worthwhile because the cost is amortized over the deployment lifetime.

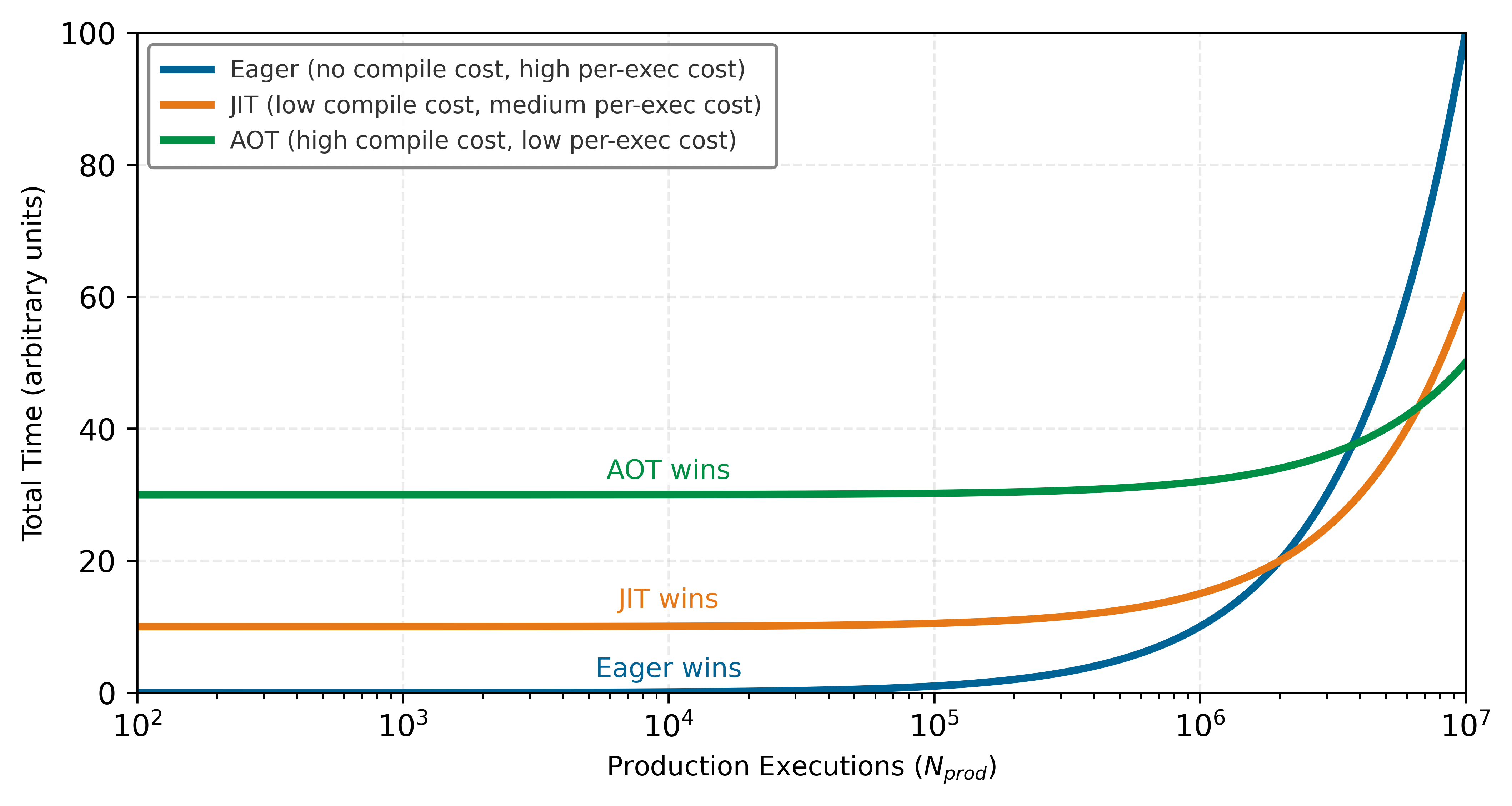

These three regimes create distinct regions in the compilation decision space. Figure 7 maps out these regions so engineers can identify where each strategy wins. Watch for the crossover points: the steep eager line (highest per-execution cost) eventually overtakes JIT’s moderate slope, while the gentlest compiled line (lowest per-execution cost but largest upfront investment) wins only after millions of executions. The slopes reveal per-execution cost; the vertical offsets reveal compilation overhead. A project’s position on the x-axis determines which line it should be on.

The dispatch overhead law

A second principle emerges from the Dispatch Overhead Equation (Equation 4): when does framework overhead, rather than compute or memory, dominate execution time? Let \(N_{\text{ops}}\) be the number of operations (count), \(t_{\text{dispatch}}\) the per-operation dispatch overhead (seconds), and \(T_{\text{compute}}\) and \(T_{\text{memory}}\) the total compute and memory times (seconds). Framework overhead dominates when operations are small relative to dispatch cost:

\[ \text{Overhead Ratio} = \frac{N_{\text{ops}} \cdot t_{\text{dispatch}}}{T_{\text{compute}} + T_{\text{memory}}} \tag{4}\]

When Overhead Ratio \(> 1\), the model is overhead-bound. Compilation provides maximum benefit for overhead-bound workloads because it eliminates per-operation dispatch.

From the case study in Section 1.9, we can quantify this effect.

The per-operation dispatch latency, accumulated over every operation in a forward pass, acts as a dispatch tax on execution. We define \(T_{\text{hw}}\) as hardware execution time and \(T_{\text{sw}}\) as software overhead time; both are measured in seconds.

Napkin Math 1.2: The Dispatch Tax

Problem: When does Python overhead kill performance?

Scenario one: Small multilayer perceptron (MLP) (Overhead Bound)

- Compute: 6 small matrix/element-wise operations.

- Hardware Time: \(T_{\text{hw}}\) ≈ 2.6 μs (mostly memory latency).

- Software Overhead: \(T_{\text{sw}}\) ≈ 6 ops \(\times\) 5.0 μs/op = 30 μs.

- Ratio: 30 / 2.6 ≈ 11.5.

- Conclusion: The system spends about 92 percent of its time (30 of 32.6 μs) in Python dispatch. Eliminating that overhead entirely through compilation would yield up to a 12.5\(\times\) speedup (32.6 / 2.6).

Scenario two: GPT-3 Layer (Compute Bound)

- Compute: Huge matrix multiplications.

- Hardware Time: \(T_{\text{hw}}\) ≈ 100 ms = 100,000 μs.

- Software Overhead: \(T_{\text{sw}}\) ≈ 50 μs.

- Ratio: 50 / 100,000 = 0.0005.

- Conclusion: Python overhead is negligible. Compilation helps only via kernel fusion (memory bandwidth), not dispatch elimination.
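Both scenarios can be checked with a short calculation. The sketch below assumes that software dispatch and hardware execution run strictly one after the other. That is a simplification: asynchronous GPU execution can hide part of the dispatch cost. The function name `dispatch_tax` and its return fields are illustrative, and the "maximum speedup" is the ideal case where compilation removes all dispatch overhead.

```python
def dispatch_tax(t_sw_us, t_hw_us):
    """Summarize the dispatch tax for one forward pass (times in microseconds).

    Assumes software overhead and hardware time do not overlap.
    """
    total = t_sw_us + t_hw_us
    return {
        "overhead_ratio": t_sw_us / t_hw_us,     # Equation 4
        "overhead_fraction": t_sw_us / total,    # share of wall time in dispatch
        "max_speedup": total / t_hw_us,          # if all dispatch were eliminated
    }


# Scenario one: 6 operations at 5.0 us dispatch each, 2.6 us of hardware time.
small_mlp = dispatch_tax(t_sw_us=6 * 5.0, t_hw_us=2.6)

# Scenario two: 50 us of total dispatch against 100 ms of hardware time.
gpt3_layer = dispatch_tax(t_sw_us=50.0, t_hw_us=100_000.0)

for name, result in [("small MLP", small_mlp), ("GPT-3 layer", gpt3_layer)]:
    print(f"{name}: ratio={result['overhead_ratio']:.4f}, "
          f"overhead={result['overhead_fraction']:.1%}, "
          f"max speedup={result['max_speedup']:.2f}x")
```

The small MLP is overhead-bound (ratio well above 1), while for the GPT-3 layer the ideal gain from removing dispatch is a fraction of a percent.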

The principle’s implication is that small models benefit disproportionately from compilation. A 100-parameter toy model might see 10\(\times\) speedup from torch.compile, while a 175 B-parameter model sees only 1.3\(\times\). This explains why compilation matters most for efficient inference on smaller, deployed models.

The dispatch tax analysis reveals that small operations are overhead-bound regardless of hardware capability. This observation matters most at the extreme edge of the deployment spectrum, where the entire Python runtime is itself an unacceptable overhead.

Frameworks for the edge: TinyML and micro-runtimes

The compilation continuum reaches its extreme at the far edge. While cloud frameworks like PyTorch and TensorFlow 2.x prioritize flexibility through eager execution, TinyML19 systems operating on microcontrollers (MCUs) with kilobytes of memory cannot afford the overhead of a Python interpreter or a dynamic runtime.

19 TinyML: Systems designed for microcontrollers (MCUs) that cannot afford the memory or processing overhead of a Python interpreter. Instead of flexible eager execution, frameworks compile models ahead-of-time (AOT) into self-contained C/C++ executables with no dynamic memory allocation. This is a hard requirement, as a single malloc() failure on a device with just 256 KB of RAM is unrecoverable.

Lighthouse 1.2: Smart Doorbell (TinyML)

The Constraint: A standard PyTorch runtime occupies ~500 MB. The Python interpreter itself occupies ~20 MB. Both are orders of magnitude larger than the entire device.

The Framework Solution: Micro-frameworks like TensorFlow Lite Micro (TFLM) and PyTorch ExecuTorch solve this through Extreme AOT Compilation:

- Static memory planning: The framework calculates the exact memory address for every tensor at compile time. There is no dynamic malloc() or garbage collection.

- Kernel specialization: Only the specific kernels used by the model (for example, Conv2D, DepthwiseConv) are compiled into the binary. Unused code is stripped away.

- No-interpreter execution: The model is converted into a flat sequence of function calls or a simple “Command Buffer” that the MCU executes directly in C/C++.

The Silicon Contract: On TinyML devices, the contract is strictly Memory-Bound. The framework’s primary job is to ensure the model’s intermediate activations (the “working set”) fit within the MCU’s tiny SRAM.
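Static memory planning can be illustrated with a toy offline planner. The sketch below is a simplified greedy strategy, not the planner used by TFLM or ExecuTorch, and it ignores alignment. The `TensorLife` type, the `plan_arena` function, and the tensor sizes and lifetimes are all hypothetical. Each tensor is alive from the operation that produces it to the last operation that reads it, and tensors whose lifetimes do not overlap may share the same bytes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TensorLife:
    name: str
    size: int       # bytes
    first_use: int  # index of the op that produces the tensor
    last_use: int   # index of the last op that reads it


def lifetimes_overlap(a, b):
    return a.first_use <= b.last_use and b.first_use <= a.last_use


def plan_arena(tensors):
    """Assign a fixed byte offset to every tensor: largest first, lowest free gap."""
    offsets, placed = {}, []
    for t in sorted(tensors, key=lambda x: -x.size):
        # Byte ranges held by already-placed tensors that are alive alongside t.
        busy = sorted(
            (offsets[p.name], offsets[p.name] + p.size)
            for p in placed if lifetimes_overlap(t, p)
        )
        offset = 0
        for start, end in busy:
            if offset + t.size <= start:
                break  # t fits in the gap before this busy range
            offset = max(offset, end)
        offsets[t.name] = offset
        placed.append(t)
    arena_size = max(offsets[t.name] + t.size for t in tensors)
    return offsets, arena_size


# Hypothetical four-op model: each activation is consumed by the next op.
model = [
    TensorLife("input", 4096, first_use=0, last_use=1),
    TensorLife("conv_out", 8192, first_use=1, last_use=2),
    TensorLife("dw_out", 8192, first_use=2, last_use=3),
    TensorLife("logits", 16, first_use=3, last_use=3),
]
offsets, arena_size = plan_arena(model)
print(offsets, arena_size, sum(t.size for t in model))
```

In this example the planned arena is 16,384 bytes, compared with 20,496 bytes if every tensor had its own buffer, because the input and logits reuse space freed by tensors that are no longer live. The whole layout is known before the program runs, which is what makes allocation-free execution possible.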

These micro-runtimes represent the “Pure AOT” endpoint of the continuum. By sacrificing all dynamic flexibility, they enable machine learning to run on devices consuming milliwatts of power, fulfilling the Energy-Movement Invariant (formalized in Data Engineering) by keeping all data movement local to the chip.

The spectrum of execution strategies, from dynamic eager execution to static graph compilation and specialized micro-runtimes, requires developers to make deliberate trade-offs. The following checkpoint summarizes the key decision points before we address the second core problem.

Checkpoint 1.1: Execution Models

The choice of execution mode determines both developer velocity and model performance.

Debuggability vs. Speed

The Modern Compromise

The execution problem determines when computation happens and what optimizations are possible. Neural network training, however, requires a capability that no amount of clever scheduling can provide: the ability to compute gradients automatically.

Consider what training actually requires: for each of millions of parameters, compute how a tiny change would affect the loss. Doing this manually for even a simple three-layer network requires deriving and implementing dozens of partial derivatives. For a modern transformer with billions of parameters, manual differentiation is economically impossible. A framework that executes efficiently but cannot differentiate can run inference but cannot learn.

Differentiation Problem

The differentiation problem asks: how should frameworks compute gradients20 automatically? Neural network training requires derivatives of a scalar loss \(\mathcal{L}\) with respect to millions or billions of parameters, making manual differentiation impractical. Because a single scalar loss depends on all parameters, reverse-mode automatic differentiation (AD)21 is the optimal strategy: one backward pass computes all parameter gradients simultaneously, while forward mode would require a separate pass for each parameter. All major ML frameworks therefore implement reverse-mode AD by default (Baydin et al. 2018).

20 Automatic Differentiation (AD): The “automatically” in the triggering sentence is the key word: AD mechanizes the chain rule as a graph traversal, eliminating the manual derivative computation that made scaling beyond toy networks impractical. The systems trade-off that makes this feasible is the choice of reverse mode, which exploits the many-to-one topology of training (many parameters, one scalar loss) to compute all gradients in a single backward pass. Forward mode would require one pass per parameter, making billion-parameter training computationally impossible.
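The single-backward-pass property can be seen in a toy scalar implementation. The sketch below is a minimal illustration of reverse-mode AD, not how PyTorch or any other framework implements autograd. The `Var` class and `backward` function are illustrative names, and only addition and multiplication are supported.

```python
class Var:
    """Scalar value that records how it was computed (a minimal tape entry)."""

    def __init__(self, value, parents=()):
        self.value = value
        self.parents = parents  # pairs of (parent Var, local derivative)
        self.grad = 0.0

    def __add__(self, other):
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other):
        return Var(self.value * other.value,
                   ((self, other.value), (other, self.value)))


def backward(loss):
    """One reverse sweep: accumulate d(loss)/d(node) into every node's .grad."""
    order, seen = [], set()

    def visit(node):
        if id(node) not in seen:
            seen.add(id(node))
            for parent, _ in node.parents:
                visit(parent)
            order.append(node)  # parents are appended before their children

    visit(loss)
    loss.grad = 1.0
    for node in reversed(order):  # children before parents
        for parent, local in node.parents:
            parent.grad += node.grad * local  # chain rule


# loss = (w1 * x + b) * w2, with four inputs and one scalar output.
w1, x, b, w2 = Var(2.0), Var(4.0), Var(1.0), Var(3.0)
loss = (w1 * x + b) * w2
backward(loss)
print(loss.value, w1.grad, x.grad, b.grad, w2.grad)
```

A single call to `backward` fills in the gradient for all four inputs. Forward mode would need one pass per input to obtain the same four derivatives, and that gap grows linearly with the number of parameters.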